mirror of

https://github.com/ultralytics/ultralytics

synced 2026-04-21 14:07:18 +00:00

Add NVIDIA DALI GPU preprocessing guide (#24102)

Some checks are pending

CI / Benchmarks (yolo26n, macos-26, 3.12) (push) Waiting to run

CI / Benchmarks (yolo26n, ubuntu-24.04-arm, 3.12) (push) Waiting to run

CI / Benchmarks (yolo26n, ubuntu-latest, 3.12) (push) Waiting to run

CI / Tests (macos-26, 3.12, latest) (push) Waiting to run

CI / Tests (ubuntu-24.04-arm, 3.12, latest) (push) Waiting to run

CI / Tests (ubuntu-latest, 3.12, latest) (push) Waiting to run

CI / Tests (ubuntu-latest, 3.8, 1.8.0, 0.9.0) (push) Waiting to run

CI / Tests (windows-latest, 3.12, latest) (push) Waiting to run

CI / SlowTests (macos-26, 3.12, latest) (push) Waiting to run

CI / SlowTests (ubuntu-24.04-arm, 3.12, latest) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.10, 1.11.0, 0.12.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.10, 1.12.0, 0.13.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.10, 1.13.0, 0.14.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.11, 2.0.0, 0.15.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.11, 2.1.0, 0.16.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.10.0, 0.25.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.11.0, 0.26.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.2.0, 0.17.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.3.0, 0.18.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.4.0, 0.19.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.5.0, 0.20.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.6.0, 0.21.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.7.0, 0.22.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.8.0, 0.23.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.9.0, 0.24.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, latest) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.8, 1.8.0, 0.9.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.9, 1.10.0, 0.11.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.9, 1.9.0, 0.10.0) (push) Waiting to run

CI / SlowTests (windows-latest, 3.12, latest) (push) Waiting to run

CI / GPU (push) Waiting to run

CI / RaspberryPi (push) Waiting to run

CI / NVIDIA_Jetson (JetPack5.1.2, 1.23.5, https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.16.3-cp38-cp38-linux_aarch64.whl, 3.8, jetson-jp512, https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.2.0-cp38-c… (push) Waiting to run

CI / NVIDIA_Jetson (JetPack6.2, 1.26.4, https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.20.0-cp310-cp310-linux_aarch64.whl, 3.10, jetson-jp62, https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.5.0a0+872d… (push) Waiting to run

CI / Conda (ubuntu-latest, 3.12) (push) Waiting to run

CI / Summary (push) Blocked by required conditions

Publish Docs / Docs (push) Waiting to run

Publish to PyPI / check (push) Waiting to run

Publish to PyPI / build (push) Blocked by required conditions

Publish to PyPI / publish (push) Blocked by required conditions

Publish to PyPI / sbom (push) Blocked by required conditions

Publish to PyPI / notify (push) Blocked by required conditions

Some checks are pending

CI / Benchmarks (yolo26n, macos-26, 3.12) (push) Waiting to run

CI / Benchmarks (yolo26n, ubuntu-24.04-arm, 3.12) (push) Waiting to run

CI / Benchmarks (yolo26n, ubuntu-latest, 3.12) (push) Waiting to run

CI / Tests (macos-26, 3.12, latest) (push) Waiting to run

CI / Tests (ubuntu-24.04-arm, 3.12, latest) (push) Waiting to run

CI / Tests (ubuntu-latest, 3.12, latest) (push) Waiting to run

CI / Tests (ubuntu-latest, 3.8, 1.8.0, 0.9.0) (push) Waiting to run

CI / Tests (windows-latest, 3.12, latest) (push) Waiting to run

CI / SlowTests (macos-26, 3.12, latest) (push) Waiting to run

CI / SlowTests (ubuntu-24.04-arm, 3.12, latest) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.10, 1.11.0, 0.12.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.10, 1.12.0, 0.13.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.10, 1.13.0, 0.14.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.11, 2.0.0, 0.15.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.11, 2.1.0, 0.16.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.10.0, 0.25.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.11.0, 0.26.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.2.0, 0.17.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.3.0, 0.18.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.4.0, 0.19.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.5.0, 0.20.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.6.0, 0.21.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.7.0, 0.22.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.8.0, 0.23.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, 2.9.0, 0.24.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.12, latest) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.8, 1.8.0, 0.9.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.9, 1.10.0, 0.11.0) (push) Waiting to run

CI / SlowTests (ubuntu-latest, 3.9, 1.9.0, 0.10.0) (push) Waiting to run

CI / SlowTests (windows-latest, 3.12, latest) (push) Waiting to run

CI / GPU (push) Waiting to run

CI / RaspberryPi (push) Waiting to run

CI / NVIDIA_Jetson (JetPack5.1.2, 1.23.5, https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.16.3-cp38-cp38-linux_aarch64.whl, 3.8, jetson-jp512, https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.2.0-cp38-c… (push) Waiting to run

CI / NVIDIA_Jetson (JetPack6.2, 1.26.4, https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.20.0-cp310-cp310-linux_aarch64.whl, 3.10, jetson-jp62, https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.5.0a0+872d… (push) Waiting to run

CI / Conda (ubuntu-latest, 3.12) (push) Waiting to run

CI / Summary (push) Blocked by required conditions

Publish Docs / Docs (push) Waiting to run

Publish to PyPI / check (push) Waiting to run

Publish to PyPI / build (push) Blocked by required conditions

Publish to PyPI / publish (push) Blocked by required conditions

Publish to PyPI / sbom (push) Blocked by required conditions

Publish to PyPI / notify (push) Blocked by required conditions

Signed-off-by: Glenn Jocher <glenn.jocher@ultralytics.com> Co-authored-by: UltralyticsAssistant <web@ultralytics.com> Co-authored-by: fatih akyon <34196005+fcakyon@users.noreply.github.com> Co-authored-by: Jing Qiu <61612323+Laughing-q@users.noreply.github.com> Co-authored-by: Onuralp SEZER <onuralp@ultralytics.com> Co-authored-by: Glenn Jocher <glenn.jocher@ultralytics.com>

This commit is contained in:

parent

6438ebd4d7

commit

73fcaec788

12 changed files with 649 additions and 64 deletions

|

|

@ -46,6 +46,7 @@ Here's a compilation of in-depth guides to help you master different aspects of

|

||||||

- [Maintaining Your Computer Vision Model](model-monitoring-and-maintenance.md): Understand the key practices for monitoring, maintaining, and documenting computer vision models to guarantee accuracy, spot anomalies, and mitigate data drift.

|

- [Maintaining Your Computer Vision Model](model-monitoring-and-maintenance.md): Understand the key practices for monitoring, maintaining, and documenting computer vision models to guarantee accuracy, spot anomalies, and mitigate data drift.

|

||||||

- [Model Deployment Options](model-deployment-options.md): Overview of YOLO [model deployment](https://www.ultralytics.com/glossary/model-deployment) formats like ONNX, OpenVINO, and TensorRT, with pros and cons for each to inform your deployment strategy.

|

- [Model Deployment Options](model-deployment-options.md): Overview of YOLO [model deployment](https://www.ultralytics.com/glossary/model-deployment) formats like ONNX, OpenVINO, and TensorRT, with pros and cons for each to inform your deployment strategy.

|

||||||

- [Model YAML Configuration Guide](model-yaml-config.md): A comprehensive deep dive into Ultralytics' model architecture definitions. Explore the YAML format, understand the module resolution system, and learn how to integrate custom modules seamlessly.

|

- [Model YAML Configuration Guide](model-yaml-config.md): A comprehensive deep dive into Ultralytics' model architecture definitions. Explore the YAML format, understand the module resolution system, and learn how to integrate custom modules seamlessly.

|

||||||

|

- [NVIDIA DALI GPU Preprocessing](nvidia-dali.md): Eliminate CPU preprocessing bottlenecks by running YOLO letterbox resize, padding, and normalization on the GPU using NVIDIA DALI, with Triton Inference Server integration.

|

||||||

- [NVIDIA DGX Spark](nvidia-dgx-spark.md): Quickstart guide for deploying YOLO models on NVIDIA DGX Spark devices.

|

- [NVIDIA DGX Spark](nvidia-dgx-spark.md): Quickstart guide for deploying YOLO models on NVIDIA DGX Spark devices.

|

||||||

- [NVIDIA Jetson](nvidia-jetson.md): Quickstart guide for deploying YOLO models on NVIDIA Jetson devices.

|

- [NVIDIA Jetson](nvidia-jetson.md): Quickstart guide for deploying YOLO models on NVIDIA Jetson devices.

|

||||||

- [OpenVINO Latency vs Throughput Modes](optimizing-openvino-latency-vs-throughput-modes.md): Learn latency and throughput optimization techniques for peak YOLO inference performance.

|

- [OpenVINO Latency vs Throughput Modes](optimizing-openvino-latency-vs-throughput-modes.md): Learn latency and throughput optimization techniques for peak YOLO inference performance.

|

||||||

|

|

|

||||||

570

docs/en/guides/nvidia-dali.md

Normal file

570

docs/en/guides/nvidia-dali.md

Normal file

|

|

@ -0,0 +1,570 @@

|

||||||

|

---

|

||||||

|

comments: true

|

||||||

|

description: Learn how to use NVIDIA DALI for GPU-accelerated preprocessing with Ultralytics YOLO models. Eliminate CPU bottlenecks by running letterbox resize, padding, and normalization on the GPU for faster TensorRT and Triton deployments.

|

||||||

|

keywords: NVIDIA DALI, GPU preprocessing, Ultralytics, YOLO, YOLO26, TensorRT, Triton Inference Server, letterbox, inference optimization, deep learning, computer vision, deployment, video processing, batch inference, DALI pipeline, CV-CUDA

|

||||||

|

---

|

||||||

|

|

||||||

|

# GPU-Accelerated Preprocessing with NVIDIA DALI

|

||||||

|

|

||||||

|

## Introduction

|

||||||

|

|

||||||

|

When deploying [Ultralytics YOLO](../models/index.md) models in production, [preprocessing](https://www.ultralytics.com/glossary/data-preprocessing) often becomes the bottleneck. While [TensorRT](../integrations/tensorrt.md) can run model [inference](../modes/predict.md) in just a few milliseconds, the CPU-based preprocessing (resize, pad, normalize) can take 2-10ms per image, especially at high resolutions. [NVIDIA DALI](https://docs.nvidia.com/deeplearning/dali/user-guide/docs/index.html) (Data Loading Library) solves this by moving the entire preprocessing pipeline to the GPU.

|

||||||

|

|

||||||

|

This guide walks you through building DALI pipelines that exactly replicate Ultralytics YOLO preprocessing, integrating them with `model.predict()`, processing video streams, and deploying end-to-end with [Triton Inference Server](triton-inference-server.md).

|

||||||

|

|

||||||

|

!!! tip "Who is this guide for?"

|

||||||

|

|

||||||

|

This guide is for engineers deploying YOLO models in production environments where CPU preprocessing is a measured bottleneck — typically [TensorRT](../integrations/tensorrt.md) deployments on NVIDIA GPUs, high-throughput video pipelines, or [Triton Inference Server](triton-inference-server.md) setups. If you're running standard inference with `model.predict()` and don't have a preprocessing bottleneck, the default CPU pipeline works well.

|

||||||

|

|

||||||

|

!!! summary "Quick Summary"

|

||||||

|

|

||||||

|

- **Building a DALI pipeline?** Use `fn.resize(mode="not_larger")` + `fn.crop(out_of_bounds_policy="pad")` + `fn.crop_mirror_normalize` to replicate YOLO's letterbox preprocessing on GPU.

|

||||||

|

- **Integrating with Ultralytics?** Pass the DALI output as a `torch.Tensor` to `model.predict()` — Ultralytics skips image preprocessing automatically.

|

||||||

|

- **Deploying with Triton?** Use the DALI backend with a TensorRT ensemble for zero-CPU preprocessing.

|

||||||

|

|

||||||

|

## Why Use DALI for YOLO Preprocessing

|

||||||

|

|

||||||

|

In a typical YOLO inference pipeline, the preprocessing steps run on the CPU:

|

||||||

|

|

||||||

|

1. **Decode** the image (JPEG/PNG)

|

||||||

|

2. **Resize** while preserving aspect ratio

|

||||||

|

3. **Pad** to the target size (letterbox)

|

||||||

|

4. **Normalize** pixel values from `[0, 255]` to `[0, 1]`

|

||||||

|

5. **Convert** layout from HWC to CHW

|

||||||

|

|

||||||

|

With DALI, all these operations run on the GPU, eliminating the CPU bottleneck. This is especially valuable when:

|

||||||

|

|

||||||

|

| Scenario | Why DALI Helps |

|

||||||

|

| ------------------------------------------------------------------------ | ----------------------------------------------------------------------------------------------------------------------- |

|

||||||

|

| **Fast GPU inference** | [TensorRT](../integrations/tensorrt.md) engines with sub-millisecond inference make CPU preprocessing the dominant cost |

|

||||||

|

| **High-resolution inputs** | 1080p and 4K video streams require expensive resize operations |

|

||||||

|

| **Large [batch sizes](https://www.ultralytics.com/glossary/batch-size)** | Server-side inference processing many images in parallel |

|

||||||

|

| **Limited CPU cores** | Edge devices like [NVIDIA Jetson](nvidia-jetson.md), or dense GPU servers with few CPU cores per GPU |

|

||||||

|

|

||||||

|

## Prerequisites

|

||||||

|

|

||||||

|

!!! warning "Linux Only"

|

||||||

|

|

||||||

|

NVIDIA DALI supports **Linux only**. It is not available on Windows or macOS.

|

||||||

|

|

||||||

|

Install the required packages:

|

||||||

|

|

||||||

|

=== "CUDA 12.x"

|

||||||

|

|

||||||

|

```bash

|

||||||

|

pip install ultralytics

|

||||||

|

pip install --extra-index-url https://pypi.nvidia.com nvidia-dali-cuda120

|

||||||

|

```

|

||||||

|

|

||||||

|

=== "CUDA 11.x"

|

||||||

|

|

||||||

|

```bash

|

||||||

|

pip install ultralytics

|

||||||

|

pip install --extra-index-url https://pypi.nvidia.com nvidia-dali-cuda110

|

||||||

|

```

|

||||||

|

|

||||||

|

**Requirements:**

|

||||||

|

|

||||||

|

- NVIDIA GPU (compute capability 5.0+ / Maxwell or newer)

|

||||||

|

- CUDA 11.0+ or 12.0+

|

||||||

|

- Python 3.10-3.14

|

||||||

|

- Linux operating system

|

||||||

|

|

||||||

|

## Understanding YOLO Preprocessing

|

||||||

|

|

||||||

|

Before building a DALI pipeline, it helps to understand exactly what Ultralytics does during preprocessing. The key class is `LetterBox` in [`ultralytics/data/augment.py`](https://github.com/ultralytics/ultralytics/blob/main/ultralytics/data/augment.py):

|

||||||

|

|

||||||

|

```python

|

||||||

|

from ultralytics.data.augment import LetterBox

|

||||||

|

|

||||||

|

letterbox = LetterBox(

|

||||||

|

new_shape=(640, 640), # Target size

|

||||||

|

center=True, # Center the image (pad equally on both sides)

|

||||||

|

stride=32, # Stride alignment

|

||||||

|

padding_value=114, # Gray padding (114, 114, 114)

|

||||||

|

)

|

||||||

|

```

|

||||||

|

|

||||||

|

The full preprocessing pipeline in [`ultralytics/engine/predictor.py`](https://github.com/ultralytics/ultralytics/blob/main/ultralytics/engine/predictor.py) performs these steps:

|

||||||

|

|

||||||

|

| Step | Operation | CPU Function | DALI Equivalent |

|

||||||

|

| ---- | -------------------------- | ------------------------------- | --------------------------------------------- |

|

||||||

|

| 1 | Letterbox resize | `cv2.resize` | `fn.resize(mode="not_larger")` |

|

||||||

|

| 2 | Centered padding | `cv2.copyMakeBorder` | `fn.crop(out_of_bounds_policy="pad")` |

|

||||||

|

| 3 | BGR → RGB | `im[..., ::-1]` | `fn.decoders.image(output_type=types.RGB)` |

|

||||||

|

| 4 | HWC → CHW + normalize /255 | `np.transpose` + `tensor / 255` | `fn.crop_mirror_normalize(std=[255,255,255])` |

|

||||||

|

|

||||||

|

The letterbox operation preserves the aspect ratio by:

|

||||||

|

|

||||||

|

1. Computing scale: `r = min(target_h / h, target_w / w)`

|

||||||

|

2. Resizing to `(round(w * r), round(h * r))`

|

||||||

|

3. Padding the remaining space with gray (`114`) to reach the target size

|

||||||

|

4. Centering the image so padding is distributed equally on both sides

|

||||||

|

|

||||||

|

## DALI Pipeline for YOLO

|

||||||

|

|

||||||

|

Use the centered pipeline below as the default reference. It matches Ultralytics `LetterBox(center=True)` behavior, which is what standard YOLO inference uses.

|

||||||

|

|

||||||

|

### Centered Pipeline (Recommended, matches Ultralytics LetterBox)

|

||||||

|

|

||||||

|

This version exactly replicates the default Ultralytics preprocessing with centered padding, matching `LetterBox(center=True)`:

|

||||||

|

|

||||||

|

!!! example "DALI pipeline with centered padding (recommended)"

|

||||||

|

|

||||||

|

```python

|

||||||

|

import nvidia.dali as dali

|

||||||

|

import nvidia.dali.fn as fn

|

||||||

|

import nvidia.dali.types as types

|

||||||

|

|

||||||

|

|

||||||

|

@dali.pipeline_def(batch_size=8, num_threads=4, device_id=0)

|

||||||

|

def yolo_dali_pipeline_centered(image_dir, target_size=640):

|

||||||

|

"""DALI pipeline replicating YOLO preprocessing with centered padding.

|

||||||

|

|

||||||

|

Matches Ultralytics LetterBox(center=True) behavior exactly.

|

||||||

|

"""

|

||||||

|

# Read and decode images on GPU

|

||||||

|

jpegs, _ = fn.readers.file(file_root=image_dir, random_shuffle=False, name="Reader")

|

||||||

|

images = fn.decoders.image(jpegs, device="mixed", output_type=types.RGB)

|

||||||

|

|

||||||

|

# Aspect-ratio-preserving resize

|

||||||

|

resized = fn.resize(

|

||||||

|

images,

|

||||||

|

resize_x=target_size,

|

||||||

|

resize_y=target_size,

|

||||||

|

mode="not_larger",

|

||||||

|

interp_type=types.INTERP_LINEAR,

|

||||||

|

antialias=False, # Match cv2.INTER_LINEAR (no antialiasing)

|

||||||

|

)

|

||||||

|

|

||||||

|

# Centered padding using fn.crop with out_of_bounds_policy

|

||||||

|

# When crop size > image size, fn.crop centers the image and pads symmetrically

|

||||||

|

padded = fn.crop(

|

||||||

|

resized,

|

||||||

|

crop=(target_size, target_size),

|

||||||

|

out_of_bounds_policy="pad",

|

||||||

|

fill_values=114, # YOLO padding value

|

||||||

|

)

|

||||||

|

|

||||||

|

# Normalize and convert layout

|

||||||

|

output = fn.crop_mirror_normalize(

|

||||||

|

padded,

|

||||||

|

dtype=types.FLOAT,

|

||||||

|

output_layout="CHW",

|

||||||

|

mean=[0.0, 0.0, 0.0],

|

||||||

|

std=[255.0, 255.0, 255.0],

|

||||||

|

)

|

||||||

|

return output

|

||||||

|

```

|

||||||

|

|

||||||

|

!!! note "When is `fn.pad` enough?"

|

||||||

|

|

||||||

|

If you do not need exact `LetterBox(center=True)` parity, you can simplify the padding step by using `fn.pad(...)` instead of `fn.crop(..., out_of_bounds_policy="pad")`. That variant pads only the **right and bottom** edges, which can be acceptable for custom deployment pipelines, but it will not match Ultralytics' default centered letterbox behavior exactly.

|

||||||

|

|

||||||

|

!!! tip "Why `fn.crop` for centered padding?"

|

||||||

|

|

||||||

|

DALI's `fn.pad` operator only adds padding to the **right and bottom** edges. To get centered padding (matching Ultralytics `LetterBox(center=True)`), use `fn.crop` with `out_of_bounds_policy="pad"`. With the default `crop_pos_x=0.5` and `crop_pos_y=0.5`, the image is automatically centered with symmetric padding.

|

||||||

|

|

||||||

|

!!! warning "Antialias Mismatch"

|

||||||

|

|

||||||

|

DALI's `fn.resize` enables antialiasing by default (`antialias=True`), while OpenCV's `cv2.resize` with `INTER_LINEAR` does **not** apply antialiasing. Always set `antialias=False` in DALI to match the CPU pipeline. Omitting this causes subtle numerical differences that can affect [model accuracy](https://www.ultralytics.com/glossary/accuracy).

|

||||||

|

|

||||||

|

### Running the Pipeline

|

||||||

|

|

||||||

|

!!! example "Build and run a DALI pipeline"

|

||||||

|

|

||||||

|

```python

|

||||||

|

# Build and run the pipeline

|

||||||

|

pipe = yolo_dali_pipeline_centered(image_dir="/path/to/images", target_size=640)

|

||||||

|

pipe.build()

|

||||||

|

|

||||||

|

# Get a batch of preprocessed images

|

||||||

|

(output,) = pipe.run()

|

||||||

|

|

||||||

|

# Convert to numpy or PyTorch tensors

|

||||||

|

batch_np = output.as_cpu().as_array() # Shape: (batch_size, 3, 640, 640)

|

||||||

|

print(f"Output shape: {batch_np.shape}, dtype: {batch_np.dtype}")

|

||||||

|

print(f"Value range: [{batch_np.min():.4f}, {batch_np.max():.4f}]")

|

||||||

|

```

|

||||||

|

|

||||||

|

## Using DALI with Ultralytics Predict

|

||||||

|

|

||||||

|

You can pass a preprocessed [PyTorch](https://www.ultralytics.com/glossary/pytorch) tensor directly to `model.predict()`. When a `torch.Tensor` is passed, Ultralytics **skips image preprocessing** (letterbox, BGR→RGB, HWC→CHW, and /255 normalization) and only performs device transfer and dtype casting before sending it to the model.

|

||||||

|

|

||||||

|

Since Ultralytics doesn't have access to the original image dimensions in this case, detection box coordinates are returned in the 640×640 letterboxed space. To map them back to original image coordinates, use [`scale_boxes`](../reference/utils/ops.md) which handles the exact rounding logic used by `LetterBox`:

|

||||||

|

|

||||||

|

```python

|

||||||

|

from ultralytics.utils.ops import scale_boxes

|

||||||

|

|

||||||

|

# boxes: tensor of shape (N, 4) in xyxy format, in 640x640 letterboxed coords

|

||||||

|

# Scale boxes from letterboxed (640, 640) back to original (orig_h, orig_w)

|

||||||

|

boxes = scale_boxes((640, 640), boxes, (orig_h, orig_w))

|

||||||

|

```

|

||||||

|

|

||||||

|

This applies to all external preprocessing paths — direct tensor input, video streams, and Triton deployment.

|

||||||

|

|

||||||

|

!!! example "DALI + Ultralytics predict"

|

||||||

|

|

||||||

|

```python

|

||||||

|

from nvidia.dali.plugin.pytorch import DALIGenericIterator

|

||||||

|

|

||||||

|

from ultralytics import YOLO

|

||||||

|

|

||||||

|

# Load model

|

||||||

|

model = YOLO("yolo26n.pt")

|

||||||

|

|

||||||

|

# Create DALI iterator

|

||||||

|

pipe = yolo_dali_pipeline_centered(image_dir="/path/to/images", target_size=640)

|

||||||

|

pipe.build()

|

||||||

|

dali_iter = DALIGenericIterator(pipe, ["images"], reader_name="Reader")

|

||||||

|

|

||||||

|

# Run inference with DALI-preprocessed tensors

|

||||||

|

for batch in dali_iter:

|

||||||

|

images = batch[0]["images"] # Already on GPU, shape (B, 3, 640, 640)

|

||||||

|

results = model.predict(images, verbose=False)

|

||||||

|

for result in results:

|

||||||

|

print(f"Detected {len(result.boxes)} objects")

|

||||||

|

```

|

||||||

|

|

||||||

|

!!! tip "Zero Preprocessing Overhead"

|

||||||

|

|

||||||

|

When you pass a `torch.Tensor` to `model.predict()`, the image preprocessing step takes ~0.004ms (essentially zero) compared to ~1-10ms with CPU preprocessing. The tensor must be in BCHW format, float32 (or float16), and normalized to `[0, 1]`. Ultralytics will still handle device transfer and dtype casting automatically.

|

||||||

|

|

||||||

|

## DALI with Video Streams

|

||||||

|

|

||||||

|

For real-time video processing, use `fn.external_source` to feed frames from any source — [OpenCV](https://www.ultralytics.com/glossary/opencv), GStreamer, or custom capture libraries:

|

||||||

|

|

||||||

|

!!! example "DALI pipeline for video stream preprocessing"

|

||||||

|

|

||||||

|

=== "Pipeline Definition"

|

||||||

|

|

||||||

|

```python

|

||||||

|

import nvidia.dali as dali

|

||||||

|

import nvidia.dali.fn as fn

|

||||||

|

import nvidia.dali.types as types

|

||||||

|

|

||||||

|

|

||||||

|

@dali.pipeline_def(batch_size=1, num_threads=4, device_id=0)

|

||||||

|

def yolo_video_pipeline(target_size=640):

|

||||||

|

"""DALI pipeline for processing video frames from external source."""

|

||||||

|

# External source for feeding frames from OpenCV, GStreamer, etc.

|

||||||

|

frames = fn.external_source(device="cpu", name="input")

|

||||||

|

frames = fn.reshape(frames, layout="HWC")

|

||||||

|

|

||||||

|

# Move to GPU and preprocess

|

||||||

|

frames_gpu = frames.gpu()

|

||||||

|

resized = fn.resize(

|

||||||

|

frames_gpu,

|

||||||

|

resize_x=target_size,

|

||||||

|

resize_y=target_size,

|

||||||

|

mode="not_larger",

|

||||||

|

interp_type=types.INTERP_LINEAR,

|

||||||

|

antialias=False,

|

||||||

|

)

|

||||||

|

padded = fn.crop(

|

||||||

|

resized,

|

||||||

|

crop=(target_size, target_size),

|

||||||

|

out_of_bounds_policy="pad",

|

||||||

|

fill_values=114,

|

||||||

|

)

|

||||||

|

output = fn.crop_mirror_normalize(

|

||||||

|

padded,

|

||||||

|

dtype=types.FLOAT,

|

||||||

|

output_layout="CHW",

|

||||||

|

mean=[0.0, 0.0, 0.0],

|

||||||

|

std=[255.0, 255.0, 255.0],

|

||||||

|

)

|

||||||

|

return output

|

||||||

|

```

|

||||||

|

|

||||||

|

=== "Inference Loop (Simple OpenCV fallback)"

|

||||||

|

|

||||||

|

```python

|

||||||

|

import cv2

|

||||||

|

import numpy as np

|

||||||

|

import torch

|

||||||

|

|

||||||

|

from ultralytics import YOLO

|

||||||

|

|

||||||

|

model = YOLO("yolo26n.engine") # TensorRT model

|

||||||

|

|

||||||

|

pipe = yolo_video_pipeline(target_size=640)

|

||||||

|

pipe.build()

|

||||||

|

|

||||||

|

cap = cv2.VideoCapture("video.mp4")

|

||||||

|

while cap.isOpened():

|

||||||

|

ret, frame = cap.read()

|

||||||

|

if not ret:

|

||||||

|

break

|

||||||

|

|

||||||

|

# Feed BGR frame (convert to RGB for DALI)

|

||||||

|

frame_rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)

|

||||||

|

pipe.feed_input("input", [np.array(frame_rgb)])

|

||||||

|

(output,) = pipe.run()

|

||||||

|

|

||||||

|

# Convert DALI output to torch tensor for inference.

|

||||||

|

# This is a simple fallback path: using feed_input() with pipe.run() keeps a GPU->CPU->GPU copy.

|

||||||

|

# For high-throughput deployments, prefer a reader-based pipeline plus DALIGenericIterator to keep data on GPU.

|

||||||

|

tensor = torch.tensor(output.as_cpu().as_array()).to("cuda")

|

||||||

|

results = model.predict(tensor, verbose=False)

|

||||||

|

```

|

||||||

|

|

||||||

|

## Triton Inference Server with DALI

|

||||||

|

|

||||||

|

For production deployment, combine DALI preprocessing with [TensorRT](../integrations/tensorrt.md) inference in [Triton Inference Server](triton-inference-server.md) using an ensemble model. This eliminates CPU preprocessing entirely — raw JPEG bytes go in, detections come out, with everything processed on the GPU.

|

||||||

|

|

||||||

|

### Model Repository Structure

|

||||||

|

|

||||||

|

```

|

||||||

|

model_repository/

|

||||||

|

├── dali_preprocessing/

|

||||||

|

│ ├── 1/

|

||||||

|

│ │ └── model.dali

|

||||||

|

│ └── config.pbtxt

|

||||||

|

├── yolo_trt/

|

||||||

|

│ ├── 1/

|

||||||

|

│ │ └── model.plan

|

||||||

|

│ └── config.pbtxt

|

||||||

|

└── ensemble_dali_yolo/

|

||||||

|

├── 1/ # Empty directory (required by Triton)

|

||||||

|

└── config.pbtxt

|

||||||

|

```

|

||||||

|

|

||||||

|

### Step 1: Create the DALI Pipeline

|

||||||

|

|

||||||

|

Serialize the DALI pipeline for the Triton DALI backend:

|

||||||

|

|

||||||

|

!!! example "Serialize DALI pipeline for Triton"

|

||||||

|

|

||||||

|

```python

|

||||||

|

import nvidia.dali as dali

|

||||||

|

import nvidia.dali.fn as fn

|

||||||

|

import nvidia.dali.types as types

|

||||||

|

|

||||||

|

|

||||||

|

@dali.pipeline_def(batch_size=8, num_threads=4, device_id=0)

|

||||||

|

def triton_dali_pipeline():

|

||||||

|

"""DALI preprocessing pipeline for Triton deployment."""

|

||||||

|

# Input: raw encoded image bytes from Triton

|

||||||

|

images = fn.external_source(device="cpu", name="DALI_INPUT_0")

|

||||||

|

images = fn.decoders.image(images, device="mixed", output_type=types.RGB)

|

||||||

|

|

||||||

|

resized = fn.resize(

|

||||||

|

images,

|

||||||

|

resize_x=640,

|

||||||

|

resize_y=640,

|

||||||

|

mode="not_larger",

|

||||||

|

interp_type=types.INTERP_LINEAR,

|

||||||

|

antialias=False,

|

||||||

|

)

|

||||||

|

padded = fn.crop(

|

||||||

|

resized,

|

||||||

|

crop=(640, 640),

|

||||||

|

out_of_bounds_policy="pad",

|

||||||

|

fill_values=114,

|

||||||

|

)

|

||||||

|

output = fn.crop_mirror_normalize(

|

||||||

|

padded,

|

||||||

|

dtype=types.FLOAT,

|

||||||

|

output_layout="CHW",

|

||||||

|

mean=[0.0, 0.0, 0.0],

|

||||||

|

std=[255.0, 255.0, 255.0],

|

||||||

|

)

|

||||||

|

return output

|

||||||

|

|

||||||

|

|

||||||

|

# Serialize pipeline to model repository

|

||||||

|

pipe = triton_dali_pipeline()

|

||||||

|

pipe.serialize(filename="model_repository/dali_preprocessing/1/model.dali")

|

||||||

|

```

|

||||||

|

|

||||||

|

### Step 2: Export YOLO to TensorRT

|

||||||

|

|

||||||

|

!!! example "Export YOLO model to TensorRT engine"

|

||||||

|

|

||||||

|

```python

|

||||||

|

from ultralytics import YOLO

|

||||||

|

|

||||||

|

model = YOLO("yolo26n.pt")

|

||||||

|

model.export(format="engine", imgsz=640, half=True, batch=8)

|

||||||

|

# Copy the .engine file to model_repository/yolo_trt/1/model.plan

|

||||||

|

```

|

||||||

|

|

||||||

|

### Step 3: Configure Triton

|

||||||

|

|

||||||

|

**dali_preprocessing/config.pbtxt:**

|

||||||

|

|

||||||

|

```protobuf

|

||||||

|

name: "dali_preprocessing"

|

||||||

|

backend: "dali"

|

||||||

|

max_batch_size: 8

|

||||||

|

input [

|

||||||

|

{

|

||||||

|

name: "DALI_INPUT_0"

|

||||||

|

data_type: TYPE_UINT8

|

||||||

|

dims: [ -1 ]

|

||||||

|

}

|

||||||

|

]

|

||||||

|

output [

|

||||||

|

{

|

||||||

|

name: "DALI_OUTPUT_0"

|

||||||

|

data_type: TYPE_FP32

|

||||||

|

dims: [ 3, 640, 640 ]

|

||||||

|

}

|

||||||

|

]

|

||||||

|

```

|

||||||

|

|

||||||

|

**yolo_trt/config.pbtxt:**

|

||||||

|

|

||||||

|

```protobuf

|

||||||

|

name: "yolo_trt"

|

||||||

|

platform: "tensorrt_plan"

|

||||||

|

max_batch_size: 8

|

||||||

|

input [

|

||||||

|

{

|

||||||

|

name: "images"

|

||||||

|

data_type: TYPE_FP32

|

||||||

|

dims: [ 3, 640, 640 ]

|

||||||

|

}

|

||||||

|

]

|

||||||

|

output [

|

||||||

|

{

|

||||||

|

name: "output0"

|

||||||

|

data_type: TYPE_FP32

|

||||||

|

dims: [ 300, 6 ]

|

||||||

|

}

|

||||||

|

]

|

||||||

|

```

|

||||||

|

|

||||||

|

**ensemble_dali_yolo/config.pbtxt:**

|

||||||

|

|

||||||

|

```protobuf

|

||||||

|

name: "ensemble_dali_yolo"

|

||||||

|

platform: "ensemble"

|

||||||

|

max_batch_size: 8

|

||||||

|

input [

|

||||||

|

{

|

||||||

|

name: "INPUT"

|

||||||

|

data_type: TYPE_UINT8

|

||||||

|

dims: [ -1 ]

|

||||||

|

}

|

||||||

|

]

|

||||||

|

output [

|

||||||

|

{

|

||||||

|

name: "OUTPUT"

|

||||||

|

data_type: TYPE_FP32

|

||||||

|

dims: [ 300, 6 ]

|

||||||

|

}

|

||||||

|

]

|

||||||

|

ensemble_scheduling {

|

||||||

|

step [

|

||||||

|

{

|

||||||

|

model_name: "dali_preprocessing"

|

||||||

|

model_version: -1

|

||||||

|

input_map {

|

||||||

|

key: "DALI_INPUT_0"

|

||||||

|

value: "INPUT"

|

||||||

|

}

|

||||||

|

output_map {

|

||||||

|

key: "DALI_OUTPUT_0"

|

||||||

|

value: "preprocessed_image"

|

||||||

|

}

|

||||||

|

},

|

||||||

|

{

|

||||||

|

model_name: "yolo_trt"

|

||||||

|

model_version: -1

|

||||||

|

input_map {

|

||||||

|

key: "images"

|

||||||

|

value: "preprocessed_image"

|

||||||

|

}

|

||||||

|

output_map {

|

||||||

|

key: "output0"

|

||||||

|

value: "OUTPUT"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

]

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

!!! info "How Ensemble Mapping Works"

|

||||||

|

|

||||||

|

The ensemble connects models through **virtual tensor names**. The `output_map` value `"preprocessed_image"` in the DALI step matches the `input_map` value `"preprocessed_image"` in the TensorRT step. These are arbitrary names that link one step's output to the next step's input — they don't need to match any model's internal tensor names.

|

||||||

|

|

||||||

|

### Step 4: Send Inference Requests

|

||||||

|

|

||||||

|

!!! info "Why `tritonclient` instead of `YOLO(\"http://...\")`?"

|

||||||

|

|

||||||

|

Ultralytics has [built-in Triton support](triton-inference-server.md#running-inference) that handles pre/postprocessing automatically. However, it won't work with the DALI ensemble because `YOLO()` sends a preprocessed float32 tensor while the ensemble expects raw JPEG bytes. Use `tritonclient` directly for DALI ensembles, and the [built-in integration](triton-inference-server.md) for standard deployments without DALI.

|

||||||

|

|

||||||

|

!!! example "Send images to Triton ensemble"

|

||||||

|

|

||||||

|

```python

|

||||||

|

import numpy as np

|

||||||

|

import tritonclient.http as httpclient

|

||||||

|

|

||||||

|

client = httpclient.InferenceServerClient(url="localhost:8000")

|

||||||

|

|

||||||

|

# Load image as raw bytes (JPEG/PNG encoded)

|

||||||

|

image_data = np.fromfile("image.jpg", dtype="uint8")

|

||||||

|

image_data = np.expand_dims(image_data, axis=0) # Add batch dimension

|

||||||

|

|

||||||

|

# Create input

|

||||||

|

input_tensor = httpclient.InferInput("INPUT", image_data.shape, "UINT8")

|

||||||

|

input_tensor.set_data_from_numpy(image_data)

|

||||||

|

|

||||||

|

# Run inference through the ensemble

|

||||||

|

result = client.infer(model_name="ensemble_dali_yolo", inputs=[input_tensor])

|

||||||

|

detections = result.as_numpy("OUTPUT") # Shape: (1, 300, 6) -> [x1, y1, x2, y2, conf, class_id]

|

||||||

|

|

||||||

|

# Filter by confidence (no NMS needed — YOLO26 is end-to-end)

|

||||||

|

detections = detections[0] # First image

|

||||||

|

detections = detections[detections[:, 4] > 0.25] # Confidence threshold

|

||||||

|

print(f"Detected {len(detections)} objects")

|

||||||

|

```

|

||||||

|

|

||||||

|

!!! tip "Batching JPEG Images"

|

||||||

|

|

||||||

|

When sending a batch of JPEG images to Triton, pad all encoded byte arrays to the same length (the maximum byte count in the batch). Triton requires homogeneous batch shapes for the input tensor.

|

||||||

|

|

||||||

|

## Supported Tasks

|

||||||

|

|

||||||

|

DALI preprocessing works with all YOLO tasks that use the standard `LetterBox` pipeline:

|

||||||

|

|

||||||

|

| Task | Supported | Notes |

|

||||||

|

| ------------------------------------------- | --------- | -------------------------------------------------------- |

|

||||||

|

| [Detection](../tasks/detect.md) | ✅ | Standard letterbox preprocessing |

|

||||||

|

| [Segmentation](../tasks/segment.md) | ✅ | Same preprocessing as detection |

|

||||||

|

| [Pose Estimation](../tasks/pose.md) | ✅ | Same preprocessing as detection |

|

||||||

|

| [Oriented Detection (OBB)](../tasks/obb.md) | ✅ | Same preprocessing as detection |

|

||||||

|

| [Classification](../tasks/classify.md) | ❌ | Uses torchvision transforms (center crop), not letterbox |

|

||||||

|

|

||||||

|

## Limitations

|

||||||

|

|

||||||

|

- **Linux only**: DALI does not support Windows or macOS

|

||||||

|

- **NVIDIA GPU required**: No CPU-only fallback

|

||||||

|

- **Static pipeline**: Pipeline structure is defined at build time and cannot change dynamically

|

||||||

|

- **`fn.pad` is right/bottom only**: Use `fn.crop` with `out_of_bounds_policy="pad"` for centered padding

|

||||||

|

- **No rect mode**: DALI pipelines produce fixed-size outputs (e.g., 640×640). The `auto=True` rect mode that produces variable-size outputs (e.g., 384×640) is not supported. Note that while [TensorRT](../integrations/tensorrt.md) does support dynamic input shapes, a fixed-size DALI pipeline pairs naturally with a fixed-size engine for maximum throughput

|

||||||

|

- **Memory with multiple instances**: Using `instance_group` with `count` > 1 in Triton can cause high memory usage. Use the default instance group for the DALI model

|

||||||

|

|

||||||

|

## FAQ

|

||||||

|

|

||||||

|

### How does DALI preprocessing compare to CPU preprocessing speed?

|

||||||

|

|

||||||

|

The benefit depends on your pipeline. When GPU inference is already fast with [TensorRT](../integrations/tensorrt.md), CPU preprocessing at 2-10ms can become the dominant cost. DALI eliminates this bottleneck by running preprocessing on the GPU. The biggest gains are seen with high-resolution inputs (1080p, 4K), large [batch sizes](https://www.ultralytics.com/glossary/batch-size), and systems with limited CPU cores per GPU.

|

||||||

|

|

||||||

|

### Can I use DALI with PyTorch models (not just TensorRT)?

|

||||||

|

|

||||||

|

Yes. Use `DALIGenericIterator` to get preprocessed `torch.Tensor` outputs, then pass them to `model.predict()`. However, the performance benefit is greatest with [TensorRT](../integrations/tensorrt.md) models where inference is already very fast and CPU preprocessing becomes the bottleneck.

|

||||||

|

|

||||||

|

### What is the difference between `fn.pad` and `fn.crop` for padding?

|

||||||

|

|

||||||

|

`fn.pad` adds padding only to the **right and bottom** edges. `fn.crop` with `out_of_bounds_policy="pad"` centers the image and adds padding symmetrically on all sides, matching Ultralytics `LetterBox(center=True)` behavior.

|

||||||

|

|

||||||

|

### Does DALI produce pixel-identical results to CPU preprocessing?

|

||||||

|

|

||||||

|

Nearly identical. Set `antialias=False` in `fn.resize` to match OpenCV's `cv2.INTER_LINEAR`. Minor floating-point differences (< 0.001) may occur due to GPU vs CPU arithmetic, but these have no measurable impact on detection [accuracy](https://www.ultralytics.com/glossary/accuracy).

|

||||||

|

|

||||||

|

### What about CV-CUDA as an alternative to DALI?

|

||||||

|

|

||||||

|

[CV-CUDA](https://github.com/CVCUDA/CV-CUDA) is another NVIDIA library for GPU-accelerated vision processing. It provides per-operator control (like [OpenCV](https://www.ultralytics.com/glossary/opencv) but on GPU) rather than DALI's pipeline approach. CV-CUDA's `cvcuda.copymakeborder()` supports explicit per-side padding, making centered letterbox straightforward. Choose DALI for pipeline-based workflows (especially with [Triton](triton-inference-server.md)), and CV-CUDA for fine-grained operator-level control in custom inference code.

|

||||||

|

|

@ -124,7 +124,7 @@ Each event displays:

|

||||||

Some actions support undo directly from the Activity feed:

|

Some actions support undo directly from the Activity feed:

|

||||||

|

|

||||||

- **Settings changes**: Click **Undo** next to a recent settings update event to revert the change

|

- **Settings changes**: Click **Undo** next to a recent settings update event to revert the change

|

||||||

- Undo is available for a short time window after the action

|

- Undo is available for **one hour** after the action; after that, the undo button disappears

|

||||||

|

|

||||||

## Pagination

|

## Pagination

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -235,16 +235,17 @@ After upgrading:

|

||||||

|

|

||||||

### Cancel Pro

|

### Cancel Pro

|

||||||

|

|

||||||

Cancel anytime from the billing portal:

|

Cancel anytime from the Plans tab:

|

||||||

|

|

||||||

1. Go to **Settings > Billing**

|

1. Go to **Settings > Plans**

|

||||||

2. Click **Manage Subscription**

|

2. Click **Cancel Subscription** on the Pro plan card

|

||||||

3. Select **Cancel**

|

3. Confirm in the dialog

|

||||||

4. Confirm cancellation

|

|

||||||

|

If you cancel before the end of your billing period, a **Resume Subscription** button appears — click it to undo the cancellation before the period ends.

|

||||||

|

|

||||||

!!! note "Cancellation Timing"

|

!!! note "Cancellation Timing"

|

||||||

|

|

||||||

Pro features remain active until the end of your billing period. Monthly credits stop at cancellation.

|

Pro features remain active until the end of your current billing period. Monthly credits stop being granted at cancellation.

|

||||||

|

|

||||||

### Downgrading to Free

|

### Downgrading to Free

|

||||||

|

|

||||||

|

|

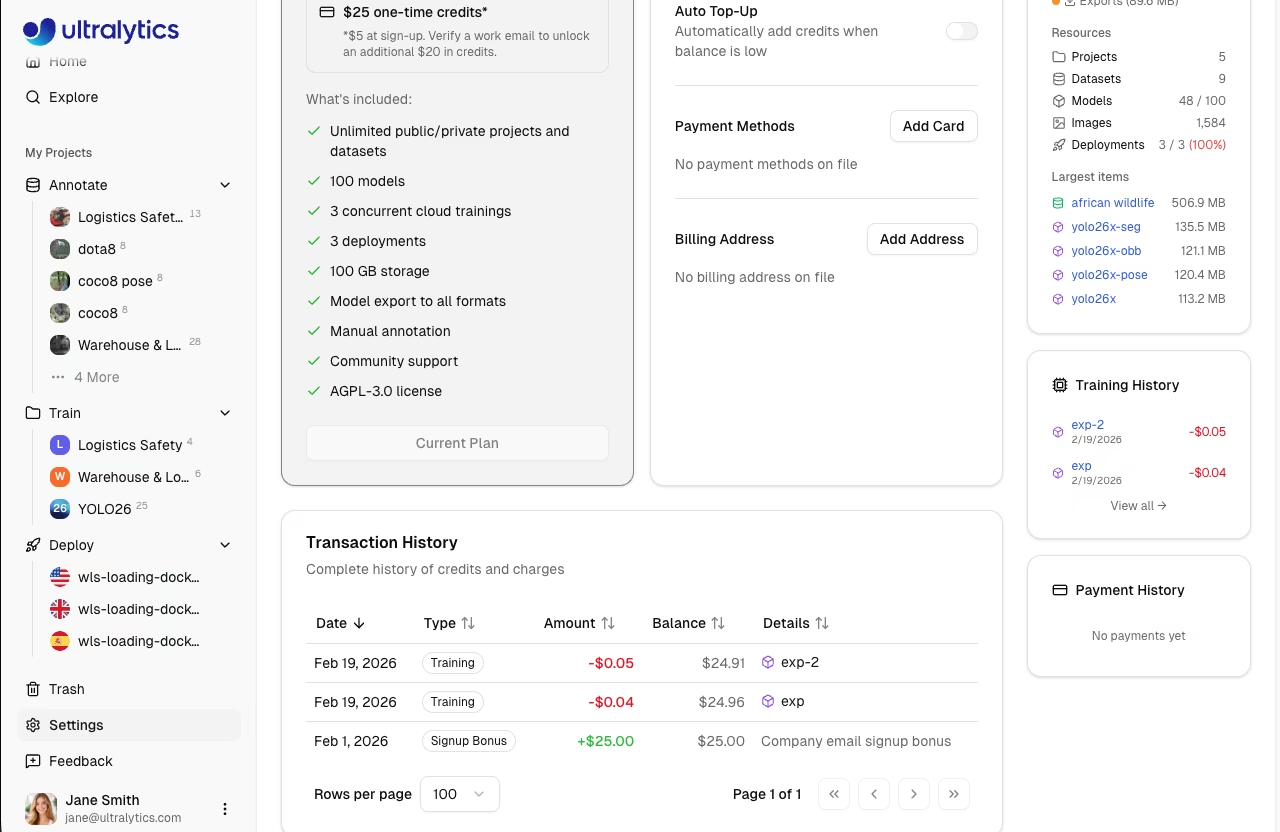

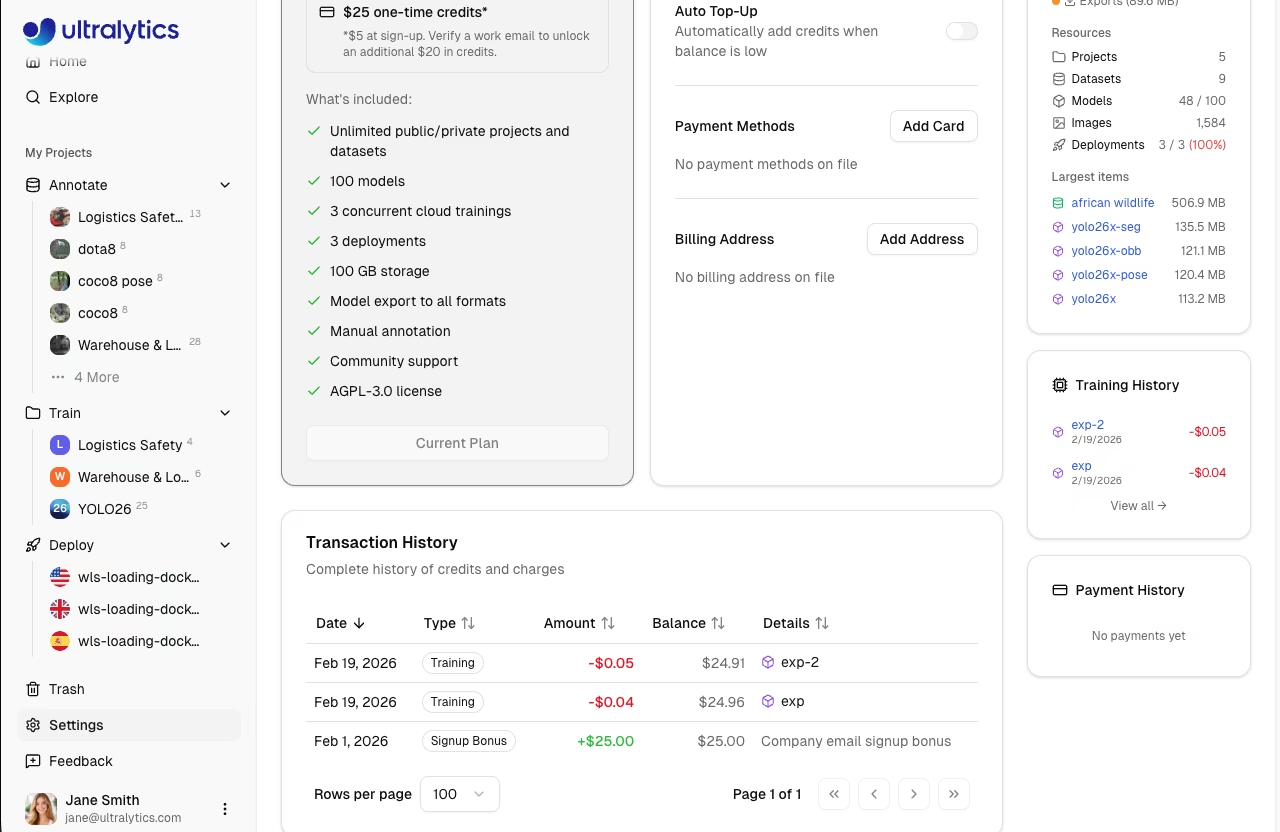

@ -272,13 +273,13 @@ View all transactions in `Settings > Billing`:

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

| Column | Description |

|

| Column | Description |

|

||||||

| ----------- | ----------------------------------------------------------------------------------------------------------- |

|

| ----------- | -------------------------------------------------------------------------------------------------------------------------------------- |

|

||||||

| **Date** | Transaction date |

|

| **Date** | Transaction date |

|

||||||

| **Type** | Signup Bonus, Credit Purchase, Monthly Grant, Training, Refund, Adjustment, Auto Top-Up, Auto Top-Up Failed |

|

| **Type** | Signup, Purchase, Subscription, Monthly Grant, Training, Refund, Adjustment, Promo, Auto Top-Up, Auto Top-Up Failed, Pro Credit Expiry |

|

||||||

| **Amount** | Transaction value (green for credits, red for charges) |

|

| **Amount** | Transaction value (green for credits, red for charges) |

|

||||||

| **Balance** | Resulting balance after transaction |

|

| **Balance** | Resulting balance after transaction |

|

||||||

| **Details** | Additional context (model link, receipt, period) |

|

| **Details** | Additional context (model link, receipt, period) |

|

||||||

|

|

||||||

## FAQ

|

## FAQ

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -180,7 +180,7 @@ Download all your data:

|

||||||

1. Go to **Settings > Profile**

|

1. Go to **Settings > Profile**

|

||||||

2. Scroll to the bottom section

|

2. Scroll to the bottom section

|

||||||

3. Click **Export Data**

|

3. Click **Export Data**

|

||||||

4. Receive download link via email

|

4. An asynchronous export job runs in the background; a **Download** link appears on the same page when the job completes (download link valid for 1 hour)

|

||||||

|

|

||||||

Export includes:

|

Export includes:

|

||||||

|

|

||||||

|

|

@ -188,7 +188,7 @@ Export includes:

|

||||||

- Dataset metadata

|

- Dataset metadata

|

||||||

- Model metadata

|

- Model metadata

|

||||||

- Training history

|

- Training history

|

||||||

- API key metadata (not secrets)

|

- API key metadata (keys themselves are never exported in plaintext)

|

||||||

|

|

||||||

#### Account Deletion

|

#### Account Deletion

|

||||||

|

|

||||||

|

|

@ -280,7 +280,7 @@ The `Teams` tab lets you manage workspace members, roles, and invitations. Teams

|

||||||

|

|

||||||

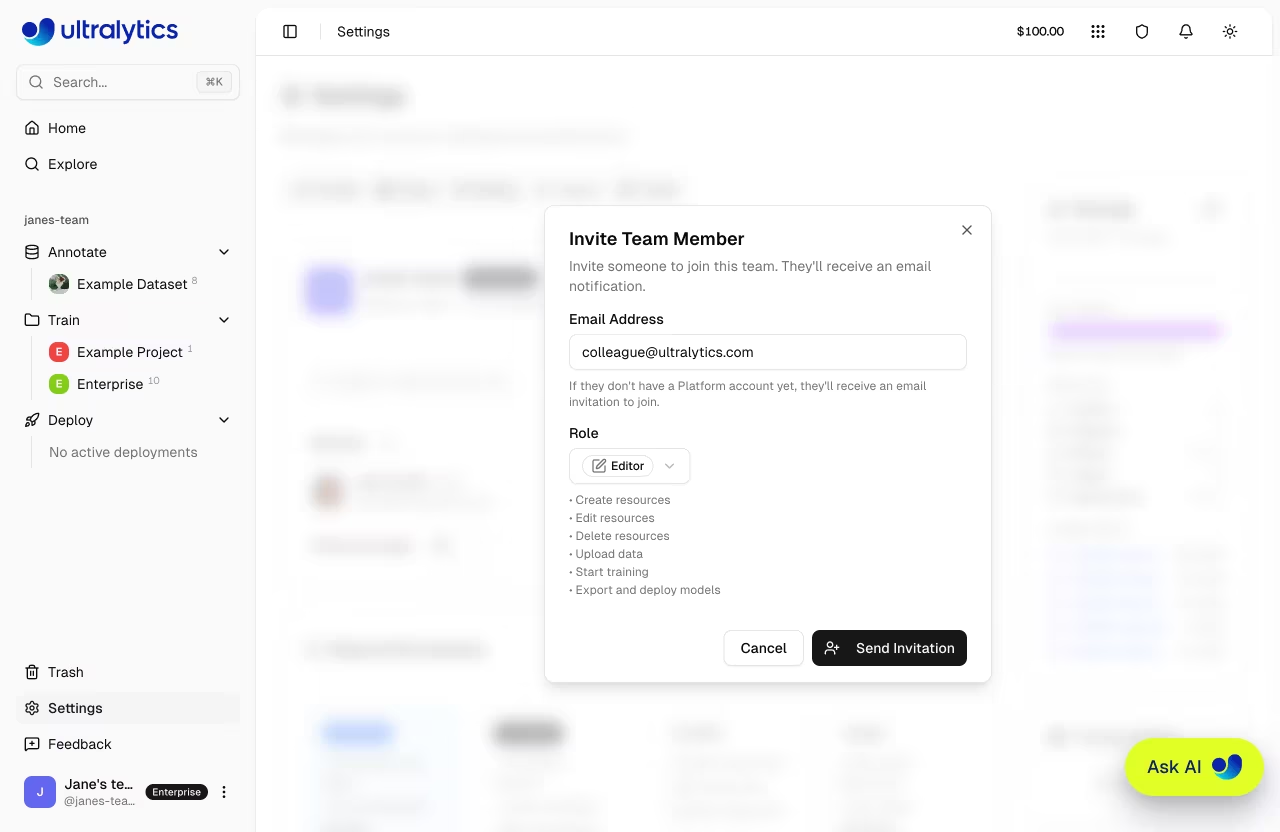

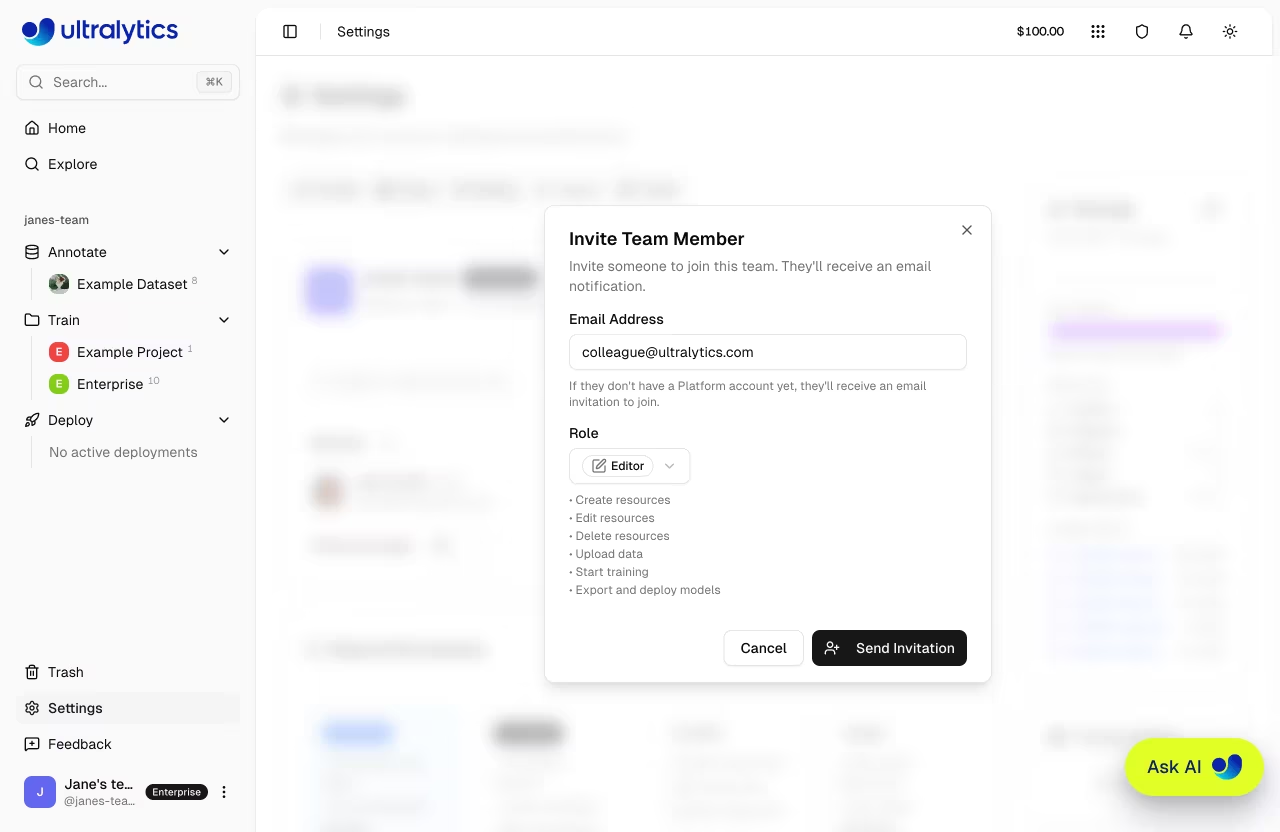

Owners and admins can manage the team:

|

Owners and admins can manage the team:

|

||||||

|

|

||||||

- **Invite members** via email (invitations expire after 7 days)

|

- **Invite members** via email (invites stay valid until accepted or canceled; pending invites count against the seat limit)

|

||||||

- **Change roles**: Click the role dropdown next to a member (only the owner can assign/remove the admin role)

|

- **Change roles**: Click the role dropdown next to a member (only the owner can assign/remove the admin role)

|

||||||

- **Remove members**: Click the menu and select **Remove**

|

- **Remove members**: Click the menu and select **Remove**

|

||||||

- **Cancel invites**: Cancel pending invitations that haven't been accepted

|

- **Cancel invites**: Cancel pending invitations that haven't been accepted

|

||||||

|

|

|

||||||

|

|

@ -87,7 +87,7 @@ Admins and Owners can invite new members to the team:

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

The invitee receives an email invitation with a link to accept and join the team. Invitations expire after 7 days. Once accepted, the team workspace appears in the invitee's workspace switcher. If an invite is missed, resend or cancel it from the Teams tab and send a fresh invite.

|

The invitee receives an email invitation with a link to accept and join the team. Invitations remain valid until accepted or canceled. Once accepted, the team workspace appears in the invitee's workspace switcher. If an invite is lost, **Resend** it from the Teams tab to rotate the token and send a fresh email, or **Cancel** it to free up the seat.

|

||||||

|

|

||||||

!!! note "Admin Invites"

|

!!! note "Admin Invites"

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -115,10 +115,7 @@ https://platform.ultralytics.com/api

|

||||||

|

|

||||||

## Rate Limits

|

## Rate Limits

|

||||||

|

|

||||||

The API uses a two-layer rate limiting system to protect against abuse while keeping legitimate usage unrestricted:

|

The API enforces per-API-key rate limits (sliding-window, Upstash Redis-backed) to protect against abuse while keeping legitimate usage unrestricted. Anonymous traffic is additionally protected by Vercel's platform-level abuse controls.

|

||||||

|

|

||||||

- **Per API key** — Limits enforced per API key on authenticated requests

|

|

||||||

- **Per IP** — 100 requests/min per IP address on all `/api/*` paths (applies to both authenticated and unauthenticated requests)

|

|

||||||

|

|

||||||

When throttled, the API returns `429` with retry metadata:

|

When throttled, the API returns `429` with retry metadata:

|

||||||

|

|

||||||

|

|

@ -321,7 +318,7 @@ Soft-deletes the dataset (moved to [trash](../account/trash.md), recoverable for

|

||||||

POST /api/datasets/{datasetId}/clone

|

POST /api/datasets/{datasetId}/clone

|

||||||

```

|

```

|

||||||

|

|

||||||

Creates a copy of the dataset with all images and labels. Only public datasets can be cloned.

|

Creates a copy of the dataset with all images and labels. Only public datasets can be cloned. Requires an active platform browser session — not available via API key.

|

||||||

|

|

||||||

**Body (all fields optional):**

|

**Body (all fields optional):**

|

||||||

|

|

||||||

|

|

@ -677,6 +674,8 @@ Soft-deletes the project (moved to [trash](../account/trash.md)).

|

||||||

POST /api/projects/{projectId}/clone

|

POST /api/projects/{projectId}/clone

|

||||||

```

|

```

|

||||||

|

|

||||||

|

Clones a public project (with all its models) into your workspace. Requires an active platform browser session — not available via API key.

|

||||||

|

|

||||||

### Project Icon

|

### Project Icon

|

||||||

|

|

||||||

Upload a project icon (multipart form with image file):

|

Upload a project icon (multipart form with image file):

|

||||||

|

|

@ -691,6 +690,8 @@ Remove the project icon:

|

||||||

DELETE /api/projects/{projectId}/icon

|

DELETE /api/projects/{projectId}/icon

|

||||||

```

|

```

|

||||||

|

|

||||||

|

Both require an active platform browser session — not available via API key.

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

## Models API

|

## Models API

|

||||||

|

|

@ -772,7 +773,7 @@ Returns signed download URLs for model files.

|

||||||

POST /api/models/{modelId}/clone

|

POST /api/models/{modelId}/clone

|

||||||

```

|

```

|

||||||

|

|

||||||

Clone a public model to one of your projects.

|

Clone a public model to one of your projects. Requires an active platform browser session — not available via API key.

|

||||||

|

|

||||||

**Body:**

|

**Body:**

|

||||||

|

|

||||||

|

|

@ -867,7 +868,9 @@ POST /api/models/{modelId}/predict

|

||||||

POST /api/models/{modelId}/predict/token

|

POST /api/models/{modelId}/predict/token

|

||||||

```

|

```

|

||||||

|

|

||||||

Get a short-lived token for direct prediction requests. The token bypasses the API proxy for lower-latency inference from client-side applications.

|

!!! note "Browser session only"

|

||||||

|

|

||||||

|

This route is used by the in-app Predict tab to issue short-lived inference tokens for direct browser → predict-service calls (lower latency, no API proxy). It requires an active platform browser session and is not available via API key. For programmatic inference, call [`POST /api/models/{modelId}/predict`](#run-inference) with your API key.

|

||||||

|

|

||||||

### Warmup Model

|

### Warmup Model

|

||||||

|

|

||||||

|

|

@ -875,7 +878,9 @@ Get a short-lived token for direct prediction requests. The token bypasses the A

|

||||||

POST /api/models/{modelId}/predict/warmup

|

POST /api/models/{modelId}/predict/warmup

|

||||||

```

|

```

|

||||||

|

|

||||||

Pre-load a model for faster first inference. Call this before running predictions to avoid delays on the initial request.

|

!!! note "Browser session only"

|

||||||

|

|

||||||

|

The warmup route is used by the Predict tab to pre-load a model's weights on the predict service before the user's first inference. It requires an active platform browser session and is not available via API key.

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

|

|

@ -951,7 +956,7 @@ POST /api/training/start

|

||||||

GET /api/models/{modelId}/training

|

GET /api/models/{modelId}/training

|

||||||

```

|

```

|

||||||

|

|

||||||

Returns the current training job status, metrics, and progress for a model.

|

Returns the current training job status, metrics, and progress for a model. Public projects are accessible anonymously; private projects require an active platform browser session (this route does not accept API-key authentication).

|

||||||

|

|

||||||

### Cancel Training

|

### Cancel Training

|

||||||

|

|

||||||

|

|

@ -959,7 +964,7 @@ Returns the current training job status, metrics, and progress for a model.

|

||||||

DELETE /api/models/{modelId}/training

|

DELETE /api/models/{modelId}/training

|

||||||

```

|

```

|

||||||

|

|

||||||

Terminates the running compute instance and marks the job as cancelled.

|

Terminates the running compute instance and marks the job as cancelled. Requires an active platform browser session — not available via API key.

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

|

|

@ -967,6 +972,10 @@ Terminates the running compute instance and marks the job as cancelled.

|

||||||

|

|

||||||

Deploy models to dedicated inference endpoints with health checks and monitoring. New deployments use scale-to-zero by default, and the API accepts an optional `resources` object. See [Endpoints documentation](../deploy/endpoints.md).

|

Deploy models to dedicated inference endpoints with health checks and monitoring. New deployments use scale-to-zero by default, and the API accepts an optional `resources` object. See [Endpoints documentation](../deploy/endpoints.md).

|

||||||

|

|

||||||

|

!!! info "API-key support by route"

|

||||||

|

|

||||||

|

Only `GET /api/deployments`, `POST /api/deployments`, `GET /api/deployments/{deploymentId}`, and `DELETE /api/deployments/{deploymentId}` support API-key authentication. The `predict`, `health`, `logs`, `metrics`, `start`, and `stop` sub-routes require an active platform browser session — they are convenience proxies for the in-app UI. For programmatic inference, call the deployment's own endpoint URL (e.g., `https://predict-abc123.run.app/predict`) directly with your API key. [Dedicated endpoints](../deploy/endpoints.md#using-endpoints) are not rate-limited.

|

||||||

|

|

||||||

```mermaid

|

```mermaid

|

||||||

graph LR

|

graph LR

|

||||||

A[Create] --> B[Deploying]

|

A[Create] --> B[Deploying]

|

||||||

|

|

@ -1118,6 +1127,10 @@ GET /api/deployments/{deploymentId}/logs

|

||||||

|

|

||||||

## Monitoring API

|

## Monitoring API

|

||||||

|

|

||||||

|

!!! note "Browser session only"

|

||||||

|

|

||||||

|

`GET /api/monitoring` is a UI-only route and requires an active platform browser session. It does not accept API-key authentication. Query individual deployment metrics via the per-deployment routes (which are also browser-session only) or use [Cloud Monitoring exports](https://cloud.google.com/monitoring) on the deployed Cloud Run service for programmatic access.

|

||||||

|

|

||||||

### Aggregated Metrics

|

### Aggregated Metrics

|

||||||

|

|

||||||

```http

|

```http

|

||||||

|

|

@ -1574,6 +1587,10 @@ DELETE /api/billing/payment-methods/{id}

|

||||||

|

|

||||||

Check your storage usage breakdown by category (datasets, models, exports) and see your largest items.

|

Check your storage usage breakdown by category (datasets, models, exports) and see your largest items.

|

||||||

|

|

||||||

|

!!! note "Browser session only"

|

||||||

|

|

||||||

|

Storage routes require an active platform browser session and are not accessible via API key. Use the [Settings > Profile](../account/settings.md#storage-usage) page in the UI for interactive breakdowns.

|

||||||

|

|

||||||

### Get Storage Info

|

### Get Storage Info

|

||||||

|

|

||||||

```http

|

```http

|

||||||

|

|

@ -2169,21 +2186,13 @@ print(f"mAP50-95: {metrics.box.map}")

|

||||||

|

|

||||||

## Webhooks

|

## Webhooks

|

||||||

|

|

||||||

Webhooks notify your server of Platform events via HTTP POST callbacks:

|

The Platform uses internal webhooks to stream real-time training metrics from the `ultralytics` Python SDK (running on cloud GPUs or remote/local machines) back to the Platform — epoch-by-epoch loss, mAP, system stats, and completion status. These webhooks are authenticated via the HMAC `webhookSecret` provisioned per training job and are not intended to be consumed by user applications.

|

||||||

|

|

||||||

| Event | Description |

|

!!! info "Working on your side"

|

||||||

| -------------------- | -------------------- |

|

|

||||||

| `training.started` | Training job started |

|

|

||||||

| `training.epoch` | Epoch completed |

|

|

||||||

| `training.completed` | Training finished |

|

|

||||||

| `training.failed` | Training failed |

|

|

||||||

| `export.completed` | Export ready |

|

|

||||||

|

|

||||||

!!! info "Plan Availability"

|

**All plans**: Training progress via the `ultralytics` SDK (real-time metrics, completion notifications) works automatically on every plan — just set `project=username/my-project name=my-run` when training and the SDK streams events back to the Platform. No user-side webhook registration is required.

|

||||||

|

|

||||||

**All plans**: Training webhooks via the Python SDK (real-time metrics, completion notifications) work automatically on every plan -- no configuration required.

|

**User-facing webhook subscriptions** (POST callbacks to a URL you control) are on the Enterprise roadmap and not currently available. In the meantime, poll `GET /api/models/{modelId}/training` for status or use the [activity feed](#activity-api) in the UI.

|

||||||

|

|

||||||

**Enterprise only**: Custom webhook endpoints that send HTTP POST callbacks to your own server URL require an Enterprise plan. See [Ultralytics Licensing](https://www.ultralytics.com/license) for details.

|

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

|

|

|

||||||

|

|

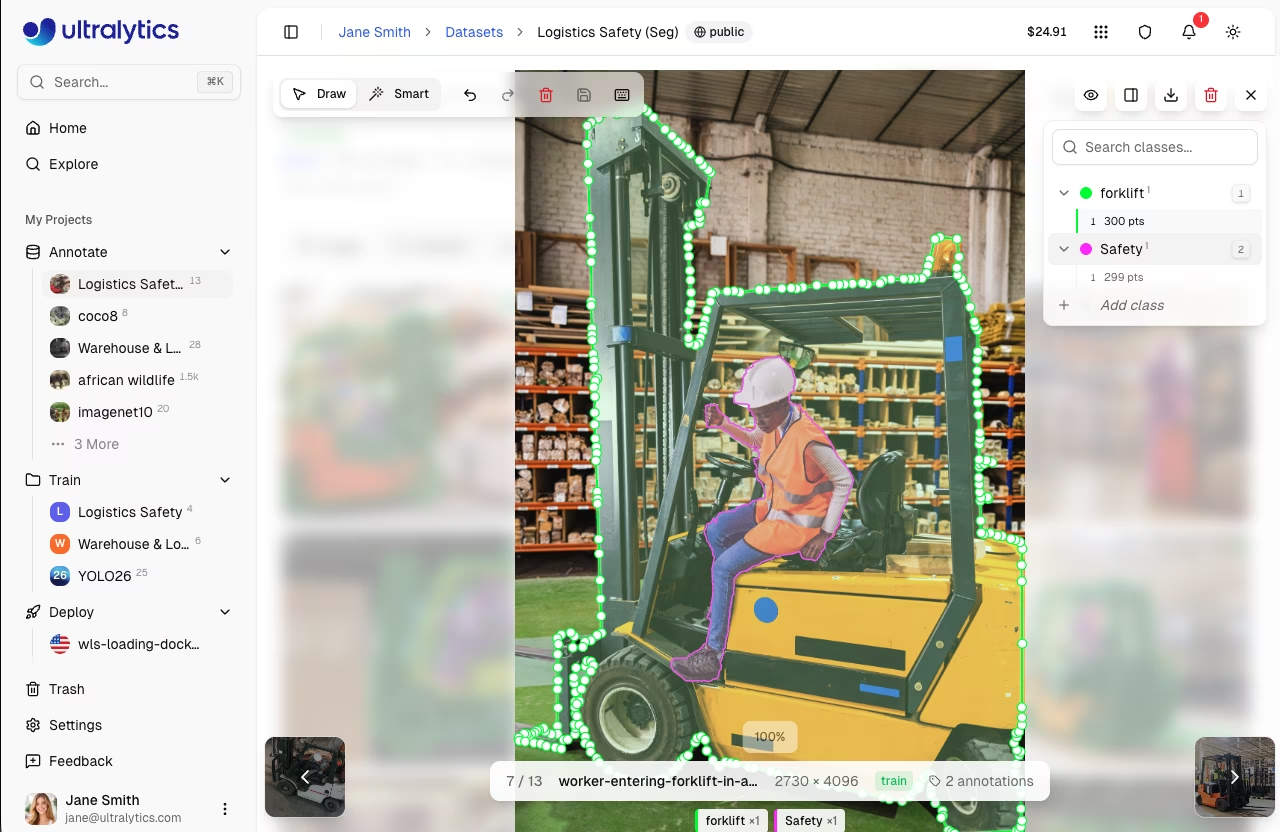

@ -150,8 +150,8 @@ Draw rectangular boxes around objects:

|

||||||

Draw precise polygon masks:

|

Draw precise polygon masks:

|

||||||

|

|

||||||

1. Enter edit mode and select `Draw`

|

1. Enter edit mode and select `Draw`

|

||||||

2. Click to add vertices

|

2. Click to add vertices, or hold `Shift` and move the mouse to freehand-draw dense points

|

||||||

3. Right-click or press `Enter` to close the polygon

|

3. Click the first vertex, or press `Enter` or `Escape` to close the polygon

|

||||||

4. Select a class from the dropdown

|

4. Select a class from the dropdown

|

||||||

|

|

||||||

|

|

||||||

|

|

@ -459,15 +459,17 @@ Efficient annotation with keyboard shortcuts:

|

||||||

|

|

||||||

=== "Drawing"

|

=== "Drawing"

|

||||||

|

|

||||||

| Shortcut | Action |

|

| Shortcut | Action |

|

||||||

| ------------------------------- | ----------------------------------------------------------- |

|

| ----------------------- | -------------------------------------------------------------------------------------- |

|

||||||

| `Click+Drag` | Draw bounding box (detect/OBB) |

|

| `Click+Drag` | Draw bounding box (detect/OBB) |

|

||||||

| `Click` | Add polygon point (segment) / Place skeleton (pose) |

|

| `Click` | Add polygon point (segment) / Place skeleton (pose) / Place SAM point (smart) |

|

||||||

| `Right-click` | Complete polygon / Add SAM negative point |

|

| `Shift (hold) + Move` | Freehand draw — continuously adds polygon vertices as the mouse moves |

|

||||||

| `Shift` + `click`/`right-click` | Place multiple SAM points before applying (auto-apply on) |

|

| `Click inside mask` | Subtract region from SAM mask (negative point) |

|

||||||

| `A` | Toggle auto-apply (Smart mode) |

|

| `Click outside mask` | Add to SAM mask (positive point) |

|

||||||

| `Enter` | Complete polygon / Confirm pose / Save SAM annotation |

|

| `Shift (hold) + Click` | Place multiple SAM points before auto-apply commits (Smart mode, auto-apply on) |

|

||||||

| `Escape` | Cancel pose / Save SAM annotation / Deselect / Exit |

|

| `A` | Toggle auto-apply (Smart mode) |

|

||||||

|

| `Enter` | Complete polygon / Confirm pose / Save SAM annotation |

|

||||||

|

| `Escape` | Cancel pose / Save SAM annotation / Deselect / Exit |

|

||||||

|

|

||||||

=== "Arrange (Z-Order)"

|

=== "Arrange (Z-Order)"

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -74,7 +74,7 @@ graph LR

|

||||||

|

|

||||||

- **Upload** images, videos, and dataset files to create training datasets

|

- **Upload** images, videos, and dataset files to create training datasets

|

||||||

- **Visualize** annotations with interactive overlays for all 5 YOLO task types (see [supported tasks](data/index.md#supported-tasks))

|

- **Visualize** annotations with interactive overlays for all 5 YOLO task types (see [supported tasks](data/index.md#supported-tasks))

|

||||||

- **Train** models on cloud GPUs (20 on all plans, 23 with Pro for H200 and B200) with real-time metrics

|

- **Train** models on cloud GPUs (20 on all plans, 23 with Pro or Enterprise for H200 and B200) with real-time metrics

|

||||||

- **Export** to [17 deployment formats](../modes/export.md) (ONNX, TensorRT, CoreML, TFLite, etc.)

|

- **Export** to [17 deployment formats](../modes/export.md) (ONNX, TensorRT, CoreML, TFLite, etc.)

|

||||||

- **Deploy** to 43 global regions with one-click dedicated endpoints

|

- **Deploy** to 43 global regions with one-click dedicated endpoints

|

||||||

- **Monitor** training progress, deployment health, and usage metrics

|

- **Monitor** training progress, deployment health, and usage metrics

|

||||||

|

|

@ -372,7 +372,7 @@ The Platform includes a full-featured annotation editor supporting:

|

||||||

| Shortcut | Action |