mirror of

https://github.com/ultralytics/ultralytics

synced 2026-04-21 14:07:18 +00:00

Refresh platform docs (#24281)

Co-authored-by: UltralyticsAssistant <web@ultralytics.com>


parent 3681717315

commit 6438ebd4d7

13 changed files with 167 additions and 157 deletions

|

|

@@ -35,27 +35,28 @@ The Account section helps you:

 ## Account Features

-| Feature | Description |
-| ------------ | -------------------------------------------------------- |
-| **Settings** | Profile, social links, emails, data region, and API keys |
-| **Plans** | Free, Pro, and Enterprise plan comparison |
-| **Billing** | Credits, payment methods, and transaction history |
-| **Teams** | Members, roles, invites, and seat management |
-| **Trash** | Recover deleted items within 30 days |
-| **Emails** | Add, remove, verify, and set primary email address |
-| **Activity** | Event log with inbox, archive, search, and undo |
+| Feature | Description |
+| ------------ | ----------------------------------------------------------- |
+| **Settings** | Profile, emails, social links, and data region |
+| **API Keys** | Generate AES-256-GCM encrypted keys for programmatic access |
+| **Plans** | Free, Pro, and Enterprise plan comparison |
+| **Billing** | Credits, payment methods, and transaction history |
+| **Teams** | Members, roles, invites, and seat management |
+| **Trash** | Recover deleted items within 30 days |
+| **Activity** | Event log with inbox, archive, search, and undo |

 ## Settings Tabs

-Account management is organized into tabs within `Settings`:
+Account management is organized into six tabs within `Settings` (in order):

-| Tab | Description |
-| --------- | ---------------------------------------------------------------- |
-| `Profile` | Display name, bio, company, use case, emails, social links, keys |
-| `Plans` | Compare Free, Pro, and Enterprise plans |
-| `Billing` | Credit balance, top-up, payment methods, transactions |
-| `Teams` | Member list, roles, invites, seat allocation |
-| `Trash` | Soft-deleted projects, datasets, and models |
+| Tab | Description |
+| ---------- | ----------------------------------------------------------------------- |
+| `Profile` | Display name, bio, company, use case, emails, social links, data region |
+| `API Keys` | Create and manage API keys for remote training and programmatic access |
+| `Plans` | Compare Free, Pro, and Enterprise plans |
+| `Billing` | Credit balance, top-up, payment methods, transactions |
+| `Teams` | Member list, roles, invites, seat allocation |
+| `Trash` | Soft-deleted projects, datasets, and models (30-day recovery) |

 ## Security


@@ -8,7 +8,7 @@ keywords: Ultralytics Platform, settings, profile, preferences, GDPR, data expor

 [Ultralytics Platform](https://platform.ultralytics.com) settings allow you to configure your profile, social links, workspace preferences, and manage your data with GDPR-compliant export and deletion options.

-Settings is organized into six tabs: `Profile`, `API Keys`, `Plans`, `Billing`, `Teams`, and `Trash`.
+Settings is organized into six tabs (in order): `Profile`, `API Keys`, `Plans`, `Billing`, `Teams`, and `Trash`.

 ## Profile Tab


@ -73,7 +73,7 @@ Team members share the workspace credit balance and resource limits. All members

|

|||

|

||||

!!! note "Pro Plan Seat Billing"

|

||||

|

||||

On the Pro plan, each team member is a paid seat at $29/month (or $290/year). Monthly credits of $30/seat are added to the shared wallet.

|

||||

On the Pro plan, each team member is a paid seat at $29/month (or $290/year, a ~17% saving). Monthly credits of $30/seat are added to the team's shared wallet at the start of every billing cycle.
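The seat pricing in the note above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the figures quoted there ($29/month, $290/year, $30 of credits per seat). The function and field names are illustrative and are not part of any Ultralytics API.

```python
from dataclasses import dataclass

MONTHLY_SEAT_PRICE = 29.0   # USD per seat when billed monthly (from the note)
ANNUAL_SEAT_PRICE = 290.0   # USD per seat when billed yearly (from the note)
MONTHLY_SEAT_CREDIT = 30.0  # USD of credits added per seat each billing cycle


@dataclass
class SeatCosts:
    monthly_total: float      # team cost per month on monthly billing
    annual_total: float       # team cost per year on annual billing
    annual_saving_pct: float  # saving of annual billing vs. 12 monthly payments
    monthly_credits: float    # credits added to the shared wallet per cycle


def pro_seat_costs(seats: int) -> SeatCosts:
    """Compute Pro plan totals for a team with the given number of paid seats."""
    if seats < 1:
        raise ValueError("a team needs at least one seat")
    twelve_months = MONTHLY_SEAT_PRICE * 12 * seats
    annual = ANNUAL_SEAT_PRICE * seats
    saving_pct = (1 - annual / twelve_months) * 100
    return SeatCosts(
        monthly_total=MONTHLY_SEAT_PRICE * seats,
        annual_total=annual,
        annual_saving_pct=saving_pct,
        monthly_credits=MONTHLY_SEAT_CREDIT * seats,
    )


costs = pro_seat_costs(3)
print(f"{costs.monthly_total:.0f} USD/month, {costs.annual_total:.0f} USD/year, "
      f"saving {costs.annual_saving_pct:.1f}%, credits {costs.monthly_credits:.0f} USD/cycle")
```

The saving is independent of the seat count: $290 against 12 × $29 = $348 is a reduction of about 16.7%, which the note rounds to ~17%.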

|

||||

|

||||

## Inviting Members

|

||||

|

||||

|

|

|

|||

|

|

@@ -166,10 +166,9 @@ Access trash programmatically via the [REST API](../api/index.md#trash-api):

 === "Empty Trash"

-```bash
-curl -X DELETE -H "Authorization: Bearer YOUR_API_KEY" \
-https://platform.ultralytics.com/api/trash/empty
-```
+!!! note "Browser session only"
+
+`DELETE /api/trash/empty` requires an authenticated browser session and cannot be called with an API key. Use the **Empty Trash** button in [**Settings > Trash**](../account/settings.md#trash-tab) instead, or permanently delete individual items via `DELETE /api/trash` (API-key compatible).

 ## FAQ


@ -538,17 +538,17 @@ GET /api/datasets/{datasetId}/images

|

|||

|

||||

**Query Parameters:**

|

||||

|

||||

| Parameter | Type | Description |

|

||||

| ------------------- | ------ | ------------------------------------------------------------------------------------------------------------- |

|

||||

| `split` | string | Filter by split: `train`, `val`, `test` |

|

||||

| `offset` | int | Pagination offset (default: 0) |

|

||||

| `limit` | int | Items per page (default: 50, max: 5000) |

|

||||

| `sort` | string | Sort order: `newest`, `oldest`, `name-asc`, `name-desc`, `size-asc`, `size-desc`, `labels-asc`, `labels-desc` |

|

||||

| `hasLabel` | string | Filter by label status (`true` or `false`) |

|

||||

| `hasError` | string | Filter by error status (`true` or `false`) |

|

||||

| `search` | string | Search by filename or image hash |

|

||||

| `includeThumbnails` | string | Include signed thumbnail URLs (default: `true`) |

|

||||

| `includeImageUrls` | string | Include signed full image URLs (default: `false`) |

|

||||

| Parameter | Type | Description |

|

||||

| ------------------- | ------ | -------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

|

||||

| `split` | string | Filter by split: `train`, `val`, `test` |

|

||||

| `offset` | int | Pagination offset (default: 0) |

|

||||

| `limit` | int | Items per page (default: 50, max: 5000) |

|

||||

| `sort` | string | Sort order: `newest`, `oldest`, `name-asc`, `name-desc`, `height-asc`, `height-desc`, `width-asc`, `width-desc`, `size-asc`, `size-desc`, `labels-asc`, `labels-desc` (some disabled for >100k image datasets) |

|

||||

| `hasLabel` | string | Filter by label status (`true` or `false`) |

|

||||

| `hasError` | string | Filter by error status (`true` or `false`) |

|

||||

| `search` | string | Search by filename or image hash |

|

||||

| `includeThumbnails` | string | Include signed thumbnail URLs (default: `true`) |

|

||||

| `includeImageUrls` | string | Include signed full image URLs (default: `false`) |
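The query parameters in the table above can be assembled into a request as follows. This is a sketch, not the official client. It assumes the host `https://platform.ultralytics.com` and `Authorization: Bearer` API-key authentication for this endpoint, and it assumes a minimum `limit` of 1. The helper name `build_images_request` is hypothetical. The request is only constructed, never sent.

```python
from urllib.parse import urlencode
from urllib.request import Request

BASE_URL = "https://platform.ultralytics.com"  # assumed host for the REST API
MAX_LIMIT = 5000                               # documented maximum page size
SPLITS = {"train", "val", "test"}
SORT_KEYS = {
    "newest", "oldest", "name-asc", "name-desc",
    "height-asc", "height-desc", "width-asc", "width-desc",
    "size-asc", "size-desc", "labels-asc", "labels-desc",
}


def build_images_request(dataset_id, api_key, *, split=None, offset=0,
                         limit=50, sort="newest", has_label=None, search=None):
    """Build (but do not send) a GET /api/datasets/{datasetId}/images request."""
    if split is not None and split not in SPLITS:
        raise ValueError(f"split must be one of {sorted(SPLITS)}")
    if offset < 0:
        raise ValueError("offset must be >= 0")
    if not 1 <= limit <= MAX_LIMIT:  # lower bound of 1 is an assumption
        raise ValueError(f"limit must be between 1 and {MAX_LIMIT}")
    if sort not in SORT_KEYS:
        raise ValueError(f"unknown sort key: {sort}")

    params = {"offset": offset, "limit": limit, "sort": sort}
    if split is not None:
        params["split"] = split
    if has_label is not None:
        # The table types hasLabel as a string, so send "true" / "false".
        params["hasLabel"] = "true" if has_label else "false"
    if search:
        params["search"] = search

    url = f"{BASE_URL}/api/datasets/{dataset_id}/images?{urlencode(params)}"
    return Request(url, headers={"Authorization": f"Bearer {api_key}"}, method="GET")


req = build_images_request("abc123", "YOUR_API_KEY", split="val",
                           limit=100, sort="width-desc", has_label=False)
print(req.full_url)
```

Validating `sort` and `limit` on the client gives a clear error before any network call. Whether a given sort key is enabled for a very large dataset is still decided by the server.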

|

||||

|

||||

#### Get Signed Image URLs

|

||||

|

||||

|

|

@@ -881,7 +881,7 @@ Pre-load a model for faster first inference. Call this before running prediction

 ## Training API

-Launch YOLO training on cloud GPUs (RTX 4090, A100, H100) and monitor progress in real time. See [Cloud Training documentation](../train/cloud-training.md).
+Launch YOLO training on cloud GPUs (23 GPU types from RTX 2000 Ada to B200) and monitor progress in real time. See [Cloud Training documentation](../train/cloud-training.md).

 ```mermaid
 graph LR

@ -1795,13 +1795,13 @@ POST /api/members

|

|||

|

||||

!!! info "Member Roles"

|

||||

|

||||

| Role | Permissions |

|

||||

| -------- | ------------------------------------------ |

|

||||

| `viewer` | Read-only access to workspace resources |

|

||||

| `editor` | Create, edit, and delete resources |

|

||||

| `admin` | Full access including member management |

|

||||

| Role | Permissions |

|

||||

| -------- | ------------------------------------------------------------------------------ |

|

||||

| `viewer` | Read-only access to workspace resources |

|

||||

| `editor` | Create, edit, and delete resources |

|

||||

| `admin` | Manage members, billing, and all resources (only assignable by the team owner) |

|

||||

|

||||

See [Teams](../account/teams.md) for role details in the UI.

|

||||

The team `owner` is the creator and cannot be invited. Owner is transferred separately via [`POST /api/members/transfer-ownership`](#transfer-ownership). See [Teams](../account/teams.md) for full role details.
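The role rules above can be expressed as a small pre-flight check before calling `POST /api/members`. This is a sketch of the documented constraints only: `owner` cannot be invited, and `admin` can be assigned only by the team owner. The function name is hypothetical, and the server remains the authority on permissions.

```python
INVITABLE_ROLES = ("viewer", "editor", "admin")  # roles listed in the table above


def check_invite_role(role: str, inviter_is_owner: bool) -> None:
    """Raise if the requested role cannot be used in an invite."""
    if role == "owner":
        # Ownership moves through the separate transfer-ownership endpoint.
        raise ValueError("owner cannot be invited; transfer ownership instead")
    if role not in INVITABLE_ROLES:
        raise ValueError(f"unknown role: {role!r}")
    if role == "admin" and not inviter_is_owner:
        raise PermissionError("only the team owner can assign the admin role")


check_invite_role("editor", inviter_is_owner=False)  # passes silently
check_invite_role("admin", inviter_is_owner=True)    # passes silently
```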

|

||||

|

||||

### Update Member Role

|

||||

|

||||

|

|

|

|||

|

|

@ -121,10 +121,10 @@ graph LR

|

|||

|

||||

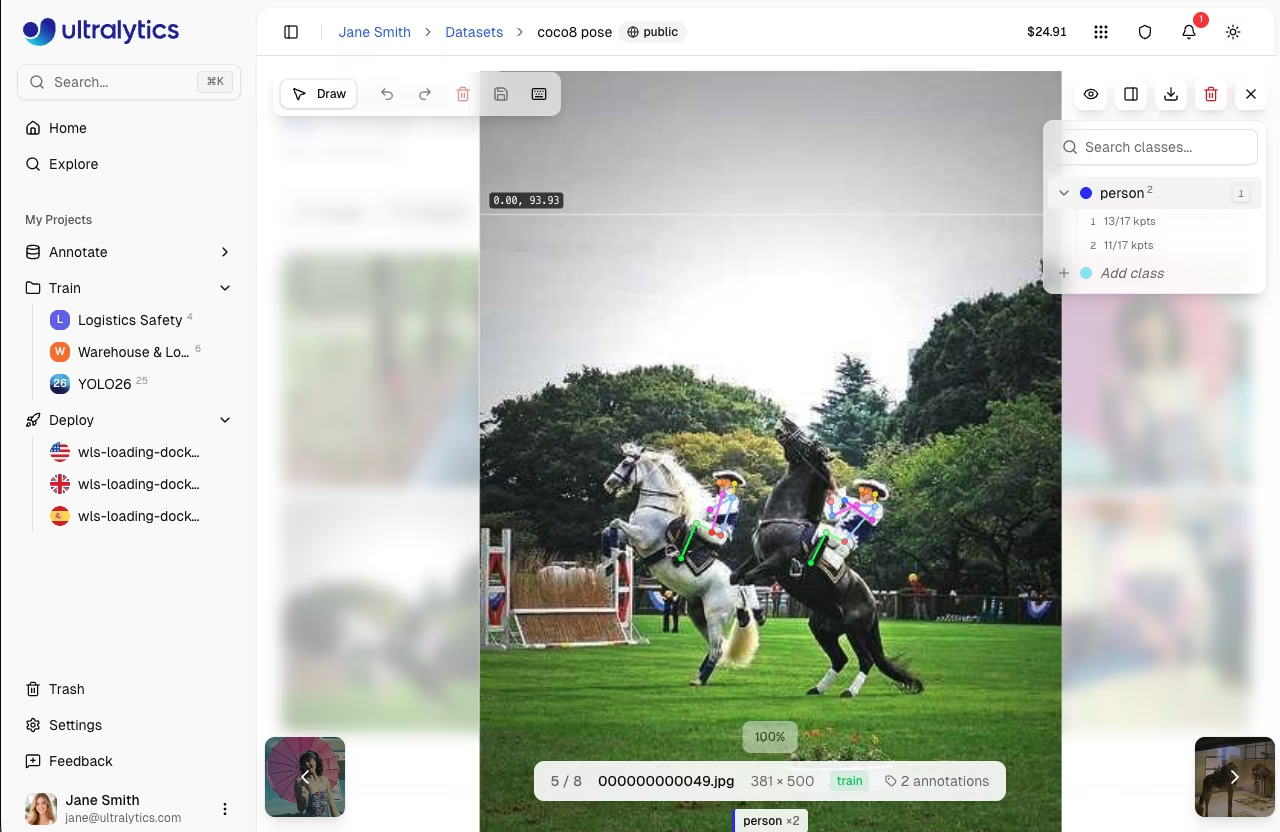

The editor provides two annotation modes, selectable from the toolbar:

|

||||

|

||||

| Mode | Description | Shortcut |

|

||||

| --------- | ------------------------------------------------------- | -------- |

|

||||

| **Draw** | Manual annotation with task-specific tools | `V` |

|

||||

| **Smart** | SAM-powered interactive annotation (detect/segment/OBB) | `S` |

|

||||

| Mode | Description | Shortcut |

|

||||

| ---------- | ----------------------------------------------------------------- | -------- |

|

||||

| **Manual** | Draw annotations with task-specific tools (all 5 task types) | `V` |

|

||||

| **Smart** | SAM or YOLO model-assisted annotation (detect, segment, OBB only) | `S` |

|

||||

|

||||

## Manual Annotation Tools

|

||||

|

||||

|

|

@ -179,13 +179,13 @@ Annotate poses using skeleton templates. Select a template from the toolbar, cli

|

|||

|

||||

The editor includes 5 built-in templates:

|

||||

|

||||

| Template | Keypoints | Description |

|

||||

| ---------- | --------- | ------------------------------------------------------------------------------------------------------------------ |

|

||||

| **Person** | 17 | [COCO human pose](../../datasets/pose/index.md) — nose, eyes, ears, shoulders, elbows, wrists, hips, knees, ankles |

|

||||

| **Hand** | 21 | MediaPipe hand landmarks — wrist, thumb, index, middle, ring, pinky joints |

|

||||

| **Face** | 68 | [iBUG 300W](https://ibug.doc.ic.ac.uk/resources/300-W/) facial landmarks — jaw, eyebrows, nose, eyes, mouth |

|

||||

| **Dog** | 18 | Animal pose — nose, head, neck, shoulders, legs, paws, tail |

|

||||

| **Box** | 4 | Corner keypoints — top-left, top-right, bottom-right, bottom-left |

|

||||

| Template | Keypoints | Description |

|

||||

| ---------- | --------- | ---------------------------------------------------------------------------------------------------------------------- |

|

||||

| **Person** | 17 | [COCO human body pose](../../datasets/pose/coco.md) — nose, eyes, ears, shoulders, elbows, wrists, hips, knees, ankles |

|

||||

| **Hand** | 21 | [Ultralytics Hand Keypoints](../../datasets/pose/hand-keypoints.md) — wrist, thumb, index, middle, ring, pinky joints |

|

||||

| **Face** | 68 | [iBUG 300W](https://ibug.doc.ic.ac.uk/resources/300-W/) facial landmarks — jaw, eyebrows, nose, eyes, mouth |

|

||||

| **Dog** | 18 | AP-10K animal pose — nose, head, neck, shoulders, tailbase, tail, and 4 legs (elbows, knees, paws) |

|

||||

| **Box** | 4 | Corner keypoints — top-left, top-right, bottom-right, bottom-left |

|

||||

|

||||

|

||||

|

||||

|

|

@ -308,7 +308,7 @@ When Smart mode is active, a model picker appears in the toolbar. Five SAM model

|

|||

|

||||

| Model | Size | Speed | Notes |

|

||||

| ----------------- | ------- | -------- | -------------------------- |

|

||||

| **SAM 2.1 Tiny** | 74.5 MB | Fastest | |

|

||||

| **SAM 2.1 Tiny** | 75 MB | Fastest | |

|

||||

| **SAM 2.1 Small** | 88 MB | Fast | |

|

||||

| **SAM 2.1 Base** | 154 MB | Moderate | |

|

||||

| **SAM 2.1 Large** | 428 MB | Slower | Most accurate of SAM 2.1 |

|

||||

|

|

@@ -438,8 +438,7 @@ Efficient annotation with keyboard shortcuts:
 | ----------------------------- | ---------------------------- |
 | `Cmd/Ctrl+S` | Save annotations |
 | `Cmd/Ctrl+Z` | Undo |
-| `Cmd/Ctrl+Shift+Z` | Redo |
-| `Cmd/Ctrl+Y` | Redo (alternative) |
+| `Cmd/Ctrl+Y` | Redo |
 | `Escape` | Save / Deselect / Exit |
 | `Delete` / `Backspace` | Delete selected annotation |
 | `1-9` | Select class 1-9 |

@ -453,10 +452,10 @@ Efficient annotation with keyboard shortcuts:

|

|||

|

||||

=== "Modes"

|

||||

|

||||

| Shortcut | Action |

|

||||

| -------- | ------------------ |

|

||||

| `V` | Draw mode (manual) |

|

||||

| `S` | Smart mode (SAM) |

|

||||

| Shortcut | Action |

|

||||

| -------- | ------------------------------- |

|

||||

| `V` | Manual mode (draw) |

|

||||

| `S` | Smart mode (SAM or YOLO model) |

|

||||

|

||||

=== "Drawing"

|

||||

|

||||

|

|

@@ -490,7 +489,7 @@ Efficient annotation with keyboard shortcuts:
 The annotation editor maintains a full undo/redo history:

 - **Undo**: `Cmd/Ctrl+Z`
-- **Redo**: `Cmd/Ctrl+Shift+Z` or `Cmd/Ctrl+Y`
+- **Redo**: `Cmd/Ctrl+Y`

 History tracks:


@ -549,8 +548,8 @@ The keyboard shortcut `1-9` quickly selects classes.

|

|||

Yes, but for best results:

|

||||

|

||||

- Label all objects of your target classes in each image

|

||||

- Use the label filter set to `Unannotated` to identify unlabeled images

|

||||

- Exclude unannotated images from training configuration

|

||||

- Use the label filter set to `Unlabeled` to identify images that still need annotation

|

||||

- Unlabeled images are excluded from training; only labeled images contribute to the loss

|

||||

|

||||

### Which SAM model should I use?

|

||||

|

||||

|

|

|

|||

|

|

@ -6,7 +6,7 @@ keywords: Ultralytics Platform, datasets, dataset management, dataset versioning

|

|||

|

||||

# Datasets

|

||||

|

||||

[Ultralytics Platform](https://platform.ultralytics.com) datasets provide a streamlined solution for managing your training data. After upload, the platform processes images, labels, and statistics automatically. A dataset is ready to train once processing has completed and it has at least one image in the `train` split, at least one image in either the `val` or `test` split, and at least one labeled image.

|

||||

[Ultralytics Platform](https://platform.ultralytics.com) datasets provide a streamlined solution for managing your training data. After upload, the platform processes images, labels, and statistics automatically. A dataset is ready to train once processing has completed and it has at least one image in the `train` split, at least one image in either the `val` or `test` split, at least one labeled image, and a total of at least two images.
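The readiness rule described above reduces to a conjunction of five conditions. The following sketch encodes them directly. The function and argument names are hypothetical, and the counts are assumed to come from the dataset statistics the platform computes after processing.

```python
def is_ready_to_train(*, processed: bool, total_images: int, train: int,
                      val: int, test: int, labeled: int) -> bool:
    """Return True when a dataset meets every documented readiness condition."""
    return (
        processed                # processing has completed
        and train >= 1           # at least one image in the train split
        and (val + test) >= 1    # at least one image in val or test
        and labeled >= 1         # at least one labeled image
        and total_images >= 2    # at least two images in total
    )


print(is_ready_to_train(processed=True, total_images=2, train=1, val=1, test=0, labeled=1))
print(is_ready_to_train(processed=True, total_images=5, train=5, val=0, test=0, labeled=5))
```

The first call satisfies all five conditions. The second fails because no image is assigned to `val` or `test`.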

|

||||

|

||||

## Upload Dataset

|

||||

|

||||

|

|

@ -234,28 +234,31 @@ Images can be sorted and filtered for efficient browsing:

|

|||

|

||||

=== "Sort Options"

|

||||

|

||||

| Sort | Description |

|

||||

| --------------- | -------------------- |

|

||||

| Newest | Most recently added |

|

||||

| Oldest | Earliest added |

|

||||

| Name A-Z | Alphabetical |

|

||||

| Name Z-A | Reverse alphabetical |

|

||||

| Size (smallest) | Smallest files first |

|

||||

| Size (largest) | Largest files first |

|

||||

| Most labels | Most annotations |

|

||||

| Fewest labels | Fewest annotations |

|

||||

| Sort | Description |

|

||||

| -------------------- | ---------------------------- |

|

||||

| Newest / Oldest | Upload / creation order |

|

||||

| Name A-Z / Z-A | Filename alphabetical |

|

||||

| Height ↑/↓ | Image height in pixels |

|

||||

| Width ↑/↓ | Image width in pixels |

|

||||

| Size ↑/↓ | File size on disk |

|

||||

| Annotations ↑/↓ | Annotation count per image |

|

||||

|

||||

!!! note "Large Datasets"

|

||||

|

||||

For datasets over 100,000 images, name / size / width / height sorts are disabled to keep the gallery responsive. Newest, oldest, and annotation-count sorts remain available.
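The large-dataset rule above can be sketched as a filter over the sort keys. The key names are borrowed from the REST API `sort` parameter (`labels-*` standing for annotation count). Mapping the gallery's sort options onto those keys is an assumption, as is the helper name.

```python
LARGE_DATASET_THRESHOLD = 100_000  # sorts are restricted above this image count

ALL_SORTS = (
    "newest", "oldest",
    "name-asc", "name-desc",
    "height-asc", "height-desc",
    "width-asc", "width-desc",
    "size-asc", "size-desc",
    "labels-asc", "labels-desc",
)

# Sort families disabled for large datasets, per the note above.
DISABLED_PREFIXES = ("name-", "size-", "width-", "height-")


def available_sorts(image_count: int) -> tuple:
    """Return the sort keys that remain usable for a dataset of this size."""
    if image_count <= LARGE_DATASET_THRESHOLD:
        return ALL_SORTS
    return tuple(s for s in ALL_SORTS if not s.startswith(DISABLED_PREFIXES))


print(available_sorts(250_000))
```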

|

||||

|

||||

=== "Filters"

|

||||

|

||||

| Filter | Options |

|

||||

| ---------------- | ---------------------------------- |

|

||||

| **Split filter** | Train, Val, Test, or All |

|

||||

| **Label filter** | All images, Annotated, or Unannotated |

|

||||

| **Search** | Filter images by filename |

|

||||

| Filter | Options |

|

||||

| ---------------- | ----------------------------------- |

|

||||

| **Split filter** | Train, Val, Test, or All |

|

||||

| **Label filter** | All, Labeled, or Unlabeled |

|

||||

| **Class filter** | Filter by class name |

|

||||

| **Search** | Filter images by filename |

|

||||

|

||||

!!! tip "Finding Unlabeled Images"

|

||||

|

||||

Use the label filter set to `Unannotated` to quickly find images that still need annotation. This is especially useful for large datasets where you want to track labeling progress.

|

||||

Use the label filter set to `Unlabeled` to quickly find images that still need annotation. This is especially useful for large datasets where you want to track labeling progress.

|

||||

|

||||

### Fullscreen Viewer

|

||||

|

||||

|

|

|

|||

|

|

@ -344,17 +344,24 @@ Dedicated endpoints are **not subject to the Platform API rate limits**. Request

|

|||

|

||||

### Request Parameters

|

||||

|

||||

| Parameter | Type | Default | Description |

|

||||

| ----------- | ------ | ------- | ------------------------------ |

|

||||

| `file` | file | - | Image or video file (required) |

|

||||

| `conf` | float | 0.25 | Minimum confidence threshold |

|

||||

| `iou` | float | 0.7 | NMS IoU threshold |

|

||||

| `imgsz` | int | 640 | Input image size |

|

||||

| `normalize` | string | - | Return normalized coordinates |

|

||||

| Parameter | Type | Default | Range | Description |

|

||||

| ----------- | ------ | ------- | ---------- | -------------------------------------------------- |

|

||||

| `file` | file | - | - | Image or video file (required) |

|

||||

| `conf` | float | 0.25 | 0.01 – 1.0 | Minimum confidence threshold |

|

||||

| `iou` | float | 0.7 | 0.0 – 0.95 | NMS IoU threshold |

|

||||

| `imgsz` | int | 640 | 32 – 1280 | Input image size in pixels |

|

||||

| `normalize` | bool | false | - | Return bounding box coordinates as 0 – 1 |

|

||||

| `decimals` | int | 5 | 0 – 10 | Decimal precision for coordinate values |

|

||||

| `source` | string | - | - | Image URL or base64 string (alternative to `file`) |
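The defaults and ranges in the table above lend themselves to a client-side check before a request is sent. This is a minimal sketch under that reading of the table. The helper name `resolve_predict_params` is hypothetical, and the endpoint may apply its own validation or clamping.

```python
# Defaults and inclusive ranges copied from the request parameter table.
DEFAULTS = {"conf": 0.25, "iou": 0.7, "imgsz": 640, "normalize": False, "decimals": 5}
RANGES = {
    "conf": (0.01, 1.0),
    "iou": (0.0, 0.95),
    "imgsz": (32, 1280),
    "decimals": (0, 10),
}


def resolve_predict_params(**overrides):
    """Merge overrides into the defaults and reject out-of-range values."""
    unknown = set(overrides) - set(DEFAULTS)
    if unknown:
        raise ValueError(f"unknown parameters: {sorted(unknown)}")
    params = {**DEFAULTS, **overrides}
    for name, (low, high) in RANGES.items():
        if not low <= params[name] <= high:
            raise ValueError(f"{name}={params[name]} is outside {low} to {high}")
    return params


print(resolve_predict_params(conf=0.5, imgsz=1280))
```

`file` and `source` are left out because they carry the input itself rather than a tunable setting.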

|

||||

|

||||

!!! tip "Video Inference"

|

||||

|

||||

Dedicated endpoints accept video files in addition to images. Supported video formats (up to 100MB): ASF, AVI, GIF, M4V, MKV, MOV, MP4, MPEG, MPG, TS, WEBM, WMV. Each frame is processed individually and results are returned per frame. Supported image formats (up to 50MB): AVIF, BMP, DNG, HEIC, JP2, JPEG, JPG, MPO, PNG, TIF, TIFF, WEBP.

|

||||

Dedicated endpoints accept both images and videos via the `file` parameter.

|

||||

|

||||

- **Image formats** (up to 50 MB): AVIF, BMP, DNG, HEIC, JP2, JPEG, JPG, MPO, PNG, TIF, TIFF, WEBP

|

||||

- **Video formats** (up to 100 MB): ASF, AVI, GIF, M4V, MKV, MOV, MP4, MPEG, MPG, TS, WEBM, WMV

|

||||

|

||||

Each video frame is processed individually and results are returned per frame. You can also pass a public image URL or a base64-encoded image via the `source` parameter instead of `file`.
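The format and size limits above can be checked locally before uploading. The sketch below classifies a file by extension and compares its size against the documented cap. It assumes 1 MB = 1024 × 1024 bytes, which the docs do not specify, and the helper name is hypothetical.

```python
from pathlib import Path

MB = 1024 * 1024  # assumption: the docs do not say whether MB means 10^6 or 2^20 bytes

IMAGE_EXTS = {"avif", "bmp", "dng", "heic", "jp2", "jpeg", "jpg", "mpo",
              "png", "tif", "tiff", "webp"}
VIDEO_EXTS = {"asf", "avi", "gif", "m4v", "mkv", "mov", "mp4", "mpeg",
              "mpg", "ts", "webm", "wmv"}
LIMITS = {"image": 50 * MB, "video": 100 * MB}


def check_upload(filename: str, size_bytes: int) -> str:
    """Return "image" or "video" if the file is acceptable, else raise ValueError."""
    ext = Path(filename).suffix.lstrip(".").lower()
    if ext in IMAGE_EXTS:
        kind = "image"
    elif ext in VIDEO_EXTS:
        kind = "video"
    else:
        raise ValueError(f"unsupported format: .{ext}")
    if size_bytes > LIMITS[kind]:
        raise ValueError(f"{kind} exceeds the {LIMITS[kind] // MB} MB limit")
    return kind


print(check_upload("clip.MP4", 10 * MB))
print(check_upload("photo.jpg", 2 * MB))
```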

|

||||

|

||||

### Response Format

|

||||

|

||||

|

|

|

|||

|

|

@ -103,11 +103,11 @@ Adjust detection behavior with parameters in the collapsible **Parameters** sect

|

|||

|

||||

|

||||

|

||||

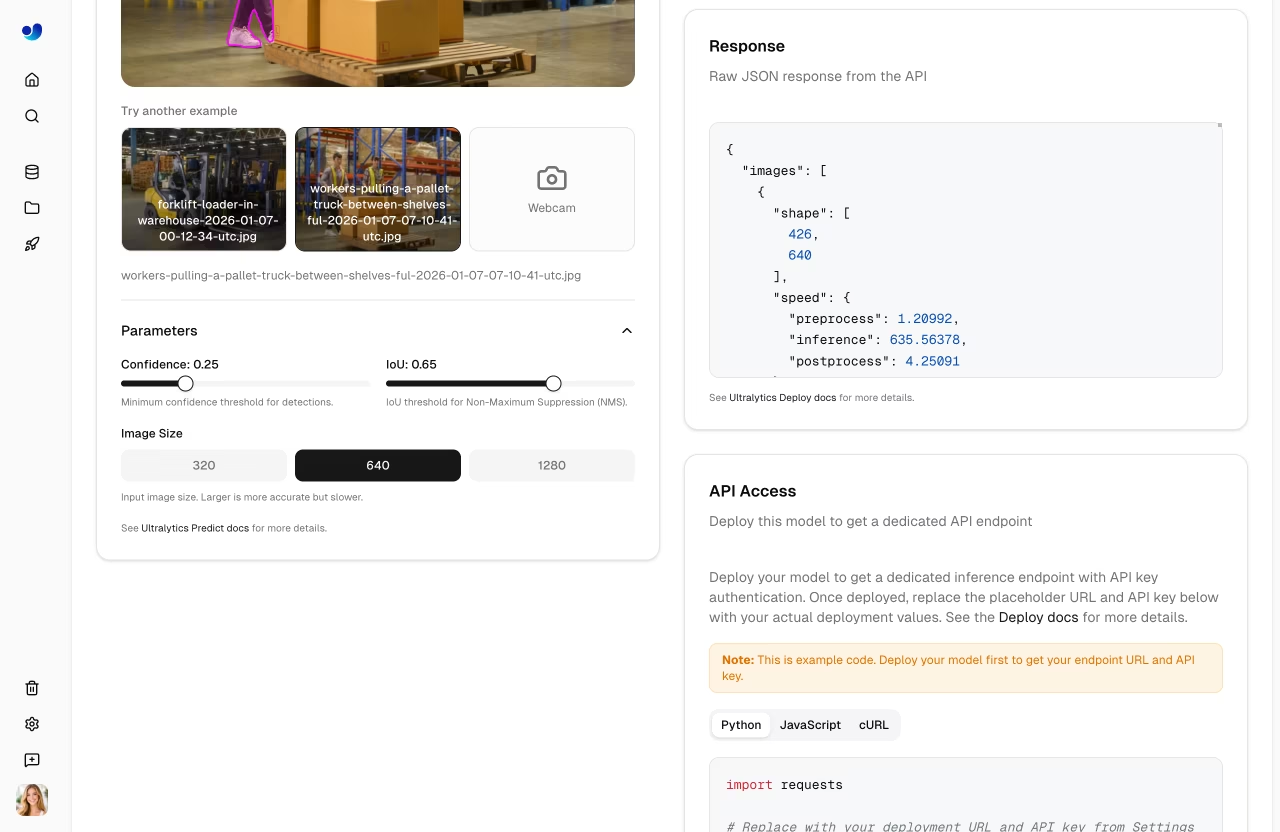

| Parameter | Range | Default | Description |

|

||||

| -------------- | -------------- | ------- | -------------------------------------- |

|

||||

| **Confidence** | 0.01-1.0 | 0.25 | Minimum confidence threshold |

|

||||

| **IoU** | 0.0-0.95 | 0.70 | NMS IoU threshold |

|

||||

| **Image Size** | 320, 640, 1280 | 640 | Input resize dimension (button toggle) |

|

||||

| Parameter | Range | Default | Description |

|

||||

| -------------- | -------------------------- | ------- | -------------------------------------------------------- |

|

||||

| **Confidence** | 0.01 – 1.0 | 0.25 | Minimum confidence threshold |

|

||||

| **IoU** | 0.0 – 0.95 | 0.7 | NMS IoU threshold |

|

||||

| **Image Size** | 320, 640, 1280 (UI toggle) | 640 | Input resize dimension (API accepts any value 32 – 1280) |

|

||||

|

||||

!!! note "Auto-Rerun"

|

||||

|

||||

|

|

@ -127,7 +127,7 @@ Control Non-Maximum Suppression:

|

|||

|

||||

- **Higher (0.7+)**: Allow more overlapping boxes

|

||||

- **Lower (0.3-0.5)**: Merge nearby detections more aggressively

|

||||

- **Default (0.70)**: Balanced NMS behavior for most use cases

|

||||

- **Default (0.7)**: Balanced NMS behavior for most use cases
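To see how the IoU threshold changes the result, here is a generic greedy NMS in plain Python. It illustrates the textbook algorithm and is not necessarily the implementation used by the platform. Boxes are `(x1, y1, x2, y2)` tuples, and the sample data is made up.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.7):
    """Greedy NMS: keep a box unless it overlaps a kept, higher-scoring box too much."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (2, 0, 12, 10), (50, 50, 60, 60)]
scores = [0.9, 0.8, 0.7]

print(nms(boxes, scores, iou_threshold=0.7))  # higher threshold keeps the overlapping pair
print(nms(boxes, scores, iou_threshold=0.5))  # lower threshold suppresses the weaker box
```

The first two boxes overlap with an IoU of 80 / 120 ≈ 0.67. At the default threshold of 0.7 both survive. At 0.5 the lower-scoring one is suppressed.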

|

||||

|

||||

## Deployment Predict

|

||||

|

||||

|

|

@ -359,10 +359,10 @@ Common error responses:

|

|||

|

||||

### Can I run inference on video?

|

||||

|

||||

It depends on the inference method:

|

||||

Both inference methods accept video files:

|

||||

|

||||

- **Dedicated endpoints** accept video files directly. Supported formats (up to 100MB): ASF, AVI, GIF, M4V, MKV, MOV, MP4, MPEG, MPG, TS, WEBM, WMV. Each frame is processed individually and results are returned per frame. See [dedicated endpoints](endpoints.md#request-parameters) for details.

|

||||

- **Shared inference** (`/api/models/{id}/predict`) accepts images only. For video, extract frames locally, send each frame as a separate request, and aggregate results.

|

||||

- **Dedicated endpoints** accept video files directly. Supported formats (up to 100 MB): ASF, AVI, GIF, M4V, MKV, MOV, MP4, MPEG, MPG, TS, WEBM, WMV. Each frame is processed individually and results are returned per frame. See [dedicated endpoints](endpoints.md#request-parameters) for details.

|

||||

- **Shared inference** (`/api/models/{id}/predict`) uses the same predict service and accepts the same video formats. However, the browser **Predict tab** in the UI only uploads images — use the REST API directly or a [dedicated endpoint](endpoints.md) for video workflows. The shared endpoint is also [rate-limited to 20 req/min](../api/index.md#per-api-key-limits), so dedicated endpoints are the better choice for heavy video workloads.

|

||||

|

||||

### How do I get the annotated image?

|

||||

|

||||

|

|

|

|||

|

|

@ -197,12 +197,12 @@ See [Projects](train/projects.md) for organizing models in your project.

|

|||

|

||||

Official `@ultralytics` content is pinned to the top of the Explore page. This includes:

|

||||

|

||||

| Project | Description | Models | Tasks |

|

||||

| --------------------------------- | --------------------------- | ---------------------------- | ------------------------------------ |

|

||||

| **[YOLO26](../models/yolo26.md)** | Latest January 2026 release | 25 models (all sizes, tasks) | detect, segment, pose, OBB, classify |

|

||||

| **[YOLO11](../models/yolo11.md)** | Current stable release | 10+ models | detect, segment, pose, OBB, classify |

|

||||

| **YOLOv8** | Previous generation | Various | detect, segment, pose, classify |

|

||||

| **YOLOv5** | Legacy, widely adopted | Various | detect, segment, classify |

|

||||

| Project | Description | Models | Tasks |

|

||||

| --------------------------------- | --------------------------- | ------------------------------ | ------------------------------------ |

|

||||

| **[YOLO26](../models/yolo26.md)** | Latest January 2026 release | 25 models (5 sizes × 5 tasks) | detect, segment, pose, OBB, classify |

|

||||

| **[YOLO11](../models/yolo11.md)** | Current stable release | 25 models (5 sizes × 5 tasks) | detect, segment, pose, OBB, classify |

|

||||

| **YOLOv8** | Previous generation | 20+ models (5 sizes × 4 tasks) | detect, segment, pose, classify |

|

||||

| **YOLOv5** | Legacy, widely adopted | 15+ models | detect, segment, classify |
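The "5 sizes × 5 tasks" count in the table can be reproduced by enumerating the combinations. The size letters and task suffixes below follow the naming convention used for published Ultralytics weights (for example `yolo11n-seg`). Treat the exact names as an assumption rather than a list taken from the Explore page.

```python
from itertools import product

SIZES = ("n", "s", "m", "l", "x")  # nano, small, medium, large, extra-large
TASK_SUFFIXES = {                  # assumed suffix convention per task
    "detect": "",
    "segment": "-seg",
    "pose": "-pose",
    "obb": "-obb",
    "classify": "-cls",
}


def model_names(family: str, tasks=tuple(TASK_SUFFIXES)) -> list:
    """List one model name per (size, task) combination for a model family."""
    return [f"{family}{size}{TASK_SUFFIXES[task]}" for size, task in product(SIZES, tasks)]


yolo26 = model_names("yolo26")
print(len(yolo26), yolo26[:5])

# YOLOv8 is listed without OBB in the table, giving 5 sizes x 4 tasks.
yolov8 = model_names("yolov8", tasks=("detect", "segment", "pose", "classify"))
print(len(yolov8))
```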

|

||||

|

||||

Official datasets include benchmark datasets like [coco8](../datasets/detect/coco8.md) (8-image COCO subset), [VOC](../datasets/detect/voc.md), [african-wildlife](../datasets/detect/african-wildlife.md), [dota8](../datasets/obb/dota8.md), and other commonly used computer vision datasets.

|

||||

|

||||

|

|

|

|||

|

|

@ -66,15 +66,15 @@ graph LR

|

|||

| ------------ | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

|

||||

| **Upload** | Images (50MB), videos (1GB), and dataset files (ZIP, TAR including `.tar.gz`/`.tgz`, NDJSON) with automatic processing |

|

||||

| **Annotate** | Manual tools for all 5 task types, plus [Smart Annotation](data/annotation.md#smart-annotation) with SAM and YOLO models for detect, segment, and OBB (see [supported tasks](data/index.md#supported-tasks)) |

|

||||

| **Train** | Cloud GPUs (20 free + 3 Pro-exclusive), real-time metrics, project organization |

|

||||

| **Train** | Cloud GPUs (20 on all plans + 3 Pro/Enterprise-only: H200 NVL, H200 SXM, B200), real-time metrics, project organization |

|

||||

| **Export** | [17 deployment formats](../modes/export.md) (ONNX, TensorRT, CoreML, TFLite, etc.; see [supported formats](train/models.md#supported-formats)) |

|

||||

| **Deploy** | 43 global regions with dedicated endpoints, scale-to-zero behavior, and monitoring |

|

||||

| **Deploy** | 43 global regions with dedicated endpoints, scale-to-zero by default (single active instance), and monitoring |

|

||||

|

||||

**What you can do:**

|

||||

|

||||

- **Upload** images, videos, and dataset files to create training datasets

|

||||

- **Visualize** annotations with interactive overlays for all 5 YOLO task types (see [supported tasks](data/index.md#supported-tasks))

|

||||

- **Train** models on cloud GPUs (20 free, 23 with Pro) with real-time metrics

|

||||

- **Train** models on cloud GPUs (20 on all plans, 23 with Pro for H200 and B200) with real-time metrics

|

||||

- **Export** to [17 deployment formats](../modes/export.md) (ONNX, TensorRT, CoreML, TFLite, etc.)

|

||||

- **Deploy** to 43 global regions with one-click dedicated endpoints

|

||||

- **Monitor** training progress, deployment health, and usage metrics

|

||||

|

|

@ -126,7 +126,7 @@ graph LR

|

|||

|

||||

### Model Training

|

||||

|

||||

- **Cloud Training**: Train on cloud GPUs (20 free, 23 with [Pro](account/billing.md#plans)) with real-time metrics

|

||||

- **Cloud Training**: Train on cloud GPUs (20 on all plans, 23 with [Pro or Enterprise](account/billing.md#plans) for H200 and B200) with real-time metrics

|

||||

- **Remote Training**: Train anywhere and stream metrics to the platform (W&B-style)

|

||||

- **Project Organization**: Group related models, compare experiments, track activity

|

||||

- **17 Export Formats**: ONNX, TensorRT, CoreML, TFLite, and more (see [supported formats](train/models.md#supported-formats))

|

||||

|

|

@ -177,7 +177,7 @@ You can train models either through the web UI (cloud training) or from your own

|

|||

### Deployment

|

||||

|

||||

- **Inference Testing**: Test models directly in the browser with custom images

|

||||

- **Dedicated Endpoints**: Deploy to 43 global regions with scale-to-zero behavior

|

||||

- **Dedicated Endpoints**: Deploy to 43 global regions with scale-to-zero by default (single active instance)

|

||||

- **Monitoring**: Real-time metrics, request logs, and performance dashboards

|

||||

|

||||

```mermaid

|

||||

|

|

@ -243,17 +243,17 @@ Once deployed, call your endpoint from any language:

|

|||

|

||||

!!! info "Plan Tiers"

|

||||

|

||||

| Feature | Free | Pro ($29/mo) | Enterprise |

|

||||

| -------------------- | -------------- | ------------------- | -------------- |

|

||||

| Signup Credit | $5 / $25* | - | Custom |

|

||||

| Monthly Credit | - | $30/seat/month | Custom |

|

||||

| Models | 100 | 500 | Unlimited |

|

||||

| Concurrent Trainings | 3 | 10 | Unlimited |

|

||||

| Deployments | 3 | 10 | Unlimited |

|

||||

| Storage | 100 GB | 500 GB | Unlimited |

|

||||

| Cloud GPU Types | 20 | 23 (incl. H200/B200)| 23 |

|

||||

| Teams | - | Up to 5 members | Up to 50 |

|

||||

| Support | Community | Priority | Dedicated |

|

||||

| Feature | Free | Pro ($29/mo) | Enterprise |

|

||||

| -------------------- | -------------- | ----------------------- | -------------- |

|

||||

| Signup Credit | $5 / $25* | - | Custom |

|

||||

| Monthly Credit | - | $30/seat/month | Custom |

|

||||

| Models | 100 | 500 | Unlimited |

|

||||

| Concurrent Trainings | 3 | 10 | Unlimited |

|

||||

| Deployments | 3 | 10 | Unlimited |

|

||||

| Storage | 100 GB | 500 GB | Unlimited |

|

||||

| Cloud GPU Types | 20 | 23 (incl. H200 / B200) | 23 |

|

||||

| Teams | - | Up to 5 members | Up to 50 |

|

||||

| Support | Community | Priority | Dedicated |

|

||||

|

||||

*$5 at signup, or $25 with a verified company/work email.

|

||||

|

||||

|

|

@ -369,16 +369,16 @@ The Platform includes a full-featured annotation editor supporting:

|

|||

- **Smart Annotation**: Use [SAM 2.1](../models/sam-2.md) or [SAM 3](../models/sam-3.md) for click-based annotation, or run pretrained Ultralytics YOLO models and your own fine-tuned YOLO models from the toolbar for detect, segment, and OBB

|

||||

- **Keyboard Shortcuts**: Efficient workflows with hotkeys

|

||||

|

||||

| Shortcut | Action |

|

||||

| --------- | -------------------------- |

|

||||

| `V` | Select mode |

|

||||

| `S` | SAM smart annotation mode |

|

||||

| `A` | Auto-annotate mode |

|

||||

| `1` - `9` | Select class by number |

|

||||

| `Delete` | Delete selected annotation |

|

||||

| `Ctrl+Z` | Undo |

|

||||

| `Ctrl+Y` | Redo |

|

||||

| `Escape` | Cancel current action |

|

||||

| Shortcut | Action |

|

||||

| --------- | --------------------------------- |

|

||||

| `V` | Manual (draw) mode |

|

||||

| `S` | Smart mode (SAM) |

|

||||

| `A` | Toggle auto-apply (in Smart mode) |

|

||||

| `1` - `9` | Select class by number |

|

||||

| `Delete` | Delete selected annotation |

|

||||

| `Ctrl+Z` | Undo |

|

||||

| `Ctrl+Y` | Redo |

|

||||

| `Escape` | Save / deselect / exit |

|

||||

|

||||

See [Annotation](data/annotation.md) for the complete guide.

|

||||

|

||||

|

|

@ -412,12 +412,12 @@ See [Models Export](train/models.md#export-model), the [Export mode guide](../mo

### Dataset Issues

| Problem                | Solution |
| ---------------------- | -------- |
| Dataset won't process  | Check file format is supported (JPEG, PNG, WebP, etc.). Max file size: images 50MB, videos 1GB, datasets 10GB on Free / 20GB on Pro / 50GB on Enterprise |
| Missing annotations    | Verify labels are in [YOLO format](../datasets/detect/index.md#ultralytics-yolo-format) with `.txt` files matching image filenames |
| "Train split required" | Add `train/` folder to your dataset structure, or create splits in [dataset settings](data/datasets.md#filter-by-split) |
| Class names undefined  | Add a `data.yaml` file with `names:` list (see [YOLO format](../datasets/detect/index.md#ultralytics-yolo-format)), or define classes in dataset settings |

| Problem                | Solution |
| ---------------------- | -------- |
| Dataset won't process  | Check file format is supported (JPEG, PNG, WebP, TIFF, HEIC, AVIF, BMP, JP2, DNG, MPO for images). Max file size: images 50 MB, videos 1 GB, dataset archives 10 GB (Free) / 20 GB (Pro) / 50 GB (Enterprise) |
| Missing annotations    | Verify labels are in [YOLO format](../datasets/detect/index.md#ultralytics-yolo-format) with `.txt` files matching image filenames, or upload COCO JSON |
| "Train split required" | Add `train/` folder to your dataset structure, or redistribute splits via the [split bar](data/datasets.md#split-redistribution) |
| Class names undefined  | Add a `data.yaml` file with `names:` list (see [YOLO format](../datasets/detect/index.md#ultralytics-yolo-format)), or define classes in the [Classes tab](data/datasets.md#classes-tab) |
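For the "Missing annotations" row, a quick local check before uploading can list images that have no matching `.txt` label file. The sketch below is illustrative only: the function name, the flat `images/` and `labels/` folder layout, and the suffix list are assumptions, not part of the platform. An image without a label file may also be an intentional background image, so treat the result as a list to review rather than a list of errors.

```python
from pathlib import Path

# Assumed subset of image suffixes for illustration; extend as needed.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".webp", ".bmp", ".tif", ".tiff"}


def find_images_without_labels(images_dir, labels_dir):
    """Return sorted image filenames that have no same-stem .txt label file."""
    images_dir, labels_dir = Path(images_dir), Path(labels_dir)
    # Label files are matched to images by filename stem (image1.jpg <-> image1.txt).
    label_stems = {p.stem for p in labels_dir.glob("*.txt")}
    return sorted(
        p.name
        for p in images_dir.iterdir()
        if p.is_file()
        and p.suffix.lower() in IMAGE_SUFFIXES
        and p.stem not in label_stems
    )
```

Calling `find_images_without_labels("dataset/images/train", "dataset/labels/train")` (hypothetical paths) returns the filenames to inspect.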

### Training Issues


@ -241,13 +241,13 @@ From your project, click `Train Model` to start cloud training.

3. **Set Epochs**: Number of training iterations (default: 100)
4. **Select GPU**: Choose compute resources based on your budget and model size

| Model   | Size        | Speed    | Accuracy | Recommended GPU      |
| ------- | ----------- | -------- | -------- | -------------------- |
| YOLO26n | Nano        | Fastest  | Good     | RTX PRO 6000 (96 GB) |
| YOLO26s | Small       | Fast     | Better   | RTX PRO 6000 (96 GB) |
| YOLO26m | Medium      | Moderate | High     | RTX PRO 6000 (96 GB) |
| YOLO26l | Large       | Slower   | Higher   | A100 (80 GB)         |
| YOLO26x | Extra Large | Slowest  | Best     | H100 (80 GB)         |

| Model   | Size        | Speed    | Accuracy | Recommended GPU                                   |
| ------- | ----------- | -------- | -------- | ------------------------------------------------- |
| YOLO26n | Nano        | Fastest  | Good     | RTX PRO 6000 (96 GB) or RTX 4090 (24 GB)          |
| YOLO26s | Small       | Fast     | Better   | RTX PRO 6000 (96 GB)                              |
| YOLO26m | Medium      | Moderate | High     | RTX PRO 6000 (96 GB)                              |
| YOLO26l | Large       | Slower   | Higher   | RTX PRO 6000 (96 GB) or A100 SXM (80 GB)          |
| YOLO26x | Extra Large | Slowest  | Best     | H100 SXM (80 GB), H200 (141 GB), or B200 (180 GB) |

!!! info "GPU Selection"


@ -62,12 +62,12 @@ Choose a dataset to train on (see [Datasets](../data/datasets.md)):

Set core training parameters:

| Parameter      | Description                                                                 | Default   |
| -------------- | --------------------------------------------------------------------------- | --------- |
| **Epochs**     | Number of training iterations                                               | 100       |
| **Batch Size** | Samples per iteration                                                       | -1 (auto) |
| **Image Size** | Input resolution (320/416/512/640/1280 dropdown, or 32-4096 in YAML editor) | 640       |
| **Run Name**   | Optional name for the training run                                          | auto      |

| Parameter      | Description                                                                                      | Default   |
| -------------- | ------------------------------------------------------------------------------------------------ | --------- |
| **Epochs**     | Number of training iterations                                                                    | 100       |
| **Batch Size** | Samples per iteration                                                                            | -1 (auto) |
| **Image Size** | Input resolution (320/416/512/640/1280 dropdown, any multiple of 32 from 32-4096 in YAML editor) | 640       |
| **Run Name**   | Optional name for the training run                                                               | auto      |
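The updated **Image Size** row restricts YAML-editor values to multiples of 32 between 32 and 4096. As a minimal sketch of that rule (the helper names below are hypothetical and not part of Ultralytics), a value can be checked, or snapped to the nearest allowed size, like this:

```python
def check_imgsz(imgsz, stride=32, lo=32, hi=4096):
    """Return True if imgsz is an int multiple of `stride` within [lo, hi]."""
    return isinstance(imgsz, int) and lo <= imgsz <= hi and imgsz % stride == 0


def nearest_valid_imgsz(imgsz, stride=32, lo=32, hi=4096):
    """Snap imgsz to the closest multiple of `stride`, clamped to [lo, hi]."""
    snapped = round(imgsz / stride) * stride
    return max(lo, min(hi, snapped))
```

For example, `check_imgsz(650)` is false because 650 is not divisible by 32, and `nearest_valid_imgsz(650)` gives 640. Whether the platform itself rounds or rejects such values is not stated in the docs, so this only mirrors the documented constraint.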

### Step 4: Advanced Settings (Optional)


@ -76,7 +76,7 @@ Expand **Advanced Settings** to access the full YAML-based parameter editor with

| Group                  | Parameters                                                                       |
| ---------------------- | -------------------------------------------------------------------------------- |
| **Learning Rate**      | lr0, lrf, momentum, weight_decay, warmup_epochs, warmup_momentum, warmup_bias_lr |
| **Optimizer**          | SGD, MuSGD, Adam, AdamW, NAdam, RAdam, RMSProp, Adamax                           |
| **Optimizer**          | auto (default), SGD, MuSGD, Adam, AdamW, NAdam, RAdam, RMSProp, Adamax           |
| **Loss Weights**       | box, cls, dfl, pose, kobj, label_smoothing                                       |
| **Color Augmentation** | hsv_h, hsv_s, hsv_v                                                              |
| **Geometric Augment.** | degrees, translate, scale, shear, perspective                                    |

@ -109,10 +109,11 @@ Choose your GPU from Ultralytics Cloud:

!!! tip "GPU Selection"

    - **RTX PRO 6000**: 96 GB Blackwell generation, recommended default for most jobs
    - **A100 SXM**: Required for large batch sizes or big models
    - **H100/H200**: Maximum performance for time-sensitive training (H200 requires [Pro or Enterprise](../account/billing.md#plans))
    - **B200**: NVIDIA Blackwell architecture for cutting-edge workloads (requires [Pro or Enterprise](../account/billing.md#plans))

    - **RTX PRO 6000**: 96 GB Blackwell, recommended default for most jobs
    - **A100 SXM**: 80 GB HBM2e — strong choice for large batch sizes or bigger models
    - **H100 PCIe / H100 SXM / H100 NVL**: 80–94 GB Hopper for time-sensitive training (available on all plans)
    - **H200 NVL / H200 SXM**: 141–143 GB Hopper — requires [Pro or Enterprise](../account/billing.md#plans)
    - **B200**: 180 GB NVIDIA Blackwell for cutting-edge workloads — requires [Pro or Enterprise](../account/billing.md#plans)
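The plan requirements in the GPU list can be expressed as a small lookup. This is only a sketch of the rule as documented: the function name and the lowercase plan identifiers are hypothetical, and treating every GPU not explicitly listed as restricted as available on all plans is an assumption.

```python
# GPUs the docs mark as requiring Pro or Enterprise.
PRO_OR_ENTERPRISE_ONLY = {"H200 NVL", "H200 SXM", "B200"}
# Assumed plan identifiers for illustration.
PLANS = {"free", "pro", "enterprise"}


def gpu_available(gpu, plan):
    """Return True if `gpu` can be selected on `plan` under the documented rule."""
    plan = plan.lower()
    if plan not in PLANS:
        raise ValueError(f"unknown plan: {plan!r}")
    if gpu in PRO_OR_ENTERPRISE_ONLY:
        return plan in {"pro", "enterprise"}
    # Assumption: GPUs without a stated plan requirement are open to all plans.
    return True
```

Under this sketch, `gpu_available("B200", "free")` is false while `gpu_available("H100 SXM", "free")` is true, matching the "available on all plans" note for the H100 variants.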

The dialog shows your current **balance** and a **Top Up** button. An estimated cost and duration are calculated based on your configuration (model size, dataset images, epochs, GPU speed).


@ -462,11 +463,11 @@ Yes, the **Train** button on dataset pages opens the training dialog with the da

=== "Core"

    | Parameter | Type | Default | Range   | Description               |
    | --------- | ---- | ------- | ------- | ------------------------- |
    | `epochs`  | int  | 100     | 1-10000 | Number of training epochs |
    | `batch`   | int  | 16      | 1-512   | Batch size                |
    | `imgsz`   | int  | 640     | 32-4096 | Input image size          |

    | Parameter       | Type | Default   | Range        | Description                                    |
    | --------------- | ---- | --------- | ------------ | ---------------------------------------------- |
    | `epochs`        | int  | 100       | 1-10000      | Number of training epochs                      |
    | `batch`         | int  | -1 (auto) | -1 to 512    | Batch size (`-1` = auto-fit to available VRAM) |
    | `imgsz`         | int  | 640       | 32-4096      | Input image size                               |
    | `patience`      | int  | 100       | 1-1000       | Early stopping patience                        |
    | `seed`          | int  | 0         | 0-2147483647 | Random seed for reproducibility                |
    | `deterministic` | bool | True      | -            | Deterministic training mode                    |
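The documented ranges in the Core table can be checked before a configuration is submitted. The following is a hedged sketch, not platform code: `CORE_RANGES` and `out_of_range` are illustrative names, and the bounds are copied from the updated table. It applies the ranges literally, so `batch=0` passes even though only `-1` and positive values are described.

```python
# Inclusive (min, max) bounds copied from the Core parameter table.
CORE_RANGES = {
    "epochs": (1, 10000),
    "batch": (-1, 512),
    "imgsz": (32, 4096),
    "patience": (1, 1000),
    "seed": (0, 2147483647),
}


def out_of_range(params):
    """Return {name: value} for parameters outside their documented range."""
    problems = {}
    for name, value in params.items():
        if name not in CORE_RANGES:
            continue  # parameters without a documented range are not checked
        lo, hi = CORE_RANGES[name]
        if not lo <= value <= hi:
            problems[name] = value
    return problems
```

A call such as `out_of_range({"epochs": 0, "imgsz": 8192, "lr0": 0.01})` flags `epochs` and `imgsz` and ignores `lr0`, which has no range in this table.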
