mirror of

https://github.com/ultralytics/ultralytics

synced 2026-04-21 14:07:18 +00:00

Compare commits

103 commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 972e135c42 | | |
| | 0bc12fcb54 | | |
| | 235ebb36ba | | |
| | 73fcaec788 | | |
| | 6438ebd4d7 | | |
| | 3681717315 | | |
| | 8c824341fa | | |
| | de48cc7bea | | |
| | 3d196f0d95 | | |
| | 419fbd61d6 | | |
| | 901dcb0e47 | | |
| | d86cb96689 | | |
| | b50af592b7 | | |
| | 426bab3588 | | |
| | 4b5c4580cf | | |
| | fdddfc4cc5 | | |
| | f4d0fda2cb | | |
| | 4912cff3b6 | | |
| | 08612af950 | | |
| | 0579ac71fc | | |
| | d93fb45e03 | | |
| | 3108aa614d | | |
| | dd7f30e51e | | |
| | ca24fdd77d | | |
| | 518d31c1ee | | |
| | 5dc005dfaa | | |
| | 1bd9712f6c | | |
| | f647b25f8b | | |
| | c4315b27fe | | |
| | a38a7705ee | | |
| | 4730551b1f | | |
| | da03154f86 | | |
| | 826cd7f357 | | |
| | 6b5c2a86f7 | | |
| | 6aa70cd983 | | |
| | 6eddf35907 | | |
| | 1bf46217d6 | | |
| | 117accf360 | | |
| | 49deea630a | | |
| | 093579437c | | |
| | ec681f4caa | | |
| | 10beb3fa60 | | |
| | 616c032b8b | | |
| | c7e1d6044a | | |
| | f06a9bbadd | | |
| | fec04ba66c | | |
| | 6ccdcf372d | | |
| | bed8778ae4 | | |
| | 9a0962d97e | | |
| | 564f3bbc09 | | |
| | 1515240d9c | | |
| | add03bb2b4 | | |
| | 2283438618 | | |
| | cf95678dc8 | | |
| | 99bee2f6be | | |
| | b1821192eb | | |
| | e5eee3e6a2 | | |
| | 3f6584ad35 | | |
| | 7eaea3c621 | | |
| | a481399723 | | |
| | fa3dd8d96e | | |
| | 0e65fd1fa2 | | |
| | 8588a650a2 | | |
| | 20722a3cf5 | | |
| | 817aca355e | | |
| | 0bd8a0970f | | |
| | be17897752 | | |
| | 6edac4a85a | | |
| | fde498ad30 | | |
| | fa1db1f467 | | |
| | 75acff57c2 | | |
| | b7e4aa6a0f | | |
| | 515d01f001 | | |
| | 81a2398714 | | |
| | b8fa0f0cbd | | |
| | bfec123fa6 | | |
| | 3d7bb126c6 | | |
| | 36560f2a38 | | |
| | 584b57df03 | | |
| | 954fefa6e1 | | |
| | 8d4e6e841c | | |
| | edc79913a3 | | |
| | 650fca87a3 | | |
| | 4fc2e42886 | | |
| | 337dcdc641 | | |
| | 12d8ba403b | | |
| | 5b400439c4 | | |
| | 18f339399a | | |
| | 84d3a8868f | | |
| | 63bcbc1f1e | | |
| | 94d9bf55cf | | |
| | 65aeccfa95 | | |
| | ea5789d670 | | |
| | 661561b64e | | |
| | fd45a33af7 | | |
| | a88f4391c3 | | |
| | 18b4666b06 | | |
| | bd42a0b800 | | |
| | 865ccb2eb1 | | |
| | 14e951e71d | | |
| | 2a3e08ac4a | | |
| | 418b03e367 | | |
| | 166da9fa75 | | |

178 changed files with 3881 additions and 1798 deletions

24

.github/workflows/ci.yml

vendored

|

|

@ -51,7 +51,7 @@ jobs:

|

|||

fail-fast: false

|

||||

matrix:

|

||||

# Temporarily disable windows-latest due to https://github.com/ultralytics/ultralytics/actions/runs/13020330819/job/36319338854?pr=18921

|

||||

os: [ubuntu-latest, macos-26, ubuntu-24.04-arm]

|

||||

os: [cpu-latest, macos-26, ubuntu-24.04-arm]

|

||||

python: ["3.12"]

|

||||

model: [yolo26n]

|

||||

steps:

|

||||

|

|

@ -74,22 +74,22 @@ jobs:

|

|||

uv pip list

|

||||

- name: Benchmark DetectionModel

|

||||

shell: bash

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}.pt' imgsz=160 verbose=0.218

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}.pt' imgsz=160 verbose=0.216

|

||||

- name: Benchmark ClassificationModel

|

||||

shell: bash

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-cls.pt' imgsz=160 verbose=0.249

|

||||

- name: Benchmark YOLOWorld DetectionModel

|

||||

shell: bash

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/yolov8s-worldv2.pt' imgsz=160 verbose=0.337

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/yolov8s-worldv2.pt' imgsz=160 verbose=0.335

|

||||

- name: Benchmark SegmentationModel

|

||||

shell: bash

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-seg.pt' imgsz=160 verbose=0.230

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-seg.pt' imgsz=160 verbose=0.229

|

||||

- name: Benchmark PoseModel

|

||||

shell: bash

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-pose.pt' imgsz=160 verbose=0.194

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-pose.pt' imgsz=160 verbose=0.185

|

||||

- name: Benchmark OBBModel

|

||||

shell: bash

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-obb.pt' imgsz=160 verbose=0.372

|

||||

run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-obb.pt' imgsz=160 verbose=0.371

|

||||

- name: Merge Coverage Reports

|

||||

run: |

|

||||

coverage xml -o coverage-benchmarks.xml

|

||||

|

|

@ -345,17 +345,17 @@ jobs:

|

|||

yolo checks

|

||||

uv pip list

|

||||

- name: Benchmark DetectionModel

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n.pt' imgsz=160 verbose=0.218

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n.pt' imgsz=160 verbose=0.216

|

||||

- name: Benchmark ClassificationModel

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n-cls.pt' imgsz=160 verbose=0.249

|

||||

- name: Benchmark YOLOWorld DetectionModel

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolov8s-worldv2.pt' imgsz=160 verbose=0.337

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolov8s-worldv2.pt' imgsz=160 verbose=0.335

|

||||

- name: Benchmark SegmentationModel

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n-seg.pt' imgsz=160 verbose=0.230

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n-seg.pt' imgsz=160 verbose=0.229

|

||||

- name: Benchmark PoseModel

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n-pose.pt' imgsz=160 verbose=0.194

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n-pose.pt' imgsz=160 verbose=0.185

|

||||

- name: Benchmark OBBModel

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n-obb.pt' imgsz=160 verbose=0.372

|

||||

run: python -m ultralytics.cfg.__init__ benchmark model='yolo26n-obb.pt' imgsz=160 verbose=0.371

|

||||

- name: Benchmark Summary

|

||||

run: |

|

||||

cat benchmarks.log

|

||||

|

|

@ -435,7 +435,7 @@ jobs:

|

|||

channel-priority: true

|

||||

activate-environment: anaconda-client-env

|

||||

- name: Install Ultralytics package from conda-forge

|

||||

run: conda install -c pytorch -c conda-forge pytorch-cpu torchvision ultralytics "openvino!=2026.0.0"

|

||||

run: conda install -c pytorch -c conda-forge pytorch-cpu torchvision ultralytics "openvino<2026"

|

||||

- name: Install pip packages

|

||||

run: uv pip install pytest

|

||||

- name: Check environment

|

||||

|

|

|

|||

42

.github/workflows/docker.yml

vendored

|

|

@ -118,24 +118,42 @@ jobs:

|

|||

uses: docker/setup-buildx-action@v4

|

||||

|

||||

- name: Login to Docker Hub

|

||||

uses: docker/login-action@v4

|

||||

uses: ultralytics/actions/retry@main

|

||||

env:

|

||||

DOCKERHUB_USERNAME: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

DOCKERHUB_TOKEN: ${{ secrets.DOCKERHUB_TOKEN }}

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_TOKEN }}

|

||||

run: |

|

||||

if ! out=$(printf '%s' "$DOCKERHUB_TOKEN" | docker login -u "$DOCKERHUB_USERNAME" --password-stdin 2>&1); then

|

||||

printf '%s\n' "$out" >&2

|

||||

exit 1

|

||||

fi

|

||||

echo "Logged in to docker.io"

|

||||

|

||||

- name: Login to GHCR

|

||||

uses: docker/login-action@v4

|

||||

uses: ultralytics/actions/retry@main

|

||||

env:

|

||||

GHCR_USERNAME: ${{ github.repository_owner }}

|

||||

GHCR_TOKEN: ${{ secrets._GITHUB_TOKEN }}

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets._GITHUB_TOKEN }}

|

||||

run: |

|

||||

if ! out=$(printf '%s' "$GHCR_TOKEN" | docker login ghcr.io -u "$GHCR_USERNAME" --password-stdin 2>&1); then

|

||||

printf '%s\n' "$out" >&2

|

||||

exit 1

|

||||

fi

|

||||

echo "Logged in to ghcr.io"

|

||||

|

||||

- name: Login to NVIDIA NGC

|

||||

uses: docker/login-action@v4

|

||||

uses: ultralytics/actions/retry@main

|

||||

env:

|

||||

NVIDIA_NGC_API_KEY: ${{ secrets.NVIDIA_NGC_API_KEY }}

|

||||

with:

|

||||

registry: nvcr.io

|

||||

username: $oauthtoken

|

||||

password: ${{ secrets.NVIDIA_NGC_API_KEY }}

|

||||

run: |

|

||||

if ! out=$(printf '%s' "$NVIDIA_NGC_API_KEY" | docker login nvcr.io -u '$oauthtoken' --password-stdin 2>&1); then

|

||||

printf '%s\n' "$out" >&2

|

||||

exit 1

|

||||

fi

|

||||

echo "Logged in to nvcr.io"

|

||||

|

||||

- name: Retrieve Ultralytics version

|

||||

id: get_version

|

||||

|

|
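The three rewritten login steps in the hunk above share one pattern: pipe the secret to the command on stdin, capture stdout and stderr together, and print the captured output only when the command fails. The Python sketch below illustrates that pattern outside of a workflow. The helper name `run_quiet` is hypothetical, and the sketch makes no claim about how `ultralytics/actions/retry` runs its `run:` script.

```python
import subprocess
import sys


def run_quiet(cmd, stdin_text=""):
    """Run cmd with stdin_text on stdin; show its output only if it fails.

    Hypothetical helper mirroring the shell idiom
    `if ! out=$(printf '%s' "$TOKEN" | cmd ... 2>&1); then ...; fi`.
    """
    result = subprocess.run(
        cmd,
        input=stdin_text,            # secret goes through stdin, not argv
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,    # merge stderr into stdout, like 2>&1
        text=True,
    )
    if result.returncode != 0:
        print(result.stdout, file=sys.stderr)  # surface details on failure only
        return False
    return True


if __name__ == "__main__":
    # Stand-in for `docker login`: a child process that just consumes stdin.
    ok = run_quiet([sys.executable, "-c", "import sys; sys.stdin.read()"], "token")
    print("Logged in" if ok else "Login failed")
```

Passing the token on stdin keeps it out of the process argument list, which is the same reason the workflow uses `--password-stdin`.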

@ -227,7 +245,7 @@ jobs:

|

|||

|

||||

- name: Run Benchmarks

|

||||

if: (github.event_name == 'push' || github.event.inputs[matrix.dockerfile] == 'true') && (matrix.platforms == 'linux/amd64' || matrix.dockerfile == 'Dockerfile-arm64') && matrix.dockerfile != 'Dockerfile' && matrix.dockerfile != 'Dockerfile-conda'

|

||||

run: docker run ultralytics/ultralytics:${{ (matrix.tags == 'latest-python' && 'latest-python-export') || (matrix.tags == 'latest' && 'latest-export') || matrix.tags }} yolo benchmark model=yolo26n.pt imgsz=160 verbose=0.218

|

||||

run: docker run ultralytics/ultralytics:${{ (matrix.tags == 'latest-python' && 'latest-python-export') || (matrix.tags == 'latest' && 'latest-export') || matrix.tags }} yolo benchmark model=yolo26n.pt imgsz=160 verbose=0.216

|

||||

|

||||

- name: Push All Images

|

||||

if: github.event_name == 'push' || (github.event.inputs[matrix.dockerfile] == 'true' && github.event.inputs.push == 'true')

|

||||

|

|
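The image tag in the `Run Benchmarks` step above is chosen with a chained `(cond && value) || ...` expression, which behaves like a lookup with a default because both override values are non-empty strings. As a rough illustration, the same mapping can be written in Python. The function name `benchmark_tag` is hypothetical, and the example tag `latest-arm64` is only an illustrative input.

```python
def benchmark_tag(tag: str) -> str:
    """Map a build tag to the image tag used for benchmarking.

    Mirrors the workflow expression: 'latest-python' and 'latest' are swapped
    for their '-export' variants, every other tag is used unchanged.
    """
    overrides = {
        "latest-python": "latest-python-export",
        "latest": "latest-export",
    }
    return overrides.get(tag, tag)


if __name__ == "__main__":
    for tag in ("latest", "latest-python", "latest-arm64"):
        print(tag, "->", benchmark_tag(tag))
```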

|

|||

2

.github/workflows/links.yml

vendored

|

|

@ -35,6 +35,7 @@ jobs:

|

|||

timeout_minutes: 60

|

||||

retry_delay_seconds: 1800

|

||||

retries: 2

|

||||

backoff: fixed

|

||||

run: |

|

||||

lychee \

|

||||

--scheme https \

|

||||

|

|

@ -70,6 +71,7 @@ jobs:

|

|||

timeout_minutes: 60

|

||||

retry_delay_seconds: 1800

|

||||

retries: 2

|

||||

backoff: fixed

|

||||

run: |

|

||||

lychee \

|

||||

--scheme https \

|

||||

|

|

|
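Both hunks above add `backoff: fixed` next to `retries: 2` and `retry_delay_seconds: 1800`. The sketch below shows what a fixed-delay retry loop looks like in general. It assumes that `retries` counts additional attempts after the first one and that "fixed" means the same delay before every retry. Those semantics are assumptions for illustration, not a description of the `ultralytics/actions/retry` implementation, and `retry_fixed` is a hypothetical name.

```python
import time


def retry_fixed(fn, retries=2, delay_seconds=1800, sleep=time.sleep):
    """Call fn(); on failure wait a constant delay and try again.

    Assumption: `retries` is the number of extra attempts after the first.
    `sleep` is injectable so the loop can be exercised without waiting.
    """
    attempts = retries + 1
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == attempts:
                raise  # out of attempts: propagate the last error
            sleep(delay_seconds)  # fixed backoff: identical delay every time


if __name__ == "__main__":
    calls = {"n": 0}

    def flaky():
        # Fails twice, then succeeds.
        calls["n"] += 1
        if calls["n"] < 3:
            raise RuntimeError("transient failure")
        return "ok"

    waits = []
    print(retry_fixed(flaky, sleep=waits.append), waits)
```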

|||

|

|

@ -29,9 +29,9 @@ First-time contributors are expected to submit small, well-scoped pull requests.

|

|||

|

||||

#### Established Contributors

|

||||

|

||||

Pull requests from established contributors generally receive higher review priority. Actions and results are fundamental to the [Ultralytics Mission & Values](https://handbook.ultralytics.com/mission-vision-values/). There is no specific threshold to becoming an 'established contributor' as it's impossible to fit all individuals to the same standard. The Ultralytics Team notices those who make consistent, high-quality contributions that follow the Ultralytics standards.

|

||||

Pull requests from established contributors generally receive higher review priority. Actions and results are fundamental to the [Ultralytics Mission & Values](https://handbook.ultralytics.com/mission-vision-values). There is no specific threshold to becoming an 'established contributor' as it's impossible to fit all individuals to the same standard. The Ultralytics Team notices those who make consistent, high-quality contributions that follow the Ultralytics standards.

|

||||

|

||||

Following our [contributing guidelines](./CONTRIBUTING.md) and [our Development Workflow](https://handbook.ultralytics.com/workflows/development/) is the best way to improve your chances for your work to be reviewed, accepted, and/or recognized; this is not a guarantee. In addition, contributors with a strong track record of meaningful contributions to notable open-source projects may be treated as established contributors, even if they are technically first-time contributors to Ultralytics.

|

||||

Following our [contributing guidelines](./CONTRIBUTING.md) and [our Development Workflow](https://handbook.ultralytics.com/workflows/development) is the best way to improve your chances for your work to be reviewed, accepted, and/or recognized; this is not a guarantee. In addition, contributors with a strong track record of meaningful contributions to notable open-source projects may be treated as established contributors, even if they are technically first-time contributors to Ultralytics.

|

||||

|

||||

#### Feature PRs

|

||||

|

||||

|

|

@ -156,11 +156,11 @@ We highly value bug reports as they help us improve the quality and reliability

|

|||

|

||||

Ultralytics uses the [GNU Affero General Public License v3.0 (AGPL-3.0)](https://www.ultralytics.com/legal/agpl-3-0-software-license) for its repositories. This license promotes [openness](https://en.wikipedia.org/wiki/Openness), [transparency](https://www.ultralytics.com/glossary/transparency-in-ai), and [collaborative improvement](https://en.wikipedia.org/wiki/Collaborative_software) in software development. It ensures that all users have the freedom to use, modify, and share the software, fostering a strong community of collaboration and innovation.

|

||||

|

||||

We encourage all contributors to familiarize themselves with the terms of the [AGPL-3.0 license](https://opensource.org/license/agpl-v3) to contribute effectively and ethically to the Ultralytics open-source community.

|

||||

We encourage all contributors to familiarize themselves with the terms of the [AGPL-3.0 license](https://opensource.org/license/agpl-3.0) to contribute effectively and ethically to the Ultralytics open-source community.

|

||||

|

||||

## 🌍 Open-Sourcing Your YOLO Project Under AGPL-3.0

|

||||

|

||||

Using Ultralytics YOLO models or code in your project? The [AGPL-3.0 license](https://opensource.org/license/agpl-v3) requires that your entire derivative work also be open-sourced under AGPL-3.0. This ensures modifications and larger projects built upon open-source foundations remain open.

|

||||

Using Ultralytics YOLO models or code in your project? The [AGPL-3.0 license](https://opensource.org/license/agpl-3.0) requires that your entire derivative work also be open-sourced under AGPL-3.0. This ensures modifications and larger projects built upon open-source foundations remain open.

|

||||

|

||||

### Why AGPL-3.0 Compliance Matters

|

||||

|

||||

|

|

@ -179,7 +179,7 @@ Complying means making the **complete corresponding source code** of your projec

|

|||

- **Use Ultralytics Template:** Start with the [Ultralytics template repository](https://github.com/ultralytics/template) for a clean, modular setup integrating YOLO.

|

||||

|

||||

2. **License Your Project:**

|

||||

- Add a `LICENSE` file containing the full text of the [AGPL-3.0 license](https://opensource.org/license/agpl-v3).

|

||||

- Add a `LICENSE` file containing the full text of the [AGPL-3.0 license](https://opensource.org/license/agpl-3.0).

|

||||

- Add a notice at the top of each source file indicating the license.

|

||||

|

||||

3. **Publish Your Source Code:**

|

||||

|

|

|

|||

|

|

@ -252,7 +252,7 @@ We look forward to your contributions to help make the Ultralytics ecosystem eve

|

|||

|

||||

Ultralytics offers two licensing options to suit different needs:

|

||||

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-v3) open-source license is perfect for students, researchers, and enthusiasts. It encourages open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for full details.

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-3.0) open-source license is perfect for students, researchers, and enthusiasts. It encourages open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for full details.

|

||||

- **Ultralytics Enterprise License**: Designed for commercial use, this license allows for the seamless integration of Ultralytics software and AI models into commercial products and services, bypassing the open-source requirements of AGPL-3.0. If your use case involves commercial deployment, please contact us via [Ultralytics Licensing](https://www.ultralytics.com/license).

|

||||

|

||||

## 📞 Contact

|

||||

|

|

|

|||

|

|

@ -252,7 +252,7 @@ Ultralytics 支持广泛的 YOLO 模型,从早期的版本如 [YOLOv3](https:/

|

|||

|

||||

Ultralytics 提供两种许可选项以满足不同需求:

|

||||

|

||||

- **AGPL-3.0 许可证**:这种经 [OSI 批准](https://opensource.org/license/agpl-v3)的开源许可证非常适合学生、研究人员和爱好者。它鼓励开放协作和知识共享。有关完整详细信息,请参阅 [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) 文件。

|

||||

- **AGPL-3.0 许可证**:这种经 [OSI 批准](https://opensource.org/license/agpl-3.0)的开源许可证非常适合学生、研究人员和爱好者。它鼓励开放协作和知识共享。有关完整详细信息,请参阅 [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) 文件。

|

||||

- **Ultralytics 企业许可证**:专为商业用途设计,此许可证允许将 Ultralytics 软件和 AI 模型无缝集成到商业产品和服务中,绕过 AGPL-3.0 的开源要求。如果您的使用场景涉及商业部署,请通过 [Ultralytics 授权许可](https://www.ultralytics.com/license)与我们联系。

|

||||

|

||||

## 📞 联系方式

|

||||

|

|

|

|||

|

|

@ -41,8 +41,8 @@ RUN sed -i 's/^\( *"tensorflowjs\)>=.*\(".*\)/\1>=3.9.0\2/' pyproject.toml && \

|

|||

# Pip install onnxruntime-gpu, torch, torchvision and ultralytics, then remove build files

|

||||

RUN uv pip install --system \

|

||||

https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.18.0-cp38-cp38-linux_aarch64.whl \

|

||||

https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.2.0-cp38-cp38-linux_aarch64.whl \

|

||||

https://github.com/ultralytics/assets/releases/download/v0.0.0/torchvision-0.17.2+c1d70fe-cp38-cp38-linux_aarch64.whl && \

|

||||

https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.1.0a0+41361538.nv23.06-cp38-cp38-linux_aarch64.whl \

|

||||

https://github.com/ultralytics/assets/releases/download/v0.0.0/torchvision-0.16.2+c6f3977-cp38-cp38-linux_aarch64.whl && \

|

||||

# Need lower version of 'numpy' for TensorRT export

|

||||

uv pip install --system numpy==1.23.5 && \

|

||||

uv pip install --system -e ".[export]" && \

|

||||

|

|

|

|||

|

|

@ -10,13 +10,13 @@ The [Caltech-256](https://data.caltech.edu/records/nyy15-4j048) dataset is an ex

|

|||

|

||||

<p align="center">

|

||||

<br>

|

||||

<iframe loading="lazy" width="720" height="405" src="https://www.youtube.com/embed/isc06_9qnM0"

|

||||

<iframe loading="lazy" width="720" height="405" src="https://www.youtube.com/embed/Y7cfNkqSdMg"

|

||||

title="YouTube video player" frameborder="0"

|

||||

allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share"

|

||||

allowfullscreen>

|

||||

</iframe>

|

||||

<br>

|

||||

<strong>Watch:</strong> How to Train <a href="https://www.ultralytics.com/glossary/image-classification">Image Classification</a> Model using Caltech-256 Dataset with Ultralytics Platform

|

||||

<strong>Watch:</strong> How to Train <a href="https://www.ultralytics.com/glossary/image-classification">Image Classification</a> Model using Caltech-256 Dataset with Ultralytics YOLO26

|

||||

</p>

|

||||

|

||||

!!! note "Automatic Data Splitting"

|

||||

|

|

|

|||

|

|

@ -146,7 +146,7 @@ Illustrations of the dataset help provide insights into its richness:

|

|||

|

||||

- **Open Images V7**: This image exemplifies the depth and detail of annotations available, including bounding boxes, relationships, and segmentation masks.

|

||||

|

||||

Researchers can gain invaluable insights into the array of computer vision challenges that the dataset addresses, from basic object detection to intricate relationship identification. The [diversity of annotations](https://docs.ultralytics.com/datasets/explorer/) makes Open Images V7 particularly valuable for developing models that can understand complex visual scenes.

|

||||

Researchers can gain invaluable insights into the array of computer vision challenges that the dataset addresses, from basic object detection to intricate relationship identification. The diversity of annotations makes Open Images V7 particularly valuable for developing models that can understand complex visual scenes.

|

||||

|

||||

## Citations and Acknowledgments

|

||||

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ keywords: TT100K, Tsinghua-Tencent 100K, traffic sign detection, YOLO26, dataset

|

|||

|

||||

The [Tsinghua-Tencent 100K (TT100K)](https://cg.cs.tsinghua.edu.cn/traffic-sign/) is a large-scale traffic sign benchmark dataset created from 100,000 Tencent Street View panoramas. This dataset is specifically designed for traffic sign detection and classification in real-world conditions, providing researchers and developers with a comprehensive resource for building robust traffic sign recognition systems.

|

||||

|

||||

The dataset contains **100,000 images** with over **30,000 traffic sign instances** across **221 different categories**. These images capture large variations in illuminance, weather conditions, viewing angles, and distances, making it ideal for training models that need to perform reliably in diverse real-world scenarios.

|

||||

The dataset contains **100,000 images** with over **30,000 traffic sign instances** across **221 annotation categories**. The original paper applies a 100-instance threshold per class for supervised training, yielding a commonly used **45-class** subset; however, the provided Ultralytics dataset configuration retains all **221 annotated categories**, many of which are very sparse. These images capture large variations in illuminance, weather conditions, viewing angles, and distances, making it ideal for training models that need to perform reliably in diverse real-world scenarios.
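The 100-instance threshold mentioned above amounts to counting annotations per class and keeping only the classes that reach the cutoff. A minimal sketch of that filtering step follows. The label strings and counts are toy data, the function name `frequent_classes` is hypothetical, and treating the threshold as "at least 100" rather than "more than 100" is an assumption.

```python
from collections import Counter


def frequent_classes(labels, min_instances=100):
    """Return the class names that occur at least min_instances times."""
    counts = Counter(labels)
    return sorted(name for name, n in counts.items() if n >= min_instances)


if __name__ == "__main__":
    # Toy annotation list: one common class, one borderline, one sparse.
    toy_labels = ["sign_a"] * 250 + ["sign_b"] * 100 + ["sign_c"] * 7
    print(frequent_classes(toy_labels))
```

Applied to a dataset's full annotation list, a filter of this kind would separate the sparse categories from the ones with enough examples for supervised training.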

|

||||

|

||||

This dataset is particularly valuable for:

|

||||

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ keywords: Ultralytics, Explorer API, dataset exploration, SQL queries, similarit

|

|||

|

||||

!!! warning "Community Note ⚠️"

|

||||

|

||||

As of **`ultralytics>=8.3.10`**, Ultralytics Explorer support is deprecated. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

As of **`ultralytics>=8.3.12`**, Ultralytics Explorer has been removed. To use Explorer, install `pip install ultralytics==8.3.11`. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

|

||||

## Introduction

|

||||

|

||||

|

|

@ -331,13 +331,6 @@ Start creating your own CV dataset exploration reports using the Explorer API. F

|

|||

|

||||

Try our [GUI Demo](dashboard.md) based on Explorer API

|

||||

|

||||

## Coming Soon

|

||||

|

||||

- [ ] Merge specific labels from datasets. Example - Import all `person` labels from COCO and `car` labels from Cityscapes

|

||||

- [ ] Remove images that have a higher similarity index than the given threshold

|

||||

- [ ] Automatically persist new datasets after merging/removing entries

|

||||

- [ ] Advanced Dataset Visualizations

|

||||

|

||||

## FAQ

|

||||

|

||||

### What is the Ultralytics Explorer API used for?

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ keywords: Ultralytics Explorer GUI, semantic search, vector similarity, SQL quer

|

|||

|

||||

!!! warning "Community Note ⚠️"

|

||||

|

||||

As of **`ultralytics>=8.3.10`**, Ultralytics Explorer support is deprecated. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

As of **`ultralytics>=8.3.12`**, Ultralytics Explorer has been removed. To use Explorer, install `pip install ultralytics==8.3.11`. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

|

||||

Explorer GUI is built on the [Ultralytics Explorer API](api.md). It allows you to run semantic/vector similarity search, SQL queries, and natural language queries using the Ask AI feature powered by LLMs.

|

||||

|

||||

|

|

|

|||

|

|

@ -45,7 +45,7 @@ Install `ultralytics` and run `yolo explorer` in your terminal to run custom que

|

|||

|

||||

!!! warning "Community Note ⚠️"

|

||||

|

||||

As of **`ultralytics>=8.3.10`**, Ultralytics Explorer support is deprecated. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

As of **`ultralytics>=8.3.12`**, Ultralytics Explorer has been removed. To use Explorer, install `pip install ultralytics==8.3.11`. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

|

||||

## Setup

|

||||

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ keywords: Ultralytics Explorer, CV datasets, semantic search, SQL queries, vecto

|

|||

|

||||

!!! warning "Community Note ⚠️"

|

||||

|

||||

As of **`ultralytics>=8.3.10`**, Ultralytics Explorer support is deprecated. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

As of **`ultralytics>=8.3.12`**, Ultralytics Explorer has been removed. To use Explorer, install `pip install ultralytics==8.3.11`. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

|

||||

|

||||

<p>

|

||||

<img width="1709" alt="Ultralytics Explorer dataset visualization GUI" src="https://cdn.jsdelivr.net/gh/ultralytics/assets@main/docs/explorer-dashboard-screenshot-1.avif">

|

||||

|

|

@ -39,7 +39,7 @@ pip install ultralytics[explorer]

|

|||

|

||||

!!! tip

|

||||

|

||||

Explorer works on embedding/semantic search & SQL querying and is powered by [LanceDB](https://lancedb.com/) serverless vector database. Unlike traditional in-memory DBs, it is persisted on disk without sacrificing performance, so you can scale locally to large datasets like COCO without running out of memory.

|

||||

Explorer works on embedding/semantic search & SQL querying and is powered by [LanceDB](https://www.lancedb.com/) serverless vector database. Unlike traditional in-memory DBs, it is persisted on disk without sacrificing performance, so you can scale locally to large datasets like COCO without running out of memory.

|

||||

|

||||

## Explorer API

|

||||

|

||||

|

|

@ -68,7 +68,7 @@ yolo explorer

|

|||

|

||||

### What is Ultralytics Explorer and how can it help with CV datasets?

|

||||

|

||||

Ultralytics Explorer is a powerful tool designed for exploring [computer vision](https://www.ultralytics.com/glossary/computer-vision-cv) (CV) datasets through semantic search, SQL queries, vector similarity search, and even natural language. This versatile tool provides both a GUI and a Python API, allowing users to seamlessly interact with their datasets. By leveraging technologies like [LanceDB](https://lancedb.com/), Ultralytics Explorer ensures efficient, scalable access to large datasets without excessive memory usage. Whether you're performing detailed dataset analysis or exploring data patterns, Ultralytics Explorer streamlines the entire process.

|

||||

Ultralytics Explorer is a powerful tool designed for exploring [computer vision](https://www.ultralytics.com/glossary/computer-vision-cv) (CV) datasets through semantic search, SQL queries, vector similarity search, and even natural language. This versatile tool provides both a GUI and a Python API, allowing users to seamlessly interact with their datasets. By leveraging technologies like [LanceDB](https://www.lancedb.com/), Ultralytics Explorer ensures efficient, scalable access to large datasets without excessive memory usage. Whether you're performing detailed dataset analysis or exploring data patterns, Ultralytics Explorer streamlines the entire process.

|

||||

|

||||

Learn more about the [Explorer API](api.md).

|

||||

|

||||

|

|

@ -80,7 +80,7 @@ To manually install the optional dependencies needed for Ultralytics Explorer, y

|

|||

pip install ultralytics[explorer]

|

||||

```

|

||||

|

||||

These dependencies are essential for the full functionality of semantic search and SQL querying. By including libraries powered by [LanceDB](https://lancedb.com/), the installation ensures that the database operations remain efficient and scalable, even for large datasets like [COCO](../detect/coco.md).

|

||||

These dependencies are essential for the full functionality of semantic search and SQL querying. By including libraries powered by [LanceDB](https://www.lancedb.com/), the installation ensures that the database operations remain efficient and scalable, even for large datasets like [COCO](../detect/coco.md).

|

||||

|

||||

### How can I use the GUI version of Ultralytics Explorer?

|

||||

|

||||

|

|

|

|||

|

|

@ -195,7 +195,7 @@ To add your dataset:

|

|||

|

||||

### What is the purpose of the dataset YAML file in Ultralytics YOLO?

|

||||

|

||||

The dataset YAML file in Ultralytics YOLO defines the dataset and model configuration for training. It specifies paths to training, validation, and test images, keypoint shapes, class names, and other configuration options. This structured format helps streamline [dataset management](https://docs.ultralytics.com/datasets/explorer/) and model training. Here is an example YAML format:

|

||||

The dataset YAML file in Ultralytics YOLO defines the dataset and model configuration for training. It specifies paths to training, validation, and test images, keypoint shapes, class names, and other configuration options. This structured format helps streamline dataset management and model training. Here is an example YAML format:

|

||||

|

||||

```yaml

|

||||

--8<-- "ultralytics/cfg/datasets/coco8-pose.yaml"

|

||||

|

|

|

|||

|

|

@ -93,6 +93,7 @@ This logs the mean F1 score across all classes and a per-class breakdown after e

|

|||

| Attribute | Description |

|

||||

|---|---|

|

||||

| `f1` | F1 score per class |

|

||||

| `image_metrics` | Per-image metrics dictionary with precision, recall, F1, TP, FP, and FN |

|

||||

| `p` | Precision per class |

|

||||

| `r` | Recall per class |

|

||||

| `ap50` | AP at IoU 0.5 per class |

|

||||

|

|
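The `image_metrics` row added above lists precision, recall, F1, TP, FP, and FN per image. The standard relationship between those counts and scores is sketched below. The function name `image_prf` and the returned key names are hypothetical and are not taken from the library's actual dictionary layout.

```python
def image_prf(tp: int, fp: int, fn: int) -> dict:
    """Compute precision, recall and F1 from per-image detection counts.

    Zero denominators yield 0.0 instead of raising, a common convention for
    images with no predictions or no ground-truth objects.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1,
            "tp": tp, "fp": fp, "fn": fn}


if __name__ == "__main__":
    # 8 correct detections, 2 false alarms, 2 missed objects.
    print(image_prf(tp=8, fp=2, fn=2))
```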

|

|||

|

|

@ -106,7 +106,7 @@ Let's say you are ready to annotate now. There are several open-source tools ava

|

|||

<img width="100%" src="https://cdn.jsdelivr.net/gh/ultralytics/assets@main/docs/labelme-instance-segmentation-annotation.avif" alt="LabelMe annotation tool for instance segmentation">

|

||||

</p>

|

||||

|

||||

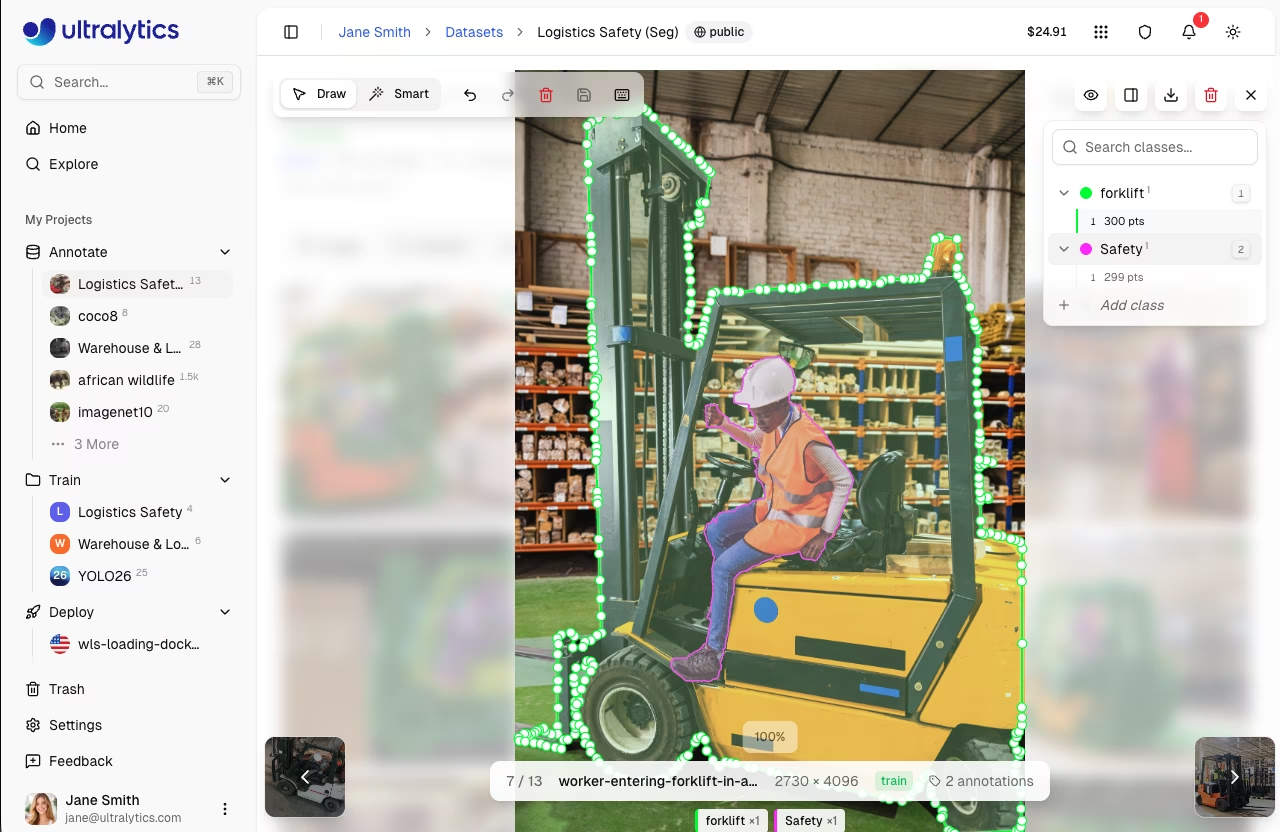

These open-source tools are budget-friendly and provide a range of features to meet different annotation needs.

|

||||

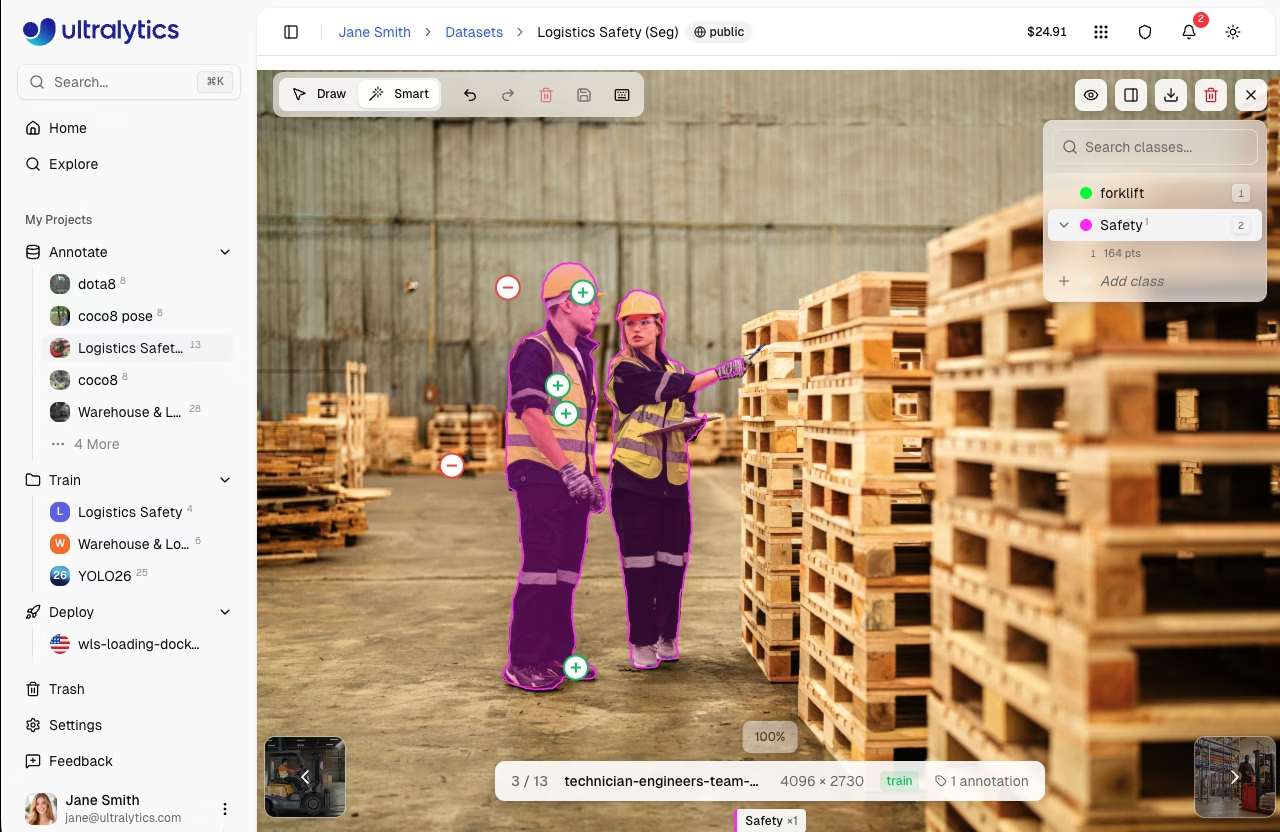

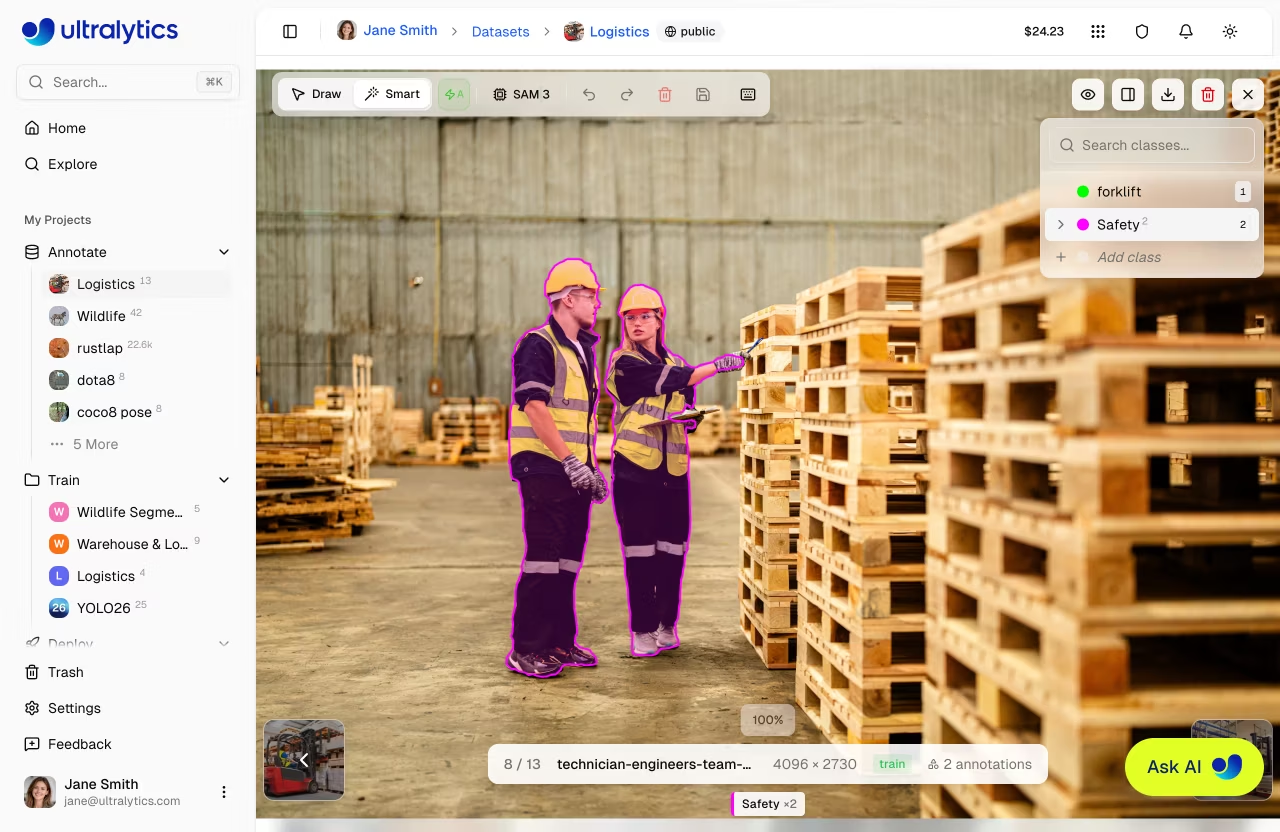

These open-source tools are budget-friendly and provide a range of features to meet different annotation needs. [Ultralytics Platform](https://platform.ultralytics.com) also provides a built-in [annotation editor](../platform/data/annotation.md) supporting all YOLO task types (detection, segmentation, pose, OBB, and classification) with SAM-powered smart annotation for spatial tasks.

|

||||

|

||||

### Some More Things to Consider Before Annotating Data

|

||||

|

||||

|

|

|

|||

|

|

@ -36,9 +36,10 @@ Before you start to follow this guide:

|

|||

|

||||

- Visit our documentation, [Quick Start Guide: NVIDIA Jetson with Ultralytics YOLO26](nvidia-jetson.md) to set up your NVIDIA Jetson device with Ultralytics YOLO26

|

||||

- Install [DeepStream SDK](https://developer.nvidia.com/deepstream-getting-started) according to the JetPack version

|

||||

- For JetPack 4.6.4, install [DeepStream 6.0.1](https://docs.nvidia.com/metropolis/deepstream/6.0.1/dev-guide/text/DS_Quickstart.html)

|

||||

- For JetPack 5.1.3, install [DeepStream 6.3](https://docs.nvidia.com/metropolis/deepstream/6.3/dev-guide/text/DS_Quickstart.html)

|

||||

- For JetPack 6.1, install [DeepStream 7.1](https://docs.nvidia.com/metropolis/deepstream/7.0/dev-guide/text/DS_Overview.html)

|

||||

- For JetPack 4.6.4, install [DeepStream 6.0.1](https://archive.docs.nvidia.com/metropolis/deepstream/6.0.1/dev-guide/text/DS_Quickstart.html)

|

||||

- For JetPack 5.1.3, install [DeepStream 6.3](https://archive.docs.nvidia.com/metropolis/deepstream/6.3/dev-guide/text/DS_Quickstart.html)

|

||||

- For JetPack 6.1, install [DeepStream 7.1](https://docs.nvidia.com/metropolis/deepstream/7.1/text/DS_Overview.html)

|

||||

- For JetPack 7.1, install [DeepStream 9.0](https://docs.nvidia.com/metropolis/deepstream/9.0/text/DS_Overview.html)

|

||||

|

||||

!!! tip

|

||||

|

||||

|

|

|

|||

190

docs/en/guides/finetuning-guide.md

Normal file

|

|

@ -0,0 +1,190 @@

|

|||

---

|

||||

comments: true

|

||||

description: Learn how to fine-tune YOLO26 on a custom dataset with pretrained weights. Complete guide covering transfer learning, layer freezing, optimizer selection, two-stage training, and troubleshooting common issues like low mAP and catastrophic forgetting.

|

||||

keywords: fine-tune YOLO, finetune YOLO custom dataset, YOLO transfer learning, YOLO26 fine-tuning, freeze layers YOLO, pretrained YOLO custom data, YOLO training from scratch vs fine-tuning, catastrophic forgetting YOLO, two-stage fine-tuning, YOLO optimizer selection, fine-tune object detection model, custom object detection training, YOLO freeze backbone, how to finetune YOLO26

|

||||

---

|

||||

|

||||

# How to Fine-Tune YOLO on a Custom Dataset

|

||||

|

||||

[Fine-tuning](https://www.ultralytics.com/glossary/fine-tuning) adapts a pretrained model to recognize new classes by starting from learned weights rather than random initialization. Instead of training from scratch for hundreds of epochs, fine-tuning leverages pretrained [COCO](../datasets/detect/coco.md) features and converges on custom data in a fraction of the time.

|

||||

|

||||

This guide covers fine-tuning [YOLO26](../models/yolo26.md) on custom datasets, from basic usage to advanced techniques like [layer freezing](#freezing-layers) and [two-stage training](#two-stage-fine-tuning).

|

||||

|

||||

## Fine-Tuning vs Training from Scratch

|

||||

|

||||

A pretrained model has already learned general visual features - edge detection, texture recognition, shape understanding - from millions of images. [Transfer learning](https://www.ultralytics.com/glossary/transfer-learning) through fine-tuning reuses that knowledge and only teaches the model what the new classes look like, which is why it converges faster and requires less data. Training from scratch discards all of that and forces the model to learn everything from pixel-level patterns up, which demands significantly more resources.

|

||||

|

||||

|                       | Fine-Tuning                                          | Training from Scratch                                                 |
| --------------------- | ---------------------------------------------------- | --------------------------------------------------------------------- |
| **Starting weights**  | Pretrained on COCO (80 classes)                      | Random initialization                                                 |
| **Command**           | `YOLO("yolo26n.pt")`                                 | `YOLO("yolo26n.yaml")`                                                |
| **Convergence**       | Faster - backbone is already trained                 | Slower - all layers learn from zero                                   |
| **Data requirements** | Lower - pretrained features compensate for less data | Higher - model must learn all features from the dataset alone         |
| **When to use**       | Custom classes with natural images                   | Domains fundamentally different from COCO (medical, satellite, radar) |

|

||||

|

||||

!!! tip "Fine-tuning requires no extra code"

    When a `.pt` file is loaded with `YOLO("yolo26n.pt")`, the pretrained weights are stored in the model. Calling `.train(data="custom.yaml")` after that automatically transfers all compatible weights to the new model architecture, reinitializes any layers that don't match (such as the detection head when the number of classes differs), and begins training. No manual weight loading, layer manipulation, or custom transfer learning code is required.

|

||||

|

||||

### How Pretrained Weight Transfer Works

|

||||

|

||||

When a pretrained model is fine-tuned on a dataset with a different number of classes (for example, COCO's 80 classes to 5 custom classes), Ultralytics performs shape-aware weight transfer:

|

||||

|

||||

1. **Backbone and neck transfer fully** - these layers extract general visual features and their shapes are independent of the number of classes.

|

||||

2. **Detection head is partially reinitialized** - the classification output layers (`cv3`, `one2one_cv3`) have shapes tied to the class count (80 vs 5), so they cannot transfer and are randomly initialized. Box regression layers (`cv2`, `one2one_cv2`) in the head have fixed shapes regardless of class count, so they transfer normally.

|

||||

3. **The vast majority of weights transfer** when changing class count. Only the classification-specific layers in the detection head are reinitialized - the backbone, neck, and box regression branches remain intact.

|

||||

|

||||

For datasets with the same number of classes as the pretrained model (for example, fine-tuning COCO-pretrained weights on another 80-class dataset), 100% of weights transfer including the detection head.
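
The shape-aware transfer described above can be illustrated with a minimal sketch. It is not the Ultralytics implementation: plain tuples stand in for tensors, and the parameter names (`backbone.0.conv.weight`, `head.cv2.0.weight`, `head.cv3.0.weight`) and shapes are hypothetical, chosen only to mirror the `cv2`/`cv3` distinction.

```python
# Sketch only: shapes stand in for tensors; all parameter names are hypothetical.
def intersect_by_shape(pretrained, target):
    """Return the pretrained entries whose name and shape both match the target."""
    return {
        name: shape
        for name, shape in pretrained.items()
        if name in target and target[name] == shape
    }


# Hypothetical parameter shapes for an 80-class checkpoint and a 5-class model.
pretrained = {
    "backbone.0.conv.weight": (16, 3, 3, 3),
    "head.cv2.0.weight": (64, 64, 1, 1),  # box regression: independent of class count
    "head.cv3.0.weight": (80, 64, 1, 1),  # classification: 80 output channels
}
target = {
    "backbone.0.conv.weight": (16, 3, 3, 3),
    "head.cv2.0.weight": (64, 64, 1, 1),
    "head.cv3.0.weight": (5, 64, 1, 1),  # classification: 5 output channels
}

transferred = intersect_by_shape(pretrained, target)
reinitialized = sorted(set(target) - set(transferred))
print(f"transferred {len(transferred)}/{len(target)} entries")
print("reinitialized:", reinitialized)
```

Entries that fail the shape check keep whatever initialization the new model gave them. In this sketch that applies only to the classification layer.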

|

||||

|

||||

## Basic Fine-Tuning Example

|

||||

|

||||

!!! example

    === "Python"

        ```python
        from ultralytics import YOLO

        model = YOLO("yolo26n.pt")  # load pretrained model
        model.train(data="path/to/data.yaml", epochs=50, imgsz=640)
        ```

    === "CLI"

        ```bash
        yolo detect train model=yolo26n.pt data=path/to/data.yaml epochs=50 imgsz=640
        ```

|

||||

|

||||

### Choosing a Model Size

|

||||

|

||||

Larger models have more capacity but also more parameters to update, which can increase the risk of overfitting when training data is limited. Starting with a smaller model (YOLO26n or YOLO26s) and scaling up only if validation metrics plateau is a practical approach. The optimal model size depends on the complexity of the task, the number of classes, the diversity of the dataset, and the hardware available for deployment. See the full [YOLO26 model page](../models/yolo26.md) for available sizes and performance benchmarks.

|

||||

|

||||

## Optimizer and Learning Rate Selection

|

||||

|

||||

The default `optimizer=auto` setting selects the optimizer and learning rate based on the total number of training iterations:

|

||||

|

||||

- **< 10,000 iterations** (small datasets or few epochs): AdamW with a low, auto-calculated learning rate

|

||||

- **> 10,000 iterations** (large datasets): [MuSGD](../reference/optim/muon.md) (a hybrid Muon+SGD optimizer) with lr=0.01
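
As a rough illustration of the rule above, the sketch below estimates the iteration count and picks an optimizer name. The helper `pick_auto_optimizer` is hypothetical, the estimate `ceil(images / batch) * epochs` is an assumption about how iterations are counted, and treating exactly 10,000 iterations as the AdamW case is also an assumption.

```python
import math


def pick_auto_optimizer(num_images, batch_size, epochs, threshold=10_000):
    """Estimate total iterations and apply the threshold rule (hypothetical helper)."""
    # Assumption: one iteration per batch, with a partial last batch counted as one.
    iterations = math.ceil(num_images / batch_size) * epochs
    # Assumption: exactly `threshold` iterations falls on the AdamW side.
    name = "MuSGD" if iterations > threshold else "AdamW"
    return name, iterations


small = pick_auto_optimizer(num_images=500, batch_size=16, epochs=50)
large = pick_auto_optimizer(num_images=100_000, batch_size=16, epochs=50)
print("small dataset:", small)
print("large dataset:", large)
```

A quick estimate like this shows which branch a run is likely to take before training starts.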

|

||||

|

||||

For most fine-tuning tasks, the default setting works well without any manual tuning. Consider setting the optimizer explicitly when:

|

||||

|

||||

- **Training is unstable** (loss spikes or diverges): try `optimizer=AdamW, lr0=0.001` for more stable convergence

|

||||

- **Fine-tuning a large model on a small dataset**: a lower learning rate like `lr0=0.001` helps preserve pretrained features

|

||||

|

||||

!!! warning "Auto optimizer overrides manual lr0"

    When `optimizer=auto`, the `lr0` and `momentum` values are ignored. To control the learning rate manually, set the optimizer explicitly: `optimizer=SGD, lr0=0.005`.

|

||||

|

||||

## Freezing Layers

|

||||

|

||||

Freezing prevents specific layers from updating during training. This speeds up training and reduces [overfitting](https://www.ultralytics.com/glossary/overfitting) when the dataset is small relative to the model capacity.

|

||||

|

||||

The `freeze` parameter accepts either an integer or a list. An integer `freeze=10` freezes the first 10 layers (0 through 9, which corresponds to the backbone in YOLO26). A list can contain layer indices like `freeze=[0, 3, 5]` for partial backbone freezing, or module name strings like `freeze=["23.cv2"]` for fine-grained control over specific branches within a layer.
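
The three accepted forms can be pictured as resolving to parameter-name prefixes. The sketch below is illustrative and not the library's code: `resolve_freeze` and `is_frozen` are hypothetical helpers, and the `model.<index>.` naming scheme is an assumption.

```python
def resolve_freeze(freeze):
    """Turn a freeze setting into parameter-name prefixes (assumed `model.<index>.` naming)."""
    if freeze is None:
        return []
    # An integer N means layers 0..N-1; a list is used as given (indices or module names).
    items = range(freeze) if isinstance(freeze, int) else freeze
    return [f"model.{item}." for item in items]


def is_frozen(param_name, prefixes):
    """A parameter counts as frozen when its name starts with any frozen prefix."""
    return any(param_name.startswith(prefix) for prefix in prefixes)


backbone = resolve_freeze(10)
print(is_frozen("model.9.conv.weight", backbone))   # layer 9 is inside freeze=10
print(is_frozen("model.10.conv.weight", backbone))  # layer 10 is not
print(resolve_freeze([0, 3, 5]))
print(resolve_freeze(["23.cv2"]))
```

The trailing dot in each prefix keeps `model.1.` from also matching `model.10.`.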

|

||||

|

||||

!!! example

    === "Freeze backbone"

        ```python
        model.train(data="custom.yaml", epochs=50, freeze=10)
        ```

    === "Freeze specific layers"

        ```python
        model.train(data="custom.yaml", epochs=50, freeze=[0, 1, 2, 3, 4])
        ```

    === "Freeze by module name"

        ```python
        # Freeze the box regression branch of the detection head
        model.train(data="custom.yaml", epochs=50, freeze=["23.cv2"])
        ```

|

||||

|

||||

The right freeze depth depends on how similar the target domain is to the pretrained data and how much training data is available:

|

||||

|

||||

| Scenario                      | Recommendation          | Rationale                                                   |
| ----------------------------- | ----------------------- | ----------------------------------------------------------- |
| Large dataset, similar domain | `freeze=None` (default) | Enough data to adapt all layers without overfitting         |
| Small dataset, similar domain | `freeze=10`             | Preserves backbone features, reduces trainable parameters   |
| Very small dataset            | `freeze=23`             | Only the detection head trains, minimizing overfitting risk |
| Domain far from COCO          | `freeze=None`           | Backbone features may not transfer well and need retraining |

|

||||

|

||||

Freeze depth can also be treated as a hyperparameter - trying a few values (0, 5, 10) and comparing validation mAP is a practical way to find the best setting for a specific dataset.

|

||||

|

||||

## Key Hyperparameters for Fine-Tuning

|

||||

|

||||

Fine-tuning generally requires fewer hyperparameter adjustments than training from scratch. The parameters that matter most are:

|

||||

|

||||

- **`epochs`**: Fine-tuning converges faster than training from scratch. Start with a moderate value and use `patience` to stop early when validation metrics plateau.

|

||||

- **`patience`**: The default of 100 is designed for long training runs. Reducing this to 10-20 avoids wasting time on runs that have already converged.

|

||||

- **`warmup_epochs`**: The default warmup (3 epochs) gradually increases the learning rate from zero, which prevents large gradient updates from damaging pretrained features in early iterations. Keeping the default is recommended even for fine-tuning.
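
To make the effect of `patience` concrete, here is a minimal early-stopping sketch. It is a generic illustration, not the trainer's own logic: the `EarlyStopper` class is hypothetical and the validation values are made up.

```python
class EarlyStopper:
    """Stop when the fitness metric has not improved for `patience` epochs."""

    def __init__(self, patience=10):
        self.patience = patience
        self.best_fitness = float("-inf")
        self.best_epoch = 0

    def should_stop(self, epoch, fitness):
        # Record a new best only on a strict improvement.
        if fitness > self.best_fitness:
            self.best_fitness = fitness
            self.best_epoch = epoch
        return epoch - self.best_epoch >= self.patience


# Made-up validation mAP values that plateau after epoch 2.
history = [0.30, 0.42, 0.47, 0.47, 0.46, 0.47, 0.46]
stopper = EarlyStopper(patience=3)
stopped_at = None
for epoch, fitness in enumerate(history):
    if stopper.should_stop(epoch, fitness):
        stopped_at = epoch
        break
print("best epoch:", stopper.best_epoch, "stopped at epoch:", stopped_at)
```

With a small `patience`, a run that has plateaued ends a few epochs after its best result instead of continuing to the full epoch budget.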

|

||||

|

||||

For the full list of training parameters, see the [training configuration reference](../usage/cfg.md).

|

||||

|

||||

## Two-Stage Fine-Tuning

|

||||

|

||||

Two-stage fine-tuning splits training into two phases. The first stage freezes the backbone and trains only the neck and head, allowing the detection layers to adapt to the new classes without disrupting pretrained features. The second stage unfreezes all layers and trains the full model with a lower learning rate to refine the backbone for the target domain.

|

||||

|

||||

This approach is particularly useful when the target domain differs significantly from COCO (medical images, aerial imagery, microscopy), where the backbone may need adaptation but training everything at once causes instability. For automatic unfreezing with a callback-based approach, see [Freezing and Unfreezing the Backbone](custom-trainer.md#freezing-and-unfreezing-the-backbone).

|

||||

|

||||

!!! example "Two-stage fine-tuning"

    ```python
    from ultralytics import YOLO

    # Stage 1: freeze backbone, train head and neck
    model = YOLO("yolo26n.pt")
    model.train(data="custom.yaml", epochs=20, freeze=10, name="stage1", exist_ok=True)

    # Stage 2: unfreeze all, fine-tune with lower lr
    # Set the optimizer explicitly so that lr0 is not ignored by optimizer=auto
    model = YOLO("runs/detect/stage1/weights/best.pt")
    model.train(data="custom.yaml", epochs=30, optimizer="AdamW", lr0=0.001, name="stage2", exist_ok=True)
    ```

|

||||

|

||||

## Common Pitfalls

|

||||

|

||||

### Model produces no predictions

|

||||

|

||||

- **Insufficient training data**: training with very few samples is the most common cause - the model cannot learn or generalize from too little data. Ensure enough diverse examples per class before investigating other causes.

|

||||

- **Check dataset paths**: incorrect paths in `data.yaml` silently produce zero labels. Run `yolo detect val model=yolo26n.pt data=your_data.yaml` before training to confirm labels load correctly.

|

||||

- **Lower confidence threshold**: if predictions exist but are filtered out, try `conf=0.1` during inference.

|

||||

- **Verify class count**: ensure `nc` in `data.yaml` matches the actual number of classes in the label files.

|

||||

|

||||

### Validation mAP plateaus early

|

||||

|

||||

- **Add more data**: fine-tuning benefits significantly from additional training data, especially diverse examples with varied angles, lighting, and backgrounds.

|

||||

- **Check class balance**: underrepresented classes will have low AP. Use `cls_pw` to apply inverse frequency class weighting (start with `cls_pw=0.25` for moderate imbalance, increase to `1.0` for severe imbalance).

|

||||

- **Reduce augmentation**: for very small datasets, heavy augmentation can hurt more than it helps. Try `mosaic=0.5` or `mosaic=0.0`.

|

||||

- **Increase resolution**: for datasets with small objects, try `imgsz=1280` to preserve detail.
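
The class-balance item above mentions inverse frequency weighting controlled by a power. One common formulation, assumed here rather than taken from the Ultralytics source, raises the inverse class count to that power and rescales the weights to average 1.0. The `class_weights` helper and the instance counts are hypothetical.

```python
def class_weights(counts, power):
    """Inverse-frequency weights raised to `power`, rescaled to average 1.0.

    Assumes every count is greater than zero.
    """
    raw = [(1.0 / count) ** power for count in counts]
    mean = sum(raw) / len(raw)
    return [weight / mean for weight in raw]


counts = [1000, 100, 10]  # made-up instance counts per class
for power in (0.0, 0.25, 1.0):
    weights = class_weights(counts, power)
    print(power, [round(weight, 3) for weight in weights])
```

Under this assumed formula, a power of 0 leaves all classes equally weighted, and larger powers shift progressively more weight toward the rare classes.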

|

||||

|

||||

### Performance degrades on original classes after fine-tuning

|

||||

|

||||

This is known as catastrophic forgetting - the model loses previously learned knowledge when fine-tuned exclusively on new data. Forgetting is mostly unavoidable without including original dataset images alongside new data. To mitigate this:

|

||||

|

||||

- **Merge datasets**: include examples of the original classes alongside the new classes during fine-tuning. This is the only reliable way to prevent forgetting.

|

||||

- **Freeze backbone and neck**: freezing both the backbone and neck so only the detection head trains helps for short fine-tuning runs with a very low learning rate.

|

||||

- **Train for fewer epochs**: the longer the model trains on new data exclusively, the more forgetting increases.

|

||||

|

||||

## FAQ

|

||||

|

||||

### How many images do I need to fine-tune YOLO?

|

||||

|

||||

There is no fixed minimum - results depend on the complexity of the task, the number of classes, and how similar the domain is to COCO. More diverse images (varied lighting, angles, backgrounds) matter more than raw quantity. Start with what you have and scale up if validation metrics are insufficient.

|

||||

|

||||

### How do I fine-tune YOLO26 on a custom dataset?

|

||||

|

||||

Load a pretrained `.pt` file and call `.train()` with the path to a custom `data.yaml`. Ultralytics automatically handles [weight transfer](https://www.ultralytics.com/glossary/transfer-learning), detection head reinitialization, and optimizer selection. See the [Basic Fine-Tuning](#basic-fine-tuning-example) section for the complete code example.

|

||||

|

||||

### Why is my fine-tuned YOLO model not detecting anything?

|

||||

|

||||

Common causes are too little training data, incorrect paths in `data.yaml` (which silently produce zero labels), a mismatch between `nc` in the YAML and the actual label files, or a confidence threshold that is too high. See [Common Pitfalls](#model-produces-no-predictions) for a full troubleshooting checklist.

|

||||

|

||||

### Which YOLO layers should I freeze for fine-tuning?

|

||||

|

||||

It depends on the dataset size and domain similarity. For small datasets with a domain similar to COCO, freezing the backbone (`freeze=10`) prevents overfitting. For domains very different from COCO, leaving all layers unfrozen (`freeze=None`) allows the backbone to adapt. See [Freezing Layers](#freezing-layers) for detailed recommendations.

|

||||

|

||||

### How do I prevent catastrophic forgetting when fine-tuning YOLO on new classes?

|

||||

|

||||

Include examples of the original classes in the training data alongside the new classes. If that is not possible, freezing more layers (`freeze=10` or higher) and using a lower learning rate helps preserve the pretrained knowledge. See [Performance degrades on original classes](#performance-degrades-on-original-classes-after-fine-tuning) for more details.

|

||||

|

|

@ -216,4 +216,4 @@ cv2.destroyAllWindows()

|

|||

|

||||

### Why should businesses choose Ultralytics YOLO26 for heatmap generation in data analysis?

|

||||

|

||||

Ultralytics YOLO26 offers seamless integration of advanced object detection and real-time heatmap generation, making it an ideal choice for businesses looking to visualize data more effectively. The key advantages include intuitive data distribution visualization, efficient pattern detection, and enhanced spatial analysis for better decision-making. Additionally, YOLO26's cutting-edge features such as persistent tracking, customizable colormaps, and support for various export formats make it superior to other tools like [TensorFlow](https://www.ultralytics.com/glossary/tensorflow) and OpenCV for comprehensive data analysis. Learn more about business applications at [Ultralytics Plans](https://www.ultralytics.com/plans).

|

||||

Ultralytics YOLO26 offers seamless integration of advanced object detection and real-time heatmap generation, making it an ideal choice for businesses looking to visualize data more effectively. The key advantages include intuitive data distribution visualization, efficient pattern detection, and enhanced spatial analysis for better decision-making. Additionally, YOLO26's cutting-edge features such as persistent tracking, customizable colormaps, and support for various export formats make it superior to other tools like [TensorFlow](https://www.ultralytics.com/glossary/tensorflow) and OpenCV for comprehensive data analysis. Learn more about business applications at [Ultralytics Plans](https://www.ultralytics.com/pricing).

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ keywords: Ultralytics YOLO, hyperparameter tuning, machine learning, model optim

|

|||

|

||||

## Introduction

|

||||

|

||||

Hyperparameter tuning is not just a one-time setup but an iterative process aimed at optimizing the [machine learning](https://www.ultralytics.com/glossary/machine-learning-ml) model's performance metrics, such as accuracy, precision, and recall. In the context of Ultralytics YOLO, these hyperparameters could range from learning rate to architectural details, such as the number of layers or types of activation functions used.

|

||||

Hyperparameter tuning is not just a one-time setup but an iterative process aimed at optimizing the [machine learning](https://www.ultralytics.com/glossary/machine-learning-ml) model's performance metrics, such as accuracy, precision, and recall. In the context of Ultralytics YOLO, these hyperparameters could range from learning rate to architectural details, such as the number of layers or types of activation functions used. [Ultralytics Platform](https://platform.ultralytics.com) also supports [cloud training](../platform/train/cloud-training.md) with configurable hyperparameters and real-time metrics tracking.

|

||||

|

||||

<p align="center">

|

||||

<br>

|

||||

|

|

@ -70,7 +70,7 @@ Use metrics like AP50, F1-score, or custom metrics to evaluate the model's perfo

|

|||

|

||||

### Log Results

|

||||

|

||||

It's crucial to log both the performance metrics and the corresponding hyperparameters for future reference. Ultralytics YOLO automatically saves these results in CSV format.

|

||||

It's crucial to log both the performance metrics and the corresponding hyperparameters for future reference. Ultralytics YOLO automatically saves these results in NDJSON format.

|

||||

|

||||

### Repeat

|

||||

|

||||

|

|

@ -90,6 +90,7 @@ The following table lists the default search space parameters for hyperparameter

|

|||

| `warmup_momentum` | `float` | `(0.0, 0.95)` | Initial momentum during warmup phase. Gradually increases to the final momentum value |

|

||||

| `box` | `float` | `(1.0, 20.0)` | Bounding box loss weight in the total loss function. Balances box regression vs classification |

|

||||

| `cls` | `float` | `(0.1, 4.0)` | Classification loss weight in the total loss function. Higher values emphasize correct class prediction |

|

||||

| `cls_pw` | `float` | `(0.0, 1.0)` | Class weighting power for handling class imbalance. Higher values increase weight on rare classes |

|

||||

| `dfl` | `float` | `(0.4, 12.0)` | DFL (Distribution Focal Loss) weight in the total loss function. Higher values emphasize precise bounding box localization |

|

||||

| `hsv_h` | `float` | `(0.0, 0.1)` | Random hue augmentation range in HSV color space. Helps model generalize across color variations |

|

||||

| `hsv_s` | `float` | `(0.0, 0.9)` | Random saturation augmentation range in HSV space. Simulates different lighting conditions |

|

||||

|

|

@ -186,8 +187,8 @@ runs/

|

|||

├── ...

|

||||

└── tune/

|

||||

├── best_hyperparameters.yaml

|

||||

├── best_fitness.png

|

||||

├── tune_results.csv

|

||||

├── tune_fitness.png

|

||||

├── tune_results.ndjson

|

||||

├── tune_scatter_plots.png

|

||||

└── weights/

|

||||

├── last.pt

|

||||

|

|

@ -236,7 +237,7 @@ This YAML file contains the best-performing hyperparameters found during the tun

|

|||

copy_paste: 0.0

|

||||

```

|

||||

|

||||

#### best_fitness.png

|

||||

#### tune_fitness.png

|

||||

|

||||

This is a plot displaying fitness (typically a performance metric like AP50) against the number of iterations. It helps you visualize how well the genetic algorithm performed over time.

|

||||

|

||||

|

|

@ -247,23 +248,59 @@ This is a plot displaying fitness (typically a performance metric like AP50) aga

|

|||

<img width="640" src="https://cdn.jsdelivr.net/gh/ultralytics/assets@main/docs/best-fitness.avif" alt="Hyperparameter Tuning Fitness vs Iteration">

|

||||

</p>

|

||||

|

||||

#### tune_results.csv

|

||||

#### tune_results.ndjson

|

||||

|

||||

A CSV file containing detailed results of each iteration during the tuning. Each row in the file represents one iteration, and it includes metrics like fitness score, [precision](https://www.ultralytics.com/glossary/precision), [recall](https://www.ultralytics.com/glossary/recall), as well as the hyperparameters used.

|

||||

An NDJSON file containing detailed results of each tuning iteration. Each line is one JSON object with the aggregate fitness, tuned hyperparameters, and per-dataset metrics. Single-dataset and multi-dataset tuning use the same file format.

|

||||

|

||||

- **Format**: CSV

|

||||

- **Format**: NDJSON

|

||||

- **Usage**: Per-iteration results tracking.

|

||||

- **Example**:

|

||||

```csv

|

||||

fitness,lr0,lrf,momentum,weight_decay,warmup_epochs,warmup_momentum,box,cls,dfl,hsv_h,hsv_s,hsv_v,degrees,translate,scale,shear,perspective,flipud,fliplr,mosaic,mixup,copy_paste

|

||||

0.05021,0.01,0.01,0.937,0.0005,3.0,0.8,7.5,0.5,1.5,0.015,0.7,0.4,0.0,0.1,0.5,0.0,0.0,0.0,0.5,1.0,0.0,0.0

|

||||

0.07217,0.01003,0.00967,0.93897,0.00049,2.79757,0.81075,7.5,0.50746,1.44826,0.01503,0.72948,0.40658,0.0,0.0987,0.4922,0.0,0.0,0.0,0.49729,1.0,0.0,0.0

|

||||

0.06584,0.01003,0.00855,0.91009,0.00073,3.42176,0.95,8.64301,0.54594,1.72261,0.01503,0.59179,0.40658,0.0,0.0987,0.46955,0.0,0.0,0.0,0.49729,0.80187,0.0,0.0

|

||||

```

|

||||

|

||||

A pretty-printed example is shown below for readability. In the actual `.ndjson` file, each object is stored on a single line.

|

||||

|

||||

```json

|

||||

{

|

||||

"iteration": 1,

|

||||

"fitness": 0.23345,

|

||||

"hyperparameters": {

|

||||

"lr0": 0.01,

|

||||

"lrf": 0.01,

|

||||

"momentum": 0.937,

|

||||

"weight_decay": 0.0005

|

||||

},

|

||||

"datasets": {

|

||||

"coco8": {

|

||||

"fitness": 0.28992

|

||||

},

|

||||

"coco8-grayscale": {

|

||||

"fitness": 0.17697

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

{

|

||||

"iteration": 2,

|

||||

"fitness": 0.23661,

|

||||

"hyperparameters": {

|

||||

"lr0": 0.0062,

|

||||

"lrf": 0.01,

|

||||

"momentum": 0.90058,

|

||||

"weight_decay": 0.0

|

||||

},

|

||||

"datasets": {

|

||||

"coco8": {

|

||||

"fitness": 0.29561

|

||||

},

|

||||

"coco8-grayscale": {

|

||||

"fitness": 0.1776

|

||||

}

|

||||

}

|

||||

}

|

||||

```
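
Because each line of the file is an independent JSON object, the results can be read with the standard `json` module. The sketch below uses an in-memory string with two records shaped like the example above (hyperparameters trimmed for brevity). Reading from the real `tune_results.ndjson` would follow the same pattern, under the assumption that the field names match the example.

```python
import json

# Two records in NDJSON form (one object per line), values taken from the example above.
ndjson_text = """\
{"iteration": 1, "fitness": 0.23345, "hyperparameters": {"lr0": 0.01, "lrf": 0.01}, "datasets": {"coco8": {"fitness": 0.28992}, "coco8-grayscale": {"fitness": 0.17697}}}
{"iteration": 2, "fitness": 0.23661, "hyperparameters": {"lr0": 0.0062, "lrf": 0.01}, "datasets": {"coco8": {"fitness": 0.29561}, "coco8-grayscale": {"fitness": 0.1776}}}
"""

# Parse each non-empty line as its own JSON object.
records = [json.loads(line) for line in ndjson_text.splitlines() if line.strip()]

# Pick the iteration with the highest aggregate fitness.
best = max(records, key=lambda record: record["fitness"])
print("best iteration:", best["iteration"])
print("best hyperparameters:", best["hyperparameters"])
for name, metrics in best["datasets"].items():
    print(f"  {name}: fitness={metrics['fitness']}")
```

Parsing line by line also means a partially written file can still be read up to its last complete record.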

|

||||

|

||||

#### tune_scatter_plots.png

|

||||

|

||||

This file contains scatter plots generated from `tune_results.csv`, helping you visualize relationships between different hyperparameters and performance metrics. Note that hyperparameters initialized to 0 will not be tuned, such as `degrees` and `shear` below.

|

||||

This file contains scatter plots generated from `tune_results.ndjson`, helping you visualize relationships between different hyperparameters and performance metrics. Note that hyperparameters initialized to 0 will not be tuned, such as `degrees` and `shear` below.

|

||||

|

||||

- **Format**: PNG

|

||||

- **Usage**: Exploratory data analysis

|

||||

|

|

|

|||

|

|

@ -38,6 +38,7 @@ Here's a compilation of in-depth guides to help you master different aspects of

|

|||

- [Docker Quickstart](docker-quickstart.md): Complete guide to setting up and using Ultralytics YOLO models with [Docker](https://hub.docker.com/r/ultralytics/ultralytics). Learn how to install Docker, manage GPU support, and run YOLO models in isolated containers for consistent development and deployment.

|

||||

- [Edge TPU on Raspberry Pi](coral-edge-tpu-on-raspberry-pi.md): [Google Edge TPU](https://developers.google.com/coral) accelerates YOLO inference on [Raspberry Pi](https://www.raspberrypi.com/).

|

||||

- [End-to-End Detection](end2end-detection.md): Understand YOLO26's NMS-free end-to-end detection, export compatibility, output format changes, and how to migrate from older YOLO models.

|

||||

- [Fine-Tuning YOLO on Custom Data](finetuning-guide.md): Complete guide to fine-tuning YOLO26 on custom datasets with pretrained weights, covering transfer learning, layer freezing, optimizer selection, two-stage training, and troubleshooting.

|

||||

- [Hyperparameter Tuning](hyperparameter-tuning.md): Discover how to optimize your YOLO models by fine-tuning hyperparameters using the Tuner class and genetic evolution algorithms.

|

||||

- [Insights on Model Evaluation and Fine-Tuning](model-evaluation-insights.md): Gain insights into the strategies and best practices for evaluating and fine-tuning your computer vision models. Learn about the iterative process of refining models to achieve optimal results.

|

||||

- [Isolating Segmentation Objects](isolating-segmentation-objects.md): Step-by-step recipe and explanation on how to extract and/or isolate objects from images using Ultralytics Segmentation.

|

||||

|

|

@ -45,6 +46,7 @@ Here's a compilation of in-depth guides to help you master different aspects of

|

|||

- [Maintaining Your Computer Vision Model](model-monitoring-and-maintenance.md): Understand the key practices for monitoring, maintaining, and documenting computer vision models to guarantee accuracy, spot anomalies, and mitigate data drift.

|

||||

- [Model Deployment Options](model-deployment-options.md): Overview of YOLO [model deployment](https://www.ultralytics.com/glossary/model-deployment) formats like ONNX, OpenVINO, and TensorRT, with pros and cons for each to inform your deployment strategy.

|

||||

- [Model YAML Configuration Guide](model-yaml-config.md): A comprehensive deep dive into Ultralytics' model architecture definitions. Explore the YAML format, understand the module resolution system, and learn how to integrate custom modules seamlessly.

|

||||

- [NVIDIA DALI GPU Preprocessing](nvidia-dali.md): Eliminate CPU preprocessing bottlenecks by running YOLO letterbox resize, padding, and normalization on the GPU using NVIDIA DALI, with Triton Inference Server integration.

|

||||

- [NVIDIA DGX Spark](nvidia-dgx-spark.md): Quickstart guide for deploying YOLO models on NVIDIA DGX Spark devices.

|

||||

- [NVIDIA Jetson](nvidia-jetson.md): Quickstart guide for deploying YOLO models on NVIDIA Jetson devices.

|

||||

- [OpenVINO Latency vs Throughput Modes](optimizing-openvino-latency-vs-throughput-modes.md): Learn latency and throughput optimization techniques for peak YOLO inference performance.

|

||||

|

|

|

|||

197

docs/en/guides/modal-quickstart.md

Normal file

|

|

@ -0,0 +1,197 @@

|

|||

---

|

||||

comments: true

|

||||

description: Learn to set up Modal for running Ultralytics YOLO26 in the cloud. Follow our guide for easy serverless GPU inference and training.

|

||||

keywords: Ultralytics, Modal, YOLO26, serverless, cloud computing, GPU, machine learning, inference, training

|

||||

---

|

||||

|

||||

# Modal Quickstart Guide for Ultralytics

|

||||

|

||||

This guide provides a comprehensive introduction to running [Ultralytics YOLO26](../models/yolo26.md) on [Modal](https://modal.com/), covering serverless GPU inference and model training.

|

||||

|

||||

## What is Modal?

|

||||

|

||||

[Modal](https://modal.com/) is a serverless [cloud computing](https://www.ultralytics.com/glossary/cloud-computing) platform for AI and [machine learning](https://www.ultralytics.com/glossary/machine-learning-ml) workloads. It handles provisioning, scaling, and execution automatically — you write Python code locally and Modal runs it in the cloud with GPU access. This makes it ideal for running [deep learning](https://www.ultralytics.com/glossary/deep-learning-dl) models like YOLO26 without managing infrastructure.

|

||||

|

||||

## What You Will Learn

|

||||

|

||||

- Setting up Modal and authenticating

|

||||

- Running YOLO26 inference on Modal

|

||||

- Using GPUs for faster inference

|

||||

- Training YOLO26 models on Modal

|

||||

|

||||

## Prerequisites

|

||||

|

||||

- A Modal account (sign up for free at [modal.com](https://modal.com/))

|

||||

- Python 3.9 or later installed on your local machine

|

||||

|

||||

## Installation

|

||||

|

||||

Install the Modal Python package and authenticate:

|

||||

|

||||

```bash

|

||||

pip install modal

|

||||

```

|

||||

|

||||

```bash

|

||||

modal token new

|

||||

```

|

||||

|

||||

!!! tip "Authentication"

|

||||

|

||||

The `modal token new` command will open a browser window to authenticate your Modal account. After authentication, you can run Modal commands from the terminal.

|

||||

|

||||

## Running YOLO26 Inference

|

||||

|

||||

Create a new Python file called `modal_yolo.py` with the following code:

|

||||

|

||||

```python

|

||||

"""

|

||||

Modal + Ultralytics YOLO26 Quickstart

|

||||

Run with: modal run modal_yolo.py

|

||||

"""

|

||||

|

||||

import modal

|

||||

|

||||

app = modal.App("ultralytics-yolo")

|

||||

|

||||

image = modal.Image.debian_slim(python_version="3.11").apt_install("libgl1", "libglib2.0-0").pip_install("ultralytics")

|

||||

|

||||

|

||||

@app.function(image=image)

|

||||

def predict(image_url: str):

|

||||

"""Run YOLO26 inference on an image URL."""

|

||||

from ultralytics import YOLO

|

||||

|

||||

model = YOLO("yolo26n.pt")

|

||||

results = model(image_url)

|

||||

|

||||

for r in results:

|

||||

print(f"Detected {len(r.boxes)} objects:")

|

||||

for box in r.boxes:

|

||||

print(f" - {model.names[int(box.cls)]}: {float(box.conf):.2f}")

|

||||

|

||||

|