mirror of

https://github.com/lobehub/lobehub

synced 2026-04-21 17:47:27 +00:00

♻️ refactor: refactor with chat docs site (#1309)

* 📝 docs: add package.json
* 🔧 chore: update config
* 🔧 chore: update config
* 📝 docs: update docs
* 📝 docs: add en-US docs
* 📝 docs: update docs
* 📝 docs: fix docs url
* 🔧 chore: add docs rewrites
* 🔧 chore: add docs rewrites
* 🔧 chore: fix docs rewrites
* 💄 style: update docs link
* 🚚 chore: move to contributing docs
* 🔧 chore: update contributing ci workflow
* 💄 style: update docs link

This commit is contained in:

parent

c831b978f9

commit

c131fa68f0

125 changed files with 4267 additions and 2281 deletions

6

.github/workflows/issue-close-require.yml

vendored


|

|

@ -21,7 +21,7 @@ jobs:

|

|||

token: ${{ secrets.GH_TOKEN }}

|

||||

inactive-label: 'Inactive'

|

||||

inactive-day: 30

|

||||

|

||||

|

||||

issue-close-require:

|

||||

permissions:

|

||||

issues: write # for actions-cool/issues-helper to update issues

|

||||

|

|

@ -36,7 +36,7 @@ jobs:

|

|||

labels: '✅ Fixed'

|

||||

inactive-day: 3

|

||||

body: |

|

||||

👋 @{{ github.event.issue.user.login }}

|

||||

👋 @{{ author }}

|

||||

<br/>

|

||||

Since the issue was labeled with `✅ Fixed`, but no response in 3 days. This issue will be closed. If you have any questions, you can comment and reply.\

|

||||

由于该 issue 被标记为已修复,同时 3 天未收到回应。现关闭 issue,若有任何问题,可评论回复。

|

||||

|

|

@ -48,7 +48,7 @@ jobs:

|

|||

labels: '🤔 Need Reproduce'

|

||||

inactive-day: 3

|

||||

body: |

|

||||

👋 @{{ github.event.issue.user.login }}

|

||||

👋 @{{ author }}

|

||||

<br/>

|

||||

Since the issue was labeled with `🤔 Need Reproduce`, but no response in 3 days. This issue will be closed. If you have any questions, you can comment and reply.\

|

||||

由于该 issue 被标记为需要更多信息,却 3 天未收到回应。现关闭 issue,若有任何问题,可评论回复。

|

||||

|

|

|

|||

6

.github/workflows/issues-translate.yml

vendored


|

|

@ -1,8 +1,8 @@

|

|||

name: Issue Translate

|

||||

on:

|

||||

issue_comment:

|

||||

on:

|

||||

issue_comment:

|

||||

types: [created]

|

||||

issues:

|

||||

issues:

|

||||

types: [opened]

|

||||

|

||||

jobs:

|

||||

|

|

|

|||

2

.github/workflows/wiki-sync.yml

vendored


|

|

@ -4,7 +4,7 @@ on:

|

|||

workflow_dispatch:

|

||||

push:

|

||||

paths:

|

||||

- 'docs/**'

|

||||

- 'contributing/**'

|

||||

branches:

|

||||

- main

|

||||

|

||||

|

|

|

|||

|

|

@ -22,13 +22,13 @@ module.exports = defineConfig({

|

|||

'vi-VN',

|

||||

],

|

||||

temperature: 0,

|

||||

modelName: 'gpt-3.5-turbo-1106',

|

||||

modelName: 'gpt-3.5-turbo-0125',

|

||||

splitToken: 1024,

|

||||

experimental: {

|

||||

jsonMode: true,

|

||||

},

|

||||

markdown: {

|

||||

entry: ['./README.zh-CN.md', './docs/**/*.zh-CN.md'],

|

||||

entry: ['./README.zh-CN.md', './docs/**/*.zh-CN.md', './docs/**/*.zh-CN.mdx'],

|

||||

entryLocale: 'zh-CN',

|

||||

entryExtension: '.zh-CN.md',

|

||||

outputLocales: ['en-US'],

|

||||

|

|

|

|||

|

|

@ -11,7 +11,7 @@

|

|||

LobeChat is an open-source, high-performance chatbot framework<br/>that supports speech synthesis, multimodal, and extensible ([Function Call][fc-link]) plugin system. <br/>

|

||||

Supports one-click free deployment of your private ChatGPT/LLM web application.

|

||||

|

||||

**English** · [简体中文](./README.zh-CN.md) · [Changelog](./CHANGELOG.md) · [Wiki][github-wiki-link] · [Report Bug][github-issues-link] · [Request Feature][github-issues-link]

|

||||

**English** · [简体中文](./README.zh-CN.md) · [Changelog](./CHANGELOG.md) · [Documents][github-document-link] · [Report Bug][github-issues-link] · [Request Feature][github-issues-link]

|

||||

|

||||

<!-- SHIELD GROUP -->

|

||||

|

||||

|

|

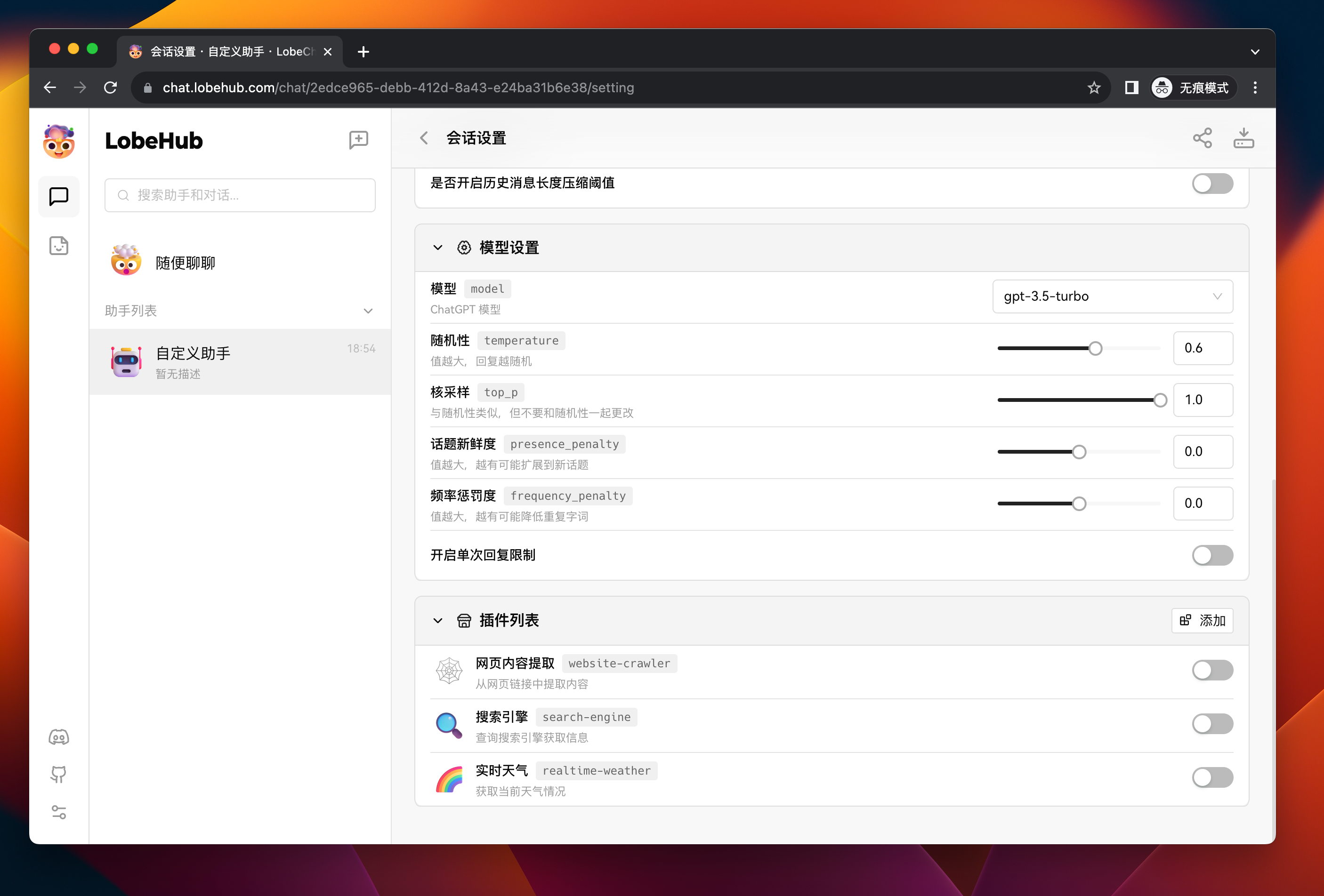

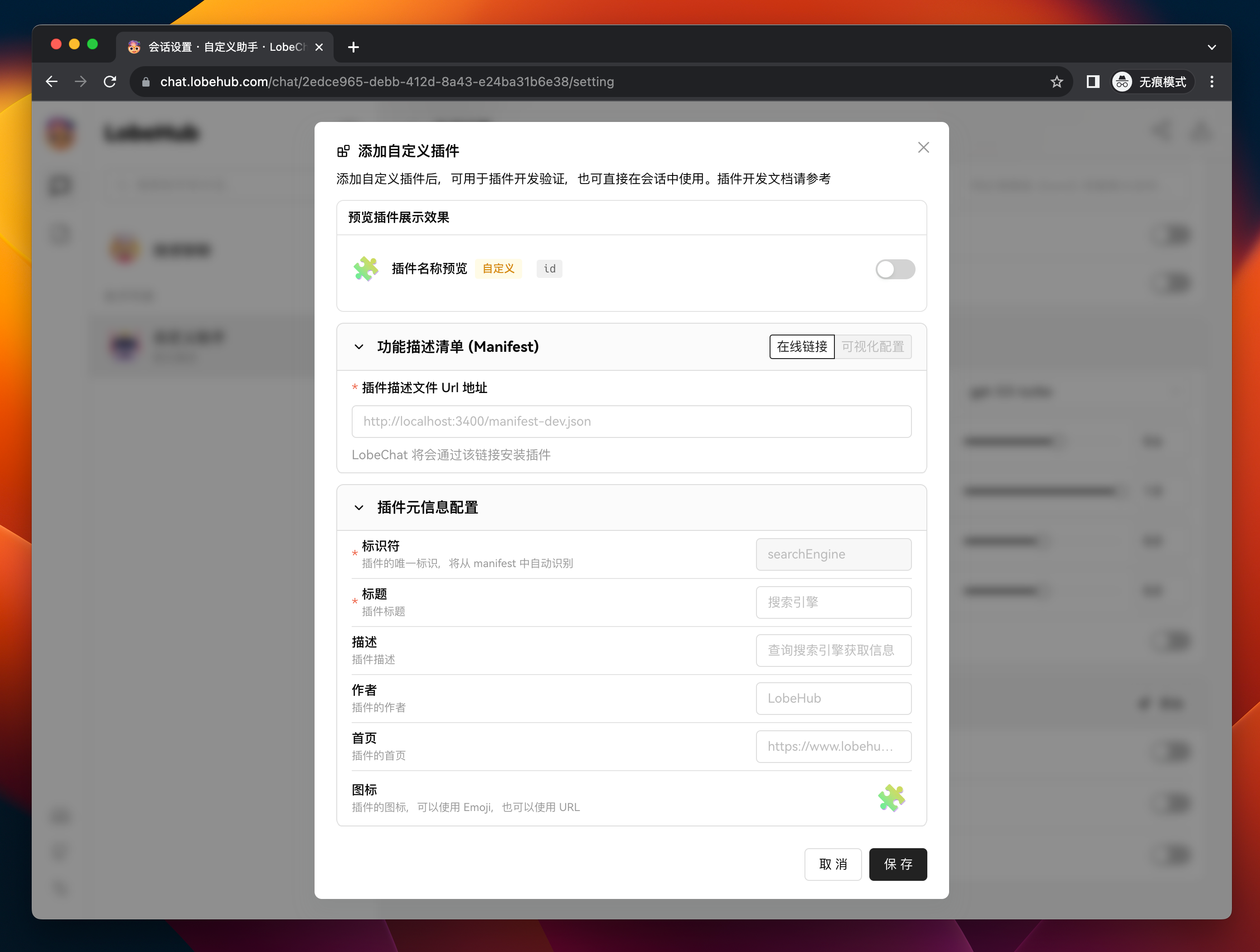

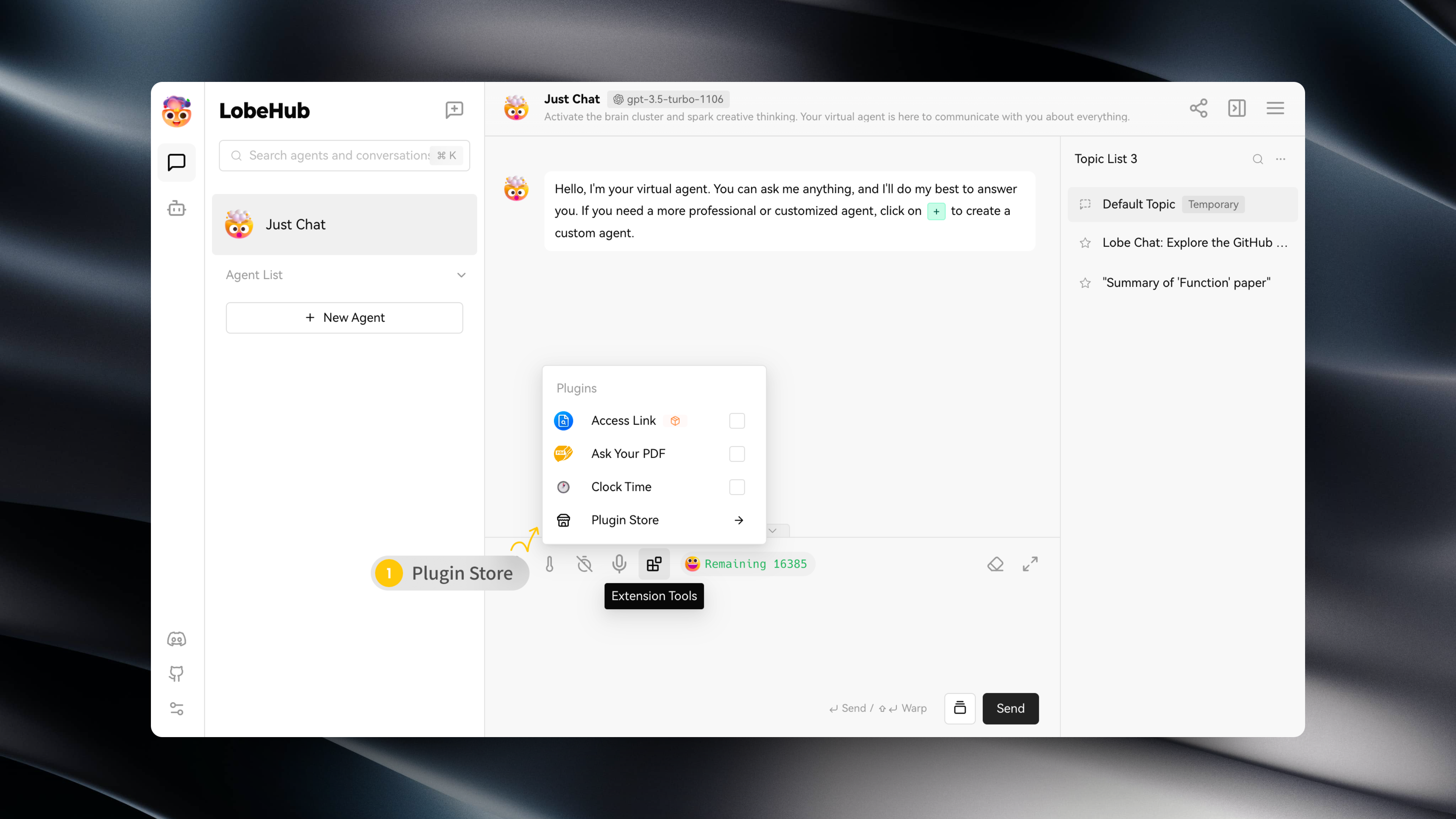

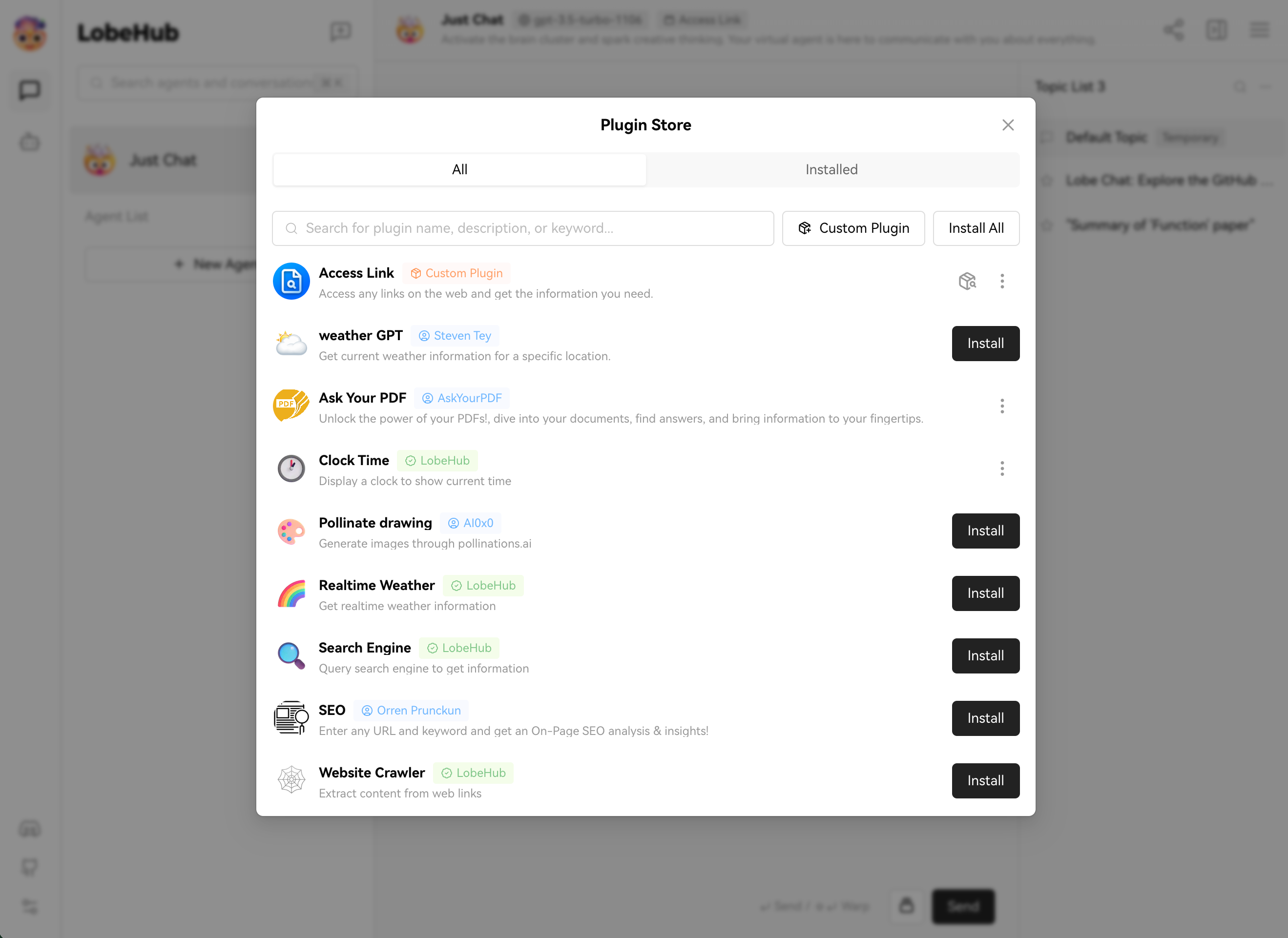

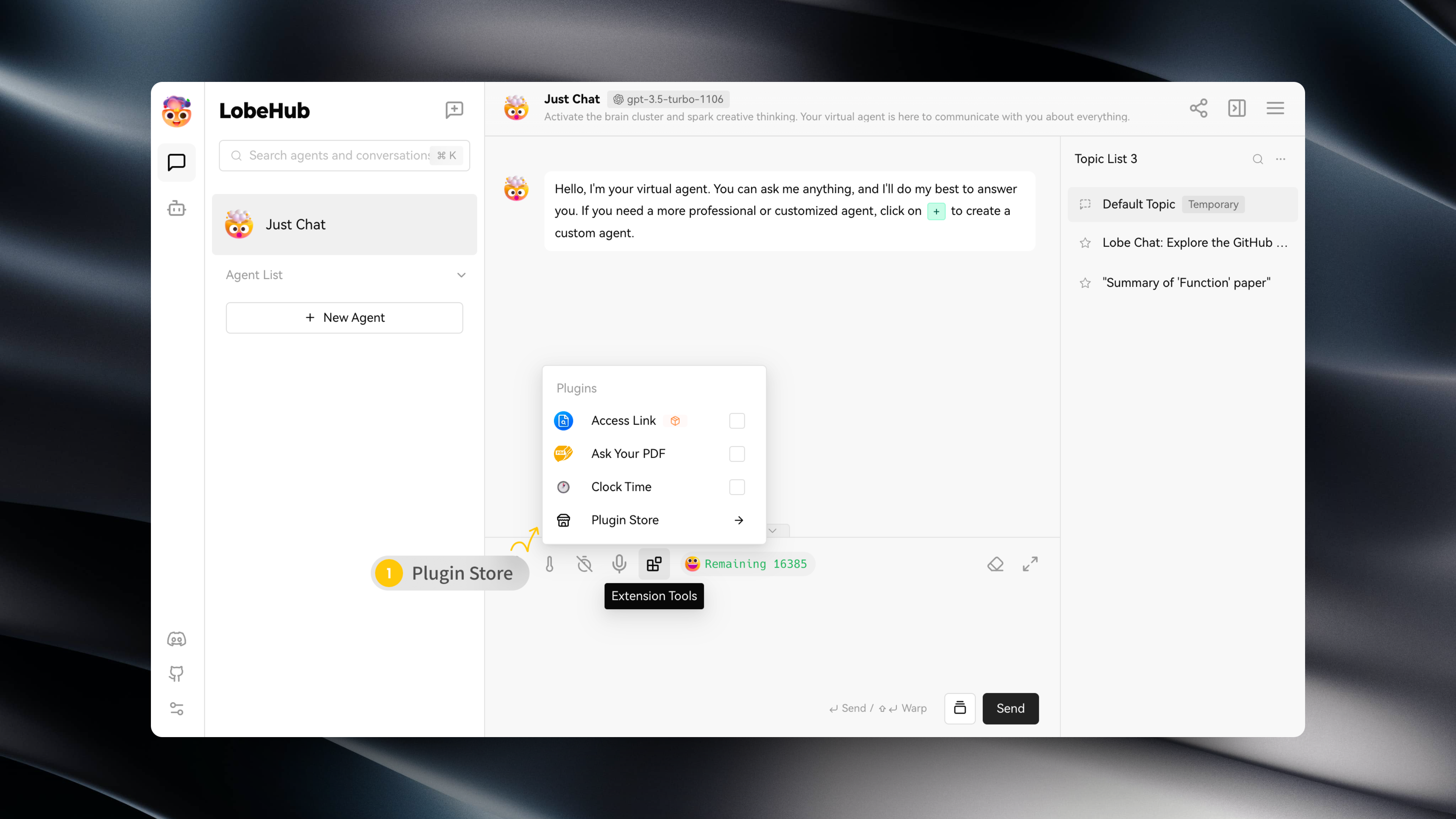

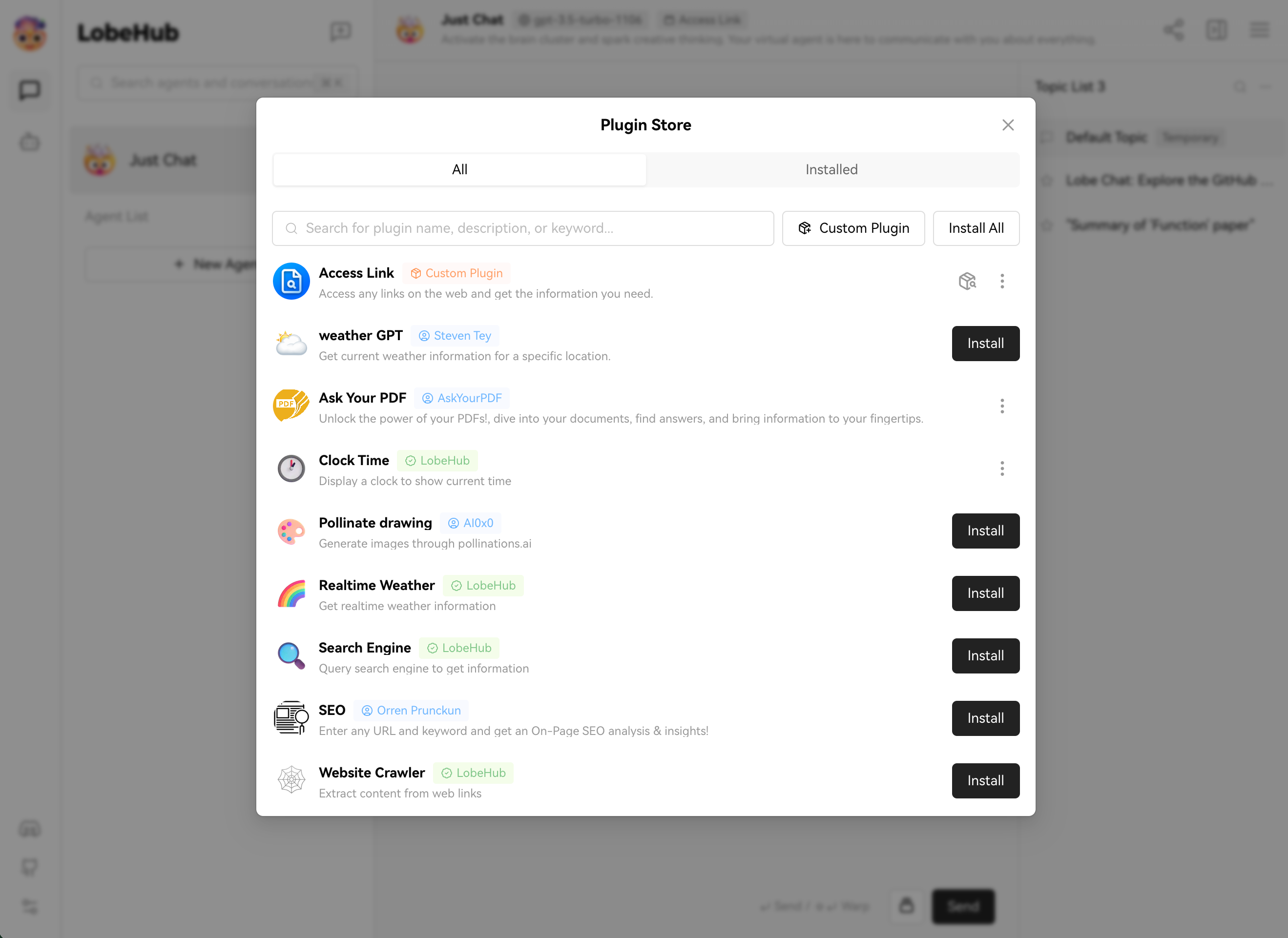

@ -169,7 +169,7 @@ such as automatically fetching the latest news headlines to provide users with i

|

|||

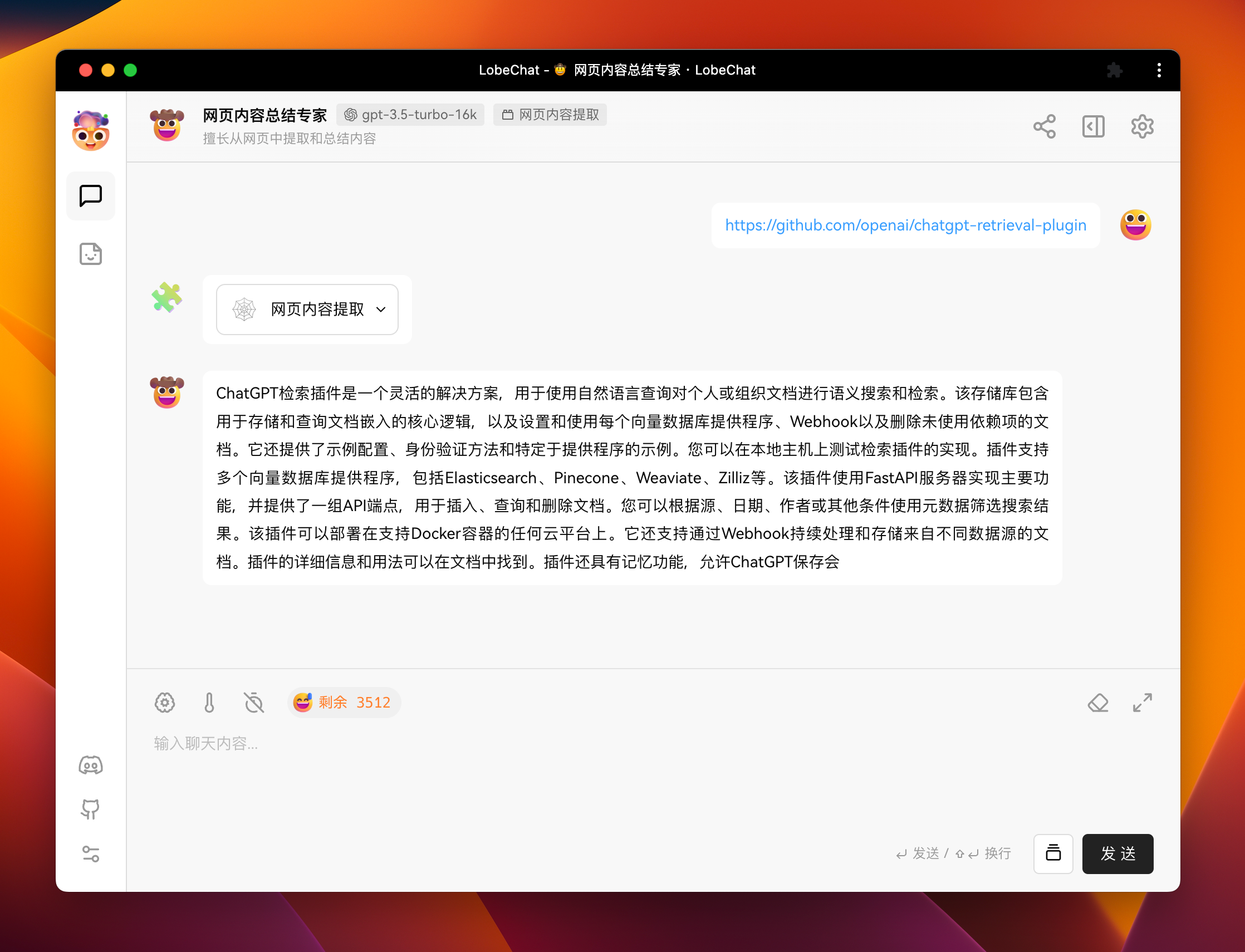

Moreover, these plugins are not limited to news aggregation but can also extend to other practical functions, such as quick document retrieval,

|

||||

e-commerce platform data access, and various third-party services.

|

||||

|

||||

> Learn More in [📘 Plugin Usage](https://github.com/lobehub/lobe-chat/wiki/Plugins)

|

||||

> Learn More in [📘 Plugin Usage](https://chat-docs.lobehub.com/en/usage/plugins/basic)

|

||||

|

||||

<video controls src="https://github.com/lobehub/lobe-chat/assets/28616219/f29475a3-f346-4196-a435-41a6373ab9e2" muted="false"></video>

|

||||

|

||||

|

|

@ -622,6 +622,7 @@ This project is [MIT](./LICENSE) licensed.

|

|||

[github-action-test-shield]: https://img.shields.io/github/actions/workflow/status/lobehub/lobe-chat/test.yml?label=test&labelColor=black&logo=githubactions&logoColor=white&style=flat-square

|

||||

[github-contributors-link]: https://github.com/lobehub/lobe-chat/graphs/contributors

|

||||

[github-contributors-shield]: https://img.shields.io/github/contributors/lobehub/lobe-chat?color=c4f042&labelColor=black&style=flat-square

|

||||

[github-document-link]: https://chat-docs.lobehub.com/en

|

||||

[github-forks-link]: https://github.com/lobehub/lobe-chat/network/members

|

||||

[github-forks-shield]: https://img.shields.io/github/forks/lobehub/lobe-chat?color=8ae8ff&labelColor=black&style=flat-square

|

||||

[github-issues-link]: https://github.com/lobehub/lobe-chat/issues

|

||||

|

|

@ -637,7 +638,6 @@ This project is [MIT](./LICENSE) licensed.

|

|||

[github-stars-shield]: https://img.shields.io/github/stars/lobehub/lobe-chat?color=ffcb47&labelColor=black&style=flat-square

|

||||

[github-trending-shield]: https://trendshift.io/api/badge/repositories/2256

|

||||

[github-trending-url]: https://trendshift.io/repositories/2256

|

||||

[github-wiki-link]: https://github.com/lobehub/lobe-chat/wiki

|

||||

[issues-link]: https://img.shields.io/github/issues/lobehub/lobe-chat.svg?style=flat

|

||||

[lobe-assets-github]: https://github.com/lobehub/lobe-assets

|

||||

[lobe-chat-plugins]: https://github.com/lobehub/lobe-chat-plugins

|

||||

|

|

|

|||

|

|

@ -10,7 +10,7 @@

|

|||

|

||||

LobeChat 是开源的高性能聊天机器人框架,支持语音合成、多模态、可扩展的([Function Call][fc-link])插件系统。<br/> 支持一键免费部署私人 ChatGPT/LLM 网页应用程序。

|

||||

|

||||

[English](./README.md) · **简体中文** · [更新日志](./CHANGELOG.md) · [文档][github-wiki-link] · [报告问题][github-issues-link] · [请求功能][github-issues-link]

|

||||

[English](./README.md) · **简体中文** · [更新日志](./CHANGELOG.md) · [文档][github-document-link] · [报告问题][github-issues-link] · [请求功能][github-issues-link]

|

||||

|

||||

<!-- SHIELD GROUP -->

|

||||

|

||||

|

|

@ -155,7 +155,7 @@ LobeChat 支持文字转语音(Text-to-Speech,TTS)和语音转文字(Spe

|

|||

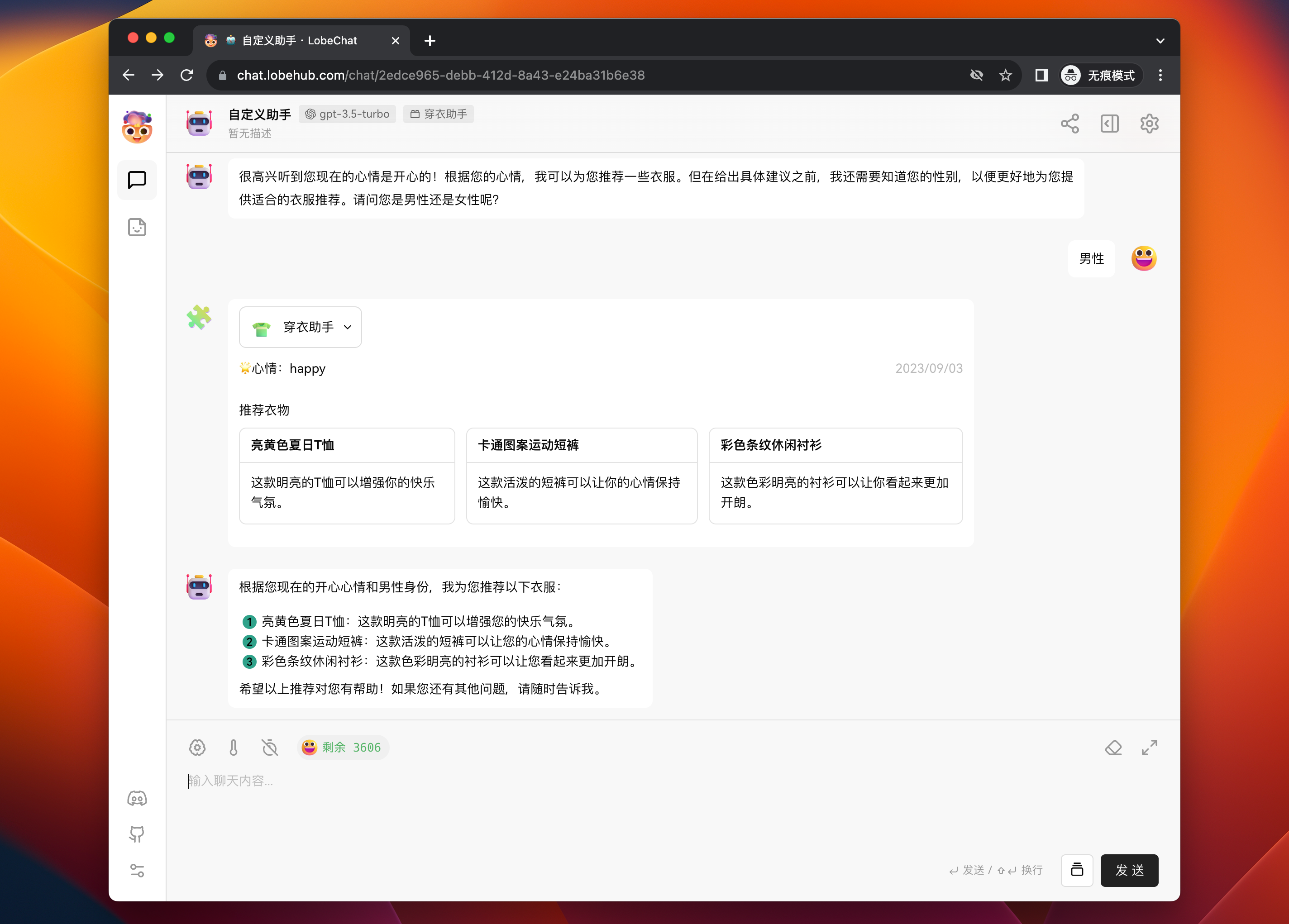

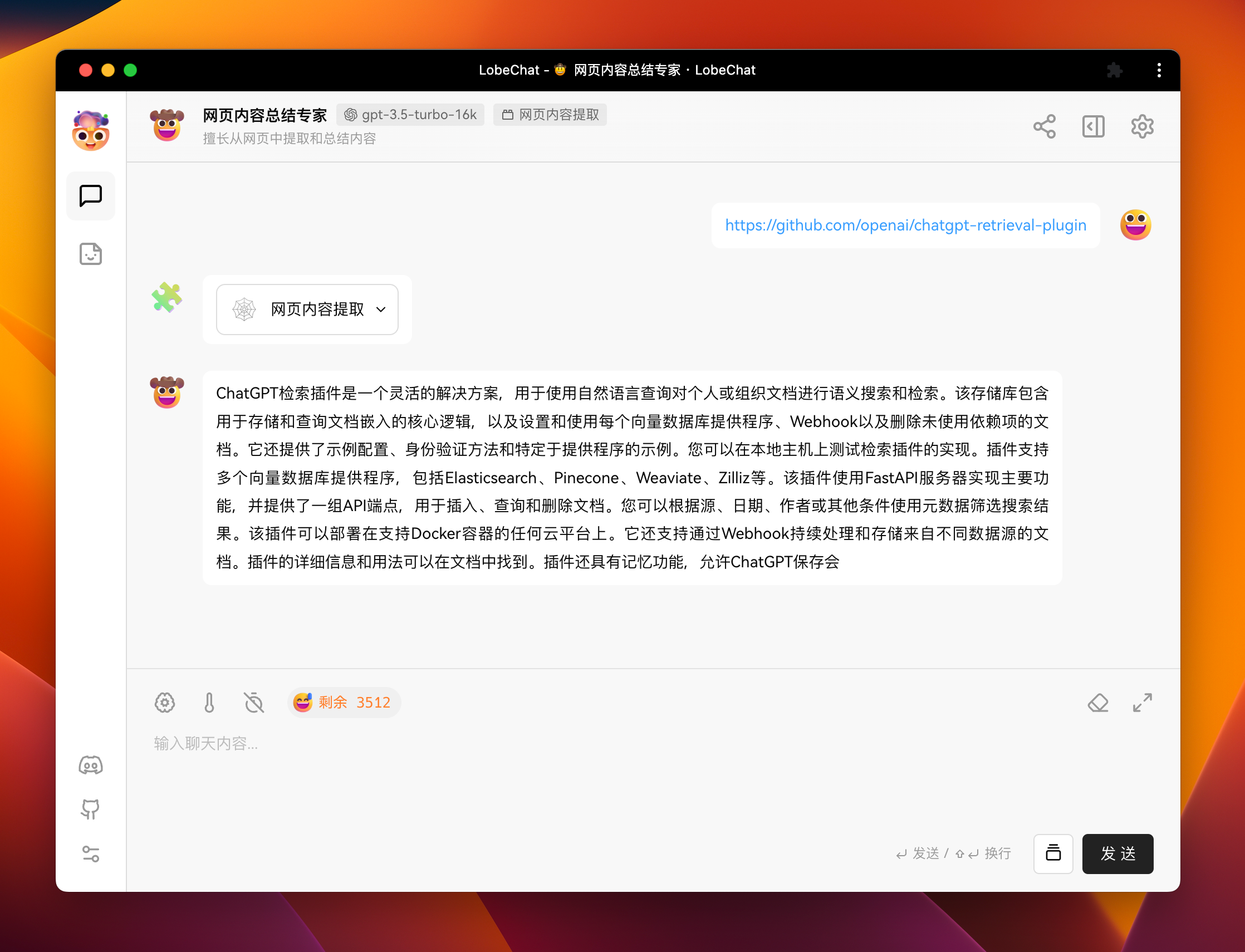

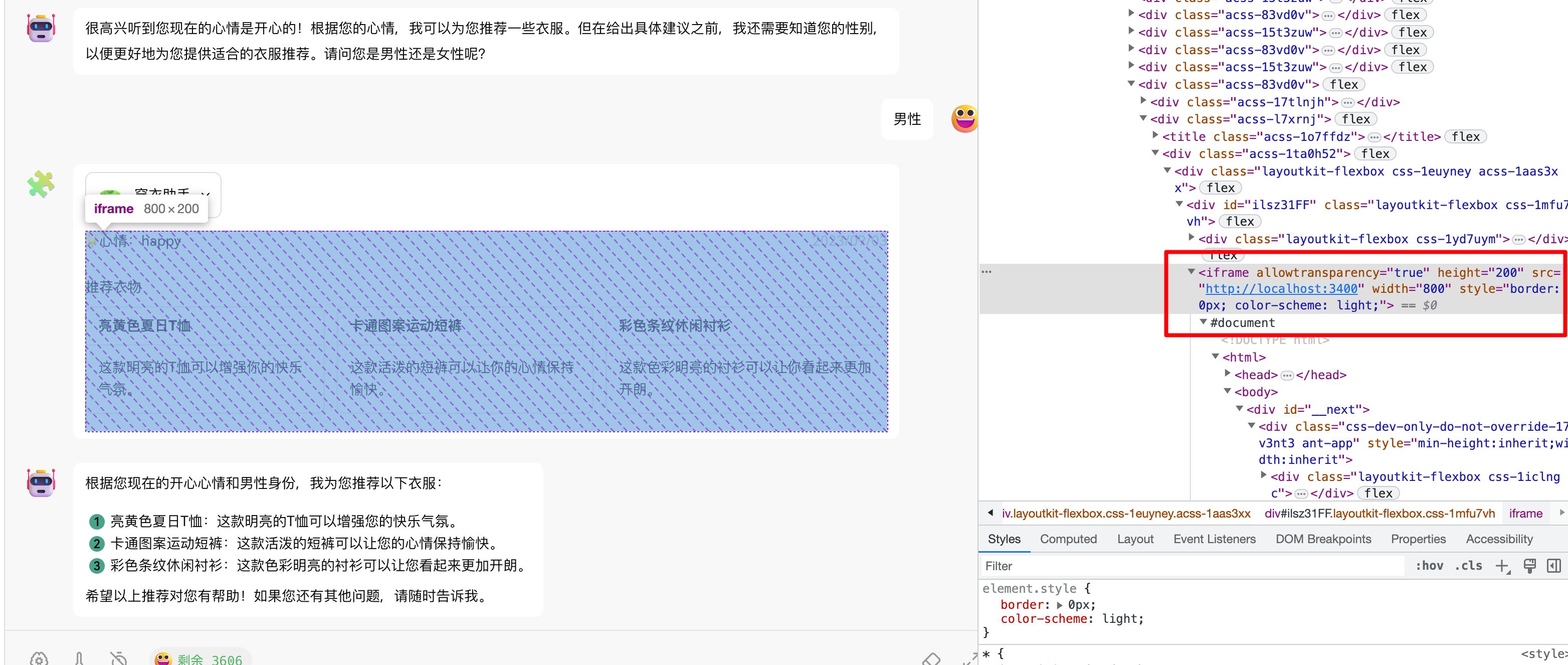

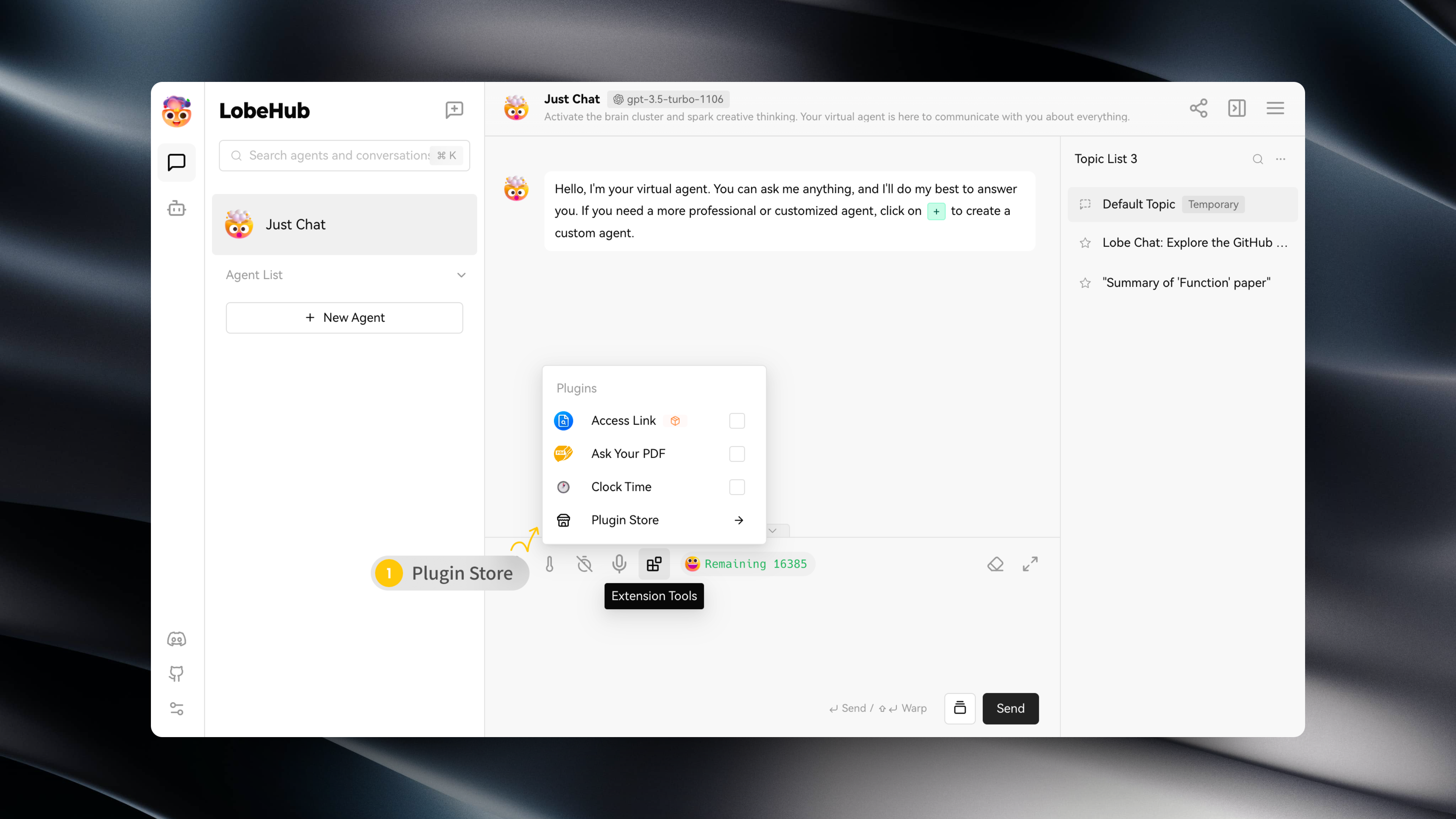

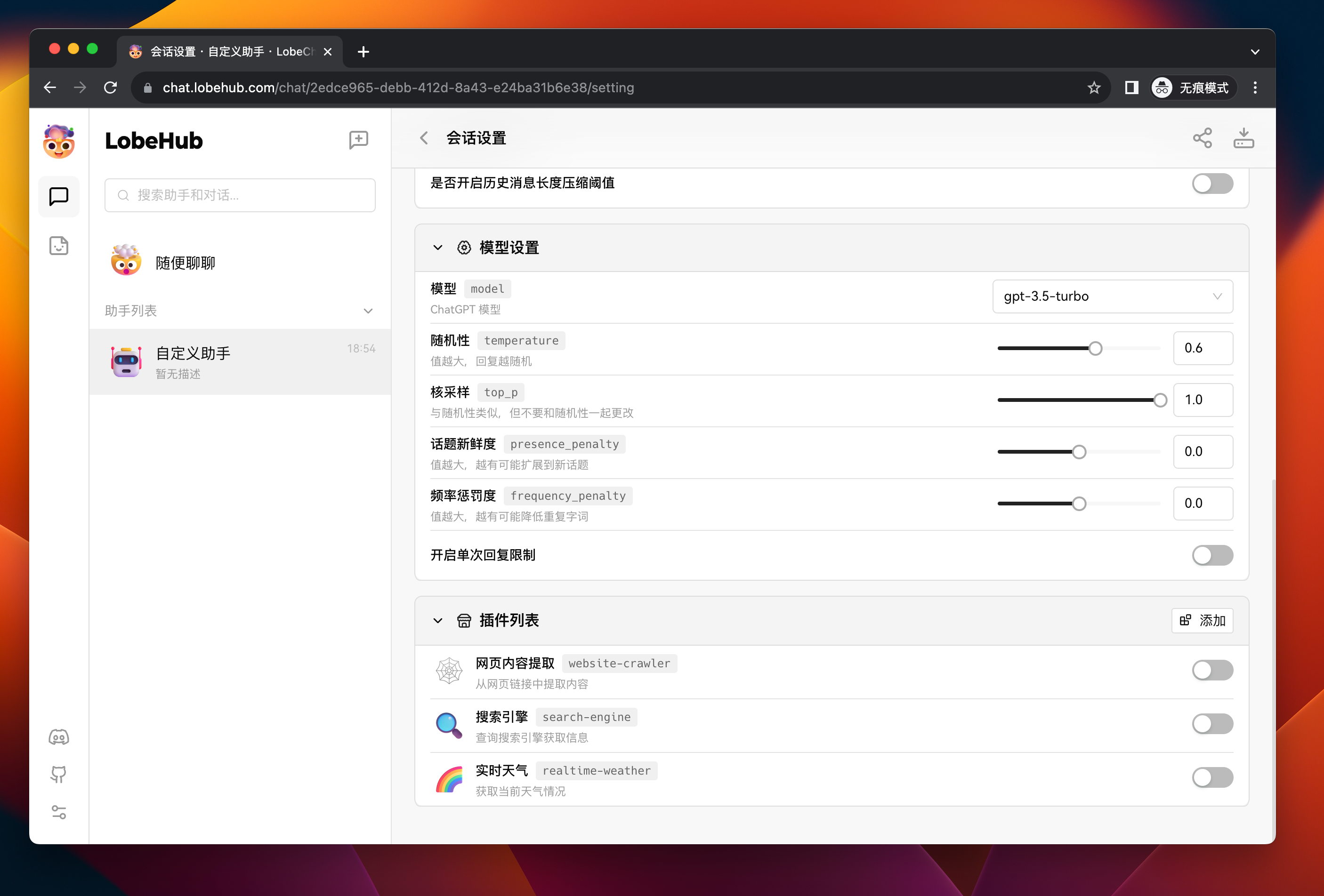

LobeChat 的插件生态系统是其核心功能的重要扩展,它极大地增强了 ChatGPT 的实用性和灵活性。通过利用插件,ChatGPT 能够实现实时信息的获取和处理,例如自动获取最新新闻头条,为用户提供即时且相关的资讯。

|

||||

此外,这些插件不仅局限于新闻聚合,还可以扩展到其他实用的功能,如快速检索文档、获取电商平台数据、以及其他各式各样的第三方服务。

|

||||

|

||||

> 通过 Wiki 了解更多 [📘 插件使用](https://github.com/lobehub/lobe-chat/wiki/Plugins.zh-CN)

|

||||

> 通过文档了解更多 [📘 插件使用](https://chat-docs.lobehub.com/zh/usage/plugins/basic)

|

||||

|

||||

<video controls src="https://github.com/lobehub/lobe-chat/assets/28616219/f29475a3-f346-4196-a435-41a6373ab9e2" muted="false"></video>

|

||||

|

||||

|

|

@ -638,6 +638,7 @@ This project is [MIT](./LICENSE) licensed.

|

|||

[github-action-test-shield]: https://img.shields.io/github/actions/workflow/status/lobehub/lobe-chat/test.yml?label=test&labelColor=black&logo=githubactions&logoColor=white&style=flat-square

|

||||

[github-contributors-link]: https://github.com/lobehub/lobe-chat/graphs/contributors

|

||||

[github-contributors-shield]: https://img.shields.io/github/contributors/lobehub/lobe-chat?color=c4f042&labelColor=black&style=flat-square

|

||||

[github-document-link]: https://chat-docs.lobehub.com/zh

|

||||

[github-forks-link]: https://github.com/lobehub/lobe-chat/network/members

|

||||

[github-forks-shield]: https://img.shields.io/github/forks/lobehub/lobe-chat?color=8ae8ff&labelColor=black&style=flat-square

|

||||

[github-issues-link]: https://github.com/lobehub/lobe-chat/issues

|

||||

|

|

@ -653,7 +654,6 @@ This project is [MIT](./LICENSE) licensed.

|

|||

[github-stars-shield]: https://img.shields.io/github/stars/lobehub/lobe-chat?color=ffcb47&labelColor=black&style=flat-square

|

||||

[github-trending-shield]: https://trendshift.io/api/badge/repositories/2256

|

||||

[github-trending-url]: https://trendshift.io/repositories/2256

|

||||

[github-wiki-link]: https://github.com/lobehub/lobe-chat/wiki

|

||||

[issues-link]: https://img.shields.io/github/issues/lobehub/lobe-chat.svg?style=flat

|

||||

[lobe-assets-github]: https://github.com/lobehub/lobe-assets

|

||||

[lobe-chat-plugins]: https://github.com/lobehub/lobe-chat-plugins

|

||||

|

|

|

|||

713

contributing/Basic/Feature-Development.md

Normal file


|

|

@ -0,0 +1,713 @@

|

|||

# Complete Guide to LobeChat Feature Development

|

||||

|

||||

This document aims to guide developers on how to develop a complete feature requirement in LobeChat.

|

||||

|

||||

We will use the implementation of sessionGroup as an example: [✨ feat: add session group manager](https://github.com/lobehub/lobe-chat/pull/1055), and explain the complete implementation process through the following six main sections:

|

||||

|

||||

1. Data Model / Database Definition

|

||||

2. Service Implementation / Model Implementation

|

||||

3. Frontend Data Flow Store Implementation

|

||||

4. UI Implementation and Action Binding

|

||||

5. Data Migration

|

||||

6. Data Import and Export

|

||||

|

||||

## 1. Database Section

|

||||

|

||||

To implement the Session Group feature, it is necessary to define the relevant data model and indexes at the database level.

|

||||

|

||||

Define a new sessionGroup table in 3 steps:

|

||||

|

||||

### 1. Establish Data Model Schema

|

||||

|

||||

Define the data model of `DB_SessionGroup` in `src/database/schemas/sessionGroup.ts`:

|

||||

|

||||

```typescript
import { z } from 'zod';

export const DB_SessionGroupSchema = z.object({
  name: z.string(),
  sort: z.number().optional(),
});

export type DB_SessionGroup = z.infer<typeof DB_SessionGroupSchema>;
```

|

||||

|

||||

### 2. Create Database Indexes

|

||||

|

||||

Because a new table is being added, the database schema needs index definitions for the `sessionGroups` table.

|

||||

|

||||

Add `dbSchemaV4` in `src/database/core/schemas.ts`:

|

||||

|

||||

```diff
// ... previous implementations

// ************************************** //
// ******* Version 3 - 2023-12-06 ******* //
// ************************************** //
// - Added `plugin` table

export const dbSchemaV3 = {
  ...dbSchemaV2,
  plugins:
    '&identifier, type, manifest.type, manifest.meta.title, manifest.meta.description, manifest.meta.author, createdAt, updatedAt',
};

+ // ************************************** //
+ // ******* Version 4 - 2024-01-21 ******* //
+ // ************************************** //
+ // - Added `sessionGroup` table

+ export const dbSchemaV4 = {
+   ...dbSchemaV3,
+   sessionGroups: '&id, name, sort, createdAt, updatedAt',
+   sessions: '&id, type, group, pinned, meta.title, meta.description, meta.tags, createdAt, updatedAt',
+ };
```

|

||||

|

||||

> \[!Note]

|

||||

>

|

||||

> Besides `sessionGroups`, the definition of `sessions` is also modified here to support the data migration. This section covers only the schema definition; for the migration itself, see section 5.

|

||||

|

||||

> \[!Important]

|

||||

>

|

||||

> If you are unsure why indexes are needed here or how the schema definition syntax works, review the basics of Dexie.js first. See the [📘 Local Database](./Local-Database.zh-CN) section for relevant information.
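The index strings above follow the Dexie.js schema syntax: the first comma-separated entry is the primary key, and a leading `&` marks an entry as unique. As a rough illustration only, the dependency-free sketch below splits such a string into its parts. `parseStoreSchema`, `ParsedIndex`, and `ParsedStoreSchema` are hypothetical names invented for this example; they are not part of Dexie or LobeChat, and the sketch ignores other Dexie prefixes such as `++` and `*`.

```typescript
// Hypothetical helper for illustration only; Dexie parses schema strings internally.
interface ParsedIndex {
  keyPath: string; // e.g. 'id' or 'meta.title'
  unique: boolean; // true when the entry is prefixed with '&'
}

interface ParsedStoreSchema {
  indexes: ParsedIndex[];
  primaryKey: ParsedIndex;
}

const parseStoreSchema = (schema: string): ParsedStoreSchema => {
  const entries: ParsedIndex[] = schema
    .split(',')
    .map((entry) => entry.trim())
    .filter((entry) => entry.length > 0)
    .map((entry) => ({
      keyPath: entry.replace(/^&/, ''),
      unique: entry.charAt(0) === '&',
    }));

  // The first entry is always the primary key
  const primaryKey = entries[0];
  if (!primaryKey) throw new Error('schema string is empty');

  return { indexes: entries.slice(1), primaryKey };
};

// The `sessionGroups` definition from dbSchemaV4
const sessionGroupsSchema = parseStoreSchema('&id, name, sort, createdAt, updatedAt');
```

Here `sessionGroupsSchema.primaryKey` describes `id`, and the remaining four entries are the secondary indexes that queries can filter or sort on.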

|

||||

|

||||

### 3. Add the sessionGroups Table to the Local DB

|

||||

|

||||

Extend the local database class to include the new `sessionGroups` table:

|

||||

|

||||

```diff
import { dbSchemaV1, dbSchemaV2, dbSchemaV3, dbSchemaV4 } from './schemas';

interface LobeDBSchemaMap {
  files: DB_File;
  messages: DB_Message;
  plugins: DB_Plugin;
+ sessionGroups: DB_SessionGroup;
  sessions: DB_Session;
  topics: DB_Topic;
}

// Define a local DB
export class LocalDB extends Dexie {
  public files: LobeDBTable<'files'>;
  public sessions: LobeDBTable<'sessions'>;
  public messages: LobeDBTable<'messages'>;
  public topics: LobeDBTable<'topics'>;
  public plugins: LobeDBTable<'plugins'>;
+ public sessionGroups: LobeDBTable<'sessionGroups'>;

  constructor() {
    super(LOBE_CHAT_LOCAL_DB_NAME);
    this.version(1).stores(dbSchemaV1);
    this.version(2).stores(dbSchemaV2);
    this.version(3).stores(dbSchemaV3);
+   this.version(4).stores(dbSchemaV4);

    this.files = this.table('files');
    this.sessions = this.table('sessions');
    this.messages = this.table('messages');
    this.topics = this.table('topics');
    this.plugins = this.table('plugins');
+   this.sessionGroups = this.table('sessionGroups');
  }
}
```

|

||||

|

||||

As a result, you can now see the `sessionGroups` table under `LOBE_CHAT_DB` in the browser DevTools at `Application` -> `Storage` -> `IndexedDB`.

|

||||

|

||||

|

||||

|

||||

## 2. Model and Service Section

|

||||

|

||||

### Define Model

|

||||

|

||||

When building the LobeChat application, the Model is responsible for interacting with the database. It defines how to read, insert, update, and delete data from the database, as well as defining specific business logic.

|

||||

|

||||

In `src/database/model/sessionGroup.ts`, the `SessionGroupModel` is defined as follows:

|

||||

|

||||

```typescript
import { BaseModel } from '@/database/core';
import { DB_SessionGroup, DB_SessionGroupSchema } from '@/database/schemas/sessionGroup';
import { nanoid } from '@/utils/uuid';

class _SessionGroupModel extends BaseModel {
  constructor() {
    // Bind the model to the `sessionGroups` table added in dbSchemaV4
    super('sessionGroups', DB_SessionGroupSchema);
  }

  async create(name: string, sort?: number, id = nanoid()) {
    return this._add({ name, sort }, id);
  }

  // ... Implementation of other CRUD methods
}

export const SessionGroupModel = new _SessionGroupModel();
```

|

||||

|

||||

### Service Implementation

|

||||

|

||||

In LobeChat, the Service layer is mainly responsible for communicating with the backend service, encapsulating business logic, and providing data to other layers in the frontend. `SessionService` is a service class specifically handling business logic related to sessions. It encapsulates operations such as creating sessions, querying sessions, and updating sessions.

|

||||

|

||||

To keep the code maintainable and extensible, we place the logic related to session grouping in the `SessionService`. This keeps the business logic of the session domain cohesive, so that when requirements grow or change, modifications and extensions stay within this domain.

|

||||

|

||||

`SessionService` implements the session group request logic by calling methods on `SessionGroupModel`, as in the following excerpt:

|

||||

|

||||

```typescript
class SessionService {
  // ... Omitted session business logic

  // ************************************** //
  // *********** SessionGroup *********** //
  // ************************************** //

  async createSessionGroup(name: string, sort?: number) {
    const item = await SessionGroupModel.create(name, sort);
    if (!item) {
      throw new Error('session group create Error');
    }

    return item.id;
  }

  // ... Other SessionGroup related implementations
}
```

|

||||

|

||||

## 3. Store Action Section

|

||||

|

||||

In the LobeChat application, the Store module is used to manage the frontend state of the application. The Actions within it are functions that trigger state updates, usually by calling methods in the service layer to perform actual data processing operations and then updating the state in the Store. We use `zustand` as the underlying dependency for the Store module. For a detailed practical introduction to state management, you can refer to [📘 Best Practices for State Management](../State-Management/State-Management-Intro.zh-CN.md).

|

||||

|

||||

### sessionGroup CRUD

|

||||

|

||||

CRUD operations for session groups are the core behaviors for managing session group data. In `src/store/session/slice/sessionGroup`, we will implement the state logic related to session groups, including adding, deleting, updating session groups, and their sorting.

|

||||

|

||||

The following are the methods of the `SessionGroupAction` interface that need to be implemented in the `action.ts` file:

|

||||

|

||||

```ts
export interface SessionGroupAction {
  // Add session group
  addSessionGroup: (name: string) => Promise<string>;
  // Remove session group
  removeSessionGroup: (id: string) => Promise<void>;
  // Update session group ID for a session
  updateSessionGroupId: (sessionId: string, groupId: string) => Promise<void>;
  // Update session group name
  updateSessionGroupName: (id: string, name: string) => Promise<void>;
  // Update session group sorting
  updateSessionGroupSort: (items: SessionGroupItem[]) => Promise<void>;
}
```

|

||||

|

||||

Taking the `addSessionGroup` method as an example, we first call the `createSessionGroup` method of `sessionService` to create a new session group, and then use the `refreshSessions` method to refresh the sessions state:

|

||||

|

||||

```ts
export const createSessionGroupSlice: StateCreator<
  SessionStore,
  [['zustand/devtools', never]],
  [],
  SessionGroupAction
> = (set, get) => ({
  // Implement the logic for adding a session group
  addSessionGroup: async (name) => {
    // Call the createSessionGroup method in the service layer and pass in the session group name
    const id = await sessionService.createSessionGroup(name);
    // Call the get method to get the current Store state and execute the refreshSessions method to refresh the session data
    await get().refreshSessions();
    // Return the ID of the newly created session group
    return id;
  },
  // ... Other action implementations
});
```

|

||||

|

||||

With the above implementation, we can ensure that after adding a new session group, the application's state will be updated in a timely manner, and the relevant components will receive the latest state and re-render. This approach improves the predictability and maintainability of the data flow, while also simplifying communication between components.

|

||||

|

||||

### Sessions Group Logic Refactoring

|

||||

|

||||

This requirement involves upgrading the Sessions feature to transform it from a single list to three different groups: `pinnedSessions` (pinned list), `customSessionGroups` (custom groups), and `defaultSessions` (default list).

|

||||

|

||||

To handle these groups, we need to refactor the implementation logic of `useFetchSessions`. Here are the key changes:

|

||||

|

||||

1. Use the `sessionService.getSessionsWithGroup` method to call the backend API and retrieve the grouped session data.

|

||||

2. Save the retrieved data into three different state fields: `pinnedSessions`, `customSessionGroups`, and `defaultSessions`.

|

||||

|

||||

#### `useFetchSessions` Method

|

||||

|

||||

This method is defined in `createSessionSlice` as follows:

|

||||

|

||||

```typescript
export const createSessionSlice: StateCreator<
  SessionStore,
  [['zustand/devtools', never]],
  [],
  SessionAction
> = (set, get) => ({
  // ... other methods
  useFetchSessions: () =>
    useSWR<ChatSessionList>(FETCH_SESSIONS_KEY, sessionService.getSessionsWithGroup, {
      onSuccess: (data) => {
        set(
          {
            customSessionGroups: data.customGroup,
            defaultSessions: data.default,
            isSessionsFirstFetchFinished: true,
            pinnedSessions: data.pinned,
            sessions: data.all,
          },
          false,
          n('useFetchSessions/onSuccess', data),
        );
      },
    }),
});
```

|

||||

|

||||

After successfully retrieving the data, we use the `set` method to update the `customSessionGroups`, `defaultSessions`, `pinnedSessions`, and `sessions` states. This ensures that the states are synchronized with the latest session data.

|

||||

|

||||

#### `sessionService.getSessionsWithGroup` Method

|

||||

|

||||

The `sessionService.getSessionsWithGroup` method is responsible for calling the backend API `SessionModel.queryWithGroups()`.

|

||||

|

||||

```typescript
class SessionService {
  // ... other SessionGroup related implementations

  async getSessionsWithGroup(): Promise<ChatSessionList> {
    return SessionModel.queryWithGroups();
  }
}
```

|

||||

|

||||

#### `SessionModel.queryWithGroups` Method

|

||||

|

||||

This method is the core method called by `sessionService.getSessionsWithGroup`, and it is responsible for querying and organizing session data. The code is as follows:

|

||||

|

||||

```typescript
class _SessionModel extends BaseModel {
  // ... other methods

  /**
   * Query session data and categorize sessions based on groups.
   * @returns {Promise<ChatSessionList>} An object containing all sessions and categorized session lists.
   */
  async queryWithGroups(): Promise<ChatSessionList> {
    // Query session group data
    const groups = await SessionGroupModel.query();
    // Query custom session groups based on session group IDs
    const customGroups = await this.queryByGroupIds(groups.map((item) => item.id));
    // Query default session list
    const defaultItems = await this.querySessionsByGroupId(SessionDefaultGroup.Default);
    // Query pinned sessions
    const pinnedItems = await this.getPinnedSessions();

    // Query all sessions
    const all = await this.query();
    // Combine and return all sessions and their group information
    return {
      all, // Array containing all sessions
      customGroup: groups.map((group) => ({ ...group, children: customGroups[group.id] })), // Custom groups
      default: defaultItems, // Default session list
      pinned: pinnedItems, // Pinned session list
    };
  }
}
```

|

||||

|

||||

The `queryWithGroups` method first queries all session groups, then based on the IDs of these groups, it queries custom session groups, as well as default and pinned sessions. Finally, it returns an object containing all sessions and categorized session lists.
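To make the shape of the returned object concrete, here is a minimal in-memory sketch of the same categorization, written as a pure function over plain arrays instead of database queries. The types `SessionItem`, `GroupItem`, and `GroupedSessions` and the function `groupSessions` are hypothetical simplifications, not LobeChat's actual types. The sketch also assumes that a pinned session is listed only under `pinned`, which the original text does not state.

```typescript
// Hypothetical, simplified shapes; the real LobeChat types carry more fields.
interface SessionItem {
  group: string; // 'default' or the id of a custom group
  id: string;
  pinned: boolean;
}

interface GroupItem {
  id: string;
  name: string;
}

interface GroupedSessions {
  all: SessionItem[];
  customGroup: Array<GroupItem & { children: SessionItem[] }>;
  default: SessionItem[];
  pinned: SessionItem[];
}

const DEFAULT_GROUP = 'default';

const groupSessions = (sessions: SessionItem[], groups: GroupItem[]): GroupedSessions => {
  // Assumption: pinned sessions are excluded from the default and custom lists
  const unpinned = sessions.filter((s) => !s.pinned);

  return {
    all: sessions,
    customGroup: groups.map((group) => ({
      ...group,
      children: unpinned.filter((s) => s.group === group.id),
    })),
    default: unpinned.filter((s) => s.group === DEFAULT_GROUP),
    pinned: sessions.filter((s) => s.pinned),
  };
};

const grouped = groupSessions(
  [
    { group: 'default', id: 's1', pinned: false },
    { group: 'work', id: 's2', pinned: false },
    { group: 'work', id: 's3', pinned: true },
  ],
  [{ id: 'work', name: 'Work' }],
);
```

With this sample data, `s1` lands in `default`, `s2` becomes a child of the `work` group, and `s3` appears under `pinned` while keeping its `group` value.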

|

||||

|

||||

### Adjusting sessions selectors

|

||||

|

||||

Due to changes in the logic of grouping within sessions, we need to adjust the logic of the `sessions` selectors to ensure they can correctly handle the new data structure.

|

||||

|

||||

Original selectors:

|

||||

|

||||

```ts
// Default group
const defaultSessions = (s: SessionStore): LobeSessions => s.sessions;

// Pinned group
const pinnedSessionList = (s: SessionStore) =>
  defaultSessions(s).filter((s) => s.group === SessionGroupDefaultKeys.Pinned);

// Unpinned group
const unpinnedSessionList = (s: SessionStore) =>
  defaultSessions(s).filter((s) => s.group === SessionGroupDefaultKeys.Default);
```

|

||||

|

||||

Revised:

|

||||

|

||||

```ts
const defaultSessions = (s: SessionStore): LobeSessions => s.defaultSessions;
const pinnedSessions = (s: SessionStore): LobeSessions => s.pinnedSessions;
const customSessionGroups = (s: SessionStore): CustomSessionGroup[] => s.customSessionGroups;
```

|

||||

|

||||

Since all data retrieval in the UI is implemented using syntax like `useSessionStore(sessionSelectors.defaultSessions)`, we only need to modify the selector implementation of `defaultSessions` to complete the data structure change. The data retrieval code in the UI layer does not need to be changed at all, which can greatly reduce the cost and risk of refactoring.
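The benefit of this indirection can be shown with a small dependency-free sketch. All names here (`SessionEntry`, `SessionStoreState`, `countDefaultSessions`, and the two selector variants) are hypothetical and only mimic the pattern; the real store is built with `zustand` and has many more fields.

```typescript
// Hypothetical, trimmed-down store shape for illustration.
interface SessionEntry {
  id: string;
}

interface SessionStoreState {
  defaultSessions: SessionEntry[]; // new dedicated field
  sessions: SessionEntry[]; // full list
}

// Before the refactor, the selector read from the full list...
const defaultSessionsBefore = (s: SessionStoreState): SessionEntry[] => s.sessions;
// ...afterwards it reads the dedicated field. The signature is unchanged.
const defaultSessionsAfter = (s: SessionStoreState): SessionEntry[] => s.defaultSessions;

// Stand-in for a UI consumer: it only depends on the selector's signature.
const countDefaultSessions = (
  state: SessionStoreState,
  selector: (s: SessionStoreState) => SessionEntry[],
): number => selector(state).length;

const sampleState: SessionStoreState = {
  defaultSessions: [{ id: 's1' }],
  sessions: [{ id: 's1' }, { id: 's2' }],
};

const countBefore = countDefaultSessions(sampleState, defaultSessionsBefore);
const countAfter = countDefaultSessions(sampleState, defaultSessionsAfter);
```

The consumer function is identical in both calls; only the selector passed to it changes, which is why the UI code needs no edits.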

|

||||

|

||||

> \[!Important]

|

||||

>

|

||||

> If you are not familiar with the concept and functionality of selectors, you can refer to the section [📘 Data Storage and Retrieval Module](./State-Management-Selectors.en-US) for relevant information.

|

||||

|

||||

## 4. UI Section

|

||||

|

||||

Bind the store actions in UI components to implement the interaction logic. For example, in `CreateGroupModal`:

|

||||

|

||||

```tsx
const CreateGroupModal = () => {
  // ... Other logic

  const [updateSessionGroup, addCustomGroup] = useSessionStore((s) => [
    s.updateSessionGroupId,
    s.addSessionGroup,
  ]);

  return (
    <Modal
      onOk={async () => {
        // ... Other logic
        const groupId = await addCustomGroup(name);
        await updateSessionGroup(sessionId, groupId);
      }}
    >
      {/* ... */}
    </Modal>
  );
};
```

|

||||

|

||||

## 5. Data Migration

|

||||

|

||||

Data migration is often unavoidable in software development, especially when the existing data structure can no longer meet new business requirements. For this iteration of SessionGroup, we need to migrate the `group` field of `session`, which is a typical data migration case.

|

||||

|

||||

### Issues with the Old Data Structure

|

||||

|

||||

In the old data structure, the `group` field was used to mark whether the session was "pinned" or belonged to a "default" group. However, when support for multiple session groups is needed, the original data structure becomes inflexible.

|

||||

|

||||

For example:

|

||||

|

||||

```
before pin: group = abc
after pin: group = pinned
after unpin: group = default
```

|

||||

|

||||

From the above example, it can be seen that once a session is unpinned from the "pinned" state, the `group` field cannot be restored to its original `abc` value. This is because we do not have a separate field to maintain the pinned state. Therefore, we have introduced a new field `pinned` to indicate whether the session is pinned, while the `group` field will be used solely to identify the session group.
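The difference between the two designs can be sketched in a few lines. The shapes `OldSession` and `NewSession` and the four helper functions are hypothetical and exist only for this illustration; they are not LobeChat code.

```typescript
// Hypothetical shapes: the old design overloads `group`, the new one adds `pinned`.
interface OldSession {
  group: string;
}

interface NewSession {
  group: string;
  pinned: boolean;
}

// Old design: pinning overwrites the group, so the original value is lost
const pinOld = (s: OldSession): OldSession => ({ ...s, group: 'pinned' });
const unpinOld = (s: OldSession): OldSession => ({ ...s, group: 'default' });

// New design: pinning only toggles the flag and leaves the group untouched
const pinNew = (s: NewSession): NewSession => ({ ...s, pinned: true });
const unpinNew = (s: NewSession): NewSession => ({ ...s, pinned: false });

const oldRoundTrip = unpinOld(pinOld({ group: 'abc' }));
const newRoundTrip = unpinNew(pinNew({ group: 'abc', pinned: false }));
```

After a pin and unpin round trip, `oldRoundTrip.group` is `default`, while `newRoundTrip.group` is still `abc`.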

|

||||

|

||||

### Migration Strategy

|

||||

|

||||

The core logic of this migration is as follows:

|

||||

|

||||

- When a session's `group` field is `pinned`, set its `pinned` field to `true` and set its `group` to `default`.

|

||||

|

||||

However, data migration in LobeChat typically involves two parts: **configuration file migration** and **database migration**. Therefore, the above logic will need to be implemented separately in these two areas.

|

||||

|

||||

#### Configuration File Migration

|

||||

|

||||

For configuration file migration, we recommend performing it before database migration, as configuration file migration is usually easier to test and validate. LobeChat's file migration configuration is located in the `src/migrations/index.ts` file, which defines the various versions of configuration file migration and their corresponding migration scripts.

|

||||

|

||||

```diff
// Current latest version number
- export const CURRENT_CONFIG_VERSION = 2;
+ export const CURRENT_CONFIG_VERSION = 3;

// Historical version upgrade module
const ConfigMigrations = [
+ /**
+  * 2024.01.22
+  * from `group = pinned` to `pinned:true`
+  */
+ MigrationV2ToV3,
  /**
   * 2023.11.27
   * Migrate from single key database to dexie-based relational structure
   */
  MigrationV1ToV2,
  /**
   * 2023.07.11
   * just the first version, Nothing to do
   */
  MigrationV0ToV1,
];
```

|

||||

|

||||

The logic for this configuration file migration is defined in `src/migrations/FromV2ToV3/index.ts`, simplified as follows:

|

||||

|

||||

```ts
export class MigrationV2ToV3 implements Migration {
  // Specify the version from which to upgrade
  version = 2;

  migrate(data: MigrationData<V2ConfigState>): MigrationData<V3ConfigState> {
    const { sessions } = data.state;

    return {
      ...data,
      state: {
        ...data.state,
        sessions: sessions.map((s) => this.migrateSession(s)),
      },
    };
  }

  migrateSession = (session: V2Session): V3Session => {
    return {
      ...session,
      group: 'default',
      pinned: session.group === 'pinned',
    };
  };
}
```
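As a worked example, the `migrateSession` rule above can be applied to a couple of sample records. This is a standalone sketch: `V2SessionSketch`, `V3SessionSketch`, and `migrateSessionSketch` are hypothetical, trimmed-down stand-ins for the real types and method, and the sample data is made up.

```typescript
// Hypothetical, trimmed-down shapes; the real V2Session / V3Session types have more fields.
interface V2SessionSketch {
  group: string; // 'default' or 'pinned' in the old structure
  id: string;
}

interface V3SessionSketch {
  group: string;
  id: string;
  pinned: boolean;
}

// Same rule as in the migration: derive `pinned` from the old group, then reset the group
const migrateSessionSketch = (session: V2SessionSketch): V3SessionSketch => ({
  ...session,
  group: 'default',
  pinned: session.group === 'pinned',
});

const v2Sessions: V2SessionSketch[] = [
  { group: 'pinned', id: 'a' },
  { group: 'default', id: 'b' },
];

const v3Sessions = v2Sessions.map(migrateSessionSketch);
```

Session `a` ends up with `pinned: true` and session `b` with `pinned: false`; both have `group: 'default'`.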

|

||||

|

||||

It can be seen that the migration implementation is very simple. However, it is important to ensure the correctness of the migration, so corresponding test cases need to be written in `src/migrations/FromV2ToV3/migrations.test.ts`:

|

||||

|

||||

```ts

|

||||

import { MigrationData, VersionController } from '@/migrations/VersionController';

|

||||

|

||||

import { MigrationV1ToV2 } from '../FromV1ToV2';

|

||||

import inputV1Data from '../FromV1ToV2/fixtures/input-v1-session.json';

|

||||

import inputV2Data from './fixtures/input-v2-session.json';

|

||||

import outputV3DataFromV1 from './fixtures/output-v3-from-v1.json';

|

||||

import outputV3Data from './fixtures/output-v3.json';

|

||||

import { MigrationV2ToV3 } from './index';

|

||||

|

||||

describe('MigrationV2ToV3', () => {

|

||||

let migrations;

|

||||

let versionController: VersionController<any>;

|

||||

|

||||

beforeEach(() => {

|

||||

migrations = [MigrationV2ToV3];

|

||||

versionController = new VersionController(migrations, 3);

|

||||

});

|

||||

|

||||

it('should migrate data correctly through multiple versions', () => {

|

||||

const data: MigrationData = inputV2Data;

|

||||

|

||||

const migratedData = versionController.migrate(data);

|

||||

|

||||

expect(migratedData.version).toEqual(outputV3Data.version);

|

||||

expect(migratedData.state.sessions).toEqual(outputV3Data.state.sessions);

|

||||

expect(migratedData.state.topics).toEqual(outputV3Data.state.topics);

|

||||

expect(migratedData.state.messages).toEqual(outputV3Data.state.messages);

|

||||

});

|

||||

|

||||

it('should work correctly from v1 to v3', () => {

|

||||

const data: MigrationData = inputV1Data;

|

||||

|

||||

versionController = new VersionController([MigrationV2ToV3, MigrationV1ToV2], 3);

|

||||

|

||||

const migratedData = versionController.migrate(data);

|

||||

|

||||

expect(migratedData.version).toEqual(outputV3DataFromV1.version);

|

||||

expect(migratedData.state.sessions).toEqual(outputV3DataFromV1.state.sessions);

|

||||

expect(migratedData.state.topics).toEqual(outputV3DataFromV1.state.topics);

|

||||

expect(migratedData.state.messages).toEqual(outputV3DataFromV1.state.messages);

|

||||

});

|

||||

});

|

||||

```

|

||||

|

||||


|

||||

|

||||

Unit tests use `fixtures` to pin the test data. The test cases verify two things: 1) the correctness of a single migration (v2 -> v3) and 2) the correctness of a full migration chain (v1 -> v3).
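The chaining behaviour exercised by the second test can be illustrated with a minimal sketch. It assumes a controller that repeatedly looks up the migration registered for the data's current version until the target version is reached. `SketchVersionController`, `passThroughV1ToV2`, `sketchV2ToV3`, and the sample data are hypothetical stand-ins, not LobeChat's actual `VersionController` (which, per the test above, receives migration classes rather than instances).

```ts
// Hypothetical, simplified stand-ins for illustration only.
interface MigrationData {
  state: any;
  version: number;
}

interface Migration {
  // The version this migration upgrades FROM
  version: number;
  migrate(data: MigrationData): MigrationData;
}

class SketchVersionController {
  migrations: Migration[];
  targetVersion: number;

  constructor(migrations: Migration[], targetVersion: number) {
    this.migrations = migrations;
    this.targetVersion = targetVersion;
  }

  migrate(data: MigrationData): MigrationData {
    let current = data;
    // Apply one step at a time until the target version is reached
    while (current.version < this.targetVersion) {
      const fromVersion = current.version;
      const step = this.migrations.find((m) => m.version === fromVersion);
      if (!step) throw new Error(`No migration registered for version ${fromVersion}`);
      current = { ...step.migrate(current), version: fromVersion + 1 };
    }
    return current;
  }
}

// Assumed no-op v1 -> v2 step; the real one is not shown in this section.
const passThroughV1ToV2: Migration = { version: 1, migrate: (data) => data };

// Mirrors the v2 -> v3 session change described above.
const sketchV2ToV3: Migration = {
  version: 2,
  migrate: (data) => ({
    ...data,
    state: {
      ...data.state,
      sessions: data.state.sessions.map((s: any) => ({
        ...s,
        group: 'default',
        pinned: s.group === 'pinned',
      })),
    },
  }),
};

// Registration order does not matter because steps are looked up by version.
const migrated = new SketchVersionController([sketchV2ToV3, passThroughV1ToV2], 3).migrate({
  state: { sessions: [{ id: 'a', group: 'pinned' }] },
  version: 1,
});
console.log(migrated.version, migrated.state.sessions[0]);
```

Looking up each step by its source version is one possible design; it keeps every migration independent, so a single-step test and a full-chain test can share the same fixtures.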

|

||||

|

||||

> \[!Important]

|

||||

>

|

||||

> The version number in the configuration file may not match the database version number, as database version updates do not always involve changes to the data structure (such as adding tables or fields), while configuration file version updates usually involve data migration.

|

||||

|

||||

````

|

||||

|

||||

#### Database Migration

|

||||

|

||||

Database migration needs to be implemented in the `LocalDB` class, which is defined in the `src/database/core/db.ts` file. The migration process involves adding a new `pinned` field for each record in the `sessions` table and resetting the `group` field:

|

||||

|

||||

```diff

|

||||

export class LocalDB extends Dexie {

|

||||

public files: LobeDBTable<'files'>;

|

||||

public sessions: LobeDBTable<'sessions'>;

|

||||

public messages: LobeDBTable<'messages'>;

|

||||

public topics: LobeDBTable<'topics'>;

|

||||

public plugins: LobeDBTable<'plugins'>;

|

||||

public sessionGroups: LobeDBTable<'sessionGroups'>;

|

||||

|

||||

constructor() {

|

||||

super(LOBE_CHAT_LOCAL_DB_NAME);

|

||||

this.version(1).stores(dbSchemaV1);

|

||||

this.version(2).stores(dbSchemaV2);

|

||||

this.version(3).stores(dbSchemaV3);

|

||||

this.version(4)

|

||||

.stores(dbSchemaV4)

|

||||

+ .upgrade((trans) => this.upgradeToV4(trans));

|

||||

|

||||

this.files = this.table('files');

|

||||

this.sessions = this.table('sessions');

|

||||

this.messages = this.table('messages');

|

||||

this.topics = this.table('topics');

|

||||

this.plugins = this.table('plugins');

|

||||

this.sessionGroups = this.table('sessionGroups');

|

||||

}

|

||||

|

||||

+ /**

|

||||

+ * 2024.01.22

|

||||

+ *

|

||||

+ * DB V3 to V4

|

||||

+ * from `group = pinned` to `pinned:true`

|

||||

+ */

|

||||

+ upgradeToV4 = async (trans: Transaction) => {

|

||||

+ const sessions = trans.table('sessions');

|

||||

+ await sessions.toCollection().modify((session) => {

|

||||

+ // translate boolean to number

|

||||

+ session.pinned = session.group === 'pinned' ? 1 : 0;

|

||||

+ session.group = 'default';

|

||||

+ });

|

||||

+ };

|

||||

}

|

||||

````

|

||||

|

||||

This is our data migration strategy. When performing the migration, it is essential to ensure the correctness of the migration script and validate the migration results through thorough testing.
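The per-record change performed inside `modify` can be isolated as a pure function, which makes it possible to unit test the transformation without IndexedDB. The sketch below is only an illustration: `SessionRecordV3`, `SessionRecordV4`, and `toV4Session` are hypothetical names that do not appear in the LobeChat codebase. Storing `pinned` as `0`/`1` is consistent with the fact that IndexedDB does not accept booleans as index keys.

```ts
// Hypothetical record shapes, reduced to the fields relevant here.
interface SessionRecordV3 {
  id: string;
  group?: string;
}

interface SessionRecordV4 {
  id: string;
  group: string;
  pinned: 0 | 1;
}

// Same rule as upgradeToV4: derive `pinned` from the old group, then reset the group.
const toV4Session = (session: SessionRecordV3): SessionRecordV4 => ({
  ...session,
  group: 'default',
  pinned: session.group === 'pinned' ? 1 : 0,
});

const v3Records: SessionRecordV3[] = [
  { id: 'a', group: 'pinned' },
  { id: 'b', group: 'default' },
  { id: 'c' },
];

const upgraded = v3Records.map(toV4Session);
console.log(upgraded);
```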

|

||||

|

||||

## VI. Data Import and Export

|

||||

|

||||

In LobeChat, the data import and export feature lets users migrate their data between devices. This covers session, topic, message, and settings data. When implementing the Session Group feature, we also need to handle import and export so that a full export can be restored identically on another device.

|

||||

|

||||

The core implementation of data import and export is in the `ConfigService` in `src/service/config.ts`, with key methods as follows:

|

||||

|

||||

| Method Name | Description |

|

||||

| --------------------- | -------------------------- |

|

||||

| `importConfigState` | Import configuration data |

|

||||

| `exportAgents` | Export all agent data |

|

||||

| `exportSessions` | Export all session data |

|

||||

| `exportSingleSession` | Export single session data |

|

||||

| `exportSingleAgent` | Export single agent data |

|

||||

| `exportSettings` | Export settings data |

|

||||

| `exportAll` | Export all data |

|

||||

|

||||

### Data Export

|

||||

|

||||

In LobeChat, when a user chooses to export data, the current session, topic, message, and settings data are packaged into a JSON file and provided for download. The standard structure of this JSON file is as follows:

|

||||

|

||||

```json

|

||||

{

|

||||

"exportType": "sessions",

|

||||

"state": {

|

||||

"sessions": [],

|

||||

"topics": [],

|

||||

"messages": []

|

||||

},

|

||||

"version": 3

|

||||

}

|

||||

```

|

||||

|

||||

Where:

|

||||

|

||||

- `exportType`: Identifies the type of data being exported, currently including `sessions`, `agents`, `settings`, and `all`.

|

||||

- `state`: Stores the actual data, with different data types for different `exportType`.

|

||||

- `version`: Indicates the data version.
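The three fields above can be expressed as a small type plus a builder function. This is a sketch under stated assumptions: `ExportTypeSketch`, `ConfigFileSketch`, `SKETCH_CONFIG_VERSION`, and `buildConfigFile` are hypothetical names chosen for illustration (the real helper used later in this document is `createConfigFile`, whose implementation is not shown here), and the `agents` literal follows the `case 'agents'` branch of the import code.

```ts
// Hypothetical types for illustration; the real definitions may differ.
type ExportTypeSketch = 'agents' | 'sessions' | 'settings' | 'all';

interface ConfigFileSketch<S> {
  exportType: ExportTypeSketch;
  state: S;
  version: number;
}

// Assumed config version, taken from the JSON example above.
const SKETCH_CONFIG_VERSION = 3;

function buildConfigFile<S>(exportType: ExportTypeSketch, state: S): ConfigFileSketch<S> {
  return { exportType, state, version: SKETCH_CONFIG_VERSION };
}

const sessionsExport = buildConfigFile('sessions', { messages: [], sessions: [], topics: [] });

// The serialized string is what would be offered to the user as a download.
const serialized = JSON.stringify(sessionsExport, null, 2);
console.log(serialized);
```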

|

||||

|

||||

In the implementation of the Session Group feature, we need to add `sessionGroups` data to the `state` field. This way, when users export data, their Session Group data will also be included.

|

||||

|

||||

For example, when exporting sessions, the relevant implementation code modification is as follows:

|

||||

|

||||

```diff

|

||||

class ConfigService {

|

||||

// ... Other code omitted

|

||||

|

||||

exportSessions = async () => {

|

||||

const sessions = await sessionService.getSessions();

|

||||

+ const sessionGroups = await sessionService.getSessionGroups();

|

||||

const messages = await messageService.getAllMessages();

|

||||

const topics = await topicService.getAllTopics();

|

||||

|

||||

- const config = createConfigFile('sessions', { messages, sessions, topics });

|

||||

+ const config = createConfigFile('sessions', { messages, sessionGroups, sessions, topics });

|

||||

|

||||

exportConfigFile(config, 'sessions');

|

||||

};

|

||||

}

|

||||

```

|

||||

|

||||

### Data Import

|

||||

|

||||

The data import functionality is implemented through `ConfigService.importConfigState`. When users choose to import data, they need to provide a JSON file that conforms to the above structure specification. The `importConfigState` method accepts the data of the configuration file and imports it into the application.

|

||||

|

||||

In the implementation of the Session Group feature, we need to handle the `sessionGroups` data during the data import process. This way, when users import data, their Session Group data will also be imported correctly.

|

||||

|

||||

The following is the modified code for the import implementation in `importConfigState`:

|

||||

|

||||

```diff

|

||||

class ConfigService {

|

||||

// ... Other code omitted

|

||||

|

||||

+ importSessionGroups = async (sessionGroups: SessionGroupItem[]) => {

|

||||

+ return sessionService.batchCreateSessionGroups(sessionGroups);

|

||||

+ };

|

||||

|

||||

importConfigState = async (config: ConfigFile): Promise<ImportResults | undefined> => {

|

||||

switch (config.exportType) {

|

||||

case 'settings': {

|

||||

await this.importSettings(config.state.settings);

|

||||

|

||||

break;

|

||||

}

|

||||

|

||||

case 'agents': {

|

||||

+ const sessionGroups = await this.importSessionGroups(config.state.sessionGroups);

|

||||

|

||||

const data = await this.importSessions(config.state.sessions);

|

||||

return {

|

||||

+ sessionGroups: this.mapImportResult(sessionGroups),

|

||||

sessions: this.mapImportResult(data),

|

||||

};

|

||||

}

|

||||

|

||||

case 'all': {

|

||||

await this.importSettings(config.state.settings);

|

||||

|

||||

+ const sessionGroups = await this.importSessionGroups(config.state.sessionGroups);

|

||||

|

||||

const [sessions, messages, topics] = await Promise.all([

|

||||

this.importSessions(config.state.sessions),

|

||||

this.importMessages(config.state.messages),

|

||||

this.importTopics(config.state.topics),

|

||||

]);

|

||||

|

||||

return {

|

||||

messages: this.mapImportResult(messages),

|

||||

+ sessionGroups: this.mapImportResult(sessionGroups),

|

||||

sessions: this.mapImportResult(sessions),

|

||||

topics: this.mapImportResult(topics),

|

||||

};

|

||||

}

|

||||

|

||||

case 'sessions': {

|

||||

+ const sessionGroups = await this.importSessionGroups(config.state.sessionGroups);

|

||||

|

||||

const [sessions, messages, topics] = await Promise.all([

|

||||

this.importSessions(config.state.sessions),

|

||||

this.importMessages(config.state.messages),

|

||||

this.importTopics(config.state.topics),

|

||||

]);

|

||||

|

||||

return {

|

||||

messages: this.mapImportResult(messages),

|

||||

+ sessionGroups: this.mapImportResult(sessionGroups),

|

||||

sessions: this.mapImportResult(sessions),

|

||||

topics: this.mapImportResult(topics),

|

||||

};

|

||||

}

|

||||

}

|

||||

};

|

||||

}

|

||||

```

|

||||

|

||||

The key point of this change is that session groups are imported first. If sessions were imported first, any session whose session group ID does not yet exist in the current database would have its group reset to the default value, and the session could no longer be associated with its session group.
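The effect of import order can be reproduced with a small in-memory model. Everything in this sketch is hypothetical (`InMemoryStoreSketch`, its methods, and the sample data); it only assumes the fallback behaviour described above, where a session referencing an unknown group ID is reset to `'default'`.

```ts
// Hypothetical in-memory model; not LobeChat's actual services.
interface GroupSketch {
  id: string;
  name: string;
}

interface SessionSketch {
  id: string;
  group: string;
}

class InMemoryStoreSketch {
  groups = new Map<string, GroupSketch>();
  sessions: SessionSketch[] = [];

  importGroups(groups: GroupSketch[]): void {
    for (const g of groups) this.groups.set(g.id, g);
  }

  // Assumed behaviour: an unknown group ID falls back to 'default'.
  importSessions(sessions: SessionSketch[]): void {
    for (const s of sessions) {
      const group = this.groups.has(s.group) ? s.group : 'default';
      this.sessions.push({ ...s, group });
    }
  }
}

const exportedGroups: GroupSketch[] = [{ id: 'g1', name: 'Work' }];
const exportedSessions: SessionSketch[] = [{ id: 's1', group: 'g1' }];

// Groups first: the session keeps its group.
const groupsFirst = new InMemoryStoreSketch();
groupsFirst.importGroups(exportedGroups);
groupsFirst.importSessions(exportedSessions);

// Sessions first: the group is unknown at import time, so the association is lost.
const sessionsFirst = new InMemoryStoreSketch();
sessionsFirst.importSessions(exportedSessions);
sessionsFirst.importGroups(exportedGroups);

console.log(groupsFirst.sessions[0].group, sessionsFirst.sessions[0].group);
```

In this model the first store keeps `g1` while the second ends up with `default`, which is why `importSessionGroups` is awaited before the `Promise.all` call in the code above.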

|

||||

|

||||

This is the implementation of the LobeChat Session Group feature in the data import and export process. This approach ensures that users' Session Group data is correctly handled during the import and export process.

|

||||

|

||||

## Summary

|

||||

|

||||

The above is the complete implementation process of the LobeChat Session Group feature. Developers can refer to this document for the development and testing of related functionalities.

|

||||

|

|

@ -178,7 +178,7 @@ class SessionService {

|

|||

|

||||

## III. Store Action

|

||||

|

||||

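In LobeChat, the Store is the module that manages the application's frontend state. An Action is a function that triggers a state update; it typically calls a service-layer method to perform the actual data processing and then updates the state in the Store. We use `zustand` as the underlying dependency of the Store module. For a detailed introduction to state management practices, see [📘 Best Practices for State Management](./State-Management-Intro.zh-CN)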

|

||||

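In LobeChat, the Store is the module that manages the application's frontend state. An Action is a function that triggers a state update; it typically calls a service-layer method to perform the actual data processing and then updates the state in the Store. We use `zustand` as the underlying dependency of the Store module. For a detailed introduction to state management practices, see [📘 Best Practices for State Management](../State-Management/State-Management-Intro.zh-CN.md)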

|

||||

|

||||

### sessionGroup CRUD

|

||||

|

||||

|

|

@ -349,7 +349,7 @@ const customSessionGroups = (s: SessionStore): CustomSessionGroup[] => s.customS

|

|||

|

||||

> !\[Important]

|

||||

>

|

||||

> If you are not familiar with the concept and role of Selectors, see [📘 Data Store Selector](./State-Management-Selectors.zh-CN) for details.

|

||||

> If you are not familiar with the concept and role of Selectors, see [📘 Data Store Selector](../State-Management/State-Management-Selectors.zh-CN.md) for details.

|

||||

|

||||

## IV. UI

|

||||

|

||||

|

|

@ -44,7 +44,7 @@ src

|

|||

└── utils # Common utility functions

|

||||

```

|

||||

|

||||

For a detailed introduction to the directory structure, see: [Directory Structure](Folder-Structure.zh-CN)

|

||||

For a detailed introduction to the directory structure, see: [Directory Structure](Folder-Structure.zh-CN.md)

|

||||

|

||||

## Local Development Environment Setup

|

||||

|

||||

|

|

@ -76,7 +76,7 @@ bun run dev

|

|||

# Visit http://localhost:3010 to view the application

|

||||

```

|

||||

|

||||

You should now see the LobeChat welcome page in your browser. For a detailed environment setup guide, see the [Development Environment Setup Guide](Setup-Development.zh-CN).

|

||||

You should now see the LobeChat welcome page in your browser. For a detailed environment setup guide, see the [Development Environment Setup Guide](Setup-Development.zh-CN.md).

|

||||

|

||||

## Code Style and Contribution Guidelines

|

||||

|

||||

|

|

@ -87,7 +87,7 @@ bun run dev

|

|||

|

||||

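All contributions go through code review. Maintainers may suggest or request changes. Please respond to review comments promptly and adjust accordingly; we look forward to your participation and contributions.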

|

||||

|

||||

For detailed code style and contribution guidelines, see [Code Style and Contribution Guidelines](Contributing-Guidelines.zh-CN).

|

||||

For detailed code style and contribution guidelines, see [Code Style and Contribution Guidelines](Contributing-Guidelines.zh-CN.md).

|

||||

|

||||

## Internationalization Implementation Guide

|

||||

|

||||

|

|

@ -95,9 +95,9 @@ LobeChat 采用 `i18next` 和 `lobe-i18n` 实现多语言支持,确保用户

|

|||

|

||||

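Internationalization files are located in `src/locales` and contain the default language (Chinese). We use `lobe-i18n` to automatically generate the JSON files for other languages.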

|

||||

|

||||

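To add a new locale, follow the specific steps described in the [New Locale Guide](Add-New-Locale.zh-CN). We encourage you to take part in our internationalization effort and help provide a better service to users worldwide.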

|

||||

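To add a new locale, follow the specific steps described in the [New Locale Guide](../Internationalization/Add-New-Locale.zh-CN.md). We encourage you to take part in our internationalization effort and help provide a better service to users worldwide.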

|

||||

|

||||

For a detailed internationalization implementation guide, see the [Internationalization Implementation Guide](Internationalization-Implementation.zh-CN).

|

||||

For a detailed internationalization implementation guide, see the [Internationalization Implementation Guide](../Internationalization/Internationalization-Implementation.zh-CN.md).

|

||||

|

||||

## Appendix: Resources and References

|

||||

|

||||

|

|

@ -6,7 +6,9 @@

|

|||

|

||||

<h1>Lobe Chat Wiki</h1>

|

||||

|

||||

LobeChat is a open-source, extensible ([Function Calling][fc-url]), high-performance chatbot framework. <br/> It supports one-click free deployment of your private ChatGPT/LLM web application.

|

||||

LobeChat is an open-source, extensible ([Function Calling][fc-url]), high-performance chatbot framework. <br/> It supports one-click free deployment of your private ChatGPT/LLM web application.

|

||||

|

||||

[Usage Documents](https://chat-docs.lobehub.com/en) | [使用指南](https://chat-docs.lobehub.com/zh)

|

||||

|

||||

</div>

|

||||

|

||||

|

|

@ -14,43 +16,33 @@ LobeChat is a open-source, extensible ([Function Calling][fc-url]), high-perform

|

|||

|

||||

<!-- DOCS LIST -->

|

||||

|

||||

### 🤯 Usage

|

||||

### 🤯 Basic

|

||||

|

||||

- [Custom Agents Guide](https://github.com/lobehub/lobe-chat/wiki/Usage-Agents) | [自定义助手指南](https://github.com/lobehub/lobe-chat/wiki/Usage-Agents.zh-CN)

|

||||

- [Plugin Usage](https://github.com/lobehub/lobe-chat/wiki/Plugins) | [插件使用](https://github.com/lobehub/lobe-chat/wiki/Plugins.zh-CN)

|

||||

- [Topic Guide](https://github.com/lobehub/lobe-chat/wiki/Usage-Topics) | [话题指南](https://github.com/lobehub/lobe-chat/wiki/Usage-Topics.zh-CN)

|

||||

|

||||

<br/>

|

||||

|

||||

### 🛳 Self-Hosting

|

||||

|

||||

- [Docker Deployment Guide](https://github.com/lobehub/lobe-chat/wiki/Docker-Deployment) | [Docker 部署指引](https://github.com/lobehub/lobe-chat/wiki/Docker-Deployment.zh-CN)

|

||||

- [Deploying with Azure OpenAI](https://github.com/lobehub/lobe-chat/wiki/Deploy-with-Azure-OpenAI) | [使用 Azure OpenAI 部署](https://github.com/lobehub/lobe-chat/wiki/Deploy-with-Azure-OpenAI.zh-CN)

|

||||

- [Environment Variables](https://github.com/lobehub/lobe-chat/wiki/Environment-Variable) | [环境变量](https://github.com/lobehub/lobe-chat/wiki/Environment-Variable.zh-CN)

|

||||

- [Authentication Service](https://github.com/lobehub/lobe-chat/wiki/Authentication) | [身份验证服务](https://github.com/lobehub/lobe-chat/wiki/Authentication.zh-CN)

|

||||

- [Upstream Sync](https://github.com/lobehub/lobe-chat/wiki/Upstream-Sync) | [自部署保持更新](https://github.com/lobehub/lobe-chat/wiki/Upstream-Sync.zh-CN)

|

||||

- [Frequently Asked Questions](https://github.com/lobehub/lobe-chat/wiki/Common-Error) | [常见问题](https://github.com/lobehub/lobe-chat/wiki/Common-Error.zh-CN)

|

||||

- [Data Statistics](https://github.com/lobehub/lobe-chat/wiki/Analytics) | [数据统计](https://github.com/lobehub/lobe-chat/wiki/Analytics.zh-CN)

|

||||

|

||||

<br/>

|

||||

|

||||

### ⌨️ Development

|

||||

|

||||

- [Technical Development Getting Started Guide](https://github.com/lobehub/lobe-chat/wiki/index) | [技术开发上手指南](https://github.com/lobehub/lobe-chat/wiki/index.zh-CN)

|

||||

- [Code Style and Contribution Guidelines](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines) | [代码风格与贡献指南](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines.zh-CN)

|

||||

- [Environment Setup Guide](https://github.com/lobehub/lobe-chat/wiki/Setup-Development) | [环境设置指南](https://github.com/lobehub/lobe-chat/wiki/Setup-Development.zh-CN)

|

||||

- [Architecture Design](https://github.com/lobehub/lobe-chat/wiki/Architecture) | [架构设计](https://github.com/lobehub/lobe-chat/wiki/Architecture.zh-CN)

|

||||

- [Directory Structure](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure) | [目录架构](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure.zh-CN)

|

||||

- [Best Practices for State Management](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro) | [状态管理最佳实践](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro.zh-CN)

|

||||

- [Data Store Selector](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors) | [数据存储取数模块](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors.zh-CN)

|

||||

- [Code Style and Contribution Guidelines](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines) | [代码风格与贡献指南](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines.zh-CN)

|

||||

- [Complete Guide to LobeChat Feature Development](https://github.com/lobehub/lobe-chat/wiki/Feature-Development) | [LobeChat 功能开发完全指南](https://github.com/lobehub/lobe-chat/wiki/Feature-Development.zh-CN)

|

||||

- [Conversation API Implementation Logic](https://github.com/lobehub/lobe-chat/wiki/Chat-API) | [会话 API 实现逻辑](https://github.com/lobehub/lobe-chat/wiki/Chat-API.zh-CN)

|

||||

- [How to Develop a New Feature](https://github.com/lobehub/lobe-chat/wiki/Feature-Development) | [如何开发一个新功能](https://github.com/lobehub/lobe-chat/wiki/Feature-Development.zh-CN)

|

||||

- [Frontend](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend) | [前端实现](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend.zh-CN)

|

||||

- [Directory Structure](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure) | [目录架构](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure.zh-CN)

|

||||

- [Environment Setup Guide](https://github.com/lobehub/lobe-chat/wiki/Setup-Development) | [环境设置指南](https://github.com/lobehub/lobe-chat/wiki/Setup-Development.zh-CN)

|

||||

- [How to Develop a New Feature](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend) | [如何开发一个新功能:前端实现](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend.zh-CN)

|

||||

- [New Authentication Provider Guide](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers) | [新身份验证方式开发指南](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers.zh-CN)

|

||||

- [Resources and References](https://github.com/lobehub/lobe-chat/wiki/Resources) | [资源与参考](https://github.com/lobehub/lobe-chat/wiki/Resources.zh-CN)

|

||||

- [Technical Development Getting Started Guide](https://github.com/lobehub/lobe-chat/wiki/Intro) | [技术开发上手指南](https://github.com/lobehub/lobe-chat/wiki/Intro.zh-CN)

|

||||

- [Testing Guide](https://github.com/lobehub/lobe-chat/wiki/Test) | [测试指南](https://github.com/lobehub/lobe-chat/wiki/Test.zh-CN)

|

||||

|

||||

<br/>

|

||||

|

||||

### 🌎 Internationalization

|

||||

|

||||

- [Internationalization Implementation Guide](https://github.com/lobehub/lobe-chat/wiki/Internationalization-Implementation) | [国际化实现指南](https://github.com/lobehub/lobe-chat/wiki/Internationalization-Implementation.zh-CN)

|

||||

- [New Locale Guide](https://github.com/lobehub/lobe-chat/wiki/Add-New-Locale) | [新语种添加指南](https://github.com/lobehub/lobe-chat/wiki/Add-New-Locale.zh-CN)

|

||||

- [New Authentication Provider Guide](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers) | [新身份验证方式开发指南](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers.zh-CN)

|

||||

- [Testing Guide](https://github.com/lobehub/lobe-chat/wiki/Test) | [测试指南](https://github.com/lobehub/lobe-chat/wiki/Test.zh-CN)

|

||||

- [Resources and References](https://github.com/lobehub/lobe-chat/wiki/Resources) | [资源与参考](https://github.com/lobehub/lobe-chat/wiki/Resources.zh-CN)

|

||||

|

||||

<br/>

|

||||

|

||||

### ⌨️ State Management

|

||||

|

||||

- [Best Practices for State Management](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro) | [状态管理最佳实践](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro.zh-CN)

|

||||

- [Data Store Selector](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors) | [数据存储取数模块](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors.zh-CN)

|

||||

|

||||

<br/>

|

||||

|

||||

|

|

@ -62,7 +54,6 @@ LobeChat is a open-source, extensible ([Function Calling][fc-url]), high-perform

|

|||

|

||||

### 🧩 Plugins

|

||||

|

||||

- [Plugin Development Guide](https://github.com/lobehub/lobe-chat/wiki/Plugin-Development) | [插件开发指南](https://github.com/lobehub/lobe-chat/wiki/Plugin-Development.zh-CN)

|

||||

- [Plugin Index and Submit](https://github.com/lobehub/lobe-chat-plugins) | [插件索引与提交](https://github.com/lobehub/lobe-chat-plugins/blob/main/README.zh-CN.md)

|

||||

- [Plugin SDK Docs](https://chat-plugin-sdk.lobehub.com) | [插件 SDK 文档](https://chat-plugin-sdk.lobehub.com)

|

||||

|

||||

|

|

@ -115,7 +115,7 @@ const createI18nInstance = (lang) => {

|

|||

- [🌐 feat(locale): Add fr-FR (#637) #645](https://github.com/lobehub/lobe-chat/pull/645)

|

||||

- [🌐 Add russian localy #137](https://github.com/lobehub/lobe-chat/pull/137)

|

||||

|

||||

To add support for a new locale, see the [New Locale Guide](Add-New-Locale.zh-CN) for detailed steps.

|

||||

To add support for a new locale, see the [New Locale Guide](Add-New-Locale.zh-CN.md) for detailed steps.

|

||||

|

||||

## Resources and Further Reading

|

||||

|

||||

|

|

@ -2,43 +2,33 @@

|

|||

|

||||

#### 🏠 Home

|

||||

|

||||

- [TOC](Home) | [目录](Home)

|

||||

- [TOC](Home.md) | [目录](Home.md)

|

||||

|

||||

<!-- DOCS LIST -->

|

||||

|

||||

#### 🤯 Usage

|

||||

#### 🤯 Basic

|

||||

|

||||

- [Custom Agents Guide](https://github.com/lobehub/lobe-chat/wiki/Usage-Agents) | [自定义助手指南](https://github.com/lobehub/lobe-chat/wiki/Usage-Agents.zh-CN)

|

||||

- [Plugin Usage](https://github.com/lobehub/lobe-chat/wiki/Plugins) | [插件使用](https://github.com/lobehub/lobe-chat/wiki/Plugins.zh-CN)

|

||||

- [Topic Guide](https://github.com/lobehub/lobe-chat/wiki/Usage-Topics) | [话题指南](https://github.com/lobehub/lobe-chat/wiki/Usage-Topics.zh-CN)

|

||||

|

||||

#### 🛳 Self-Hosting

|

||||

|

||||

- [Docker Deployment Guide](https://github.com/lobehub/lobe-chat/wiki/Docker-Deployment) | [Docker 部署指引](https://github.com/lobehub/lobe-chat/wiki/Docker-Deployment.zh-CN)

|

||||

- [Deploying with Azure OpenAI](https://github.com/lobehub/lobe-chat/wiki/Deploy-with-Azure-OpenAI) | [使用 Azure OpenAI 部署](https://github.com/lobehub/lobe-chat/wiki/Deploy-with-Azure-OpenAI.zh-CN)

|

||||

- [Environment Variables](https://github.com/lobehub/lobe-chat/wiki/Environment-Variable) | [环境变量](https://github.com/lobehub/lobe-chat/wiki/Environment-Variable.zh-CN)

|

||||

- [Authentication Service](https://github.com/lobehub/lobe-chat/wiki/Authentication) | [身份验证服务](https://github.com/lobehub/lobe-chat/wiki/Authentication.zh-CN)

|

||||

- [Upstream Sync](https://github.com/lobehub/lobe-chat/wiki/Upstream-Sync) | [自部署保持更新](https://github.com/lobehub/lobe-chat/wiki/Upstream-Sync.zh-CN)

|

||||

- [Frequently Asked Questions](https://github.com/lobehub/lobe-chat/wiki/Common-Error) | [常见问题](https://github.com/lobehub/lobe-chat/wiki/Common-Error.zh-CN)

|

||||

- [Data Statistics](https://github.com/lobehub/lobe-chat/wiki/Analytics) | [数据统计](https://github.com/lobehub/lobe-chat/wiki/Analytics.zh-CN)

|

||||

|

||||

#### ⌨️ Development

|

||||

|

||||

- [Technical Development Getting Started Guide](https://github.com/lobehub/lobe-chat/wiki/Intro) | [技术开发上手指南](https://github.com/lobehub/lobe-chat/wiki/Intro.zh-CN)

|

||||

- [Code Style and Contribution Guidelines](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines) | [代码风格与贡献指南](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines.zh-CN)

|

||||

- [Environment Setup Guide](https://github.com/lobehub/lobe-chat/wiki/Setup-Development) | [环境设置指南](https://github.com/lobehub/lobe-chat/wiki/Setup-Development.zh-CN)

|

||||

- [Architecture Design](https://github.com/lobehub/lobe-chat/wiki/Architecture) | [架构设计](https://github.com/lobehub/lobe-chat/wiki/Architecture.zh-CN)

|

||||

- [Directory Structure](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure) | [目录架构](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure.zh-CN)

|

||||

- [Best Practices for State Management](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro) | [状态管理最佳实践](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro.zh-CN)

|

||||

- [Data Store Selector](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors) | [数据存储取数模块](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors.zh-CN)

|

||||

- [Code Style and Contribution Guidelines](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines) | [代码风格与贡献指南](https://github.com/lobehub/lobe-chat/wiki/Contributing-Guidelines.zh-CN)

|

||||

- [Complete Guide to LobeChat Feature Development](https://github.com/lobehub/lobe-chat/wiki/Feature-Development) | [LobeChat 功能开发完全指南](https://github.com/lobehub/lobe-chat/wiki/Feature-Development.zh-CN)

|

||||

- [Conversation API Implementation Logic](https://github.com/lobehub/lobe-chat/wiki/Chat-API) | [会话 API 实现逻辑](https://github.com/lobehub/lobe-chat/wiki/Chat-API.zh-CN)

|

||||

- [How to Develop a New Feature](https://github.com/lobehub/lobe-chat/wiki/Feature-Development) | [如何开发一个新功能](https://github.com/lobehub/lobe-chat/wiki/Feature-Development.zh-CN)

|

||||

- [Frontend](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend) | [前端实现](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend.zh-CN)

|

||||

- [Directory Structure](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure) | [目录架构](https://github.com/lobehub/lobe-chat/wiki/Folder-Structure.zh-CN)

|

||||

- [Environment Setup Guide](https://github.com/lobehub/lobe-chat/wiki/Setup-Development) | [环境设置指南](https://github.com/lobehub/lobe-chat/wiki/Setup-Development.zh-CN)

|

||||

- [How to Develop a New Feature](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend) | [如何开发一个新功能:前端实现](https://github.com/lobehub/lobe-chat/wiki/Feature-Development-Frontend.zh-CN)

|

||||

- [New Authentication Provider Guide](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers) | [新身份验证方式开发指南](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers.zh-CN)

|

||||

- [Resources and References](https://github.com/lobehub/lobe-chat/wiki/Resources) | [资源与参考](https://github.com/lobehub/lobe-chat/wiki/Resources.zh-CN)

|

||||

- [Technical Development Getting Started Guide](https://github.com/lobehub/lobe-chat/wiki/Intro) | [技术开发上手指南](https://github.com/lobehub/lobe-chat/wiki/Intro.zh-CN)

|

||||

- [Testing Guide](https://github.com/lobehub/lobe-chat/wiki/Test) | [测试指南](https://github.com/lobehub/lobe-chat/wiki/Test.zh-CN)

|

||||

|

||||

#### 🌎 Internationalization

|

||||

|

||||

- [Internationalization Implementation Guide](https://github.com/lobehub/lobe-chat/wiki/Internationalization-Implementation) | [国际化实现指南](https://github.com/lobehub/lobe-chat/wiki/Internationalization-Implementation.zh-CN)

|

||||

- [New Locale Guide](https://github.com/lobehub/lobe-chat/wiki/Add-New-Locale) | [新语种添加指南](https://github.com/lobehub/lobe-chat/wiki/Add-New-Locale.zh-CN)

|

||||

- [New Authentication Provider Guide](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers) | [新身份验证方式开发指南](https://github.com/lobehub/lobe-chat/wiki/Add-New-Authentication-Providers.zh-CN)

|

||||

- [Testing Guide](https://github.com/lobehub/lobe-chat/wiki/Test) | [测试指南](https://github.com/lobehub/lobe-chat/wiki/Test.zh-CN)

|

||||

- [Resources and References](https://github.com/lobehub/lobe-chat/wiki/Resources) | [资源与参考](https://github.com/lobehub/lobe-chat/wiki/Resources.zh-CN)

|

||||

|

||||

#### ⌨️ State Management

|

||||

|

||||

- [Best Practices for State Management](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro) | [状态管理最佳实践](https://github.com/lobehub/lobe-chat/wiki/State-Management-Intro.zh-CN)

|

||||

- [Data Store Selector](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors) | [数据存储取数模块](https://github.com/lobehub/lobe-chat/wiki/State-Management-Selectors.zh-CN)

|

||||

|

||||

#### 🤖 Agents

|

||||

|

||||

|

|

@ -46,7 +36,6 @@

|

|||

|

||||

#### 🧩 Plugins

|

||||

|

||||

- [Plugin Development Guide](https://github.com/lobehub/lobe-chat/wiki/Plugin-Development) | [插件开发指南](https://github.com/lobehub/lobe-chat/wiki/Plugin-Development.zh-CN)

|

||||

- [Plugin Index and Submit](https://github.com/lobehub/lobe-chat-plugins) | [插件索引与提交](https://github.com/lobehub/lobe-chat-plugins/blob/main/README.zh-CN.md)

|

||||

- [Plugin SDK Docs](https://chat-plugin-sdk.lobehub.com) | [插件 SDK 文档](https://chat-plugin-sdk.lobehub.com)

|

||||

|

||||

|

|

@ -1,20 +0,0 @@

|

|||

# Data Statistics

|

||||

|

||||

To better analyze the usage of LobeChat users, we have integrated several free/open-source data statistics services in LobeChat for collecting user usage data, which you can enable as needed.

|

||||

|

||||

#### TOC

|

||||

|

||||

- [Vercel Analytics](#vercel-analytics)

|

||||

- [🚧 Posthog](#-posthog)

|

||||

|

||||

## Vercel Analytics

|

||||

|

||||

[Vercel Analytics](https://vercel.com/analytics) is a data analysis service launched by Vercel, which can help you collect website visit information, including traffic, sources, and devices used for access.

|

||||

|

||||