* perf(studio): upgrade to Vite 8 + auto-install bun for 3x faster frontend builds

* fix(studio): make bun-to-npm fallback actually reachable

setup.sh used run_quiet() for the bun install attempt, but run_quiet

calls exit on failure. This killed the script before the npm fallback

could run, making the "falling back to npm" branch dead code.

Replace the run_quiet call with a direct bun invocation that captures

output to a temp file (same pattern, but returns instead of exiting).

Also clean up partial node_modules left by a failed bun install before

falling back to npm, in both setup.sh and build.sh. Without this, npm

inherits a corrupted node_modules tree from the failed bun run.
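
The fallback described above could be sketched roughly as follows. This is a minimal illustration, not the code in setup.sh: the function name `install_with_fallback` and its three arguments are hypothetical, and the real scripts may structure this differently.

```shell
# Hypothetical helper (not taken from setup.sh): try a primary installer,
# and fall back to a secondary one if it fails.
#   $1 = primary install command, $2 = fallback command,
#   $3 = dependency directory to clean before falling back.
install_with_fallback() {
    local primary="$1" fallback="$2" modules_dir="${3:-node_modules}" log
    log="$(mktemp)"
    # Run the primary installer directly so a failure returns control here
    # instead of exiting the whole script.
    if $primary >"$log" 2>&1; then
        rm -f "$log"
        echo "primary"
        return 0
    fi
    # Print the failure log before discarding it.
    cat "$log" >&2
    rm -f "$log"
    # Remove any partial tree so the fallback starts from a clean state.
    rm -rf "$modules_dir"
    if $fallback >&2; then
        echo "fallback"
        return 0
    fi
    return 1
}
```

Under these assumptions, a caller would run something like `install_with_fallback "bun install" "npm install"` and inspect the printed word to see which installer succeeded.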

* fix(studio): restore commonjsOptions for dagre CJS interop

The previous commit removed build.commonjsOptions, assuming Vite 8's

Rolldown handles CJS natively. While optimizeDeps.include covers the

dev server (pre-bundling), it does NOT apply to production builds.

The resolve.alias still points @dagrejs/dagre to its .cjs.js entry,

so without commonjsOptions the production bundle fails to resolve

the CJS default export. This causes "TypeError: e is not a function"

on /chat after build (while dev mode works fine).

Restore the original commonjsOptions block to fix production builds.

* fix(studio): use motion/react instead of legacy framer-motion import

* fix(studio): address PR review findings for Vite 8 + bun upgrade

Fixes:

- Remove bun.lock from repo and add to .gitignore (npm is source of truth)

- Use & bun install *> $null pattern in setup.ps1 for reliable $LASTEXITCODE

- Add Remove-Item node_modules before npm fallback in setup.ps1

- Print bun install failure log in setup.sh before discarding

- Add Refresh-Environment after npm install -g bun in setup.ps1

- Tighten Node version check to ^20.19.0 || >=22.12.0 (Vite 8 requirement)

- Add engines field to package.json

- Use string comparison for _install_ok in build.sh

- Remove explicit framer-motion ^11.18.2 from package.json (motion pulls

framer-motion ^12.38.0 as its own dependency — the old pin caused a

version conflict)
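
As an illustration of the tightened Node requirement, the range ^20.19.0 || >=22.12.0 can be checked with plain shell arithmetic. The function name `node_version_ok` is hypothetical and this is not the check used in setup.sh; it also ignores pre-release suffixes.

```shell
# Hypothetical helper: succeeds when a Node version string satisfies
# ^20.19.0 || >=22.12.0. Accepts "v20.19.0" or "20.19.0".
node_version_ok() {
    local v="${1#v}" major minor
    major="${v%%.*}"
    minor="${v#*.}"
    minor="${minor%%.*}"
    # ^20.19.0 means any 20.x release from 20.19.0 upward.
    if [ "$major" -eq 20 ] && [ "$minor" -ge 19 ]; then return 0; fi
    # >=22.12.0 means 22.12.0 or later, including newer majors.
    if [ "$major" -eq 22 ] && [ "$minor" -ge 12 ]; then return 0; fi
    if [ "$major" -gt 22 ]; then return 0; fi
    return 1
}
```

A script could then gate on it with `node_version_ok "$(node --version)"`. Note that Node 21.x and 22.0 through 22.11 are rejected, which matches the stated range.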

* Fix Colab Node bypass and bun.lock stale-build trigger

Gate the Colab Node shortcut on NODE_OK=true so Colab

environments with a Node version too old for Vite 8 fall

through to the nvm install path instead of silently proceeding.

Exclude bun.lock from the stale-build probe in both setup.sh

and setup.ps1 so it does not force unnecessary frontend rebuilds

on every run.
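
One way such a stale-build probe might ignore bun.lock is sketched below. The function name, arguments, and the idea of comparing against a single build marker file are all assumptions for illustration; the actual probe in setup.sh and setup.ps1 may work differently.

```shell
# Hypothetical probe: prints "stale" when any file under the source
# directory ($1) is newer than the build marker ($2), ignoring bun.lock
# and anything inside node_modules. Prints "fresh" otherwise.
frontend_build_state() {
    local src_dir="$1" marker="$2" newer
    # No build output yet, so a build is needed.
    if [ ! -e "$marker" ]; then
        echo "stale"
        return 0
    fi
    newer="$(find "$src_dir" -type f -newer "$marker" \
        ! -name 'bun.lock' ! -path '*/node_modules/*' | head -n 1)"
    if [ -n "$newer" ]; then
        echo "stale"
    else
        echo "fresh"
    fi
}
```

Because bun rewrites bun.lock on install, leaving it in the comparison would make the build look stale after every setup run, which is the behavior this commit avoids.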

---------

Co-authored-by: Daniel Han <danielhanchen@gmail.com>

Co-authored-by: Shine1i <wasimysdev@gmail.com>

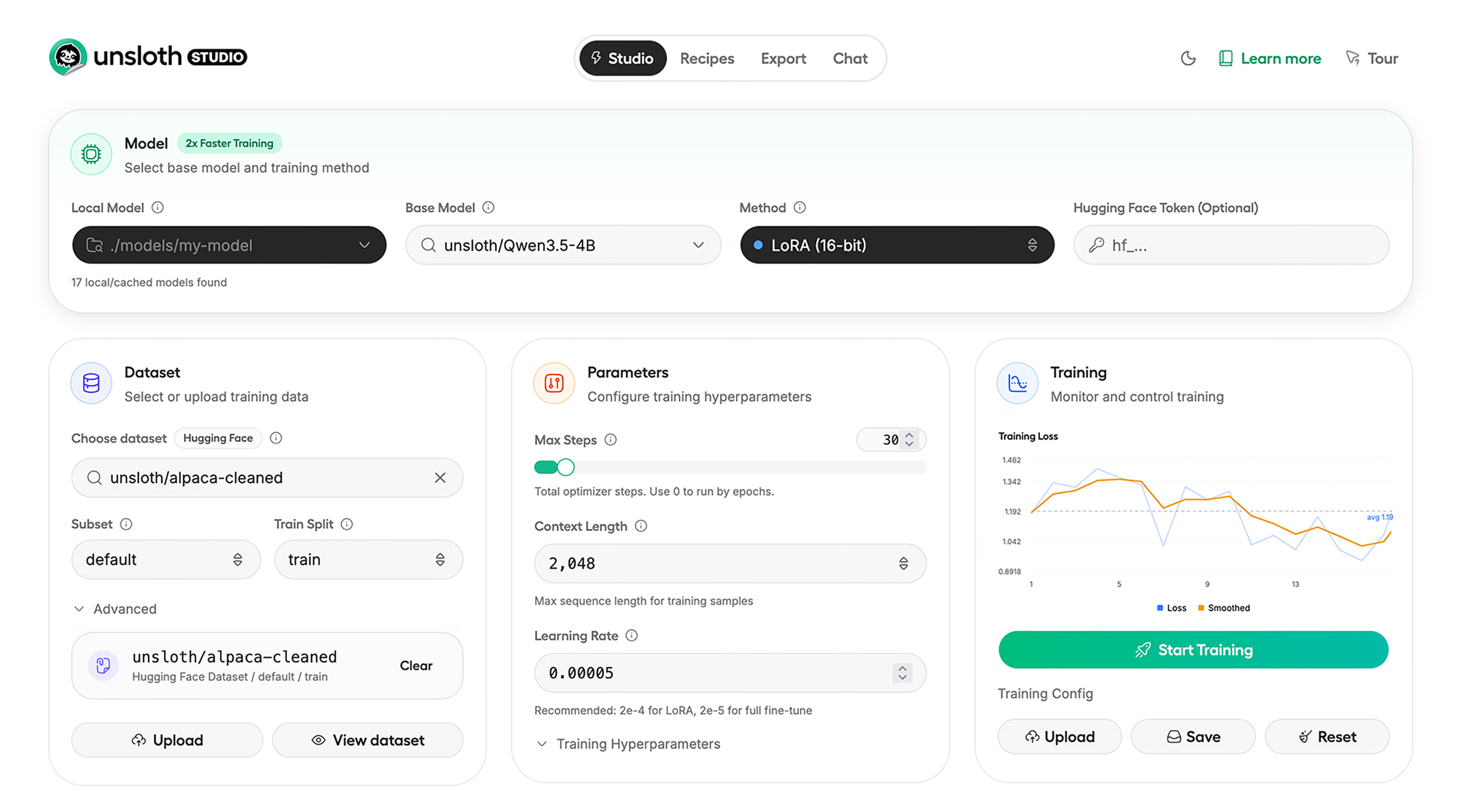

Run and train AI models with a unified local interface.

Features • Quickstart • Notebooks • Documentation • Reddit

Unsloth Studio (Beta) lets you run and train text, audio, embedding, and vision models on Windows, Linux, and macOS.

⭐ Features

Unsloth provides several key features for both inference and training:

Inference

- Search + download + run models including GGUF, LoRA adapters, safetensors

- Export models: Save or export models to GGUF, 16-bit safetensors and other formats.

- Tool calling: Support for self-healing tool calling and web search

- Code execution: lets LLMs test code in Claude artifacts and sandbox environments

- Auto-tune inference parameters and customize chat templates.

- We work directly with teams behind gpt-oss, Qwen3, Llama 4, Mistral, Gemma 1-3, and Phi-4, where we’ve fixed bugs that improve model accuracy.

- Upload images, audio, PDFs, code, DOCX and more file types to chat with.

Training

- Train and run RL on 500+ models up to 2x faster with up to 70% less VRAM, with no accuracy loss.

- Custom Triton and mathematical kernels. See some collabs we did with PyTorch and Hugging Face.

- Data Recipes: Auto-create datasets from PDF, CSV, DOCX etc. Edit data in a visual-node workflow.

- Reinforcement Learning (RL): The most efficient RL library, using 80% less VRAM for GRPO, FP8 etc.

- Supports full fine-tuning, RL, pretraining, 4-bit, 16-bit, and FP8 training.

- Observability: Monitor training live, track loss and GPU usage and customize graphs.

- Multi-GPU training is supported, with major improvements coming soon.

⚡ Quickstart

Unsloth can be used in two ways: through Unsloth Studio, the web UI, or through Unsloth Core, the code-based version. Each has different requirements.

Unsloth Studio (web UI)

Unsloth Studio (Beta) works on Windows, Linux, WSL and macOS.

- CPU: Supported for Chat and Data Recipes currently

- NVIDIA: Training works on RTX 30/40/50, Blackwell, DGX Spark, Station and more

- macOS: Currently supports chat and Data Recipes. MLX training is coming very soon

- AMD: Chat and Data Recipes work. Train with Unsloth Core. Studio training support is coming soon.

- Coming soon: Training support for Apple MLX, AMD, and Intel.

- Multi-GPU: Available now, with a major upgrade on the way

macOS, Linux, WSL:

```shell
curl -fsSL https://unsloth.ai/install.sh | sh
```

If you don't have curl, use wget. Launch after setup via:

```shell
source unsloth_studio/bin/activate
unsloth studio -H 0.0.0.0 -p 8888
```

Windows:

```powershell
irm https://unsloth.ai/install.ps1 | iex
```

Launch after setup via:

```powershell
& .\unsloth_studio\Scripts\unsloth.exe studio -H 0.0.0.0 -p 8888
```

Docker

Use our unsloth/unsloth Docker image. Run:

```shell
docker run -d -e JUPYTER_PASSWORD="mypassword" \
  -p 8888:8888 -p 8000:8000 -p 2222:22 \
  -v $(pwd)/work:/workspace/work \
  --gpus all \
  unsloth/unsloth
```

macOS, Linux, WSL developer installs:

```shell
curl -LsSf https://astral.sh/uv/install.sh | sh
uv venv unsloth_studio --python 3.13
source unsloth_studio/bin/activate
uv pip install unsloth --torch-backend=auto
unsloth studio setup
unsloth studio -H 0.0.0.0 -p 8888
```

Windows PowerShell developer installs:

```powershell
winget install -e --id Python.Python.3.13
winget install --id=astral-sh.uv -e
uv venv unsloth_studio --python 3.13
.\unsloth_studio\Scripts\activate
uv pip install unsloth --torch-backend=auto
unsloth studio setup
unsloth studio -H 0.0.0.0 -p 8888
```

Nightly - macOS, Linux, WSL:

```shell
curl -LsSf https://astral.sh/uv/install.sh | sh
git clone --filter=blob:none https://github.com/unslothai/unsloth.git unsloth_studio
cd unsloth_studio
uv venv --python 3.13
source .venv/bin/activate
uv pip install -e . --torch-backend=auto
unsloth studio setup
unsloth studio -H 0.0.0.0 -p 8888
```

Then to launch every time:

```shell
cd unsloth_studio
source .venv/bin/activate
unsloth studio -H 0.0.0.0 -p 8888
```

Nightly - Windows:

Run in Windows PowerShell:

```powershell
winget install -e --id Python.Python.3.13
winget install --id=astral-sh.uv -e
git clone --filter=blob:none https://github.com/unslothai/unsloth.git unsloth_studio
cd unsloth_studio
uv venv --python 3.13
.\.venv\Scripts\activate
uv pip install -e . --torch-backend=auto
unsloth studio setup
unsloth studio -H 0.0.0.0 -p 8888
```

Then to launch every time:

```powershell
cd unsloth_studio
.\.venv\Scripts\activate
unsloth studio -H 0.0.0.0 -p 8888
```

Unsloth Core (code-based)

Linux, WSL:

```shell
curl -LsSf https://astral.sh/uv/install.sh | sh
uv venv unsloth_env --python 3.13
source unsloth_env/bin/activate
uv pip install unsloth --torch-backend=auto
```

Windows:

```powershell
winget install -e --id Python.Python.3.13
winget install --id=astral-sh.uv -e
uv venv unsloth_env --python 3.13
.\unsloth_env\Scripts\activate
uv pip install unsloth --torch-backend=auto
```

For Windows, pip install unsloth works only if you have PyTorch installed. Read our Windows Guide.

You can use the same Docker image as Unsloth Studio.

AMD, Intel:

For RTX 50x, B200, 6000 GPUs: uv pip install unsloth --torch-backend=auto. Read our guides for: Blackwell and DGX Spark.

To install Unsloth on AMD and Intel GPUs, follow our AMD Guide and Intel Guide.

✨ Free Notebooks

Train for free with our notebooks. Read our guide. Add your dataset, run the notebook, then deploy your trained model.

| Model | Free Notebooks | Performance | Memory use |
|---|---|---|---|
| Qwen3.5 (4B) | ▶️ Start for free | 1.5x faster | 60% less |
| gpt-oss (20B) | ▶️ Start for free | 2x faster | 70% less |
| Qwen3.5 GSPO | ▶️ Start for free | 2x faster | 70% less |
| gpt-oss (20B): GRPO | ▶️ Start for free | 2x faster | 80% less |
| Qwen3: Advanced GRPO | ▶️ Start for free | 2x faster | 70% less |
| Gemma 3 (4B) Vision | ▶️ Start for free | 1.7x faster | 60% less |
| embeddinggemma (300M) | ▶️ Start for free | 2x faster | 20% less |
| Mistral Ministral 3 (3B) | ▶️ Start for free | 1.5x faster | 60% less |
| Llama 3.1 (8B) Alpaca | ▶️ Start for free | 2x faster | 70% less |
| Llama 3.2 Conversational | ▶️ Start for free | 2x faster | 70% less |
| Orpheus-TTS (3B) | ▶️ Start for free | 1.5x faster | 50% less |

- See all our notebooks for: Kaggle, GRPO, TTS, embedding & Vision

- See all our models and all our notebooks

- See detailed documentation for Unsloth here

🦥 Unsloth News

- Introducing Unsloth Studio: our new web UI for running and training LLMs. Blog

- Qwen3.5 - 0.8B, 2B, 4B, 9B, 27B, 35B-A3B, 112B-A10B are now supported. Guide + notebooks

- Train MoE LLMs 12x faster with 35% less VRAM - DeepSeek, GLM, Qwen and gpt-oss. Blog

- Embedding models: Unsloth now supports ~1.8-3.3x faster embedding fine-tuning. Blog • Notebooks

- New 7x longer context RL vs. all other setups, via our new batching algorithms. Blog

- New RoPE & MLP Triton Kernels & Padding Free + Packing: 3x faster training & 30% less VRAM. Blog

- 500K Context: Training a 20B model with >500K context is now possible on an 80GB GPU. Blog

- FP8 & Vision RL: You can now do FP8 & VLM GRPO on consumer GPUs. FP8 Blog • Vision RL

- gpt-oss by OpenAI: Read our RL blog, Flex Attention blog and Guide.

💚 Community and Links

| Type | Links |
|---|---|
| Discord | Join Discord server |
| Reddit | Join Reddit community |
| 📚 Documentation & Wiki | Read Our Docs |
| X | Follow us on X |
| 🔮 Our Models | Unsloth Catalog |
| ✍️ Blog | Read our Blogs |

Citation

You can cite the Unsloth repo as follows:

```bibtex
@software{unsloth,
  author = {Daniel Han, Michael Han and Unsloth team},
  title = {Unsloth},
  url = {https://github.com/unslothai/unsloth},
  year = {2023}
}
```

If you trained a model with 🦥Unsloth, you can use this cool sticker!

License

Unsloth uses a dual-licensing model of Apache 2.0 and AGPL-3.0. The core Unsloth package remains licensed under Apache 2.0, while certain optional components, such as the Unsloth Studio UI, are licensed under the open-source AGPL-3.0 license.

This structure helps support ongoing Unsloth development while keeping the project open source and enabling the broader ecosystem to continue growing.

Thank You to

- The llama.cpp library that lets users run and save models with Unsloth

- The Hugging Face team and their libraries: transformers and TRL

- The PyTorch and TorchAO teams for their contributions

- NVIDIA for their NeMo DataDesigner library and their contributions

- And of course, every single person who has contributed to or used Unsloth!