mirror of

https://github.com/ultralytics/ultralytics

synced 2026-04-21 14:07:18 +00:00

Merge branch 'yoloe_new2' into yoloe_new

This commit is contained in:

commit

f6c9055301

72 changed files with 827 additions and 390 deletions

2

.github/workflows/ci.yml

vendored

2

.github/workflows/ci.yml

vendored

|

|

@ -435,7 +435,7 @@ jobs:

|

|||

channel-priority: true

|

||||

activate-environment: anaconda-client-env

|

||||

- name: Install Ultralytics package from conda-forge

|

||||

run: conda install -c pytorch -c conda-forge pytorch-cpu torchvision ultralytics "openvino!=2026.0.0"

|

||||

run: conda install -c pytorch -c conda-forge pytorch-cpu torchvision ultralytics "openvino<2026"

|

||||

- name: Install pip packages

|

||||

run: uv pip install pytest

|

||||

- name: Check environment

|

||||

|

|

|

|||

40

.github/workflows/docker.yml

vendored

40

.github/workflows/docker.yml

vendored

|

|

@ -118,24 +118,42 @@ jobs:

|

|||

uses: docker/setup-buildx-action@v4

|

||||

|

||||

- name: Login to Docker Hub

|

||||

uses: docker/login-action@v4

|

||||

uses: ultralytics/actions/retry@main

|

||||

env:

|

||||

DOCKERHUB_USERNAME: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

DOCKERHUB_TOKEN: ${{ secrets.DOCKERHUB_TOKEN }}

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_TOKEN }}

|

||||

run: |

|

||||

if ! out=$(printf '%s' "$DOCKERHUB_TOKEN" | docker login -u "$DOCKERHUB_USERNAME" --password-stdin 2>&1); then

|

||||

printf '%s\n' "$out" >&2

|

||||

exit 1

|

||||

fi

|

||||

echo "Logged in to docker.io"

|

||||

|

||||

- name: Login to GHCR

|

||||

uses: docker/login-action@v4

|

||||

uses: ultralytics/actions/retry@main

|

||||

env:

|

||||

GHCR_USERNAME: ${{ github.repository_owner }}

|

||||

GHCR_TOKEN: ${{ secrets._GITHUB_TOKEN }}

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets._GITHUB_TOKEN }}

|

||||

run: |

|

||||

if ! out=$(printf '%s' "$GHCR_TOKEN" | docker login ghcr.io -u "$GHCR_USERNAME" --password-stdin 2>&1); then

|

||||

printf '%s\n' "$out" >&2

|

||||

exit 1

|

||||

fi

|

||||

echo "Logged in to ghcr.io"

|

||||

|

||||

- name: Login to NVIDIA NGC

|

||||

uses: docker/login-action@v4

|

||||

uses: ultralytics/actions/retry@main

|

||||

env:

|

||||

NVIDIA_NGC_API_KEY: ${{ secrets.NVIDIA_NGC_API_KEY }}

|

||||

with:

|

||||

registry: nvcr.io

|

||||

username: $oauthtoken

|

||||

password: ${{ secrets.NVIDIA_NGC_API_KEY }}

|

||||

run: |

|

||||

if ! out=$(printf '%s' "$NVIDIA_NGC_API_KEY" | docker login nvcr.io -u '$oauthtoken' --password-stdin 2>&1); then

|

||||

printf '%s\n' "$out" >&2

|

||||

exit 1

|

||||

fi

|

||||

echo "Logged in to nvcr.io"

|

||||

|

||||

- name: Retrieve Ultralytics version

|

||||

id: get_version

|

||||

|

|

|

|||

2

.github/workflows/links.yml

vendored

2

.github/workflows/links.yml

vendored

|

|

@ -35,6 +35,7 @@ jobs:

|

|||

timeout_minutes: 60

|

||||

retry_delay_seconds: 1800

|

||||

retries: 2

|

||||

backoff: fixed

|

||||

run: |

|

||||

lychee \

|

||||

--scheme https \

|

||||

|

|

@ -70,6 +71,7 @@ jobs:

|

|||

timeout_minutes: 60

|

||||

retry_delay_seconds: 1800

|

||||

retries: 2

|

||||

backoff: fixed

|

||||

run: |

|

||||

lychee \

|

||||

--scheme https \

|

||||

|

|

|

|||

|

|

@ -29,9 +29,9 @@ First-time contributors are expected to submit small, well-scoped pull requests.

|

|||

|

||||

#### Established Contributors

|

||||

|

||||

Pull requests from established contributors generally receive higher review priority. Actions and results are fundamental to the [Ultralytics Mission & Values](https://handbook.ultralytics.com/mission-vision-values/). There is no specific threshold to becoming an 'established contributor' as it's impossible to fit all individuals to the same standard. The Ultralytics Team notices those who make consistent, high-quality contributions that follow the Ultralytics standards.

|

||||

Pull requests from established contributors generally receive higher review priority. Actions and results are fundamental to the [Ultralytics Mission & Values](https://handbook.ultralytics.com/mission-vision-values). There is no specific threshold to becoming an 'established contributor' as it's impossible to fit all individuals to the same standard. The Ultralytics Team notices those who make consistent, high-quality contributions that follow the Ultralytics standards.

|

||||

|

||||

Following our [contributing guidelines](./CONTRIBUTING.md) and [our Development Workflow](https://handbook.ultralytics.com/workflows/development/) is the best way to improve your chances for your work to be reviewed, accepted, and/or recognized; this is not a guarantee. In addition, contributors with a strong track record of meaningful contributions to notable open-source projects may be treated as established contributors, even if they are technically first-time contributors to Ultralytics.

|

||||

Following our [contributing guidelines](./CONTRIBUTING.md) and [our Development Workflow](https://handbook.ultralytics.com/workflows/development) is the best way to improve your chances for your work to be reviewed, accepted, and/or recognized; this is not a guarantee. In addition, contributors with a strong track record of meaningful contributions to notable open-source projects may be treated as established contributors, even if they are technically first-time contributors to Ultralytics.

|

||||

|

||||

#### Feature PRs

|

||||

|

||||

|

|

@ -156,11 +156,11 @@ We highly value bug reports as they help us improve the quality and reliability

|

|||

|

||||

Ultralytics uses the [GNU Affero General Public License v3.0 (AGPL-3.0)](https://www.ultralytics.com/legal/agpl-3-0-software-license) for its repositories. This license promotes [openness](https://en.wikipedia.org/wiki/Openness), [transparency](https://www.ultralytics.com/glossary/transparency-in-ai), and [collaborative improvement](https://en.wikipedia.org/wiki/Collaborative_software) in software development. It ensures that all users have the freedom to use, modify, and share the software, fostering a strong community of collaboration and innovation.

|

||||

|

||||

We encourage all contributors to familiarize themselves with the terms of the [AGPL-3.0 license](https://opensource.org/license/agpl-v3) to contribute effectively and ethically to the Ultralytics open-source community.

|

||||

We encourage all contributors to familiarize themselves with the terms of the [AGPL-3.0 license](https://opensource.org/license/agpl-3.0) to contribute effectively and ethically to the Ultralytics open-source community.

|

||||

|

||||

## 🌍 Open-Sourcing Your YOLO Project Under AGPL-3.0

|

||||

|

||||

Using Ultralytics YOLO models or code in your project? The [AGPL-3.0 license](https://opensource.org/license/agpl-v3) requires that your entire derivative work also be open-sourced under AGPL-3.0. This ensures modifications and larger projects built upon open-source foundations remain open.

|

||||

Using Ultralytics YOLO models or code in your project? The [AGPL-3.0 license](https://opensource.org/license/agpl-3.0) requires that your entire derivative work also be open-sourced under AGPL-3.0. This ensures modifications and larger projects built upon open-source foundations remain open.

|

||||

|

||||

### Why AGPL-3.0 Compliance Matters

|

||||

|

||||

|

|

@ -179,7 +179,7 @@ Complying means making the **complete corresponding source code** of your projec

|

|||

- **Use Ultralytics Template:** Start with the [Ultralytics template repository](https://github.com/ultralytics/template) for a clean, modular setup integrating YOLO.

|

||||

|

||||

2. **License Your Project:**

|

||||

- Add a `LICENSE` file containing the full text of the [AGPL-3.0 license](https://opensource.org/license/agpl-v3).

|

||||

- Add a `LICENSE` file containing the full text of the [AGPL-3.0 license](https://opensource.org/license/agpl-3.0).

|

||||

- Add a notice at the top of each source file indicating the license.

|

||||

|

||||

3. **Publish Your Source Code:**

|

||||

|

|

|

|||

|

|

@ -252,7 +252,7 @@ We look forward to your contributions to help make the Ultralytics ecosystem eve

|

|||

|

||||

Ultralytics offers two licensing options to suit different needs:

|

||||

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-v3) open-source license is perfect for students, researchers, and enthusiasts. It encourages open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for full details.

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-3.0) open-source license is perfect for students, researchers, and enthusiasts. It encourages open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for full details.

|

||||

- **Ultralytics Enterprise License**: Designed for commercial use, this license allows for the seamless integration of Ultralytics software and AI models into commercial products and services, bypassing the open-source requirements of AGPL-3.0. If your use case involves commercial deployment, please contact us via [Ultralytics Licensing](https://www.ultralytics.com/license).

|

||||

|

||||

## 📞 Contact

|

||||

|

|

|

|||

|

|

@ -252,7 +252,7 @@ Ultralytics 支持广泛的 YOLO 模型,从早期的版本如 [YOLOv3](https:/

|

|||

|

||||

Ultralytics 提供两种许可选项以满足不同需求:

|

||||

|

||||

- **AGPL-3.0 许可证**:这种经 [OSI 批准](https://opensource.org/license/agpl-v3)的开源许可证非常适合学生、研究人员和爱好者。它鼓励开放协作和知识共享。有关完整详细信息,请参阅 [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) 文件。

|

||||

- **AGPL-3.0 许可证**:这种经 [OSI 批准](https://opensource.org/license/agpl-3.0)的开源许可证非常适合学生、研究人员和爱好者。它鼓励开放协作和知识共享。有关完整详细信息,请参阅 [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) 文件。

|

||||

- **Ultralytics 企业许可证**:专为商业用途设计,此许可证允许将 Ultralytics 软件和 AI 模型无缝集成到商业产品和服务中,绕过 AGPL-3.0 的开源要求。如果您的使用场景涉及商业部署,请通过 [Ultralytics 授权许可](https://www.ultralytics.com/license)与我们联系。

|

||||

|

||||

## 📞 联系方式

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ keywords: TT100K, Tsinghua-Tencent 100K, traffic sign detection, YOLO26, dataset

|

|||

|

||||

The [Tsinghua-Tencent 100K (TT100K)](https://cg.cs.tsinghua.edu.cn/traffic-sign/) is a large-scale traffic sign benchmark dataset created from 100,000 Tencent Street View panoramas. This dataset is specifically designed for traffic sign detection and classification in real-world conditions, providing researchers and developers with a comprehensive resource for building robust traffic sign recognition systems.

|

||||

|

||||

The dataset contains **100,000 images** with over **30,000 traffic sign instances** across **221 different categories**. These images capture large variations in illuminance, weather conditions, viewing angles, and distances, making it ideal for training models that need to perform reliably in diverse real-world scenarios.

|

||||

The dataset contains **100,000 images** with over **30,000 traffic sign instances** across **221 annotation categories**. The original paper applies a 100-instance threshold per class for supervised training, yielding a commonly used **45-class** subset; however, the provided Ultralytics dataset configuration retains all **221 annotated categories**, many of which are very sparse. These images capture large variations in illuminance, weather conditions, viewing angles, and distances, making it ideal for training models that need to perform reliably in diverse real-world scenarios.

|

||||

|

||||

This dataset is particularly valuable for:

|

||||

|

||||

|

|

|

|||

|

|

@ -39,7 +39,7 @@ pip install ultralytics[explorer]

|

|||

|

||||

!!! tip

|

||||

|

||||

Explorer works on embedding/semantic search & SQL querying and is powered by [LanceDB](https://lancedb.com/) serverless vector database. Unlike traditional in-memory DBs, it is persisted on disk without sacrificing performance, so you can scale locally to large datasets like COCO without running out of memory.

|

||||

Explorer works on embedding/semantic search & SQL querying and is powered by [LanceDB](https://www.lancedb.com/) serverless vector database. Unlike traditional in-memory DBs, it is persisted on disk without sacrificing performance, so you can scale locally to large datasets like COCO without running out of memory.

|

||||

|

||||

## Explorer API

|

||||

|

||||

|

|

@ -68,7 +68,7 @@ yolo explorer

|

|||

|

||||

### What is Ultralytics Explorer and how can it help with CV datasets?

|

||||

|

||||

Ultralytics Explorer is a powerful tool designed for exploring [computer vision](https://www.ultralytics.com/glossary/computer-vision-cv) (CV) datasets through semantic search, SQL queries, vector similarity search, and even natural language. This versatile tool provides both a GUI and a Python API, allowing users to seamlessly interact with their datasets. By leveraging technologies like [LanceDB](https://lancedb.com/), Ultralytics Explorer ensures efficient, scalable access to large datasets without excessive memory usage. Whether you're performing detailed dataset analysis or exploring data patterns, Ultralytics Explorer streamlines the entire process.

|

||||

Ultralytics Explorer is a powerful tool designed for exploring [computer vision](https://www.ultralytics.com/glossary/computer-vision-cv) (CV) datasets through semantic search, SQL queries, vector similarity search, and even natural language. This versatile tool provides both a GUI and a Python API, allowing users to seamlessly interact with their datasets. By leveraging technologies like [LanceDB](https://www.lancedb.com/), Ultralytics Explorer ensures efficient, scalable access to large datasets without excessive memory usage. Whether you're performing detailed dataset analysis or exploring data patterns, Ultralytics Explorer streamlines the entire process.

|

||||

|

||||

Learn more about the [Explorer API](api.md).

|

||||

|

||||

|

|

@ -80,7 +80,7 @@ To manually install the optional dependencies needed for Ultralytics Explorer, y

|

|||

pip install ultralytics[explorer]

|

||||

```

|

||||

|

||||

These dependencies are essential for the full functionality of semantic search and SQL querying. By including libraries powered by [LanceDB](https://lancedb.com/), the installation ensures that the database operations remain efficient and scalable, even for large datasets like [COCO](../detect/coco.md).

|

||||

These dependencies are essential for the full functionality of semantic search and SQL querying. By including libraries powered by [LanceDB](https://www.lancedb.com/), the installation ensures that the database operations remain efficient and scalable, even for large datasets like [COCO](../detect/coco.md).

|

||||

|

||||

### How can I use the GUI version of Ultralytics Explorer?

|

||||

|

||||

|

|

|

|||

|

|

@ -216,4 +216,4 @@ cv2.destroyAllWindows()

|

|||

|

||||

### Why should businesses choose Ultralytics YOLO26 for heatmap generation in data analysis?

|

||||

|

||||

Ultralytics YOLO26 offers seamless integration of advanced object detection and real-time heatmap generation, making it an ideal choice for businesses looking to visualize data more effectively. The key advantages include intuitive data distribution visualization, efficient pattern detection, and enhanced spatial analysis for better decision-making. Additionally, YOLO26's cutting-edge features such as persistent tracking, customizable colormaps, and support for various export formats make it superior to other tools like [TensorFlow](https://www.ultralytics.com/glossary/tensorflow) and OpenCV for comprehensive data analysis. Learn more about business applications at [Ultralytics Plans](https://www.ultralytics.com/plans).

|

||||

Ultralytics YOLO26 offers seamless integration of advanced object detection and real-time heatmap generation, making it an ideal choice for businesses looking to visualize data more effectively. The key advantages include intuitive data distribution visualization, efficient pattern detection, and enhanced spatial analysis for better decision-making. Additionally, YOLO26's cutting-edge features such as persistent tracking, customizable colormaps, and support for various export formats make it superior to other tools like [TensorFlow](https://www.ultralytics.com/glossary/tensorflow) and OpenCV for comprehensive data analysis. Learn more about business applications at [Ultralytics Plans](https://www.ultralytics.com/pricing).

|

||||

|

|

|

|||

|

|

@ -109,6 +109,15 @@ Parking management with [Ultralytics YOLO26](https://github.com/ultralytics/ultr

|

|||

cv2.destroyAllWindows() # destroy all opened windows

|

||||

```

|

||||

|

||||

=== "CLI"

|

||||

|

||||

```bash

|

||||

yolo solutions parking source="path/to/video.mp4" json_file="bounding_boxes.json" show=True

|

||||

```

|

||||

|

||||

!!! note

|

||||

Create parking zone annotations first using `ParkingPtsSelection()` in Python (Step 2 above), then pass the JSON file to the CLI command.

|

||||

|

||||

### `ParkingManagement` Arguments

|

||||

|

||||

Here's a table with the `ParkingManagement` arguments:

|

||||

|

|

|

|||

|

|

@ -87,6 +87,12 @@ keywords: object counting, regions, YOLO26, computer vision, Ultralytics, effici

|

|||

cv2.destroyAllWindows() # destroy all opened windows

|

||||

```

|

||||

|

||||

=== "CLI"

|

||||

|

||||

```bash

|

||||

yolo solutions region source="path/to/video.mp4" show=True region="[(20, 400), (1080, 400), (1080, 360), (20, 360)]"

|

||||

```

|

||||

|

||||

!!! tip "Ultralytics Example Code"

|

||||

|

||||

The Ultralytics region counting module is available in our [examples section](https://github.com/ultralytics/ultralytics/blob/main/examples/YOLOv8-Region-Counter/yolov8_region_counter.py). You can explore this example for code customization and modify it to suit your specific use case.

|

||||

|

|

|

|||

|

|

@ -243,7 +243,7 @@ Using YOLO, it is possible to extract and combine information from both RGB and

|

|||

|

||||

!!! warning "RGB-D Cameras"

|

||||

|

||||

When working with depth images, it is essential to ensure that the RGB and depth images are correctly aligned. RGB-D cameras, such as the [Intel RealSense](https://realsenseai.com/) series, provide synchronized RGB and depth images, making it easier to combine information from both sources. If using separate RGB and depth cameras, it is crucial to calibrate them to ensure accurate alignment.

|

||||

When working with depth images, it is essential to ensure that the RGB and depth images are correctly aligned. RGB-D cameras, such as the [Intel RealSense](https://www.realsenseai.com/) series, provide synchronized RGB and depth images, making it easier to combine information from both sources. If using separate RGB and depth cameras, it is crucial to calibrate them to ensure accurate alignment.

|

||||

|

||||

#### Depth Step-by-Step Usage

|

||||

|

||||

|

|

|

|||

|

|

@ -79,6 +79,15 @@ The Security Alarm System Project utilizing Ultralytics YOLO26 integrates advanc

|

|||

cv2.destroyAllWindows() # destroy all opened windows

|

||||

```

|

||||

|

||||

=== "CLI"

|

||||

|

||||

```bash

|

||||

yolo solutions security source="path/to/video.mp4" show=True

|

||||

```

|

||||

|

||||

!!! note

|

||||

Email alerts require the Python API to call `.authenticate()`. The CLI provides detection and visualization only.

|

||||

|

||||

When you run the code, you will receive a single email notification if any object is detected. The notification is sent immediately, not repeatedly. You can customize the code to suit your project requirements.

|

||||

|

||||

#### Email Received Sample

|

||||

|

|

|

|||

|

|

@ -27,7 +27,7 @@ Before we start, you will need to create a Google Cloud Platform (GCP) project.

|

|||

## Prerequisites

|

||||

|

||||

1. Install [Docker](https://docs.docker.com/engine/install/) on your machine.

|

||||

2. Install the [Google Cloud SDK](https://cloud.google.com/sdk/docs/install) and [authenticate for using the gcloud CLI](https://cloud.google.com/docs/authentication/gcloud).

|

||||

2. Install the [Google Cloud SDK](https://docs.cloud.google.com/sdk/docs/install-sdk) and [authenticate for using the gcloud CLI](https://docs.cloud.google.com/docs/authentication/gcloud).

|

||||

3. It is highly recommended that you go through the [Docker Quickstart Guide for Ultralytics](https://docs.ultralytics.com/guides/docker-quickstart/), because you will need to extend one of the official Ultralytics Docker images while following this guide.

|

||||

|

||||

## 1. Create an inference backend with FastAPI

|

||||

|

|

@ -507,7 +507,7 @@ docker push YOUR_REGION-docker.pkg.dev/YOUR_PROJECT_ID/YOUR_REPOSITORY_NAME/IMAG

|

|||

|

||||

Wait for the process to complete. You should now see the image in your Artifact Registry repository.

|

||||

|

||||

For more specific instructions on how to work with images in Artifact Registry, see the Artifact Registry documentation: [Push and pull images](https://cloud.google.com/artifact-registry/docs/docker/pushing-and-pulling).

|

||||

For more specific instructions on how to work with images in Artifact Registry, see the Artifact Registry documentation: [Push and pull images](https://docs.cloud.google.com/artifact-registry/docs/docker/pushing-and-pulling).

|

||||

|

||||

## 4. Import your model in Vertex AI

|

||||

|

||||

|

|

|

|||

|

|

@ -207,11 +207,11 @@ We highly value bug reports as they help us improve the quality and reliability

|

|||

|

||||

Ultralytics uses the [GNU Affero General Public License v3.0 (AGPL-3.0)](https://www.ultralytics.com/legal/agpl-3-0-software-license) for its repositories. This license promotes [openness](https://en.wikipedia.org/wiki/Openness), [transparency](https://www.ultralytics.com/glossary/transparency-in-ai), and [collaborative improvement](https://en.wikipedia.org/wiki/Collaborative_software) in software development. It ensures that all users have the freedom to use, modify, and share the software, fostering a strong community of collaboration and innovation.

|

||||

|

||||

We encourage all contributors to familiarize themselves with the terms of the [AGPL-3.0 license](https://opensource.org/license/agpl-v3) to contribute effectively and ethically to the Ultralytics open-source community.

|

||||

We encourage all contributors to familiarize themselves with the terms of the [AGPL-3.0 license](https://opensource.org/license/agpl-3.0) to contribute effectively and ethically to the Ultralytics open-source community.

|

||||

|

||||

## 🌍 Open-Sourcing Your YOLO Project Under AGPL-3.0

|

||||

|

||||

Using Ultralytics YOLO models or code in your project? The [AGPL-3.0 license](https://opensource.org/license/agpl-v3) requires that your entire derivative work also be open-sourced under AGPL-3.0. This ensures modifications and larger projects built upon open-source foundations remain open.

|

||||

Using Ultralytics YOLO models or code in your project? The [AGPL-3.0 license](https://opensource.org/license/agpl-3.0) requires that your entire derivative work also be open-sourced under AGPL-3.0. This ensures modifications and larger projects built upon open-source foundations remain open.

|

||||

|

||||

### Why AGPL-3.0 Compliance Matters

|

||||

|

||||

|

|

@ -230,7 +230,7 @@ Complying means making the **complete corresponding source code** of your projec

|

|||

- **Use Ultralytics Template:** Start with the [Ultralytics template repository](https://github.com/ultralytics/template) for a clean, modular setup integrating YOLO.

|

||||

|

||||

2. **License Your Project:**

|

||||

- Add a `LICENSE` file containing the full text of the [AGPL-3.0 license](https://opensource.org/license/agpl-v3).

|

||||

- Add a `LICENSE` file containing the full text of the [AGPL-3.0 license](https://opensource.org/license/agpl-3.0).

|

||||

- Add a notice at the top of each source file indicating the license.

|

||||

|

||||

3. **Publish Your Source Code:**

|

||||

|

|

|

|||

|

|

@ -9,9 +9,9 @@ At [Ultralytics](https://www.ultralytics.com/), the security of our users' data

|

|||

|

||||

## Snyk Scanning

|

||||

|

||||

We utilize [Snyk](https://snyk.io/advisor/python/ultralytics) to conduct comprehensive security scans on Ultralytics repositories. Snyk's robust scanning capabilities extend beyond dependency checks; it also examines our code and Dockerfiles for various vulnerabilities. By identifying and addressing these issues proactively, we ensure a higher level of security and reliability for our users.

|

||||

We utilize [Snyk](https://security.snyk.io/package/pip/ultralytics) to conduct comprehensive security scans on Ultralytics repositories. Snyk's robust scanning capabilities extend beyond dependency checks; it also examines our code and Dockerfiles for various vulnerabilities. By identifying and addressing these issues proactively, we ensure a higher level of security and reliability for our users.

|

||||

|

||||

[](https://snyk.io/advisor/python/ultralytics)

|

||||

[](https://security.snyk.io/package/pip/ultralytics)

|

||||

|

||||

## GitHub CodeQL Scanning

|

||||

|

||||

|

|

@ -51,7 +51,7 @@ These tools ensure proactive identification and resolution of security issues, e

|

|||

|

||||

### How does Ultralytics use Snyk for security scanning?

|

||||

|

||||

Ultralytics utilizes [Snyk](https://snyk.io/advisor/python/ultralytics) to conduct thorough security scans on its repositories. Snyk extends beyond basic dependency checks, examining the code and Dockerfiles for various vulnerabilities. By proactively identifying and resolving potential security issues, Snyk helps ensure that Ultralytics' open-source projects remain secure and reliable.

|

||||

Ultralytics utilizes [Snyk](https://security.snyk.io/package/pip/ultralytics) to conduct thorough security scans on its repositories. Snyk extends beyond basic dependency checks, examining the code and Dockerfiles for various vulnerabilities. By proactively identifying and resolving potential security issues, Snyk helps ensure that Ultralytics' open-source projects remain secure and reliable.

|

||||

|

||||

To see the Snyk badge and learn more about its deployment, check the [Snyk Scanning section](#snyk-scanning).

|

||||

|

||||

|

|

|

|||

|

|

@ -164,7 +164,7 @@ Explore the Ultralytics Docs, a comprehensive resource designed to help you unde

|

|||

|

||||

Ultralytics offers two licensing options to accommodate diverse use cases:

|

||||

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-v3) open-source license is ideal for students and enthusiasts, promoting open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for more details.

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-3.0) open-source license is ideal for students and enthusiasts, promoting open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for more details.

|

||||

- **Enterprise License**: Designed for commercial use, this license permits seamless integration of Ultralytics software and AI models into commercial goods and services, bypassing the open-source requirements of AGPL-3.0. If your scenario involves embedding our solutions into a commercial offering, reach out through [Ultralytics Licensing](https://www.ultralytics.com/license).

|

||||

|

||||

Our licensing strategy is designed to ensure that any improvements to our open-source projects are returned to the community. We believe in open source, and our mission is to ensure that our contributions can be used and expanded in ways that benefit everyone.

|

||||

|

|

|

|||

|

|

@ -6,7 +6,7 @@ keywords: Axelera AI, Metis AIPU, Voyager SDK, Edge AI, YOLOv8, YOLO11, YOLO26,

|

|||

|

||||

# Axelera AI Export and Deployment

|

||||

|

||||

Ultralytics partners with [Axelera AI](https://www.axelera.ai/) to enable high-performance, energy-efficient inference on [Edge AI](https://www.ultralytics.com/glossary/edge-ai) devices. Export and deploy **Ultralytics YOLO models** directly to the **Metis® AIPU** using the **Voyager SDK**.

|

||||

Ultralytics partners with [Axelera AI](https://axelera.ai/) to enable high-performance, energy-efficient inference on [Edge AI](https://www.ultralytics.com/glossary/edge-ai) devices. Export and deploy **Ultralytics YOLO models** directly to the **Metis® AIPU** using the **Voyager SDK**.

|

||||

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -103,7 +103,7 @@ If you'd like to dive deeper into Google Colab, here are a few resources to guid

|

|||

|

||||

- **[Image Segmentation with Ultralytics YOLO26 on Google Colab](https://www.ultralytics.com/blog/image-segmentation-with-ultralytics-yolo11-on-google-colab)**: Explore how to perform image segmentation tasks using YOLO26 in the Google Colab environment, with practical examples using datasets like the Roboflow Carparts Segmentation Dataset.

|

||||

|

||||

- **[Curated Notebooks](https://colab.google/notebooks/)**: Here you can explore a series of organized and educational notebooks, each grouped by specific topic areas.

|

||||

- **[Curated Notebooks](https://developers.google.com/colab)**: Here you can explore a series of organized and educational notebooks, each grouped by specific topic areas.

|

||||

|

||||

- **[Google Colab's Medium Page](https://medium.com/google-colab)**: You can find tutorials, updates, and community contributions here that can help you better understand and utilize this tool.

|

||||

|

||||

|

|

@ -130,7 +130,7 @@ Google Colab offers several advantages for training YOLO26 models:

|

|||

- **Integration with Google Drive:** Easily store and access datasets and models.

|

||||

- **Collaboration:** Share notebooks with others and collaborate in real-time.

|

||||

|

||||

For more information on why you should use Google Colab, explore the [training guide](../modes/train.md) and visit the [Google Colab page](https://colab.google/notebooks/).

|

||||

For more information on why you should use Google Colab, explore the [training guide](../modes/train.md) and visit the [Google Colab page](https://developers.google.com/colab).

|

||||

|

||||

### How can I handle Google Colab session timeouts during YOLO26 training?

|

||||

|

||||

|

|

|

|||

|

|

@ -87,7 +87,7 @@ Then, you can import the needed packages.

|

|||

|

||||

For this tutorial, we will use a [marine litter dataset](https://www.kaggle.com/datasets/atiqishrak/trash-dataset-icra19) available on Kaggle. With this dataset, we will custom-train a YOLO26 model to detect and classify litter and biological objects in underwater images.

|

||||

|

||||

We can load the dataset directly into the notebook using the Kaggle API. First, create a free Kaggle account. Once you have created an account, you'll need to generate an API key. Directions for generating your key can be found in the [Kaggle API documentation](https://github.com/Kaggle/kaggle-api/blob/main/docs/README.md) under the section "API credentials".

|

||||

We can load the dataset directly into the notebook using the Kaggle API. First, create a free Kaggle account. Once you have created an account, you'll need to generate an API key. Directions for generating your key can be found in the [Kaggle API documentation](https://github.com/Kaggle/kaggle-cli/blob/main/docs/README.md) under the section "API credentials".

|

||||

|

||||

Copy and paste your Kaggle username and API key into the following code. Then run the code to install the API and load the dataset into Watsonx.

|

||||

|

||||

|

|

|

|||

|

|

@ -44,6 +44,10 @@ Currently, you can only export models that include the following tasks to IMX500

|

|||

- [Classification](https://docs.ultralytics.com/tasks/classify/)

|

||||

- [Instance segmentation](https://docs.ultralytics.com/tasks/segment/)

|

||||

|

||||

!!! note "Supported model variants"

|

||||

|

||||

IMX export is designed and benchmarked for **YOLOv8n** and **YOLO11n** (nano). Other architectures and model scales are not supported.

|

||||

|

||||

## Usage Examples

|

||||

|

||||

Export an Ultralytics YOLO11 model to IMX500 format and run inference with the exported model.

|

||||

|

|

@ -205,9 +209,9 @@ The export process will create an ONNX model for quantization validation, along

|

|||

├── dnnParams.xml

|

||||

├── labels.txt

|

||||

├── packerOut.zip

|

||||

├── yolo11n_imx.onnx

|

||||

├── yolo11n_imx_MemoryReport.json

|

||||

└── yolo11n_imx.pbtxt

|

||||

├── model_imx.onnx

|

||||

├── model_imx_MemoryReport.json

|

||||

└── model_imx.pbtxt

|

||||

```

|

||||

|

||||

=== "Pose Estimation"

|

||||

|

|

@ -217,9 +221,9 @@ The export process will create an ONNX model for quantization validation, along

|

|||

├── dnnParams.xml

|

||||

├── labels.txt

|

||||

├── packerOut.zip

|

||||

├── yolo11n-pose_imx.onnx

|

||||

├── yolo11n-pose_imx_MemoryReport.json

|

||||

└── yolo11n-pose_imx.pbtxt

|

||||

├── model_imx.onnx

|

||||

├── model_imx_MemoryReport.json

|

||||

└── model_imx.pbtxt

|

||||

```

|

||||

|

||||

=== "Classification"

|

||||

|

|

@ -229,9 +233,9 @@ The export process will create an ONNX model for quantization validation, along

|

|||

├── dnnParams.xml

|

||||

├── labels.txt

|

||||

├── packerOut.zip

|

||||

├── yolo11n-cls_imx.onnx

|

||||

├── yolo11n-cls_imx_MemoryReport.json

|

||||

└── yolo11n-cls_imx.pbtxt

|

||||

├── model_imx.onnx

|

||||

├── model_imx_MemoryReport.json

|

||||

└── model_imx.pbtxt

|

||||

```

|

||||

|

||||

=== "Instance Segmentation"

|

||||

|

|

@ -241,9 +245,9 @@ The export process will create an ONNX model for quantization validation, along

|

|||

├── dnnParams.xml

|

||||

├── labels.txt

|

||||

├── packerOut.zip

|

||||

├── yolo11n-seg_imx.onnx

|

||||

├── yolo11n-seg_imx_MemoryReport.json

|

||||

└── yolo11n-seg_imx.pbtxt

|

||||

├── model_imx.onnx

|

||||

├── model_imx_MemoryReport.json

|

||||

└── model_imx.pbtxt

|

||||

```

|

||||

|

||||

## Using IMX500 Export in Deployment

|

||||

|

|

|

|||

|

|

@ -214,7 +214,7 @@ These features help in tracking experiments, optimizing models, and collaboratin

|

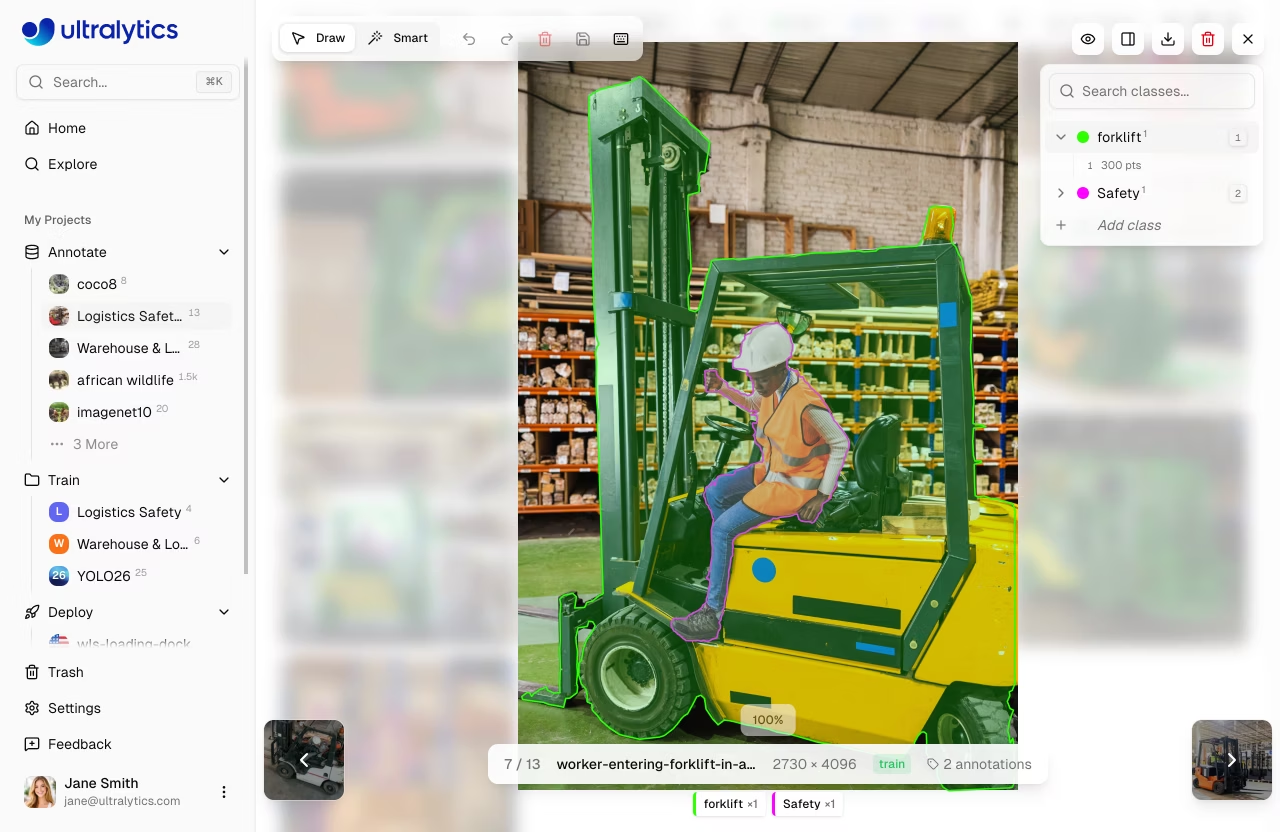

|||

After running your training script with W&B integration:

|

||||

|

||||

1. A link to your W&B dashboard will be provided in the console output.

|

||||

2. Click on the link or go to [wandb.ai](https://wandb.ai/) and log in to your account.

|

||||

2. Click on the link or go to [wandb.ai](https://wandb.ai/site) and log in to your account.

|

||||

3. Navigate to your project to view detailed metrics, visualizations, and model performance data.

|

||||

|

||||

The dashboard offers insights into your model's training process, allowing you to analyze and improve your YOLO26 models effectively.

|

||||

|

|

|

|||

|

|

@ -84,7 +84,7 @@ For organizations with advanced needs:

|

|||

- SLA guarantees (coming soon)

|

||||

- Enterprise support

|

||||

|

||||

See [Ultralytics Licensing](https://www.ultralytics.com/licensing) for Enterprise plan details.

|

||||

See [Ultralytics Licensing](https://www.ultralytics.com/license) for Enterprise plan details.

|

||||

|

||||

## Credits

|

||||

|

||||

|

|

|

|||

|

|

@ -237,7 +237,7 @@ From this tab you can:

|

|||

|

||||

- **Compare features** across Free, Pro, and Enterprise tiers

|

||||

- **Upgrade to Pro** to unlock more storage, models, team collaboration, and priority GPU access

|

||||

- **Review Enterprise** capabilities including SSO/SAML, RBAC, and commercial licensing — see [Ultralytics Licensing](https://www.ultralytics.com/licensing)

|

||||

- **Review Enterprise** capabilities including SSO/SAML, RBAC, and commercial licensing — see [Ultralytics Licensing](https://www.ultralytics.com/license)

|

||||

|

||||

See [Billing](billing.md) for detailed plan information, pricing, and upgrade instructions.

|

||||

|

||||

|

|

|

|||

|

|

@ -101,11 +101,11 @@ Enterprise plans include additional capabilities for organizations with advanced

|

|||

|

||||

!!! warning "License Expiration"

|

||||

|

||||

If your Enterprise license expires, workspace access is blocked until the license is renewed. See [Ultralytics Licensing](https://www.ultralytics.com/licensing) for details.

|

||||

If your Enterprise license expires, workspace access is blocked until the license is renewed. See [Ultralytics Licensing](https://www.ultralytics.com/license) for details.

|

||||

|

||||

### Getting Started with Enterprise

|

||||

|

||||

Enterprise plans are provisioned by the Ultralytics team. See [Ultralytics Licensing](https://www.ultralytics.com/licensing) for plan details. Once your enterprise configuration is set up, you'll receive a provisioning invite to accept as the team Owner, after which you can invite your team members.

|

||||

Enterprise plans are provisioned by the Ultralytics team. See [Ultralytics Licensing](https://www.ultralytics.com/license) for plan details. Once your enterprise configuration is set up, you'll receive a provisioning invite to accept as the team Owner, after which you can invite your team members.

|

||||

|

||||

## FAQ

|

||||

|

||||

|

|

@ -123,4 +123,4 @@ All team members share a single credit balance. The Owner and Admins can top up

|

|||

|

||||

### How do I upgrade from Pro to Enterprise?

|

||||

|

||||

Enterprise pricing and provisioning are handled directly by the Ultralytics team. See [Ultralytics Licensing](https://www.ultralytics.com/licensing) for plan details.

|

||||

Enterprise pricing and provisioning are handled directly by the Ultralytics team. See [Ultralytics Licensing](https://www.ultralytics.com/license) for plan details.

|

||||

|

|

|

|||

|

|

@ -2183,7 +2183,7 @@ Webhooks notify your server of Platform events via HTTP POST callbacks:

|

|||

|

||||

**All plans**: Training webhooks via the Python SDK (real-time metrics, completion notifications) work automatically on every plan -- no configuration required.

|

||||

|

||||

**Enterprise only**: Custom webhook endpoints that send HTTP POST callbacks to your own server URL require an Enterprise plan. See [Ultralytics Licensing](https://www.ultralytics.com/licensing) for details.

|

||||

**Enterprise only**: Custom webhook endpoints that send HTTP POST callbacks to your own server URL require an Enterprise plan. See [Ultralytics Licensing](https://www.ultralytics.com/license) for details.

|

||||

|

||||

---

|

||||

|

||||

|

|

|

|||

|

|

@ -434,18 +434,22 @@ Efficient annotation with keyboard shortcuts:

|

|||

|

||||

=== "General"

|

||||

|

||||

| Shortcut | Action |

|

||||

| ---------------------- | -------------------------- |

|

||||

| `Cmd/Ctrl+S` | Save annotations |

|

||||

| `Cmd/Ctrl+Z` | Undo |

|

||||

| `Cmd/Ctrl+Shift+Z` | Redo |

|

||||

| `Cmd/Ctrl+Y` | Redo (alternative) |

|

||||

| `Escape` | Save / Deselect / Exit |

|

||||

| `Delete` / `Backspace` | Delete selected annotation |

|

||||

| `1-9` | Select class 1-9 |

|

||||

| `Cmd/Ctrl+Scroll` | Zoom in/out |

|

||||

| `Shift+Click` | Multi-select annotations |

|

||||

| `Cmd/Ctrl+A` | Select all annotations |

|

||||

| Shortcut | Action |

|

||||

| ----------------------------- | ---------------------------- |

|

||||

| `Cmd/Ctrl+S` | Save annotations |

|

||||

| `Cmd/Ctrl+Z` | Undo |

|

||||

| `Cmd/Ctrl+Shift+Z` | Redo |

|

||||

| `Cmd/Ctrl+Y` | Redo (alternative) |

|

||||

| `Escape` | Save / Deselect / Exit |

|

||||

| `Delete` / `Backspace` | Delete selected annotation |

|

||||

| `1-9` | Select class 1-9 |

|

||||

| `Cmd/Ctrl+Scroll` | Zoom in/out |

|

||||

| `Cmd/Ctrl++` or `Cmd/Ctrl+=` | Zoom in |

|

||||

| `Cmd/Ctrl+-` | Zoom out |

|

||||

| `Cmd/Ctrl+0` | Reset to fit |

|

||||

| `Space+Drag` | Pan canvas when zoomed |

|

||||

| `Shift+Click` | Multi-select annotations |

|

||||

| `Cmd/Ctrl+A` | Select all annotations |

|

||||

|

||||

=== "Modes"

|

||||

|

||||

|

|

@ -456,15 +460,15 @@ Efficient annotation with keyboard shortcuts:

|

|||

|

||||

=== "Drawing"

|

||||

|

||||

| Shortcut | Action |

|

||||

| -------------- | --------------------------------------------------------- |

|

||||

| `Click+Drag` | Draw bounding box (detect/OBB) |

|

||||

| `Click` | Add polygon point (segment) / Place skeleton (pose) |

|

||||

| `Right-click` | Complete polygon / Add SAM negative point |

|

||||

| `Shift` + `click`/`right-click` | Place multiple SAM points before applying (auto-apply on) |

|

||||

| `A` | Toggle auto-apply (Smart mode) |

|

||||

| `Enter` | Complete polygon / Confirm pose / Save SAM annotation |

|

||||

| `Escape` | Cancel pose / Save SAM annotation / Deselect / Exit |

|

||||

| Shortcut | Action |

|

||||

| ------------------------------- | ----------------------------------------------------------- |

|

||||

| `Click+Drag` | Draw bounding box (detect/OBB) |

|

||||

| `Click` | Add polygon point (segment) / Place skeleton (pose) |

|

||||

| `Right-click` | Complete polygon / Add SAM negative point |

|

||||

| `Shift` + `click`/`right-click` | Place multiple SAM points before applying (auto-apply on) |

|

||||

| `A` | Toggle auto-apply (Smart mode) |

|

||||

| `Enter` | Complete polygon / Confirm pose / Save SAM annotation |

|

||||

| `Escape` | Cancel pose / Save SAM annotation / Deselect / Exit |

|

||||

|

||||

=== "Arrange (Z-Order)"

|

||||

|

||||

|

|

|

|||

|

|

@ -268,7 +268,9 @@ Click any image to open the fullscreen viewer with:

|

|||

- **Edit**: Enter annotation mode to add or modify labels

|

||||

- **Download**: Download the original image file

|

||||

- **Delete**: Delete the image from the dataset

|

||||

- **Zoom**: `Cmd/Ctrl+Scroll` to zoom in/out

|

||||

- **Zoom**: `Cmd/Ctrl+Scroll`, `Cmd/Ctrl++`, or `Cmd/Ctrl+=` to zoom in, and `Cmd/Ctrl+-` to zoom out

|

||||

- **Reset view**: `Cmd/Ctrl + 0` or the reset button to fit the image to the viewer

|

||||

- **Pan**: Hold `Space` and drag to pan the canvas when zoomed

|

||||

- **Pixel view**: Toggle pixelated rendering for close inspection

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -473,4 +473,4 @@ See [Models Export](train/models.md#export-model), the [Export mode guide](../mo

|

|||

|

||||

??? question "Can I use Platform models commercially?"

|

||||

|

||||

Free and Pro plans use the AGPL license. For commercial use without AGPL requirements, see [Ultralytics Licensing](https://www.ultralytics.com/licensing).

|

||||

Free and Pro plans use the AGPL license. For commercial use without AGPL requirements, see [Ultralytics Licensing](https://www.ultralytics.com/license).

|

||||

|

|

|

|||

|

|

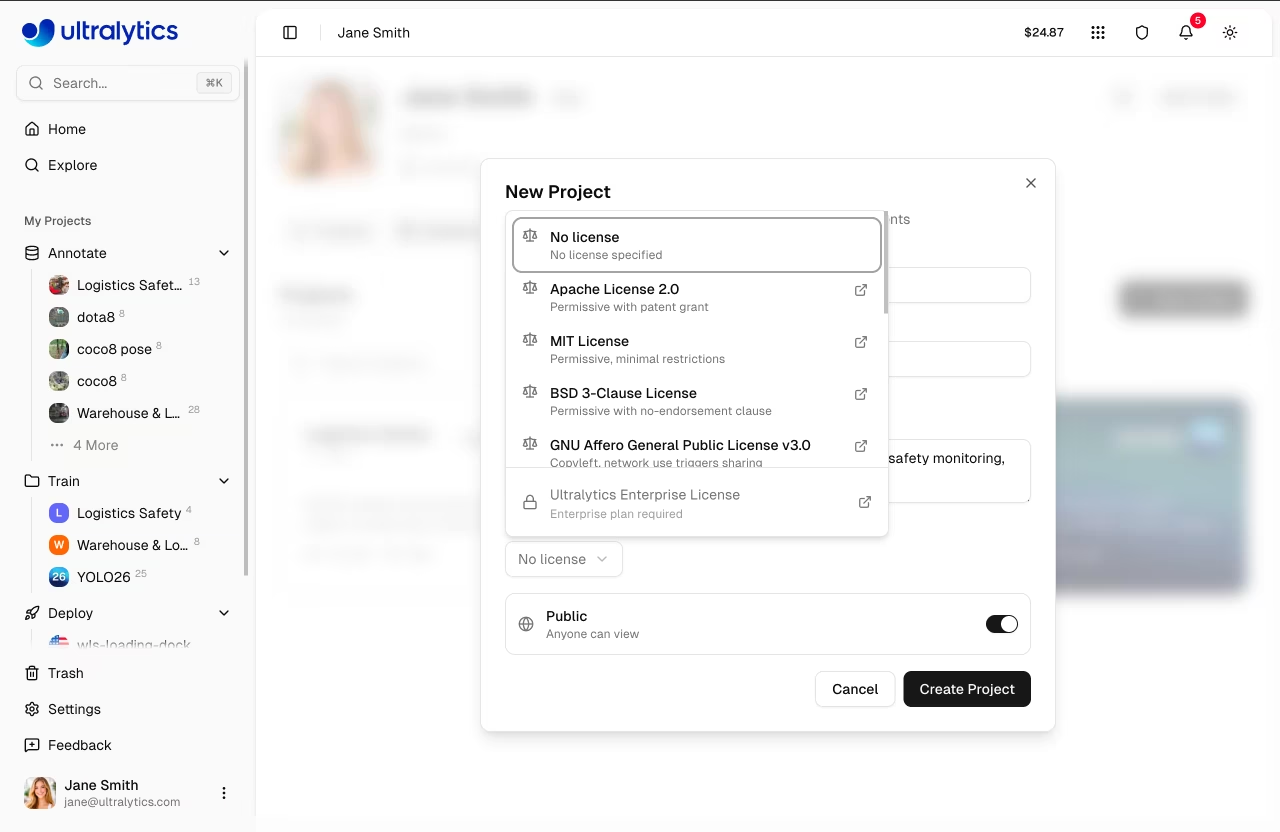

@ -40,7 +40,7 @@ Enter your project details:

|

|||

- **Name**: A descriptive name for your project (a random name is auto-generated)

|

||||

- **Description**: Optional notes about the project purpose

|

||||

- **Visibility**: Public (anyone can view) or Private (only you can access)

|

||||

- **License**: Optional license for your project (AGPL-3.0, Apache-2.0, MIT, GPL-3.0, BSD-3-Clause, LGPL-3.0, MPL-2.0, EUPL-1.1, Unlicense, Ultralytics-Enterprise, and more). The **Ultralytics-Enterprise** license is for commercial use without AGPL requirements and is available with an Enterprise plan — see [Ultralytics Licensing](https://www.ultralytics.com/licensing).

|

||||

- **License**: Optional license for your project (AGPL-3.0, Apache-2.0, MIT, GPL-3.0, BSD-3-Clause, LGPL-3.0, MPL-2.0, EUPL-1.1, Unlicense, Ultralytics-Enterprise, and more). The **Ultralytics-Enterprise** license is for commercial use without AGPL requirements and is available with an Enterprise plan — see [Ultralytics Licensing](https://www.ultralytics.com/license).

|

||||

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -237,7 +237,7 @@ You can then pass this file as `cfg=default_copy.yaml` along with any additional

|

|||

|

||||

## Solutions Commands

|

||||

|

||||

Ultralytics provides ready-to-use solutions for common computer vision applications through the CLI. These solutions simplify the implementation of complex tasks like object counting, workout monitoring, and queue management.

|

||||

Ultralytics provides ready-to-use solutions for common computer vision applications through the CLI. The `yolo solutions` command exposes object counting, cropping, blurring, workout monitoring, heatmaps, instance segmentation, VisionEye, speed estimation, queue management, analytics, Streamlit inference, and zone-based tracking — see the [Solutions](../solutions/index.md) page for the full catalog. Run `yolo solutions help` to list every supported solution and its arguments.

|

||||

|

||||

!!! example

|

||||

|

||||

|

|

@ -250,6 +250,26 @@ Ultralytics provides ready-to-use solutions for common computer vision applicati

|

|||

yolo solutions count source="path/to/video.mp4" # specify video file path

|

||||

```

|

||||

|

||||

=== "Crop"

|

||||

|

||||

Crop detected objects and save them to disk:

|

||||

|

||||

```bash

|

||||

yolo solutions crop show=True

|

||||

yolo solutions crop source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions crop classes="[0, 2]" # crop only selected classes

|

||||

```

|

||||

|

||||

=== "Blur"

|

||||

|

||||

Blur detected objects in a video for privacy or to highlight other regions:

|

||||

|

||||

```bash

|

||||

yolo solutions blur show=True

|

||||

yolo solutions blur source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions blur classes="[0, 5]" # blur only selected classes

|

||||

```

|

||||

|

||||

=== "Workout"

|

||||

|

||||

Monitor workout exercises using a pose model:

|

||||

|

|

@ -259,8 +279,49 @@ Ultralytics provides ready-to-use solutions for common computer vision applicati

|

|||

yolo solutions workout source="path/to/video.mp4" # specify video file path

|

||||

|

||||

# Use keypoints for ab-workouts

|

||||

yolo solutions workout kpts=[5, 11, 13] # left side

|

||||

yolo solutions workout kpts=[6, 12, 14] # right side

|

||||

yolo solutions workout kpts="[5, 11, 13]" # left side

|

||||

yolo solutions workout kpts="[6, 12, 14]" # right side

|

||||

```

|

||||

|

||||

=== "Heatmap"

|

||||

|

||||

Generate a heatmap showing object density and movement patterns:

|

||||

|

||||

```bash

|

||||

yolo solutions heatmap show=True

|

||||

yolo solutions heatmap source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions heatmap colormap=cv2.COLORMAP_INFERNO # customize colormap

|

||||

yolo solutions heatmap region="[(20, 400), (1080, 400), (1080, 360), (20, 360)]" # restrict heatmap to a region

|

||||

```

|

||||

|

||||

=== "Isegment"

|

||||

|

||||

Run instance segmentation with tracking on a video:

|

||||

|

||||

```bash

|

||||

yolo solutions isegment show=True

|

||||

yolo solutions isegment source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions isegment classes="[0, 5]" # segment only selected classes

|

||||

```

|

||||

|

||||

=== "VisionEye"

|

||||

|

||||

Draw object-to-observer sightlines with VisionEye:

|

||||

|

||||

```bash

|

||||

yolo solutions visioneye show=True

|

||||

yolo solutions visioneye source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions visioneye classes="[0, 5]" # monitor only selected classes

|

||||

```

|

||||

|

||||

=== "Speed"

|

||||

|

||||

Estimate the speed of moving objects in a video:

|

||||

|

||||

```bash

|

||||

yolo solutions speed show=True

|

||||

yolo solutions speed source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions speed meter_per_pixel=0.05 # set scale for real-world units

|

||||

```

|

||||

|

||||

=== "Queue"

|

||||

|

|

@ -273,6 +334,18 @@ Ultralytics provides ready-to-use solutions for common computer vision applicati

|

|||

yolo solutions queue region="[(20, 400), (1080, 400), (1080, 360), (20, 360)]" # configure queue coordinates

|

||||

```

|

||||

|

||||

=== "Analytics"

|

||||

|

||||

Generate analytical charts (line, bar, area, or pie) from tracked detections:

|

||||

|

||||

```bash

|

||||

yolo solutions analytics show=True

|

||||

yolo solutions analytics source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions analytics analytics_type="pie" show=True

|

||||

yolo solutions analytics analytics_type="bar" show=True

|

||||

yolo solutions analytics analytics_type="area" show=True

|

||||

```

|

||||

|

||||

=== "Inference"

|

||||

|

||||

Perform object detection, instance segmentation, or pose estimation in a web browser using Streamlit:

|

||||

|

|

@ -282,6 +355,44 @@ Ultralytics provides ready-to-use solutions for common computer vision applicati

|

|||

yolo solutions inference model="path/to/model.pt" # use custom model

|

||||

```

|

||||

|

||||

=== "TrackZone"

|

||||

|

||||

Track objects only inside a specified polygonal zone:

|

||||

|

||||

```bash

|

||||

yolo solutions trackzone show=True

|

||||

yolo solutions trackzone source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions trackzone region="[(150, 150), (1130, 150), (1130, 570), (150, 570)]" # configure zone coordinates

|

||||

```

|

||||

|

||||

=== "Region"

|

||||

|

||||

Count objects inside specific polygonal regions:

|

||||

|

||||

```bash

|

||||

yolo solutions region show=True

|

||||

yolo solutions region source="path/to/video.mp4" # specify video file path

|

||||

yolo solutions region region="[(20, 400), (1080, 400), (1080, 360), (20, 360)]" # configure region coordinates

|

||||

```

|

||||

|

||||

=== "Security"

|

||||

|

||||

Run security alarm monitoring with object detection:

|

||||

|

||||

```bash

|

||||

yolo solutions security show=True

|

||||

yolo solutions security source="path/to/video.mp4" # specify video file path

|

||||

```

|

||||

|

||||

=== "Parking"

|

||||

|

||||

Monitor parking lot occupancy using pre-defined zones:

|

||||

|

||||

```bash

|

||||

yolo solutions parking source="path/to/video.mp4" json_file="bounding_boxes.json" # requires pre-built JSON

|

||||

yolo solutions parking source="path/to/video.mp4" json_file="bounding_boxes.json" model="yolo26n.pt"

|

||||

```

|

||||

|

||||

=== "Help"

|

||||

|

||||

View available solutions and their options:

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ keywords: YOLOv5, Google Cloud Platform, GCP, Deep Learning VM, object detection

|

|||

|

||||

Embarking on the journey of [artificial intelligence (AI)](https://www.ultralytics.com/glossary/artificial-intelligence-ai) and [machine learning (ML)](https://www.ultralytics.com/glossary/machine-learning-ml) can be exhilarating, especially when you leverage the power and flexibility of a [cloud computing](https://www.ultralytics.com/glossary/cloud-computing) platform. Google Cloud Platform (GCP) offers robust tools tailored for ML enthusiasts and professionals alike. One such tool is the Deep Learning VM, preconfigured for data science and ML tasks. In this tutorial, we will navigate the process of setting up [Ultralytics YOLOv5](../../models/yolov5.md) on a [GCP Deep Learning VM](https://docs.cloud.google.com/deep-learning-vm/docs). Whether you're taking your first steps in ML or you're a seasoned practitioner, this guide provides a clear pathway to implementing [object detection](https://www.ultralytics.com/glossary/object-detection) models powered by YOLOv5.

|

||||

|

||||

🆓 Plus, if you're a new GCP user, you're in luck with a [$300 free credit offer](https://cloud.google.com/free/docs/free-cloud-features#free-trial) to kickstart your projects.

|

||||

🆓 Plus, if you're a new GCP user, you're in luck with a [$300 free credit offer](https://docs.cloud.google.com/free/docs/free-cloud-features) to kickstart your projects.

|

||||

|

||||

In addition to GCP, explore other accessible quickstart options for YOLOv5, like our [Google Colab Notebook](https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb) <img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"> for a browser-based experience, or the scalability of [Amazon AWS](./aws_quickstart_tutorial.md). Furthermore, container aficionados can utilize our official Docker image available on [Docker Hub](https://hub.docker.com/r/ultralytics/yolov5) <img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker Pulls"> for an encapsulated environment, following our [Docker Quickstart Guide](../../guides/docker-quickstart.md).

|

||||

|

||||

|

|

|

|||

|

|

@ -39,7 +39,7 @@ Developing a custom [object detection](https://docs.ultralytics.com/tasks/detect

|

|||

|

||||

Ultralytics provides two licensing options to accommodate diverse usage scenarios:

|

||||

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-v3) open-source license is ideal for students, researchers, and enthusiasts passionate about open collaboration and knowledge sharing. It requires derived works to be shared under the same license. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for full details.

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/license/agpl-3.0) open-source license is ideal for students, researchers, and enthusiasts passionate about open collaboration and knowledge sharing. It requires derived works to be shared under the same license. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for full details.

|

||||

- **Enterprise License**: Designed for commercial applications, this license permits the seamless integration of Ultralytics software and AI models into commercial products and services without the open-source stipulations of AGPL-3.0. If your project requires commercial deployment, request an [Enterprise License](https://www.ultralytics.com/license).

|

||||

|

||||

Explore our licensing options further on the [Ultralytics Licensing](https://www.ultralytics.com/license) page.

|

||||

|

|

|

|||

|

|

@ -16,7 +16,7 @@ pip install -r requirements.txt

|

|||

|

||||

### Installing `onnxruntime-gpu` (Optional)

|

||||

|

||||

For accelerated inference using an NVIDIA GPU, install the **`onnxruntime-gpu`** package. Ensure you have the correct [NVIDIA drivers](https://www.nvidia.com/Download/index.aspx) and [CUDA toolkit](https://developer.nvidia.com/cuda-toolkit) installed first. Consult the official [ONNX Runtime GPU documentation](https://onnxruntime.ai/docs/execution-providers/CUDA-ExecutionProvider.html) for detailed compatibility information and setup instructions.

|

||||

For accelerated inference using an NVIDIA GPU, install the **`onnxruntime-gpu`** package. Ensure you have the correct [NVIDIA drivers](https://www.nvidia.com/Download/index.aspx) and [CUDA toolkit](https://developer.nvidia.com/cuda/toolkit) installed first. Consult the official [ONNX Runtime GPU documentation](https://onnxruntime.ai/docs/execution-providers/CUDA-ExecutionProvider.html) for detailed compatibility information and setup instructions.

|

||||

|

||||

```bash

|

||||

pip install onnxruntime-gpu

|

||||

|

|

|

|||

|

|

@ -12,7 +12,7 @@ This repository provides a [Rust](https://rust-lang.org/) demo showcasing key [U

|

|||

- **Extensive Model Compatibility**: Supports a wide range of YOLO versions including [YOLOv5](https://docs.ultralytics.com/models/yolov5/), [YOLOv6](https://docs.ultralytics.com/models/yolov6/), [YOLOv7](https://docs.ultralytics.com/models/yolov7/), [YOLOv8](https://docs.ultralytics.com/models/yolov8/), [YOLOv9](https://docs.ultralytics.com/models/yolov9/), [YOLOv10](https://docs.ultralytics.com/models/yolov10/), [YOLO11](https://docs.ultralytics.com/models/yolo11/), [YOLO-World](https://docs.ultralytics.com/models/yolo-world/), [RT-DETR](https://docs.ultralytics.com/models/rtdetr/), and others.

|

||||

- **Versatile Task Coverage**: Includes examples for `Classification`, `Segmentation`, `Detection`, `Pose`, and `OBB`.

|

||||

- **Precision Flexibility**: Works seamlessly with `FP16` and `FP32` precision [ONNX models](https://docs.ultralytics.com/integrations/onnx/).

|

||||

- **Execution Providers**: Accelerated support for `CPU`, [CUDA](https://developer.nvidia.com/cuda-toolkit), [CoreML](https://developer.apple.com/documentation/coreml), and [TensorRT](https://docs.ultralytics.com/integrations/tensorrt/).

|

||||

- **Execution Providers**: Accelerated support for `CPU`, [CUDA](https://developer.nvidia.com/cuda/toolkit), [CoreML](https://developer.apple.com/documentation/coreml), and [TensorRT](https://docs.ultralytics.com/integrations/tensorrt/).

|

||||

- **Dynamic Input Shapes**: Dynamically adjusts to variable `batch`, `width`, and `height` dimensions for flexible model input.

|

||||

- **Flexible Data Loading**: The `DataLoader` component handles images, folders, videos, and real-time video streams.

|

||||

- **Real-Time Display and Video Export**: The `Viewer` provides real-time frame visualization and video export functions, similar to OpenCV’s `imshow()` and `imwrite()`.

|

||||

|

|

@ -45,7 +45,7 @@ This repository provides a [Rust](https://rust-lang.org/) demo showcasing key [U

|

|||

|

||||

### 2. [Optional] Install CUDA, CuDNN, and TensorRT

|

||||

|

||||

- The CUDA execution provider requires [NVIDIA CUDA Toolkit](https://developer.nvidia.com/cuda-toolkit) version `12.x`.

|

||||

- The CUDA execution provider requires [NVIDIA CUDA Toolkit](https://developer.nvidia.com/cuda/toolkit) version `12.x`.

|

||||

- The TensorRT execution provider requires both CUDA `12.x` and [NVIDIA TensorRT](https://developer.nvidia.com/tensorrt) `10.x`. Ensure [cuDNN](https://developer.nvidia.com/cudnn) is also correctly installed.

|

||||

|

||||

### 3. [Optional] Install ffmpeg

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@ This example provides a practical guide on performing inference with [Ultralytic

|

|||

|

||||

- **Deployment-Friendly:** Well-suited for deployment in industrial and production environments.

|

||||

- **Performance:** Offers faster [inference latency](https://www.ultralytics.com/glossary/inference-latency) compared to OpenCV's DNN module on both CPU and [GPU](https://www.ultralytics.com/glossary/gpu-graphics-processing-unit).

|

||||

- **Acceleration:** Supports FP32 and [FP16 (Half Precision)](https://www.ultralytics.com/glossary/half-precision) inference acceleration using [NVIDIA CUDA](https://developer.nvidia.com/cuda-toolkit).

|

||||

- **Acceleration:** Supports FP32 and [FP16 (Half Precision)](https://www.ultralytics.com/glossary/half-precision) inference acceleration using [NVIDIA CUDA](https://developer.nvidia.com/cuda/toolkit).

|

||||

|

||||

## ☕ Note

|

||||

|

||||

|

|

@ -85,7 +85,7 @@ Ensure you have the following dependencies installed:

|

|||

| [OpenCV](https://opencv.org/releases/) | >=4.0.0 | Required for image loading and preprocessing. |

|

||||

| C++ Compiler | C++17 Support | Needed for features like `<filesystem>`. ([GCC](https://gcc.gnu.org/), [Clang](https://clang.llvm.org/), [MSVC](https://visualstudio.microsoft.com/vs/features/cplusplus/)) |

|

||||

| [CMake](https://cmake.org/download/) | >=3.18 | Cross-platform build system generator. Version 3.18+ recommended for better CUDA support discovery. |

|

||||

| [CUDA Toolkit](https://developer.nvidia.com/cuda-toolkit) (Optional) | >=11.4, <12.0 | Required for GPU acceleration via ONNX Runtime's CUDA Execution Provider. **Must be CUDA 11.x**. |

|

||||

| [CUDA Toolkit](https://developer.nvidia.com/cuda/toolkit) (Optional) | >=11.4, <12.0 | Required for GPU acceleration via ONNX Runtime's CUDA Execution Provider. **Must be CUDA 11.x**. |

|

||||

| [cuDNN](https://developer.nvidia.com/cudnn) (CUDA required) | =8.x | Required by CUDA Execution Provider. **Must be cuDNN 8.x** compatible with your CUDA 11.x version. |

|

||||

|

||||

**Important Notes:**

|

||||

|

|

|

|||

|

|

@ -35,7 +35,7 @@ Please follow the official Rust installation guide: [https://www.rust-lang.org/t

|

|||

|

||||

### 3. [Optional] Install CUDA & CuDNN & TensorRT

|

||||

|

||||

- The CUDA execution provider requires [CUDA](https://developer.nvidia.com/cuda-toolkit) v11.6+.

|

||||

- The CUDA execution provider requires [CUDA](https://developer.nvidia.com/cuda/toolkit) v11.6+.

|

||||

- The TensorRT execution provider requires CUDA v11.4+ and [TensorRT](https://developer.nvidia.com/tensorrt) v8.4+. You may also need [cuDNN](https://developer.nvidia.com/cudnn).

|

||||

|

||||

## ▶️ Get Started

|

||||

|

|

|

|||

|

|

@ -54,7 +54,7 @@ We welcome contributions to improve this demo! If you encounter bugs, have featu

|

|||

|

||||

## 📄 License

|

||||

|

||||

This project is licensed under the AGPL-3.0 License. For detailed information, please see the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file or read the full [AGPL-3.0 license text](https://opensource.org/license/agpl-v3).

|

||||

This project is licensed under the AGPL-3.0 License. For detailed information, please see the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file or read the full [AGPL-3.0 license text](https://opensource.org/license/agpl-3.0).

|

||||

|

||||

## 🙏 Acknowledgments

|

||||

|

||||

|

|

|

|||

|

|

@ -3,6 +3,49 @@

|

|||

import shutil

|

||||

from pathlib import Path

|

||||

|

||||

import pytest

|

||||

|

||||

|

||||

@pytest.fixture(scope="session")

|

||||

def solution_assets():

|

||||

"""Session-scoped fixture to cache solution test assets.

|

||||

|

||||

Lazily downloads solution assets into a persistent directory (WEIGHTS_DIR/solution_assets) and returns a callable

|

||||

that resolves asset names to cached paths.

|

||||

"""

|

||||

from ultralytics.utils import ASSETS_URL, WEIGHTS_DIR

|

||||

from ultralytics.utils.downloads import safe_download

|

||||

|

||||

# Use persistent directory alongside weights

|

||||

cache_dir = WEIGHTS_DIR / "solution_assets"

|

||||

cache_dir.mkdir(parents=True, exist_ok=True)

|

||||

|

||||

# Define all assets needed for solution tests

|

||||

assets = {

|

||||

# Videos

|

||||

"demo_video": "solutions_ci_demo.mp4",

|

||||

"crop_video": "decelera_landscape_min.mov",

|

||||

"pose_video": "solution_ci_pose_demo.mp4",

|

||||

"parking_video": "solution_ci_parking_demo.mp4",

|

||||

"vertical_video": "solution_vertical_demo.mp4",

|

||||

# Parking manager files

|

||||

"parking_areas": "solution_ci_parking_areas.json",

|

||||

"parking_model": "solutions_ci_parking_model.pt",

|

||||

}

|

||||

|

||||

asset_paths = {}

|

||||

|

||||

def get_asset(name):

|

||||

"""Return the cached path for a named solution asset, downloading it on first use."""

|

||||

if name not in asset_paths:

|

||||

asset_path = cache_dir / assets[name]

|

||||

if not asset_path.exists():

|

||||

safe_download(url=f"{ASSETS_URL}/{asset_path.name}", dir=cache_dir)

|

||||

asset_paths[name] = asset_path

|

||||

return asset_paths[name]

|

||||

|

||||

return get_asset

|

||||

|

||||

|

||||

def pytest_addoption(parser):

|

||||

"""Add custom command-line options to pytest."""

|

||||

|

|

@ -55,5 +98,5 @@ def pytest_terminal_summary(terminalreporter, exitstatus, config):

|

|||

|

||||

# Remove directories

|

||||

models = [path for x in {"*.mlpackage", "*_openvino_model"} for path in WEIGHTS_DIR.rglob(x)]

|

||||

for directory in [WEIGHTS_DIR / "path with spaces", *models]:

|

||||

for directory in [WEIGHTS_DIR / "solution_assets", WEIGHTS_DIR / "path with spaces", *models]:

|

||||

shutil.rmtree(directory, ignore_errors=True)

|

||||

|

|

|

|||

|

|

@ -25,7 +25,7 @@ def test_export():

|

|||

"""Test model exporting functionality by adding a callback and verifying its execution."""

|

||||

exporter = Exporter()

|

||||

exporter.add_callback("on_export_start", test_func)

|

||||

assert test_func in exporter.callbacks["on_export_start"], "callback test failed"

|

||||

assert test_func in exporter.callbacks["on_export_start"], "on_export_start callback not registered"

|

||||

f = exporter(model=YOLO("yolo26n.yaml").model)