diff --git a/docs/en/datasets/detect/open-images-v7.md b/docs/en/datasets/detect/open-images-v7.md

index c63d5894db..047fc4255a 100644

--- a/docs/en/datasets/detect/open-images-v7.md

+++ b/docs/en/datasets/detect/open-images-v7.md

@@ -146,7 +146,7 @@ Illustrations of the dataset help provide insights into its richness:

- **Open Images V7**: This image exemplifies the depth and detail of annotations available, including bounding boxes, relationships, and segmentation masks.

-Researchers can gain invaluable insights into the array of computer vision challenges that the dataset addresses, from basic object detection to intricate relationship identification. The [diversity of annotations](https://docs.ultralytics.com/datasets/explorer/) makes Open Images V7 particularly valuable for developing models that can understand complex visual scenes.

+Researchers can gain invaluable insights into the array of computer vision challenges that the dataset addresses, from basic object detection to intricate relationship identification. The diversity of annotations makes Open Images V7 particularly valuable for developing models that can understand complex visual scenes.

## Citations and Acknowledgments

diff --git a/docs/en/datasets/pose/index.md b/docs/en/datasets/pose/index.md

index ecbb2ceffd..d43f6f6d2d 100644

--- a/docs/en/datasets/pose/index.md

+++ b/docs/en/datasets/pose/index.md

@@ -195,7 +195,7 @@ To add your dataset:

### What is the purpose of the dataset YAML file in Ultralytics YOLO?

-The dataset YAML file in Ultralytics YOLO defines the dataset and model configuration for training. It specifies paths to training, validation, and test images, keypoint shapes, class names, and other configuration options. This structured format helps streamline [dataset management](https://docs.ultralytics.com/datasets/explorer/) and model training. Here is an example YAML format:

+The dataset YAML file in Ultralytics YOLO defines the dataset and model configuration for training. It specifies paths to training, validation, and test images, keypoint shapes, class names, and other configuration options. This structured format helps streamline dataset management and model training. Here is an example YAML format:

```yaml

--8<-- "ultralytics/cfg/datasets/coco8-pose.yaml"

diff --git a/docs/en/guides/preprocessing_annotated_data.md b/docs/en/guides/preprocessing_annotated_data.md

index 9014e8d707..c8d3822727 100644

--- a/docs/en/guides/preprocessing_annotated_data.md

+++ b/docs/en/guides/preprocessing_annotated_data.md

@@ -130,17 +130,9 @@ Common tools for visualizations include:

- **Scatter Plots**: Helpful for exploring relationships between image features or annotations.

- **Heatmaps**: Effective for visualizing the distribution of pixel intensities or the spatial distribution of annotated features within images.

-### Using Ultralytics Explorer for EDA

+### Using Ultralytics Platform for EDA

-!!! warning "Community Note"

-

- As of **`ultralytics>=8.3.10`**, Ultralytics Explorer support is deprecated. Similar (and expanded) dataset exploration features are available in [Ultralytics Platform](https://platform.ultralytics.com/).

-

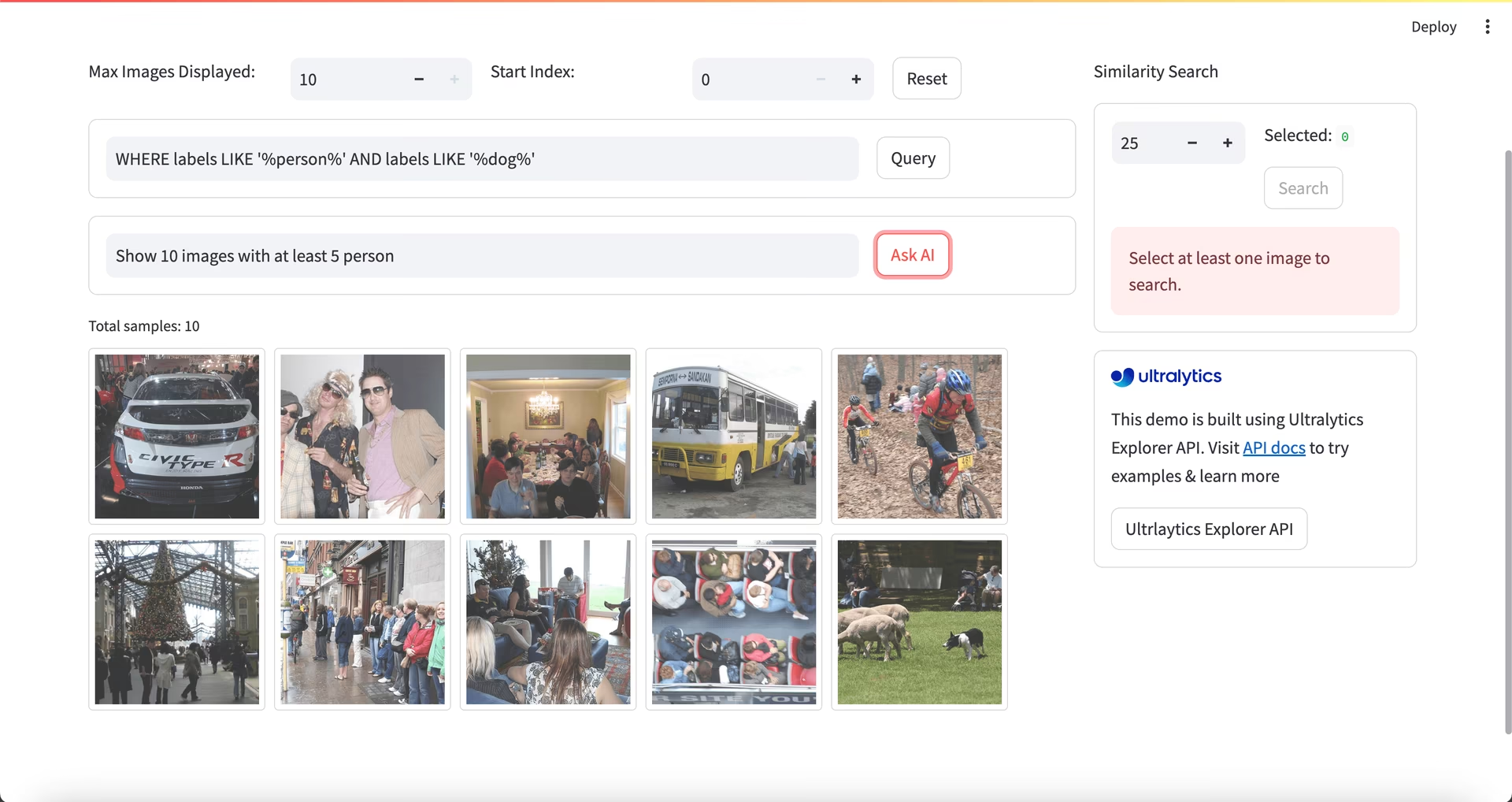

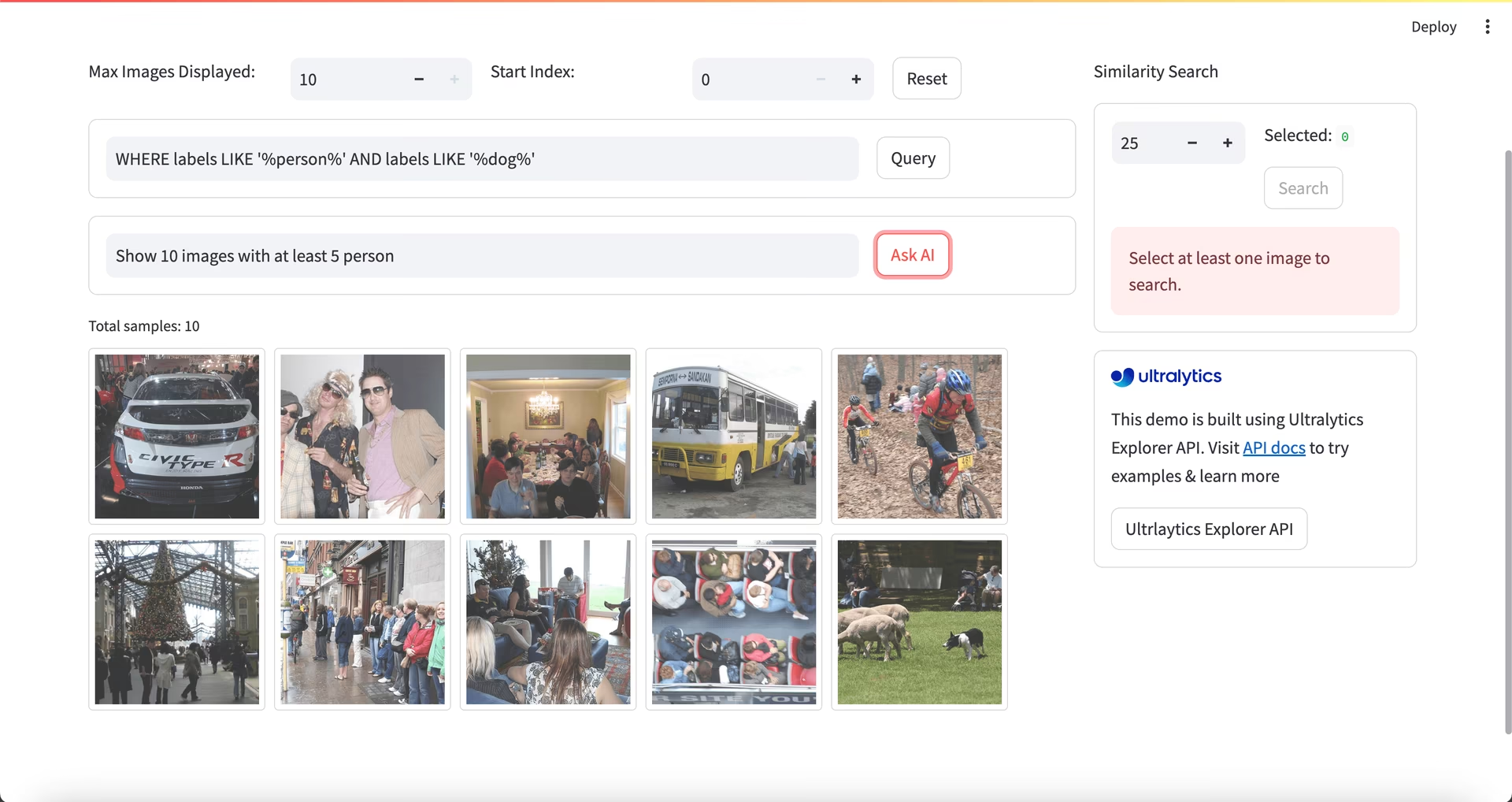

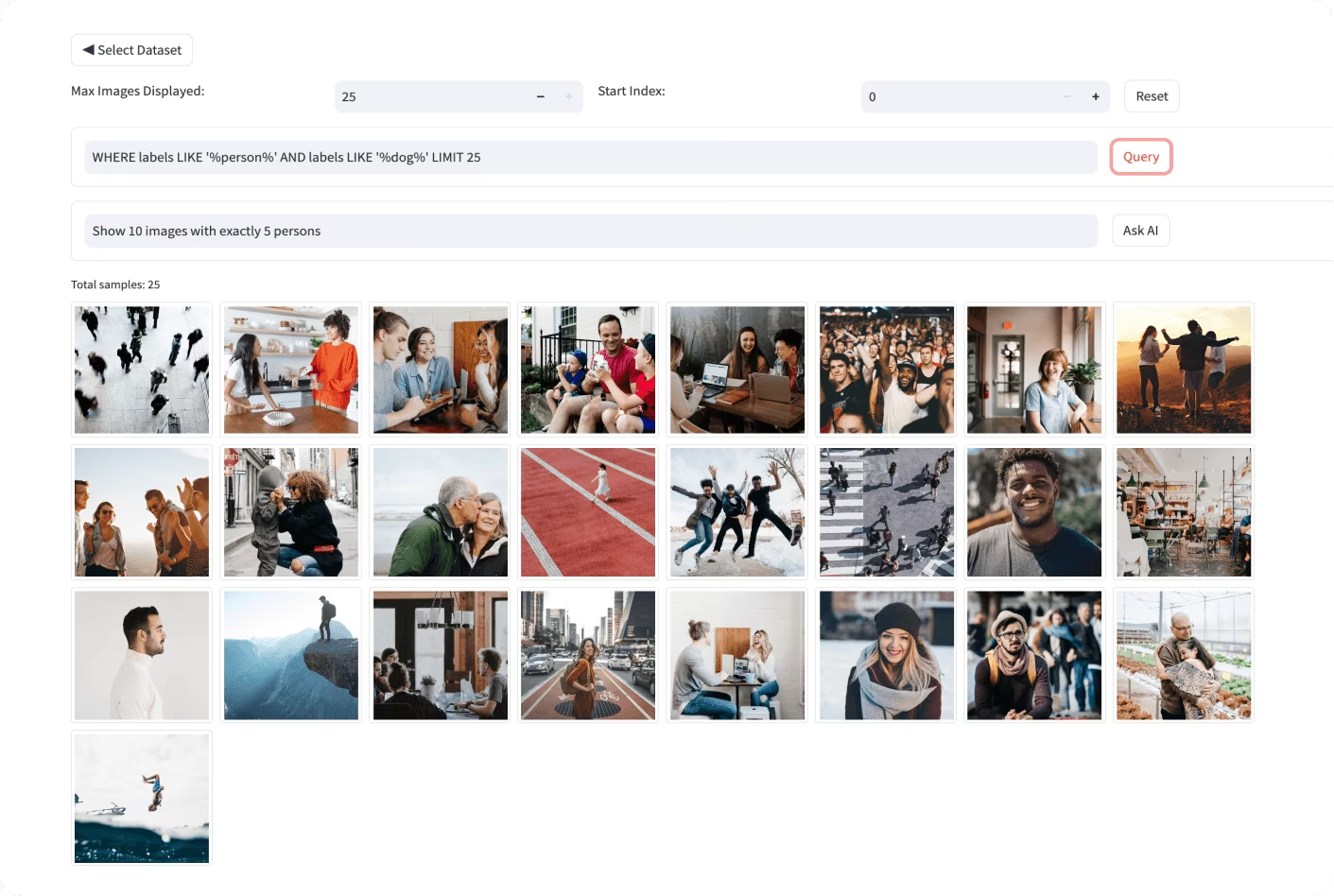

-For a more advanced approach to EDA, you can use the Ultralytics Explorer tool. It offers robust capabilities for exploring computer vision datasets. By supporting semantic search, SQL queries, and vector similarity search, the tool makes it easy to analyze and understand your data. With Ultralytics Explorer, you can create [embeddings](https://www.ultralytics.com/glossary/embeddings) for your dataset to find similar images, run SQL queries for detailed analysis, and perform semantic searches, all through a user-friendly graphical interface.

-

-

-  -

-

+For a no-code approach to EDA, upload your dataset to [Ultralytics Platform](https://platform.ultralytics.com/). The dataset's `Charts` tab automatically generates visualizations like those described above, including split distribution, top class counts, image width/height histograms, and 2D heatmaps of annotation positions and image dimensions. The `Images` tab lets you browse your data in grid, compact, or table views with annotation overlays, making it easy to spot mislabeled examples or unbalanced classes without writing a single line of code.

## Reach Out and Connect
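As a rough local counterpart to the class-count and annotation-position charts described in this change, here is a minimal Python sketch. It is not part of the Ultralytics package: `class_counts` and `center_heatmap` are hypothetical helpers, and the sketch assumes detection-style YOLO label lines of the form `class x_center y_center width height` with coordinates normalized to `[0, 1]`. Segmentation labels store polygon points instead of box centers, so they would need different handling.

```python
from collections import Counter


def class_counts(label_lines):
    """Count annotation instances per class ID from YOLO detection label lines."""
    counts = Counter()
    for line in label_lines:
        parts = line.split()
        if len(parts) >= 5:  # assumed layout: class x_center y_center width height
            counts[int(parts[0])] += 1
    return counts


def center_heatmap(label_lines, grid=4):
    """Bin normalized box centers into a grid x grid count matrix (rows = y, cols = x)."""
    heat = [[0] * grid for _ in range(grid)]
    for line in label_lines:
        parts = line.split()
        if len(parts) < 5:
            continue  # skip blank or malformed lines
        x, y = float(parts[1]), float(parts[2])
        # Clamp so a center at exactly 1.0 lands in the last cell instead of overflowing
        col = min(int(x * grid), grid - 1)
        row = min(int(y * grid), grid - 1)
        heat[row][col] += 1
    return heat


labels = ["0 0.5 0.5 0.2 0.3", "1 0.1 0.9 0.05 0.05", "0 0.95 0.05 0.1 0.1"]
print(class_counts(labels))
print(center_heatmap(labels, grid=2))
```

A heavily skewed `Counter` points to class imbalance, and a heatmap concentrated in a few cells suggests objects rarely appear elsewhere in the frame. Either matrix can be passed to a plotting library such as Matplotlib for a visual version.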

diff --git a/docs/en/guides/steps-of-a-cv-project.md b/docs/en/guides/steps-of-a-cv-project.md

index 4ecdb8bfad..f688d4979c 100644

--- a/docs/en/guides/steps-of-a-cv-project.md

+++ b/docs/en/guides/steps-of-a-cv-project.md

@@ -114,11 +114,7 @@ After splitting your data, you can perform data augmentation by applying transfo

Libraries like [OpenCV](https://www.ultralytics.com/glossary/opencv), [Albumentations](../integrations/albumentations.md), and [TensorFlow](https://www.ultralytics.com/glossary/tensorflow) offer flexible augmentation functions that you can use. Additionally, some libraries, such as Ultralytics, have [built-in augmentation settings](../modes/train.md) directly within its model training function, simplifying the process.

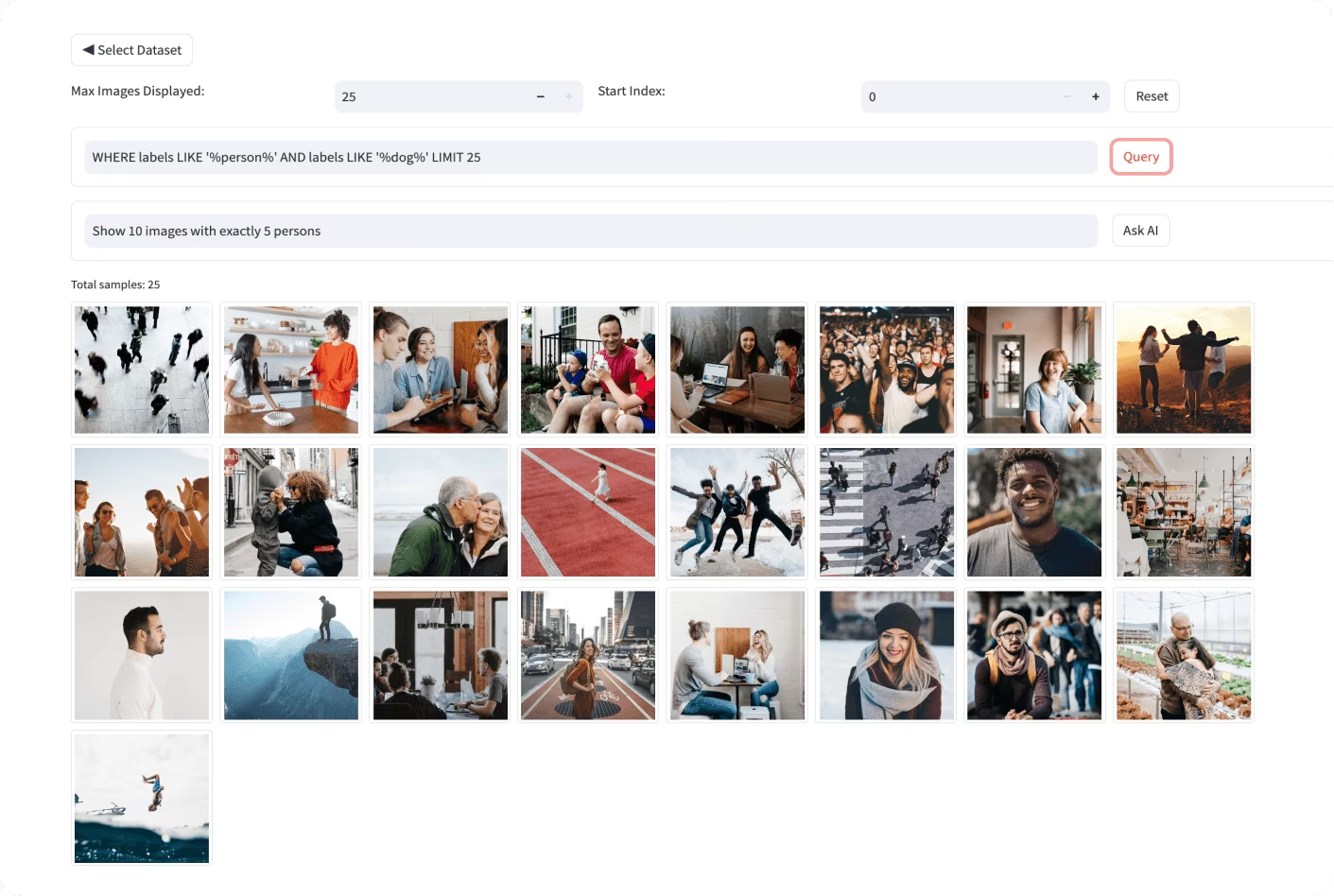

-To understand your data better, you can use tools like [Matplotlib](https://matplotlib.org/) or [Seaborn](https://seaborn.pydata.org/) to visualize the images and analyze their distribution and characteristics. Visualizing your data helps identify patterns, anomalies, and the effectiveness of your augmentation techniques. You can also use [Ultralytics Explorer](../datasets/explorer/index.md), a tool for exploring computer vision datasets with semantic search, SQL queries, and vector similarity search.

-

-

-  -

-

+To understand your data better, you can use tools like [Matplotlib](https://matplotlib.org/) or [Seaborn](https://seaborn.pydata.org/) to visualize the images and analyze their distribution and characteristics. Visualizing your data helps identify patterns, anomalies, and the effectiveness of your augmentation techniques. The [Ultralytics Platform](https://platform.ultralytics.com/) `Charts` tab can surface many of these insights without any code by automatically generating split distribution, class counts, image-dimension histograms, and annotation-position heatmaps for every uploaded dataset.

By properly [understanding, splitting, and augmenting your data](./preprocessing_annotated_data.md), you can develop a well-trained, validated, and tested model that performs well in real-world applications.
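To approximate the image-dimension histograms locally, one option is to bucket `(width, height)` pairs into fixed-size bins. The helper `dimension_histogram` below is hypothetical and not an Ultralytics API; it assumes you have already collected image sizes, for example with Pillow's `Image.open(path).size`, which returns `(width, height)`.

```python
from collections import Counter


def dimension_histogram(sizes, bin_width=320):
    """Group (width, height) pairs into bins of bin_width pixels per axis.

    Returns two Counters that map each bin's lower edge (in pixels) to the
    number of images whose width or height falls in that bin.
    """
    widths, heights = Counter(), Counter()
    for w, h in sizes:
        # Integer division snaps each dimension down to its bin's lower edge
        widths[(w // bin_width) * bin_width] += 1
        heights[(h // bin_width) * bin_width] += 1
    return widths, heights


sizes = [(640, 480), (1280, 720), (600, 400)]
widths, heights = dimension_histogram(sizes)
print(sorted(widths.items()))
print(sorted(heights.items()))
```

The resulting counts can be drawn as a bar chart with Matplotlib or Seaborn. A wide spread of bins is a hint that resizing and letterboxing choices deserve a closer look before training.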

diff --git a/docs/en/usage/simple-utilities.md b/docs/en/usage/simple-utilities.md

index 23674ee8ca..4e19cb1a24 100644

--- a/docs/en/usage/simple-utilities.md

+++ b/docs/en/usage/simple-utilities.md

@@ -731,7 +731,7 @@ auto_annotate(

)

```

-For more details, check the [auto_annotate reference section](../reference/data/annotator.md#ultralytics.data.annotator.auto_annotate).

+For more details, check the [auto_annotate reference section](../reference/data/annotator.md#ultralytics.data.annotator.auto_annotate). For a hosted, no-code alternative, [Ultralytics Platform](https://platform.ultralytics.com/) supports click-based masking with [SAM 2.1](../models/sam-2.md) or [SAM 3](../models/sam-3.md), as well as predictions from pretrained and fine-tuned YOLO models for detect, segment, and OBB tasks.

### How do I convert COCO dataset annotations to YOLO format in Ultralytics?

@@ -750,9 +750,9 @@ convert_coco(

For additional information, visit the [convert_coco reference page](../reference/data/converter.md#ultralytics.data.converter.convert_coco).

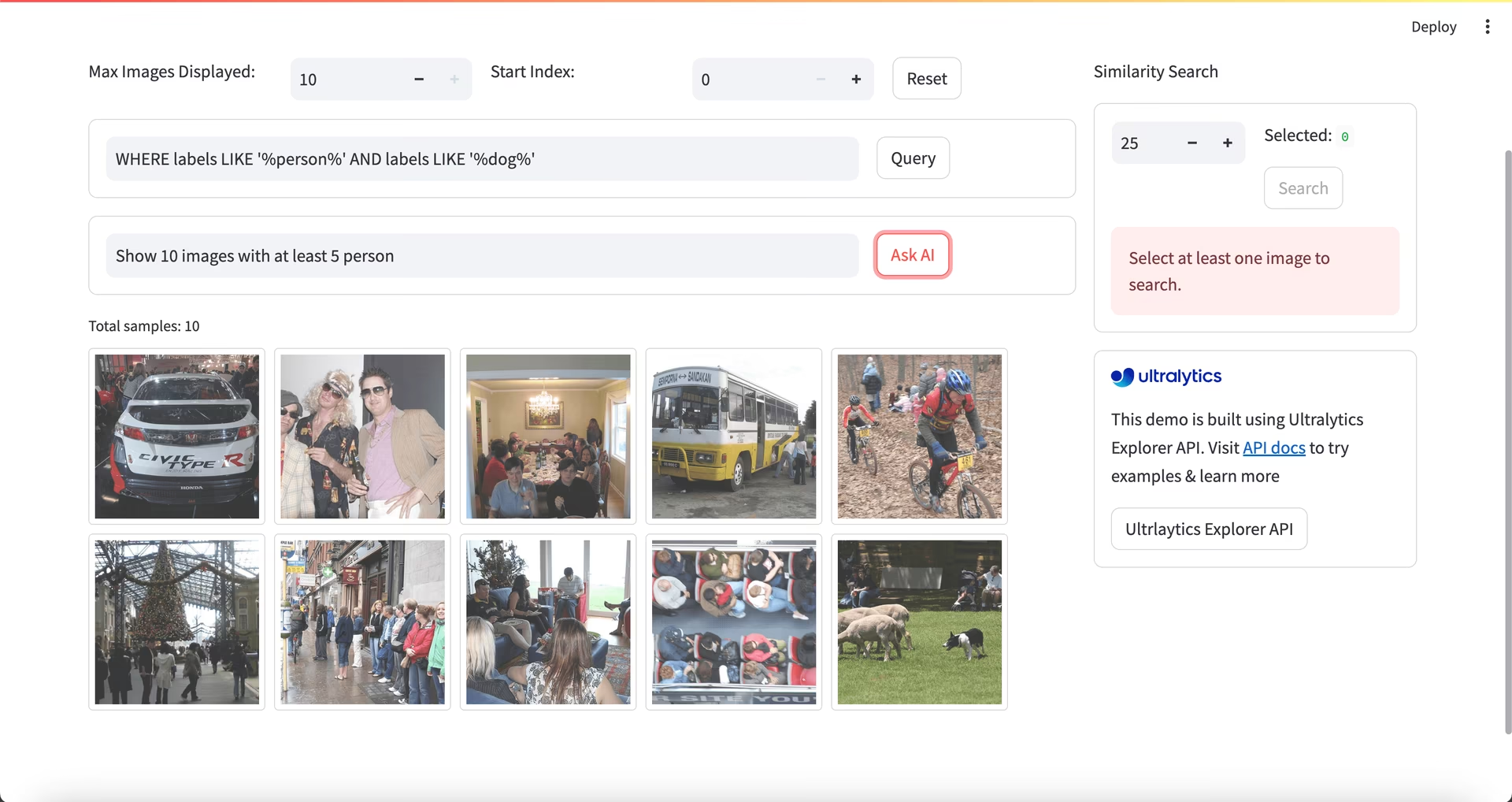

-### What is the purpose of the YOLO Data Explorer in the Ultralytics package?

+### How can I analyze my dataset composition and distribution?

-The [YOLO Explorer](../datasets/explorer/index.md) is a powerful tool introduced in the `8.1.0` update to enhance dataset understanding. It allows you to use text queries to find object instances in your dataset, making it easier to analyze and manage your data. This tool provides valuable insights into dataset composition and distribution, helping to improve model training and performance.

+[Ultralytics Platform](https://platform.ultralytics.com/) provides automatic dataset analytics: the `Charts` tab shows split distribution, top class counts, image-dimension histograms, and 2D heatmaps of annotation positions, helping you spot imbalances and outliers before training.

### How can I convert bounding boxes to segments in Ultralytics?

-

- -

- -

-