

LobeHub

LobeHub is the ultimate space for work and life:

to find, build, and collaborate with agent teammates that grow with you.

We’re building the world’s largest human–agent co-evolving network.

English · 简体中文 · Official Site · Changelog · Documents · Blog · Feedback


Agent teammates that grow with you

Table of contents


- 👋🏻 Getting Started & Join Our Community

- ✨ Features

- Create: Agents as the Unit of Work

- Collaborate: Scale New Forms of Collaboration Networks

- Evolve: Co-evolution of Humans and Agents

- MCP Plugin One-Click Installation

- MCP Marketplace

- Desktop App

- Smart Internet Search

- Chain of Thought

- Branching Conversations

- Artifacts Support

- File Upload / Knowledge Base

- Multi-Model Service Provider Support

- Local Large Language Model (LLM) Support

- Model Visual Recognition

- TTS & STT Voice Conversation

- Text to Image Generation

- Plugin System (Function Calling)

- Agent Market (GPTs)

- Support Local / Remote Database

- Support Multi-User Management

- Progressive Web App (PWA)

- Mobile Device Adaptation

- Custom Themes

- What's more

- 🛳 Self Hosting

- 📦 Ecosystem

- 🧩 Plugins

- ⌨️ Local Development

- 🤝 Contributing

- ❤️ Sponsor

- 🔗 More Products

https://github.com/user-attachments/assets/6710ad97-03d0-4175-bd75-adff9b55eca2

👋🏻 Getting Started & Join Our Community

We are a group of e/acc design-engineers, hoping to provide modern design components and tools for AIGC. By adopting the Bootstrapping approach, we aim to provide developers and users with a more open, transparent, and user-friendly product ecosystem.

Whether for users or professional developers, LobeHub will be your AI Agent playground. Please be aware that LobeHub is currently under active development, and feedback is welcome for any issues encountered.

> [!IMPORTANT]
>
> Star us to receive all release notifications from GitHub without any delay ⭐️

Star History

✨ Features

Today’s agents are one-off, task-driven tools. They lack context, live in isolation, and require manual hand-offs between different windows and models. While some maintain memory, it is often global, shallow, and impersonal. In this mode, users are forced to toggle between fragmented conversations, making it difficult to form structured productivity.

LobeHub changes everything.

LobeHub is a work-and-lifestyle space to find, build, and collaborate with agent teammates that grow with you. In LobeHub, we treat Agents as the unit of work, providing an infrastructure where humans and agents co-evolve.

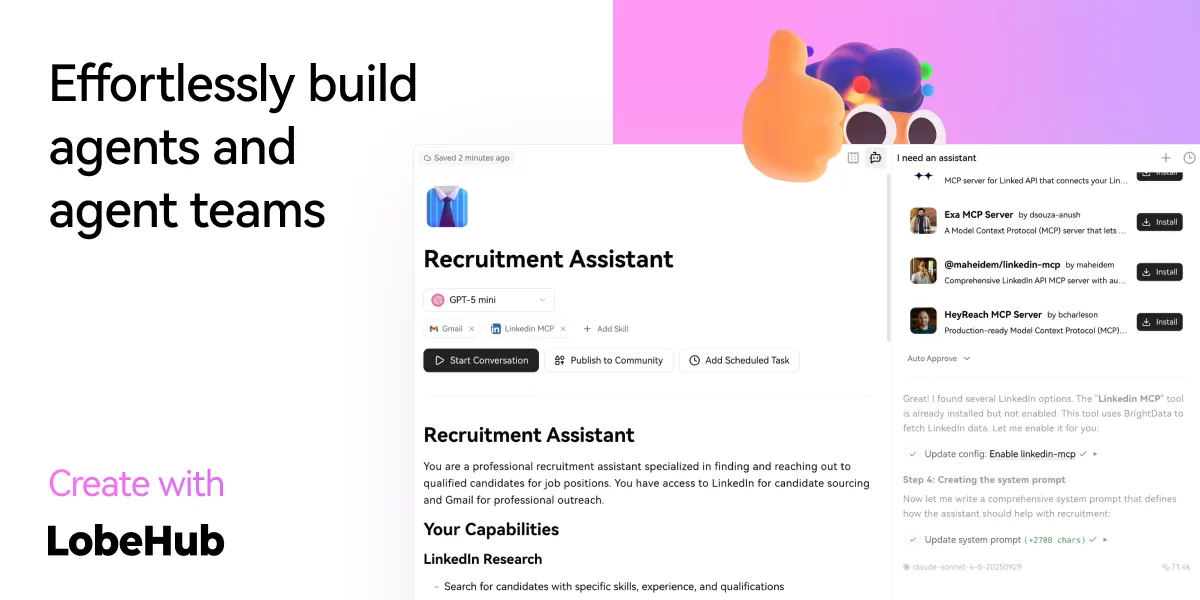

Create: Agents as the Unit of Work

Building a personalized AI team starts with the Agent Builder. You can describe what you need once, and the agent setup starts right away, applying auto-configurations so you can use it instantly.

- Unified Intelligence: Seamlessly access any model and any modality—all under your control.

- 10,000+ Skills: Connect your agents to the skills you use every day with a library of over 10,000 tools and MCP-compatible plugins.

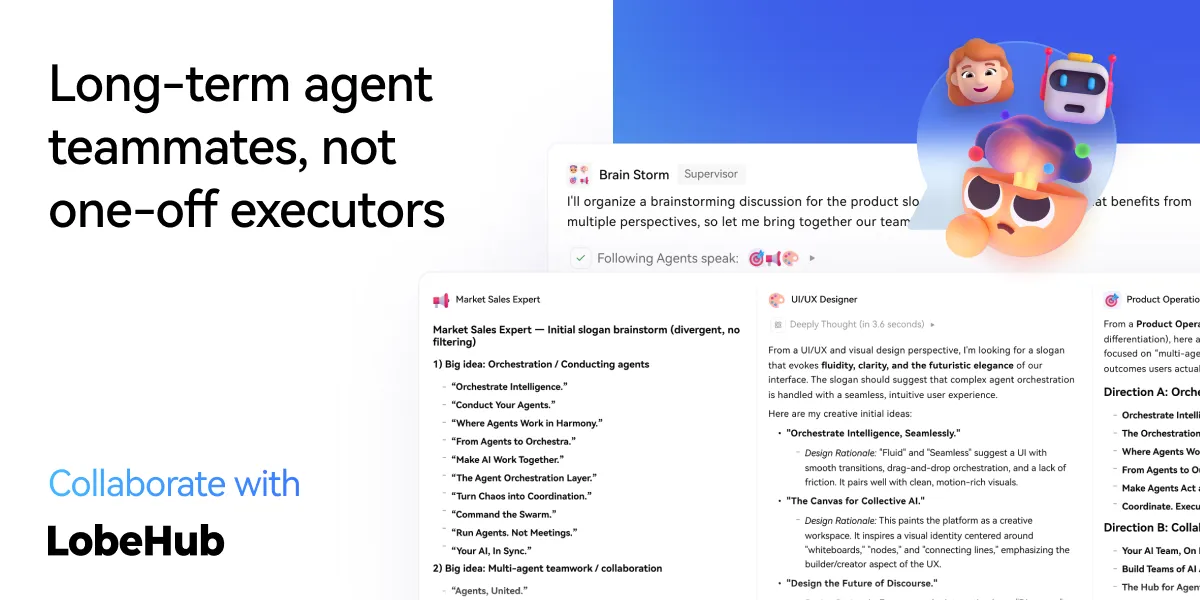

Collaborate: Scale New Forms of Collaboration Networks

LobeHub introduces Agent Groups, allowing you to work with agents like real teammates. The system assembles the right agents for the task, enabling parallel collaboration and iterative improvement.

- Pages: Write and refine content with multiple agents in one place with a shared context.

- Schedule: Schedule runs and let agents do the work at the right time, even while you are away.

- Project: Organize work by project to keep everything structured and easy to track.

- Workspace: A shared space for teams to collaborate with agents, ensuring clear ownership and visibility across the organization.

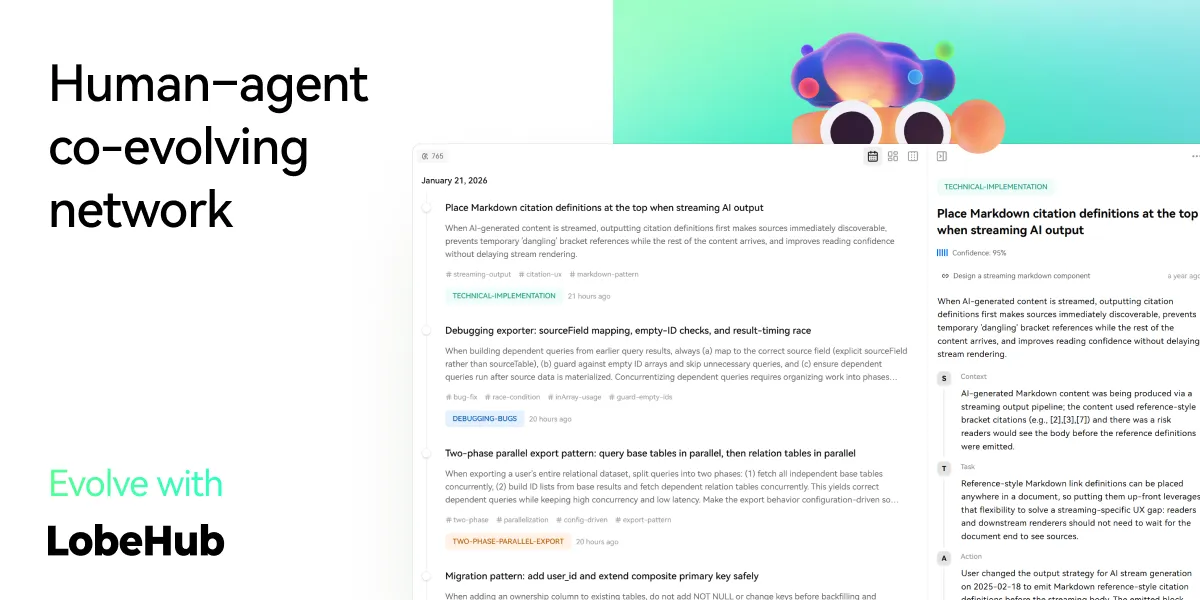

Evolve: Co-evolution of Humans and Agents

The best AI is one that understands you deeply. LobeHub features Personal Memory that builds a clear understanding of your needs.

- Continual Learning: Your agents learn from how you work, adapting their behavior to act at the right moment.

- White-Box Memory: We believe in transparency. Your agents use structured, editable memory, giving you full control over what they remember.

More Features

MCP Plugin One-Click Installation

Seamlessly Connect Your AI to the World

Unlock the full potential of your AI by enabling smooth, secure, and dynamic interactions with external tools, data sources, and services. LobeHub's MCP (Model Context Protocol) plugin system breaks down the barriers between your AI and the digital ecosystem, allowing for unprecedented connectivity and functionality.

Transform your conversations into powerful workflows by connecting to databases, APIs, file systems, and more. Experience the freedom of AI that truly understands and interacts with your world.

MCP Marketplace

Discover, Connect, Extend

Browse a growing library of MCP plugins to expand your AI's capabilities and streamline your workflows effortlessly. Visit lobehub.com/mcp to explore the MCP Marketplace, which offers a curated collection of integrations that enhance your AI's ability to work with various tools and services.

From productivity tools to development environments, discover new ways to extend your AI's reach and effectiveness. Connect with the community and find the perfect plugins for your specific needs.

Desktop App

Peak Performance, Zero Distractions

Get the full LobeHub experience without browser limitations—comprehensive, focused, and always ready to go. Our desktop application provides a dedicated environment for your AI interactions, ensuring optimal performance and minimal distractions.

Experience faster response times, better resource management, and a more stable connection to your AI assistant. The desktop app is designed for users who demand the best performance from their AI tools.

Smart Internet Search

Online Knowledge On Demand

With real-time internet access, your AI keeps up with the world—news, data, trends, and more. Stay informed and get the most current information available, enabling your AI to provide accurate and up-to-date responses.

Access live information, verify facts, and explore current events without leaving your conversation. Your AI becomes a gateway to the world's knowledge, always current and comprehensive.

Chain of Thought

Experience AI reasoning like never before. Watch complex problems unfold step by step through our Chain of Thought (CoT) visualization. This feature makes the AI's decision-making process transparent, allowing you to observe how conclusions are reached in real time.

By breaking down complex reasoning into clear, logical steps, you can better understand and validate the AI's problem-solving approach. Whether you're debugging, learning, or simply curious about AI reasoning, CoT visualization transforms abstract thinking into an engaging, interactive experience.

Branching Conversations

Introducing a more natural and flexible way to chat with AI. With Branching Conversations, your discussions can flow in multiple directions, just like human conversations do. Create new conversation branches from any message, giving you the freedom to explore different paths while preserving the original context.

Choose between two powerful modes:

- Continuation Mode: Seamlessly extend your current discussion while maintaining valuable context

- Standalone Mode: Start fresh with a new topic based on any previous message

This groundbreaking feature transforms linear conversations into dynamic, tree-like structures, enabling deeper exploration of ideas and more productive interactions.
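To make the tree-like structure concrete, here is a minimal sketch of how the two branch modes could select the context for a new branch. The `Message` shape, `BranchMode`, and `branchContext` are hypothetical names chosen for illustration and are not LobeHub's actual data model.

```typescript
// Hypothetical types for illustration; not LobeHub's actual data model.
type BranchMode = 'continuation' | 'standalone';

interface Message {
  id: string;
  parentId: string | null; // null marks the root of a thread
  content: string;
}

// Walk parent links from a message back to the root, returning root-first order.
function pathToRoot(messages: Record<string, Message>, id: string): Message[] {
  const path: Message[] = [];
  let current: Message | undefined = messages[id];
  while (current !== undefined) {
    path.unshift(current);
    current = current.parentId === null ? undefined : messages[current.parentId];
  }
  return path;
}

// Build the context a new branch starts with.
function branchContext(
  messages: Record<string, Message>,
  fromId: string,
  mode: BranchMode,
): Message[] {
  const path = pathToRoot(messages, fromId);
  // Continuation keeps the whole history up to the chosen message;
  // standalone keeps only the chosen message as the seed of a new topic.
  return mode === 'continuation' ? path : path.slice(-1);
}

const thread: Record<string, Message> = {
  m1: { id: 'm1', parentId: null, content: 'Plan a trip to Kyoto' },
  m2: { id: 'm2', parentId: 'm1', content: 'Here is a 3-day itinerary' },
  m3: { id: 'm3', parentId: 'm2', content: 'Which temples open early?' },
};

const continued = branchContext(thread, 'm2', 'continuation'); // m1, m2
const standalone = branchContext(thread, 'm2', 'standalone'); // m2 only
```

Because each message only stores a link to its parent, any number of branches can share the same history without copying it.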

Artifacts Support

Experience the power of Claude Artifacts, now integrated into LobeHub. This revolutionary feature expands the boundaries of AI-human interaction, enabling real-time creation and visualization of diverse content formats.

Create and visualize with unprecedented flexibility:

- Generate and display dynamic SVG graphics

- Build and render interactive HTML pages in real-time

- Produce professional documents in multiple formats

File Upload / Knowledge Base

LobeHub supports file upload and knowledge base functionality. You can upload various types of files including documents, images, audio, and video, as well as create knowledge bases, making it convenient for users to manage and search for files. Additionally, you can utilize files and knowledge base features during conversations, enabling a richer dialogue experience.

https://github.com/user-attachments/assets/faa8cf67-e743-4590-8bf6-ebf6ccc34175

[!TIP]

Learn more on 📘 LobeHub Knowledge Base Launch — From Now On, Every Step Counts

Multi-Model Service Provider Support

In the continuous development of LobeHub, we deeply understand the importance of diversity in model service providers for meeting the needs of the community when providing AI conversation services. Therefore, we have expanded our support to multiple model service providers, rather than being limited to a single one, in order to offer users a more diverse and rich selection of conversations.

In this way, LobeHub can more flexibly adapt to the needs of different users, while also providing developers with a wider range of choices.

Supported Model Service Providers

We have implemented support for the following model service providers:


📊 Total providers: 0

At the same time, we are also planning to support more model service providers. If you would like LobeHub to support your favorite service provider, feel free to join our 💬 community discussion.

Local Large Language Model (LLM) Support

To meet the specific needs of users, LobeHub also supports the use of local models based on Ollama, allowing users to flexibly use their own or third-party models.

[!TIP]

Learn more about 📘 Using Ollama in LobeHub by checking it out.

Model Visual Recognition

LobeHub now supports OpenAI's latest gpt-4-vision model with visual recognition capabilities, a multimodal intelligence that can perceive visuals. Users can easily upload or drag and drop images into the dialogue box, and the agent will be able to recognize the content of the images and engage in intelligent conversation based on this, creating smarter and more diversified chat scenarios.

This feature opens up new interactive methods, allowing communication to transcend text and include a wealth of visual elements. Whether it's sharing images in daily use or interpreting images within specific industries, the agent provides an outstanding conversational experience.

TTS & STT Voice Conversation

LobeHub supports Text-to-Speech (TTS) and Speech-to-Text (STT) technologies, enabling our application to convert text messages into clear voice outputs, allowing users to interact with our conversational agent as if they were talking to a real person. Users can choose from a variety of voices to pair with the agent.

Moreover, TTS offers an excellent solution for those who prefer auditory learning or desire to receive information while busy. In LobeHub, we have meticulously selected a range of high-quality voice options (OpenAI Audio, Microsoft Edge Speech) to meet the needs of users from different regions and cultural backgrounds. Users can choose the voice that suits their personal preferences or specific scenarios, resulting in a personalized communication experience.

Text to Image Generation

With support for the latest text-to-image generation technology, LobeHub now allows users to invoke image creation tools directly within conversations with the agent. By leveraging the capabilities of AI tools such as DALL-E 3, Midjourney, and Pollinations, the agents are now equipped to transform your ideas into images.

This enables a more private and immersive creative process, allowing for the seamless integration of visual storytelling into your personal dialogue with the agent.

Plugin System (Function Calling)

The plugin ecosystem of LobeHub is an important extension of its core functionality, greatly enhancing the practicality and flexibility of the LobeHub assistant.

By utilizing plugins, LobeHub assistants can obtain and process real-time information, such as searching for web information and providing users with instant and relevant news.

In addition, these plugins are not limited to news aggregation, but can also extend to other practical functions, such as quickly searching documents, generating images, obtaining data from various platforms like Bilibili, Steam, and interacting with various third-party services.

[!TIP]

Learn more about 📘 Plugin Usage by checking it out.

| Recent Submits | Description |
|---|---|
| Shopping tools, by shoppingtools on 2026-01-12 | Search for products on eBay & AliExpress, find eBay events & coupons. Get prompt examples. Tags: shopping, e-bay, ali-express, coupons |
| SEO Assistant, by webfx on 2026-01-12 | The SEO Assistant can generate search engine keyword information in order to aid the creation of content. Tags: seo, keyword |
| Video Captions, by maila on 2025-12-13 | Convert YouTube links into transcribed text, enable asking questions, create chapters, and summarize its content. Tags: video-to-text, youtube |
| WeatherGPT, by steven-tey on 2025-12-13 | Get current weather information for a specific location. Tags: weather |

📊 Total plugins: 40

Agent Market (GPTs)

In LobeHub Agent Marketplace, creators can discover a vibrant and innovative community that brings together a multitude of well-designed agents, which not only play an important role in work scenarios but also offer great convenience in learning processes. Our marketplace is not just a showcase platform but also a collaborative space. Here, everyone can contribute their wisdom and share the agents they have developed.

[!TIP]

By 🤖/🏪 Submit Agents, you can easily submit your agent creations to our platform. Importantly, LobeHub has established a sophisticated automated internationalization (i18n) workflow, capable of seamlessly translating your agent into multiple language versions. This means that no matter what language your users speak, they can experience your agent without barriers.

[!IMPORTANT]

We welcome all users to join this growing ecosystem and participate in the iteration and optimization of agents. Together, we can create more interesting, practical, and innovative agents, further enriching the diversity and practicality of the agent offerings.

| Recent Submits | Description |
|---|---|
| Turtle Soup Host, by CSY2022 on 2025-06-19 | A turtle soup host needs to provide the scenario, the complete story (truth of the event), and the key point (the condition for guessing correctly). Tags: turtle-soup, reasoning, interaction, puzzle, role-playing |
| Academic Writing Assistant, by swarfte on 2025-06-17 | Expert in academic research paper writing and formal documentation. Tags: academic-writing, research, formal-style |
| Gourmet Reviewer🍟, by renhai-lab on 2025-06-17 | Food critique expert. Tags: gourmet, review, writing |
| Minecraft Senior Developer, by iamyuuk on 2025-06-17 | Expert in advanced Java development and Minecraft mod and server plugin development. Tags: development, programming, minecraft, java |

📊 Total agents: 505

Support Local / Remote Database

LobeHub supports the use of both server-side and local databases. Depending on your needs, you can choose the appropriate deployment solution:

- Local database: suitable for users who want more control over their data and privacy protection. LobeHub uses CRDT (Conflict-Free Replicated Data Type) technology to achieve multi-device synchronization. This is an experimental feature aimed at providing a seamless data synchronization experience.

- Server-side database: suitable for users who want a more convenient user experience. LobeHub supports PostgreSQL as a server-side database. For detailed documentation on how to configure the server-side database, please visit Configure Server-side Database.

Regardless of which database you choose, LobeHub can provide you with an excellent user experience.
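The text above says only that CRDT technology is used for multi-device synchronization; it does not say which CRDT or how it is applied. As a rough illustration of the general idea, the sketch below implements a last-writer-wins map, one of the simplest CRDTs. All names (`Entry`, `LwwMap`, `merge`) are hypothetical, and this is not LobeHub's sync implementation.

```typescript
// Illustrative last-writer-wins (LWW) map; not LobeHub's sync implementation.
interface Entry {
  value: string;
  timestamp: number; // logical or wall-clock time of the write
  deviceId: string; // tie-breaker when timestamps are equal
}

type LwwMap = Record<string, Entry>;

// Pick the winning entry deterministically so every replica agrees.
// Assumes each (timestamp, deviceId) pair identifies a single write.
function newer(a: Entry, b: Entry): Entry {
  if (a.timestamp !== b.timestamp) return a.timestamp > b.timestamp ? a : b;
  return a.deviceId >= b.deviceId ? a : b;
}

// Merge is commutative, associative, and idempotent, so replicas converge
// regardless of the order in which they exchange state.
function merge(left: LwwMap, right: LwwMap): LwwMap {
  const result: LwwMap = {};
  for (const key of Object.keys(left)) result[key] = left[key];
  for (const key of Object.keys(right)) {
    const existing = result[key];
    result[key] = existing === undefined ? right[key] : newer(existing, right[key]);
  }
  return result;
}

const laptop: LwwMap = {
  theme: { value: 'dark', timestamp: 2, deviceId: 'laptop' },
};
const phone: LwwMap = {
  theme: { value: 'light', timestamp: 1, deviceId: 'phone' },
  language: { value: 'en', timestamp: 1, deviceId: 'phone' },
};

// Both merge orders produce the same state: the later "dark" write wins.
const mergedOnLaptop = merge(laptop, phone);
const mergedOnPhone = merge(phone, laptop);
```

The key property is that no device needs to coordinate with another before writing; conflicts are resolved by the merge rule alone.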

Support Multi-User Management

LobeHub supports multi-user management and provides flexible user authentication solutions:

- Better Auth: LobeHub integrates Better Auth, a modern and flexible authentication library that supports multiple authentication methods, including OAuth, email login, credential login, magic links, and more. With Better Auth, you can easily implement user registration, login, session management, social login, multi-factor authentication (MFA), and other functions to ensure the security and privacy of user data.

Progressive Web App (PWA)

We deeply understand the importance of providing a seamless experience for users in today's multi-device environment. Therefore, we have adopted Progressive Web Application (PWA) technology, a modern web technology that elevates web applications to an experience close to that of native apps.

Through PWA, LobeHub can offer a highly optimized user experience on both desktop and mobile devices while maintaining high-performance characteristics. Visually and in terms of feel, we have also meticulously designed the interface to ensure it is indistinguishable from native apps, providing smooth animations, responsive layouts, and adapting to different device screen resolutions.

[!NOTE]

If you are unfamiliar with the installation process of PWA, you can add LobeHub as your desktop application (also applicable to mobile devices) by following these steps:

- Launch the Chrome or Edge browser on your computer.

- Visit the LobeHub webpage.

- In the upper right corner of the address bar, click on the Install icon.

- Follow the instructions on the screen to complete the PWA Installation.

Mobile Device Adaptation

We have carried out a series of optimization designs for mobile devices to enhance the user's mobile experience. Currently, we are iterating on the mobile user experience to achieve smoother and more intuitive interactions. If you have any suggestions or ideas, we welcome you to provide feedback through GitHub Issues or Pull Requests.

Custom Themes

As a design-engineering-oriented application, LobeHub places great emphasis on users' personalized experiences, hence introducing flexible and diverse theme modes, including a light mode for daytime and a dark mode for nighttime. Beyond switching theme modes, a range of color customization options allow users to adjust the application's theme colors according to their preferences. Whether it's a desire for a sober dark blue, a lively peach pink, or a professional gray-white, users can find their style of color choices in LobeHub.

[!TIP]

The default configuration can intelligently recognize the user's system color mode and automatically switch themes to ensure a consistent visual experience with the operating system. For users who like to manually control details, LobeHub also offers intuitive setting options and a choice between chat bubble mode and document mode for conversation scenarios.

What's more

Besides these features, LobeHub also has a solid technical foundation under the hood:

- 💨 Quick Deployment: Using the Vercel platform or the Docker image, you can deploy with just one click and complete the process within 1 minute without any complex configuration.

- 🌐 Custom Domain: If users have their own domain, they can bind it to the platform for quick access to the dialogue agent from anywhere.

- 🔒 Privacy Protection: All data is stored locally in the user's browser, ensuring user privacy.

- 💎 Exquisite UI Design: With a carefully designed interface, it offers an elegant appearance and smooth interaction. It supports light and dark themes and is mobile-friendly. PWA support provides a more native-like experience.

- 🗣️ Smooth Conversation Experience: Fluid responses ensure a smooth conversation experience. It fully supports Markdown rendering, including code highlighting, LaTeX formulas, Mermaid flowcharts, and more.

✨ More features will be added as LobeHub evolves.

🛳 Self Hosting

LobeHub provides self-hosted versions for Vercel, Alibaba Cloud, and Docker. This allows you to deploy your own chatbot within a few minutes without any prior knowledge.

[!TIP]

Learn more about 📘 Build your own LobeHub by checking it out.

A Deploying with Vercel, Zeabur, Sealos or Alibaba Cloud

If you want to deploy this service yourself on Vercel, Zeabur or Alibaba Cloud, you can follow these steps:

- Prepare your OpenAI API Key.

- Click the button below to start deployment: log in directly with your GitHub account, and remember to fill in OPENAI_API_KEY (required) in the environment variable section.

- After deployment, you can start using it.

- Bind a custom domain (optional): The DNS of the domain assigned by Vercel is polluted in some areas; binding a custom domain can connect directly.

| Deploy with Vercel | Deploy with Zeabur | Deploy with Sealos | Deploy with RepoCloud | Deploy with Alibaba Cloud |
|---|---|---|---|---|

After Fork

After forking, retain only the upstream sync action and disable the other actions in your repository on GitHub.

Keep Updated

If you have deployed your own project following the one-click deployment steps in the README, you might encounter constant prompts indicating "updates available." This is because Vercel defaults to creating a new project instead of forking this one, resulting in an inability to detect updates accurately.

[!TIP]

We suggest you redeploy by following the steps in 📘 Auto Sync With Latest

B Deploying with Docker

We provide a Docker image for deploying the LobeHub service on your own private device. Use the following commands to start the LobeHub service:

- Create a folder for storage files

$ mkdir lobehub-db && cd lobehub-db

- Initialize the LobeHub infrastructure

$ bash <(curl -fsSL https://lobe.li/setup.sh)

- Start the LobeHub service

$ docker compose up -d

[!NOTE]

For detailed instructions on deploying with Docker, please refer to the 📘 Docker Deployment Guide

Environment Variable

This project provides some additional configuration items set with environment variables:

| Environment Variable | Required | Description | Example |
|---|---|---|---|
| OPENAI_API_KEY | Yes | This is the API key you apply for on the OpenAI account page | sk-xxxxxx...xxxxxx |
| OPENAI_PROXY_URL | No | If you manually configure the OpenAI interface proxy, you can use this configuration item to override the default OpenAI API request base URL | https://api.chatanywhere.cn or https://aihubmix.com/v1. The default value is https://api.openai.com/v1 |
| OPENAI_MODEL_LIST | No | Used to control the model list. Use + to add a model, - to hide a model, and model_name=display_name to customize the display name of a model, separated by commas. | qwen-7b-chat,+glm-6b,-gpt-3.5-turbo |
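The OPENAI_MODEL_LIST syntax described above can be read as a small comma-separated grammar. The sketch below shows one way to parse it; `parseModelList` and `ModelListEntry` are hypothetical names, and treating an entry with no prefix as "add" is an assumption, since the description only defines `+`, `-`, and `=`. LobeHub's real parsing logic may differ.

```typescript
// Hypothetical parser for illustration; LobeHub's real parsing logic may differ.
interface ModelListEntry {
  action: 'add' | 'hide';
  id: string;
  displayName?: string;
}

function parseModelList(raw: string): ModelListEntry[] {
  const entries: ModelListEntry[] = [];
  for (const part of raw.split(',')) {
    let item = part.trim();
    if (item === '') continue;

    // A leading "-" hides the model; "+" (or, by assumption, no prefix) adds it.
    let action: 'add' | 'hide' = 'add';
    if (item.charAt(0) === '-') {
      action = 'hide';
      item = item.slice(1);
    } else if (item.charAt(0) === '+') {
      item = item.slice(1);
    }

    // "model_name=display_name" customizes the label shown to users.
    const eq = item.indexOf('=');
    if (eq === -1) {
      entries.push({ action, id: item });
    } else {
      entries.push({ action, id: item.slice(0, eq), displayName: item.slice(eq + 1) });
    }
  }
  return entries;
}

// The example value from the table: two models added, one hidden.
const parsed = parseModelList('qwen-7b-chat,+glm-6b,-gpt-3.5-turbo');
```

Checking the `-` prefix before looking for `=` keeps model ids that contain hyphens, such as gpt-3.5-turbo, intact.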

[!NOTE]

The complete list of environment variables can be found in the 📘 Environment Variables

📦 Ecosystem

| NPM | Repository | Description | Version |

|---|---|---|---|

| @lobehub/ui | lobehub/lobe-ui | Open-source UI component library dedicated to building AIGC web applications. |  |

| @lobehub/icons | lobehub/lobe-icons | Popular AI / LLM Model Brand SVG Logo and Icon Collection. | |

| @lobehub/tts | lobehub/lobe-tts | High-quality & reliable TTS/STT React Hooks library |  |

| @lobehub/lint | lobehub/lobe-lint | Configurations for ESlint, Stylelint, Commitlint, Prettier, Remark, and Semantic Release for LobeHub. |  |

🧩 Plugins

Plugins provide a means to extend the Function Calling capabilities of LobeHub. They can be used to introduce new function calls and even new ways to render message results. If you are interested in plugin development, please refer to our 📘 Plugin Development Guide in the Wiki.

- lobe-chat-plugins: This is the plugin index for LobeHub. It accesses index.json from this repository to display a list of available plugins for LobeHub to the user.

- chat-plugin-template: This is the plugin template for LobeHub plugin development.

- @lobehub/chat-plugin-sdk: The LobeHub Plugin SDK assists you in creating exceptional chat plugins for LobeHub.

- @lobehub/chat-plugins-gateway: The LobeHub Plugins Gateway is a backend service that provides a gateway for LobeHub plugins. We deploy this service using Vercel. The primary API POST /api/v1/runner is deployed as an Edge Function.

[!NOTE]

The plugin system is currently undergoing major development. You can learn more in the following issues:

- Plugin Phase 1: Implement separation of the plugin from the main body, split the plugin into an independent repository for maintenance, and realize dynamic loading of the plugin.

- Plugin Phase 2: The security and stability of plugin use, more accurate presentation of abnormal states, the maintainability of the plugin architecture, and developer-friendliness.

- Plugin Phase 3: Higher-level and more comprehensive customization capabilities, support for plugin authentication, and examples.

⌨️ Local Development

You can use GitHub Codespaces for online development:

Or clone it for local development:

$ git clone https://github.com/lobehub/lobehub.git

$ cd lobehub

$ pnpm install

$ pnpm dev # Full-stack (Next.js + Vite SPA)

$ bun run dev:spa # SPA frontend only (port 9876)

Debug Proxy: After running dev:spa, the terminal prints a proxy URL like https://app.lobehub.com/_dangerous_local_dev_proxy?debug-host=http%3A%2F%2Flocalhost%3A9876. Open it to develop locally against the production backend with HMR.
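The debug-host value in that URL is simply the local dev server origin, percent-encoded so it can travel as a single query parameter. The sketch below reproduces the example URL; `buildDebugProxyUrl` is a hypothetical helper written for illustration, not a function provided by LobeHub.

```typescript
// Sketch only: buildDebugProxyUrl is a hypothetical helper, not part of LobeHub.
function buildDebugProxyUrl(localOrigin: string): string {
  const base = 'https://app.lobehub.com/_dangerous_local_dev_proxy';
  // encodeURIComponent escapes ":" and "/" so the local origin survives
  // as one query value instead of being parsed as part of the outer URL.
  return base + '?debug-host=' + encodeURIComponent(localOrigin);
}

// Port 9876 is the SPA dev server port mentioned in the commands above.
const proxyUrl = buildDebugProxyUrl('http://localhost:9876');
```

In practice you would copy the URL the terminal prints; the helper only shows how that URL is put together.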

If you would like to learn more details, please feel free to look at our 📘 Development Guide.

🤝 Contributing

Contributions of all types are more than welcome; if you are interested in contributing code, feel free to check out our GitHub Issues and Projects to get stuck in to show us what you're made of.

[!TIP]

We are creating a technology-driven forum, fostering knowledge interaction and the exchange of ideas that may culminate in mutual inspiration and collaborative innovation.

Help us make LobeHub better. We welcome product design feedback and user experience discussions sent directly to us.

Principal Maintainers: @arvinxx @canisminor1990


❤️ Sponsor

Every bit counts and your one-time donation sparkles in our galaxy of support! You're a shooting star, making a swift and bright impact on our journey. Thank you for believing in us – your generosity guides us toward our mission, one brilliant flash at a time.

🔗 More Products

- 🅰️ Lobe SD Theme: Modern theme for Stable Diffusion WebUI, exquisite interface design, highly customizable UI, and efficiency-boosting features.

- ⛵️ Lobe Midjourney WebUI: WebUI for Midjourney, leverages AI to quickly generate a wide array of rich and diverse images from text prompts, sparking creativity and enhancing conversations.

- 🌏 Lobe i18n: Lobe i18n is an automation tool for the i18n (internationalization) translation process, powered by ChatGPT. It supports features such as automatic splitting of large files, incremental updates, and customization options for the OpenAI model, API proxy, and temperature.

- 💌 Lobe Commit: Lobe Commit is a CLI tool that leverages Langchain/ChatGPT to generate Gitmoji-based commit messages.

Copyright © 2026 LobeHub.

This project is licensed under the LobeHub Community License.