Mirror of https://github.com/graphql-hive/console (synced 2026-04-21 14:37:17 +00:00)

Update dependency @theguild/prettier-config to v1 (#676)

Co-authored-by: renovate[bot] <29139614+renovate[bot]@users.noreply.github.com>
Co-authored-by: Kamil Kisiela <kamil.kisiela@gmail.com>

Parent: 85aa5ae783
Commit: 1afe0ec73a

420 changed files with 5562 additions and 2863 deletions

```diff
@@ -1,8 +1,9 @@
 # Changesets

-Hello and welcome! This folder has been automatically generated by `@changesets/cli`, a build tool that works
-with multi-package repos, or single-package repos to help you version and publish your code. You can
-find the full documentation for it [in our repository](https://github.com/changesets/changesets)
+Hello and welcome! This folder has been automatically generated by `@changesets/cli`, a build tool
+that works with multi-package repos, or single-package repos to help you version and publish your
+code. You can find the full documentation for it
+[in our repository](https://github.com/changesets/changesets)

 We have a quick list of common questions to get you started engaging with this project in
 [our documentation](https://github.com/changesets/changesets/blob/main/docs/common-questions.md)
```
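Most hunks in this commit re-wrap text rather than change it. Long lines and prose paragraphs are broken so that no line exceeds 100 characters. That is consistent with a 100-column print width and always-on prose wrapping in the updated Prettier config, but this is inferred from the output; the config package itself is not part of the diff.

The sketch below is a hypothetical greedy wrapper (`wrapParagraph`), not Prettier's algorithm. It treats each Markdown link as a single token, and under that assumption it reproduces the four new lines of the `# Changesets` README hunk from the old text.

```typescript
// Greedy re-wrap of a Markdown paragraph to a maximum line width (illustrative sketch).
// Assumption: a Markdown link `[text](url)`, plus any punctuation attached to it, is one
// token that must not be split; every other whitespace-separated word is its own token.
const TOKEN = /\S*\[[^\]]*\]\([^)]*\)\S*|\S+/g;

function wrapParagraph(text: string, width = 100): string[] {
  const tokens = text.match(TOKEN) ?? [];
  const lines: string[] = [];
  let current = "";
  for (const token of tokens) {
    if (current === "") {
      current = token; // a token longer than `width` still gets a line of its own
    } else if (current.length + 1 + token.length <= width) {
      current += " " + token; // fits on the current line
    } else {
      lines.push(current); // start a new line with this token
      current = token;
    }
  }
  if (current !== "") lines.push(current);
  return lines;
}

// The old paragraph from the `# Changesets` README, joined into one string.
const paragraph =
  "Hello and welcome! This folder has been automatically generated by `@changesets/cli`, a build tool that works " +
  "with multi-package repos, or single-package repos to help you version and publish your code. You can " +
  "find the full documentation for it [in our repository](https://github.com/changesets/changesets)";

for (const line of wrapParagraph(paragraph)) console.log(line);
```

Treating the link as one token is what moves `[in our repository](...)` onto a line of its own. Appending it to the third line would produce a 110-character line.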

```diff
@@ -26,7 +26,10 @@ module.exports = {
 rules: {
 'no-process-env': 'error',
 'no-restricted-globals': ['error', 'stop'],
-'@typescript-eslint/no-unused-vars': ['error', { argsIgnorePattern: '^_', ignoreRestSiblings: true }],
+'@typescript-eslint/no-unused-vars': [
+'error',
+{ argsIgnorePattern: '^_', ignoreRestSiblings: true },
+],
 'no-empty': ['error', { allowEmptyCatch: true }],

 'import/no-absolute-path': 'error',
```
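The `@typescript-eslint/no-unused-vars` entry is only re-wrapped here; its options stay the same. For reference, the functions below (hypothetical names) show three patterns these options are meant to accept:

- an unused `_`-prefixed parameter (`argsIgnorePattern: '^_'`);
- a property destructured only to drop it from a rest object (`ignoreRestSiblings: true`);
- an empty `catch` block (`no-empty` with `allowEmptyCatch: true`).

Running ESLint with this configuration is assumed rather than shown.

```typescript
// Not reported: `_event` is unused but matches argsIgnorePattern '^_'.
function greet(_event: unknown, name: string): string {
  return `Hello, ${name}`;
}

// Not reported: `password` is unused, but ignoreRestSiblings: true ignores properties
// that are destructured only to leave them out of the `...publicUser` rest object.
function toPublicUser(user: { id: string; password: string }): { id: string } {
  const { password, ...publicUser } = user;
  return publicUser;
}

// Not reported by 'no-empty': allowEmptyCatch: true permits an empty catch block.
function safeParse(json: string): unknown {
  try {
    return JSON.parse(json);
  } catch {}
  return undefined;
}

console.log(greet(null, "Hive"), toPublicUser({ id: "1", password: "secret" }), safeParse("{"));
```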

.github/workflows/cd.yaml (16 changed lines)

```diff
@@ -45,7 +45,9 @@ jobs:
 run: pnpm build:libraries

 - name: Schema Publish
-run: ./packages/libraries/cli/bin/dev schema:publish "packages/services/api/src/modules/*/module.graphql.ts" --force --github
+run:
+./packages/libraries/cli/bin/dev schema:publish
+"packages/services/api/src/modules/*/module.graphql.ts" --force --github

 - name: Prepare NPM Credentials
 run: |
```

```diff
@@ -65,7 +67,9 @@ jobs:
 GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

 - name: Extract published version
-if: steps.changesets.outputs.published && contains(steps.changesets.outputs.publishedPackages, '"@graphql-hive/cli"')
+if:
+steps.changesets.outputs.published && contains(steps.changesets.outputs.publishedPackages,
+'"@graphql-hive/cli"')
 id: cli
 run: |
 echo '${{steps.changesets.outputs.publishedPackages}}' > cli-ver.json
```
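The `Extract published version` step writes the changesets action's `publishedPackages` output to `cli-ver.json`. How the file is read afterwards is outside this hunk.

The sketch below assumes that output has the JSON shape documented for `changesets/action` (an array of `{ name, version }` objects). Under that assumption, a TypeScript equivalent of pulling the CLI version out of it could look like this. `findPublishedVersion` is a hypothetical helper, and the version numbers are made up.

```typescript
// Assumed shape of each entry in `publishedPackages`: { "name": "...", "version": "..." }.
interface PublishedPackage {
  name: string;
  version: string;
}

// Returns the version published for `packageName`, or null if it was not published.
function findPublishedVersion(publishedPackagesJson: string, packageName: string): string | null {
  const packages = JSON.parse(publishedPackagesJson) as PublishedPackage[];
  const match = packages.find(pkg => pkg.name === packageName);
  return match ? match.version : null;
}

// Example output with made-up versions.
const output =
  '[{"name":"@graphql-hive/cli","version":"0.19.10"},{"name":"@graphql-hive/client","version":"0.21.0"}]';
console.log(findPublishedVersion(output, "@graphql-hive/cli"));
```

Comparing parsed names is stricter than the workflow's `contains(...)` check, which matches the quoted name as a substring of the JSON text. The surrounding quotes in `'"@graphql-hive/cli"'` are what stop that substring check from matching a longer package name.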

```diff
@@ -88,7 +92,9 @@ jobs:
 working-directory: packages/libraries/cli
 env:
 VERSION: ${{ steps.cli.outputs.version }}
-run: pnpm oclif promote --no-xz --sha ${GITHUB_SHA:0:7} --version $VERSION || pnpm oclif promote --no-xz --sha ${GITHUB_SHA:0:8} --version $VERSION
+run:
+pnpm oclif promote --no-xz --sha ${GITHUB_SHA:0:7} --version $VERSION || pnpm oclif
+promote --no-xz --sha ${GITHUB_SHA:0:8} --version $VERSION

 publish_rust:
 name: Publish Rust
```
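The promote step first runs `oclif promote` with a 7-character SHA prefix. If that fails, it retries with an 8-character prefix. `${GITHUB_SHA:0:7}` is Bash substring expansion, meaning the first 7 characters of the SHA. The diff does not say why the fallback is needed.

The same try-then-fallback control flow can be sketched in TypeScript. Here `promote` is a hypothetical callback standing in for the CLI call.

```typescript
// Tries each SHA prefix length in order and returns the prefix of the first attempt that
// succeeds, mirroring `cmd --sha ${GITHUB_SHA:0:7} ... || cmd --sha ${GITHUB_SHA:0:8} ...`.
async function promoteWithShaFallback(
  sha: string,
  promote: (shortSha: string) => Promise<void>, // hypothetical stand-in for `oclif promote`
  prefixLengths: number[] = [7, 8],
): Promise<string> {
  let lastError: unknown = new Error("no prefix lengths given");
  for (const length of prefixLengths) {
    const shortSha = sha.slice(0, length); // equivalent of Bash ${GITHUB_SHA:0:length}
    try {
      await promote(shortSha);
      return shortSha;
    } catch (error) {
      lastError = error; // remember the failure and try the next, longer prefix
    }
  }
  throw lastError;
}

promoteWithShaFallback("1afe0ec73a", async shortSha => {
  console.log(`promote --sha ${shortSha}`);
}).then(used => console.log(`promoted with ${used}`));
```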

```diff
@@ -316,7 +322,9 @@ jobs:
 run: strip target/x86_64-unknown-linux-gnu/release/router

 - name: Compress
-run: ./target/x86_64-unknown-linux-gnu/release/compress ./target/x86_64-unknown-linux-gnu/release/router ./router.tar.gz
+run:
+./target/x86_64-unknown-linux-gnu/release/compress
+./target/x86_64-unknown-linux-gnu/release/router ./router.tar.gz

 - name: Upload artifact
 uses: actions/upload-artifact@v3
```

.github/workflows/ci.yaml (14 changed lines)

```diff
@@ -17,10 +17,7 @@ jobs:
 POSTGRES_USER: postgres
 POSTGRES_DB: registry
 options: >-
---health-cmd pg_isready
---health-interval 10s
---health-timeout 5s
---health-retries 5
+--health-cmd pg_isready --health-interval 10s --health-timeout 5s --health-retries 5

 outputs:
 hive_token_present: ${{ steps.secrets_present.outputs.hive_token }}
```
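The unchanged `options: >-` line introduces a YAML folded block scalar. In folded style, a line break between two non-empty lines becomes a single space. The `-` chomping indicator drops the final newline. That is why collapsing the four flag lines into one line leaves the string passed to the Postgres service container unchanged.

The sketch below covers only that simple case: non-empty, equally indented lines with no significant leading or trailing spaces. This is an assumption. A real YAML parser implements the full folding rules, including blank and more-indented lines.

```typescript
// Folds the lines of a `>-` block scalar for the simple case used in ci.yaml:
// every line is non-empty and equally indented, so each line break becomes one space,
// indentation is removed, and `-` chomping leaves no trailing newline.
function foldStripSimple(blockLines: string[]): string {
  return blockLines.map(line => line.trim()).join(" ");
}

const before = foldStripSimple([
  "--health-cmd pg_isready",
  "--health-interval 10s",
  "--health-timeout 5s",
  "--health-retries 5",
]);
const after = foldStripSimple([
  "--health-cmd pg_isready --health-interval 10s --health-timeout 5s --health-retries 5",
]);
console.log(before === after);
```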

```diff
@@ -128,7 +125,10 @@ jobs:
 id: pr-label-check

 - name: Schema Check
-run: ./packages/libraries/cli/bin/dev schema:check "packages/services/api/src/modules/*/module.graphql.ts" ${{ steps.pr-label-check.outputs.SAFE_FLAG }} --github
+run:
+./packages/libraries/cli/bin/dev schema:check
+"packages/services/api/src/modules/*/module.graphql.ts" ${{
+steps.pr-label-check.outputs.SAFE_FLAG }} --github

 test:
 name: Tests
```

```diff
@@ -283,7 +283,9 @@ jobs:
 run: strip target/x86_64-unknown-linux-gnu/release/router

 - name: Compress
-run: ./target/x86_64-unknown-linux-gnu/release/compress ./target/x86_64-unknown-linux-gnu/release/router ./router.tar.gz
+run:
+./target/x86_64-unknown-linux-gnu/release/compress
+./target/x86_64-unknown-linux-gnu/release/router ./router.tar.gz

 - name: Upload artifact
 uses: actions/upload-artifact@v3
```

```diff
@@ -2,30 +2,43 @@

 ## 1. Purpose

-A primary goal of GraphQL Hive is to be inclusive to the largest number of contributors, with the most varied and diverse backgrounds possible. As such, we are committed to providing a friendly, safe and welcoming environment for all, regardless of gender, sexual orientation, ability, ethnicity, socioeconomic status, and religion (or lack thereof).
+A primary goal of GraphQL Hive is to be inclusive to the largest number of contributors, with the
+most varied and diverse backgrounds possible. As such, we are committed to providing a friendly,
+safe and welcoming environment for all, regardless of gender, sexual orientation, ability,
+ethnicity, socioeconomic status, and religion (or lack thereof).

-This code of conduct outlines our expectations for all those who participate in our community, as well as the consequences for unacceptable behavior.
+This code of conduct outlines our expectations for all those who participate in our community, as
+well as the consequences for unacceptable behavior.

-We invite all those who participate in GraphQL Hive to help us create safe and positive experiences for everyone.
+We invite all those who participate in GraphQL Hive to help us create safe and positive experiences
+for everyone.

 ## 2. Open [Source/Culture/Tech] Citizenship

-A supplemental goal of this Code of Conduct is to increase open [source/culture/tech] citizenship by encouraging participants to recognize and strengthen the relationships between our actions and their effects on our community.
+A supplemental goal of this Code of Conduct is to increase open [source/culture/tech] citizenship by
+encouraging participants to recognize and strengthen the relationships between our actions and their
+effects on our community.

-Communities mirror the societies in which they exist and positive action is essential to counteract the many forms of inequality and abuses of power that exist in society.
+Communities mirror the societies in which they exist and positive action is essential to counteract
+the many forms of inequality and abuses of power that exist in society.

-If you see someone who is making an extra effort to ensure our community is welcoming, friendly, and encourages all participants to contribute to the fullest extent, we want to know.
+If you see someone who is making an extra effort to ensure our community is welcoming, friendly, and
+encourages all participants to contribute to the fullest extent, we want to know.

 ## 3. Expected Behavior

 The following behaviors are expected and requested of all community members:

-- Participate in an authentic and active way. In doing so, you contribute to the health and longevity of this community.
+- Participate in an authentic and active way. In doing so, you contribute to the health and
+longevity of this community.
 - Exercise consideration and respect in your speech and actions.
 - Attempt collaboration before conflict.
 - Refrain from demeaning, discriminatory, or harassing behavior and speech.
-- Be mindful of your surroundings and of your fellow participants. Alert community leaders if you notice a dangerous situation, someone in distress, or violations of this Code of Conduct, even if they seem inconsequential.
-- Remember that community event venues may be shared with members of the public; please be respectful to all patrons of these locations.
+- Be mindful of your surroundings and of your fellow participants. Alert community leaders if you
+notice a dangerous situation, someone in distress, or violations of this Code of Conduct, even if
+they seem inconsequential.
+- Remember that community event venues may be shared with members of the public; please be
+respectful to all patrons of these locations.

 ## 4. Unacceptable Behavior

```

```diff
@@ -35,41 +48,62 @@ The following behaviors are considered harassment and are unacceptable within ou
 - Sexist, racist, homophobic, transphobic, ableist or otherwise discriminatory jokes and language.
 - Posting or displaying sexually explicit or violent material.
 - Posting or threatening to post other people's personally identifying information ("doxing").
-- Personal insults, particularly those related to gender, sexual orientation, race, religion, or disability.
+- Personal insults, particularly those related to gender, sexual orientation, race, religion, or
+disability.
 - Inappropriate photography or recording.
 - Inappropriate physical contact. You should have someone's consent before touching them.
-- Unwelcome sexual attention. This includes, sexualized comments or jokes; inappropriate touching, groping, and unwelcomed sexual advances.
+- Unwelcome sexual attention. This includes, sexualized comments or jokes; inappropriate touching,
+groping, and unwelcomed sexual advances.
 - Deliberate intimidation, stalking or following (online or in person).
 - Advocating for, or encouraging, any of the above behavior.
 - Sustained disruption of community events, including talks and presentations.

 ## 5. Weapons Policy

-No weapons will be allowed at GraphQL Hive events, community spaces, or in other spaces covered by the scope of this Code of Conduct. Weapons include but are not limited to guns, explosives (including fireworks), and large knives such as those used for hunting or display, as well as any other item used for the purpose of causing injury or harm to others. Anyone seen in possession of one of these items will be asked to leave immediately, and will only be allowed to return without the weapon. Community members are further expected to comply with all state and local laws on this matter.
+No weapons will be allowed at GraphQL Hive events, community spaces, or in other spaces covered by
+the scope of this Code of Conduct. Weapons include but are not limited to guns, explosives
+(including fireworks), and large knives such as those used for hunting or display, as well as any
+other item used for the purpose of causing injury or harm to others. Anyone seen in possession of
+one of these items will be asked to leave immediately, and will only be allowed to return without
+the weapon. Community members are further expected to comply with all state and local laws on this
+matter.

 ## 6. Consequences of Unacceptable Behavior

-Unacceptable behavior from any community member, including sponsors and those with decision-making authority, will not be tolerated.
+Unacceptable behavior from any community member, including sponsors and those with decision-making
+authority, will not be tolerated.

 Anyone asked to stop unacceptable behavior is expected to comply immediately.

-If a community member engages in unacceptable behavior, the community organizers may take any action they deem appropriate, up to and including a temporary ban or permanent expulsion from the community without warning (and without refund in the case of a paid event).
+If a community member engages in unacceptable behavior, the community organizers may take any action
+they deem appropriate, up to and including a temporary ban or permanent expulsion from the community
+without warning (and without refund in the case of a paid event).

 ## 7. Reporting Guidelines

-If you are subject to or witness unacceptable behavior, or have any other concerns, please notify a community organizer as soon as possible. contact@the-guild.dev.
+If you are subject to or witness unacceptable behavior, or have any other concerns, please notify a
+community organizer as soon as possible. contact@the-guild.dev.

-Additionally, community organizers are available to help community members engage with local law enforcement or to otherwise help those experiencing unacceptable behavior feel safe. In the context of in-person events, organizers will also provide escorts as desired by the person experiencing distress.
+Additionally, community organizers are available to help community members engage with local law
+enforcement or to otherwise help those experiencing unacceptable behavior feel safe. In the context
+of in-person events, organizers will also provide escorts as desired by the person experiencing
+distress.

 ## 8. Addressing Grievances

-If you feel you have been falsely or unfairly accused of violating this Code of Conduct, you should notify with a concise description of your grievance. Your grievance will be handled in accordance with our existing governing policies. [Policy](https://graphql-hive.com/privacy-policy.pdf)
+If you feel you have been falsely or unfairly accused of violating this Code of Conduct, you should
+notify with a concise description of your grievance. Your grievance will be handled in accordance
+with our existing governing policies. [Policy](https://graphql-hive.com/privacy-policy.pdf)

 ## 9. Scope

-We expect all community participants (contributors, paid or otherwise; sponsors; and other guests) to abide by this Code of Conduct in all community venues--online and in-person--as well as in all one-on-one communications pertaining to community business.
+We expect all community participants (contributors, paid or otherwise; sponsors; and other guests)
+to abide by this Code of Conduct in all community venues--online and in-person--as well as in all
+one-on-one communications pertaining to community business.

-This code of conduct and its related procedures also applies to unacceptable behavior occurring outside the scope of community activities when such behavior has the potential to adversely affect the safety and well-being of community members.
+This code of conduct and its related procedures also applies to unacceptable behavior occurring
+outside the scope of community activities when such behavior has the potential to adversely affect
+the safety and well-being of community members.

 ## 10. Contact info

```

```diff
@@ -77,9 +111,13 @@ contact@the-guild.dev

 ## 11. License and attribution

-The Citizen Code of Conduct is distributed by [Stumptown Syndicate](http://stumptownsyndicate.org) under a [Creative Commons Attribution-ShareAlike license](http://creativecommons.org/licenses/by-sa/3.0/).
+The Citizen Code of Conduct is distributed by [Stumptown Syndicate](http://stumptownsyndicate.org)
+under a
+[Creative Commons Attribution-ShareAlike license](http://creativecommons.org/licenses/by-sa/3.0/).

-Portions of text derived from the [Django Code of Conduct](https://www.djangoproject.com/conduct/) and the [Geek Feminism Anti-Harassment Policy](http://geekfeminism.wikia.com/wiki/Conference_anti-harassment/Policy).
+Portions of text derived from the [Django Code of Conduct](https://www.djangoproject.com/conduct/)
+and the
+[Geek Feminism Anti-Harassment Policy](http://geekfeminism.wikia.com/wiki/Conference_anti-harassment/Policy).

 _Revision 2.3. Posted 6 March 2017._

```

```diff
@@ -87,4 +125,5 @@ _Revision 2.2. Posted 4 February 2016._

 _Revision 2.1. Posted 23 June 2014._

-_Revision 2.0, adopted by the [Stumptown Syndicate](http://stumptownsyndicate.org) board on 10 January 2013. Posted 17 March 2013._
+_Revision 2.0, adopted by the [Stumptown Syndicate](http://stumptownsyndicate.org) board on 10
+January 2013. Posted 17 March 2013._
```

README.md (33 changed lines)

```diff
@@ -1,8 +1,10 @@
 # GraphQL Hive

-GraphQL Hive provides all the tools the get visibility of your GraphQL architecture at all stages, from standalone APIs to composed schemas (Federation, Stitching).
+GraphQL Hive provides all the tools the get visibility of your GraphQL architecture at all stages,
+from standalone APIs to composed schemas (Federation, Stitching).

-- Visit [graphql-hive.com](https://graphql-hive.com) ([status page](https://status.graphql-hive.com/))
+- Visit [graphql-hive.com](https://graphql-hive.com)
+([status page](https://status.graphql-hive.com/))
 - [Read the announcement blog post](https://www.the-guild.dev/blog/announcing-graphql-hive-public)
 - [Read the docs](https://docs.graphql-hive.com)

```

```diff
@@ -10,10 +12,12 @@ GraphQL Hive provides all the tools the get visibility of your GraphQL architect

 GraphQL Hive has been built with 3 main objectives in mind:

-- **Help GraphQL developers to get to know their GraphQL APIs** a little more with our Schema Registry, Performance Monitoring, Alerts, and Integrations.
+- **Help GraphQL developers to get to know their GraphQL APIs** a little more with our Schema
+Registry, Performance Monitoring, Alerts, and Integrations.
 - **Support all kinds of GraphQL APIs**, from Federation, and Stitching, to standalone APIs.
 - **Open Source at the heart**: 100% open-source and build in public with the community.
-- **A plug and play SaaS solution**: to give access to Hive to most people with a generous free "Hobby plan"
+- **A plug and play SaaS solution**: to give access to Hive to most people with a generous free
+"Hobby plan"

 ## Features Overview

```

```diff
@@ -21,18 +25,22 @@ GraphQL Hive has been built with 3 main objectives in mind:

 GraphQL Hive offers 3 useful features to manage your GraphQL API:

-- **Prevent breaking changes** - GraphQL Hive will run a set of checks and notify your team via Slack, GitHub, or within the application.
+- **Prevent breaking changes** - GraphQL Hive will run a set of checks and notify your team via
+Slack, GitHub, or within the application.
 - **Data-driven** definition of a “breaking change” based on Operations Monitoring.
-- **History of changes** - an access to the full history of changes, even on a complex composed schema (Federation, Stitching).
+- **History of changes** - an access to the full history of changes, even on a complex composed
+schema (Federation, Stitching).
 - **High-availability and multi-zone CDN** service based on Cloudflare to access Schema Registry

 ### Monitoring

-Once a Schema is deployed, **it is important to be aware of how it is used and what is the experience of its final users**.
+Once a Schema is deployed, **it is important to be aware of how it is used and what is the
+experience of its final users**.

 ## Self-hosted

-GraphQL Hive is completely open-source under the MIT license, meaning that you are free to host on your own infrastructure.
+GraphQL Hive is completely open-source under the MIT license, meaning that you are free to host on
+your own infrastructure.

 GraphQL Hive helps you get a global overview of the usage of your GraphQL API with:

```
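The README describes a data-driven definition of a breaking change based on operations monitoring. One way to picture the idea is as a filter. A change that is breaking by static schema comparison counts only if monitoring shows operations still request the affected schema coordinate.

This is a hypothetical sketch, not Hive's implementation. The `SchemaChange` shape, the coordinate strings, and the "more than zero requests" threshold are all assumptions.

```typescript
// A schema change as a diff might report it (hypothetical shape).
interface SchemaChange {
  coordinate: string; // schema coordinate, e.g. "User.email"
  breaking: boolean; // breaking according to a static schema comparison
}

// Keeps the statically breaking changes that monitored operations actually rely on.
// `usageCounts` maps a schema coordinate to the number of operations that requested it
// in the monitoring window (hypothetical input; a missing entry means no recorded usage).
function breakingChangesInUse(
  changes: SchemaChange[],
  usageCounts: Record<string, number>,
): SchemaChange[] {
  return changes.filter(change => change.breaking && (usageCounts[change.coordinate] ?? 0) > 0);
}

const changes: SchemaChange[] = [
  { coordinate: "User.email", breaking: true }, // removed, but still requested
  { coordinate: "User.legacyId", breaking: true }, // removed, no recorded usage
  { coordinate: "User.avatarUrl", breaking: false }, // newly added field
];
console.log(breakingChangesInUse(changes, { "User.email": 42 }).map(change => change.coordinate));
```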

```diff
@@ -43,13 +51,16 @@ GraphQL Hive helps you get a global overview of the usage of your GraphQL API wi

 ### Integrations

-GraphQL Hive is well integrated with **Slack** and most **CI/CD** systems to get you up and running as smoothly as possible!
+GraphQL Hive is well integrated with **Slack** and most **CI/CD** systems to get you up and running
+as smoothly as possible!

 GraphQL Hive can notify your team when schema changes occur, either via Slack or a custom webhook.

-Also, the Hive CLI allows integration of the schema checks mechanism to all CI/CD systems (GitHub, BitBucket, Azure, and others). The same applies for schema publishing and operations checks.
+Also, the Hive CLI allows integration of the schema checks mechanism to all CI/CD systems (GitHub,
+BitBucket, Azure, and others). The same applies for schema publishing and operations checks.

-If you are using GitHub, you can directly benefit from the **GraphQL Hive app that will automatically add status checks to your PRs**!
+If you are using GitHub, you can directly benefit from the **GraphQL Hive app that will
+automatically add status checks to your PRs**!

 ### Join us in building the future of GraphQL Hive

```

codegen.yml (30 changed lines)

```diff
@@ -21,9 +21,12 @@ generates:
 DateTime: string
 SafeInt: number
 mappers:
-SchemaChangeConnection: ../shared/mappers#SchemaChangeConnection as SchemaChangeConnectionMapper
-SchemaErrorConnection: ../shared/mappers#SchemaErrorConnection as SchemaErrorConnectionMapper
-OrganizationConnection: ../shared/mappers#OrganizationConnection as OrganizationConnectionMapper
+SchemaChangeConnection:
+../shared/mappers#SchemaChangeConnection as SchemaChangeConnectionMapper
+SchemaErrorConnection:
+../shared/mappers#SchemaErrorConnection as SchemaErrorConnectionMapper
+OrganizationConnection:
+../shared/mappers#OrganizationConnection as OrganizationConnectionMapper
 UserConnection: ../shared/mappers#UserConnection as UserConnectionMapper
 ActivityConnection: ../shared/mappers#ActivityConnection as ActivityConnectionMapper
 MemberConnection: ../shared/mappers#MemberConnection as MemberConnectionMapper
```
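Most `mappers` entries in `codegen.yml` use the form `path#ExportName as Alias`. A few, such as `'{}'` or `'StripeTypes.Invoice'`, are plain type expressions instead.

To show how an entry breaks down, here is a small hypothetical parser. It is not GraphQL Code Generator's own code, and the `import type` statement it prints only illustrates the general shape of an import, not the generator's exact output.

```typescript
// One entry of the `mappers` map: an imported type or an inline type expression.
type MapperEntry =
  | { kind: "import"; path: string; exportName: string; alias: string }
  | { kind: "inline"; type: string };

// Matches "path#ExportName" with an optional " as Alias" suffix.
const MAPPER_IMPORT = /^(.*?)#(\S+)(?:\s+as\s+(\S+))?$/;

function parseMapper(value: string): MapperEntry {
  const match = MAPPER_IMPORT.exec(value.trim());
  if (!match) return { kind: "inline", type: value }; // no '#': use the type expression as-is
  const [, path, exportName, alias] = match;
  return { kind: "import", path, exportName, alias: alias ?? exportName };
}

// Renders an imported mapper as an `import type` statement; inline types need no import.
function toImportStatement(entry: MapperEntry): string | null {
  if (entry.kind === "inline") return null;
  const specifier =
    entry.alias === entry.exportName ? entry.exportName : `${entry.exportName} as ${entry.alias}`;
  return `import type { ${specifier} } from '${entry.path}';`;
}

console.log(
  toImportStatement(
    parseMapper("../shared/mappers#SchemaChangeConnection as SchemaChangeConnectionMapper"),
  ),
);
console.log(parseMapper("StripeTypes.Invoice"));
```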

```diff
@@ -31,16 +34,20 @@ generates:
 TargetConnection: ../shared/mappers#TargetConnection as TargetConnectionMapper
 SchemaConnection: ../shared/mappers#SchemaConnection as SchemaConnectionMapper
 TokenConnection: ../shared/mappers#TokenConnection as TokenConnectionMapper
-OperationStatsConnection: ../shared/mappers#OperationStatsConnection as OperationStatsConnectionMapper
-ClientStatsConnection: ../shared/mappers#ClientStatsConnection as ClientStatsConnectionMapper
+OperationStatsConnection:
+../shared/mappers#OperationStatsConnection as OperationStatsConnectionMapper
+ClientStatsConnection:
+../shared/mappers#ClientStatsConnection as ClientStatsConnectionMapper
 OperationsStats: ../shared/mappers#OperationsStats as OperationsStatsMapper
 DurationStats: ../shared/mappers#DurationStats as DurationStatsMapper
 SchemaComparePayload: ../shared/mappers#SchemaComparePayload as SchemaComparePayloadMapper
 SchemaCompareResult: ../shared/mappers#SchemaCompareResult as SchemaCompareResultMapper
-SchemaVersionConnection: ../shared/mappers#SchemaVersionConnection as SchemaVersionConnectionMapper
+SchemaVersionConnection:
+../shared/mappers#SchemaVersionConnection as SchemaVersionConnectionMapper
 SchemaVersion: ../shared/mappers#SchemaVersion as SchemaVersionMapper
 Schema: ../shared/mappers#Schema as SchemaMapper
-PersistedOperationConnection: ../shared/mappers#PersistedOperationConnection as PersistedOperationMapper
+PersistedOperationConnection:
+../shared/mappers#PersistedOperationConnection as PersistedOperationMapper
 Organization: ../shared/entities#Organization as OrganizationMapper
 Project: ../shared/entities#Project as ProjectMapper
 Target: ../shared/entities#Target as TargetMapper
```

```diff
@@ -55,13 +62,15 @@ generates:
 AdminQuery: '{}'
 AdminStats: '{ daysLimit?: number | null }'
 AdminGeneralStats: '{ daysLimit?: number | null }'
-AdminOrganizationStats: ../shared/entities#AdminOrganizationStats as AdminOrganizationStatsMapper
+AdminOrganizationStats:
+../shared/entities#AdminOrganizationStats as AdminOrganizationStatsMapper
 UsageEstimation: '../shared/mappers#TargetsEstimationFilter'
 UsageEstimationScope: '../shared/mappers#TargetsEstimationDateFilter'
 BillingPaymentMethod: 'StripeTypes.PaymentMethod.Card'
 BillingDetails: 'StripeTypes.PaymentMethod.BillingDetails'
 BillingInvoice: 'StripeTypes.Invoice'
-OrganizationGetStarted: ../shared/entities#OrganizationGetStarted as OrganizationGetStartedMapper
+OrganizationGetStarted:
+../shared/entities#OrganizationGetStarted as OrganizationGetStartedMapper
 SchemaExplorer: ../shared/mappers#SchemaExplorerMapper
 GraphQLObjectType: ../shared/mappers#GraphQLObjectTypeMapper
 GraphQLInterfaceType: ../shared/mappers#GraphQLInterfaceTypeMapper
```

```diff
@@ -74,7 +83,8 @@ generates:
 GraphQLField: ../shared/mappers#GraphQLFieldMapper
 GraphQLInputField: ../shared/mappers#GraphQLInputFieldMapper
 GraphQLArgument: ../shared/mappers#GraphQLArgumentMapper
-OrganizationInvitation: ../shared/entities#OrganizationInvitation as OrganizationInvitationMapper
+OrganizationInvitation:
+../shared/entities#OrganizationInvitation as OrganizationInvitationMapper
 OIDCIntegration: '../shared/entities#OIDCIntegration as OIDCIntegrationMapper'
 User: '../shared/entities#User as UserMapper'
 plugins:
```

```diff
@@ -182,8 +182,14 @@ const schemaApi = deploySchema({
 broker: cfBroker,
 });

-const supertokensApiKey = new random.RandomPassword('supertokens-api-key', { length: 31, special: false });
-const auth0LegacyMigrationKey = new random.RandomPassword('auth0-legacy-migration-key', { length: 69, special: false });
+const supertokensApiKey = new random.RandomPassword('supertokens-api-key', {
+length: 31,
+special: false,
+});
+const auth0LegacyMigrationKey = new random.RandomPassword('auth0-legacy-migration-key', {
+length: 69,
+special: false,
+});

 const oauthConfig = new pulumi.Config('oauth');

```

```diff
@@ -4,10 +4,6 @@
 "scripts": {
 "typecheck": "tsc --noEmit"
 },
-"devDependencies": {
-"@types/mime-types": "2.1.1",
-"@types/node": "18.11.5"
-},
 "dependencies": {
 "@manypkg/get-packages": "1.1.3",
 "@pulumi/azure": "5.23.0",
```

```diff
@@ -17,5 +13,9 @@
 "@pulumi/pulumi": "3.44.1",
 "@pulumi/random": "4.8.2",
 "pg-connection-string": "2.5.0"
+},
+"devDependencies": {
+"@types/mime-types": "2.1.1",
+"@types/node": "18.11.5"
 }
 }
```

```diff
@@ -206,6 +206,6 @@ export function deployApp({
 ],
 port: 3000,
 },
-[graphql.service, graphql.deployment, dbMigrations]
+[graphql.service, graphql.deployment, dbMigrations],
 ).deploy();
 }
```

```diff
@@ -29,7 +29,9 @@ export function deployStripeBilling({
 dbMigrations: DbMigrations;
 }) {
 const rawConnectionString = apiConfig.requireSecret('postgresConnectionString');
-const connectionString = rawConnectionString.apply(rawConnectionString => parse(rawConnectionString));
+const connectionString = rawConnectionString.apply(rawConnectionString =>
+parse(rawConnectionString),
+);

 return new RemoteArtifactAsServiceDeployment(
 'stripe-billing',
```
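`parse` here is presumably the parser from `pg-connection-string`, which is listed in this project's dependencies. It is applied inside `.apply` because the connection string is a Pulumi secret output, so its value is only available at deployment time.

As a dependency-free illustration of what such a parser extracts, the sketch below reads the same fields with the WHATWG `URL` class. This is a swapped-in technique, not how `pg-connection-string` works. It ignores query-string options, socket paths, and other cases that library handles, and the default port of 5432 and the example credentials are assumptions.

```typescript
// Subset of fields a Postgres connection-string parser typically returns.
interface PgConnectionConfig {
  host: string;
  port: number;
  user: string;
  password: string;
  database: string;
}

// Parses "postgres://user:password@host:port/database" with the WHATWG URL parser.
// Assumption: URL-style strings only; port defaults to 5432 when omitted.
function parsePostgresUrl(connectionString: string): PgConnectionConfig {
  const url = new URL(connectionString);
  if (url.protocol !== "postgres:" && url.protocol !== "postgresql:") {
    throw new Error(`Unsupported protocol: ${url.protocol}`);
  }
  return {
    host: url.hostname,
    port: url.port ? Number(url.port) : 5432,
    user: decodeURIComponent(url.username), // URL keeps credentials percent-encoded
    password: decodeURIComponent(url.password),
    database: decodeURIComponent(url.pathname.slice(1)), // drop the leading "/"
  };
}

console.log(parsePostgresUrl("postgres://postgres:p%40ss@db.internal:5432/registry"));
```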

```diff
@@ -56,6 +58,6 @@ export function deployStripeBilling({
 packageInfo: packageHelper.npmPack('@hive/stripe-billing'),
 port: 4000,
 },
-[dbMigrations, usageEstimator.service, usageEstimator.deployment]
+[dbMigrations, usageEstimator.service, usageEstimator.deployment],
 ).deploy();
 }
```

```diff
@@ -7,11 +7,16 @@ function toExpressionList(items: string[]): string {
 return items.map(v => `"${v}"`).join(' ');
 }

-export function deployCloudFlareSecurityTransform(options: { envName: string; ignoredPaths: string[] }) {
+export function deployCloudFlareSecurityTransform(options: {
+envName: string;
+ignoredPaths: string[];
+}) {
 // We deploy it only once, because CloudFlare is not super friendly for multiple deployments of "http_response_headers_transform" rules
 // The single rule, deployed to prod, covers all other envs, and infers the hostname dynamically.
 if (options.envName !== 'prod') {
-console.warn(`Skipped deploy security headers (see "cloudflare-security.ts") for env ${options.envName}`);
+console.warn(
+`Skipped deploy security headers (see "cloudflare-security.ts") for env ${options.envName}`,
+);
 return;
 }

```
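`toExpressionList` wraps each path in double quotes and joins the results with spaces. That matches the space-separated set syntax of Cloudflare's Rules language (`field in { ... }`). How the list is embedded in the rule is outside this hunk.

The expression built below is an assumption for illustration, including the `not (...)` wrapper, the `http.request.uri.path` field, and the example paths. The prod-only guard mirrors the early `return` for non-prod environments in the hunk.

```typescript
// Same as the function in the hunk: quote each item and separate items with spaces.
function toExpressionList(items: string[]): string {
  return items.map(v => `"${v}"`).join(" ");
}

// Hypothetical rule expression: match every request path except the ignored ones.
function securityHeadersExpression(ignoredPaths: string[]): string {
  return `not (http.request.uri.path in {${toExpressionList(ignoredPaths)}})`;
}

// Mirrors the early return: only the 'prod' environment deploys the rule.
function shouldDeploySecurityTransform(envName: string): boolean {
  return envName === "prod";
}

console.log(securityHeadersExpression(["/api/health", "/graphql"]));
console.log(shouldDeploySecurityTransform("staging"));
```

`toExpressionList` does not escape double quotes inside items, so it implicitly assumes the ignored paths contain none.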

```diff
@@ -24,7 +24,9 @@ export function deployDbMigrations({
 kafka: Kafka;
 }) {
 const rawConnectionString = apiConfig.requireSecret('postgresConnectionString');
-const connectionString = rawConnectionString.apply(rawConnectionString => parse(rawConnectionString));
+const connectionString = rawConnectionString.apply(rawConnectionString =>
+parse(rawConnectionString),
+);

 const { job } = new RemoteArtifactAsServiceDeployment(
 'db-migrations',
```

@@ -50,7 +52,7 @@ export function deployDbMigrations({
 packageInfo: packageHelper.npmPack('@hive/storage'),
 },
 [clickhouse.deployment, clickhouse.service],
-clickhouse.service
+clickhouse.service,
 ).deployAsJob();
 
 return job;


@@ -55,7 +55,7 @@ export function deployEmails({
 packageInfo: packageHelper.npmPack('@hive/emails'),
 replicas: 1,
 },
-[redis.deployment, redis.service]
+[redis.deployment, redis.service],
 ).deploy();
 
 return { deployment, service, localEndpoint: serviceLocalEndpoint(service) };


@@ -72,7 +72,9 @@ export function deployGraphQL({
 };
 }) {
 const rawConnectionString = apiConfig.requireSecret('postgresConnectionString');
-const connectionString = rawConnectionString.apply(rawConnectionString => parse(rawConnectionString));
+const connectionString = rawConnectionString.apply(rawConnectionString =>
+parse(rawConnectionString),
+);
 
 return new RemoteArtifactAsServiceDeployment(
 'graphql-api',


@@ -148,6 +150,6 @@ export function deployGraphQL({
 clickhouse.service,
 rateLimit.deployment,
 rateLimit.service,
-]
+],
 ).deploy();
 }


@@ -10,7 +10,7 @@ export function deployCloudflarePolice({ envName, rootDns }: { envName: string;
 cfCustomConfig.require('zoneId'),
 cloudflareProviderConfig.require('accountId'),
 cfCustomConfig.requireSecret('policeApiToken'),
-rootDns
+rootDns,
 );
 
 return police.deploy();


@@ -50,7 +50,7 @@ export function deployProxy({
 path: '/',
 service: docs.service,
 },
-]
+],
 )
 .registerService({ record: appHostname }, [
 {


@@ -32,7 +32,9 @@ export function deployRateLimit({
 emails: Emails;
 }) {
 const rawConnectionString = apiConfig.requireSecret('postgresConnectionString');
-const connectionString = rawConnectionString.apply(rawConnectionString => parse(rawConnectionString));
+const connectionString = rawConnectionString.apply(rawConnectionString =>
+parse(rawConnectionString),
+);
 
 return new RemoteArtifactAsServiceDeployment(
 'rate-limiter',


@@ -60,6 +62,6 @@ export function deployRateLimit({
 packageInfo: packageHelper.npmPack('@hive/rate-limit'),
 port: 4000,
 },
-[dbMigrations, usageEstimator.service, usageEstimator.deployment]
+[dbMigrations, usageEstimator.service, usageEstimator.deployment],
 ).deploy();
 }


@@ -48,6 +48,6 @@ export function deploySchema({
 replicas: 2,
 pdb: true,
 },
-[redis.deployment, redis.service]
+[redis.deployment, redis.service],
 ).deploy();
 }


@@ -26,7 +26,9 @@ export function deployTokens({
 heartbeat?: string;
 }) {
 const rawConnectionString = apiConfig.requireSecret('postgresConnectionString');
-const connectionString = rawConnectionString.apply(rawConnectionString => parse(rawConnectionString));
+const connectionString = rawConnectionString.apply(rawConnectionString =>
+parse(rawConnectionString),
+);
 
 return new RemoteArtifactAsServiceDeployment(
 'tokens-service',


@@ -50,6 +52,6 @@ export function deployTokens({
 HEARTBEAT_ENDPOINT: heartbeat ?? '',
 },
 },
-[dbMigrations]
+[dbMigrations],
 ).deploy();
 }


@@ -46,6 +46,6 @@ export function deployUsageEstimation({
 packageInfo: packageHelper.npmPack('@hive/usage-estimator'),
 port: 4000,
 },
-[dbMigrations]
+[dbMigrations],
 ).deploy();
 }


@@ -87,6 +87,6 @@ export function deployUsageIngestor({
 maxReplicas: maxReplicas,
 },
 },
-[clickhouse.deployment, clickhouse.service, dbMigrations]
+[clickhouse.deployment, clickhouse.service, dbMigrations],
 ).deploy();
 }


@@ -73,6 +73,6 @@ export function deployUsage({
 maxReplicas: maxReplicas,
 },
 },
-[dbMigrations, tokens.deployment, tokens.service, rateLimit.deployment, rateLimit.service]
+[dbMigrations, tokens.deployment, tokens.service, rateLimit.deployment, rateLimit.service],
 ).deploy();
 }


@@ -50,6 +50,6 @@ export function deployWebhooks({
 packageInfo: packageHelper.npmPack('@hive/webhooks'),
 replicas: 1,
 },
-[redis.deployment, redis.service]
+[redis.deployment, redis.service],
 ).deploy();
 }


@@ -80,7 +80,7 @@ export class BotKube {
 },
 {
 dependsOn: [ns],
-}
+},
 );
 }
 }


@@ -37,7 +37,7 @@ export class CertManager {
 },
 {
 dependsOn: [certManager],
-}
+},
 );
 
 return {


@@ -8,7 +8,7 @@ export class Clickhouse {
 protected options: {
 env?: kx.types.Container['env'];
 sentryDsn: string;
-}
+},
 ) {}
 
 deploy() {


@@ -79,7 +79,7 @@ export class Clickhouse {
 },
 {
 annotations: metadata.annotations,
-}
+},
 ),
 });
 const service = deployment.createService({});


@@ -12,7 +12,7 @@ export class CloudflareCDN {
 authPrivateKey: pulumi.Output<string>;
 sentryDsn: string;
 release: string;
-}
+},
 ) {}
 
 deploy() {


@@ -21,7 +21,10 @@ export class CloudflareCDN {
 });
 
 const script = new cf.WorkerScript('hive-ha-worker', {
-content: readFileSync(resolve(__dirname, '../../packages/services/cdn-worker/dist/worker.js'), 'utf-8'),
+content: readFileSync(
+resolve(__dirname, '../../packages/services/cdn-worker/dist/worker.js'),
+'utf-8',
+),
 name: `hive-storage-cdn-${this.config.envName}`,
 kvNamespaceBindings: [
 {


@@ -78,12 +81,15 @@ export class CloudflareBroker {
 secretSignature: pulumi.Output<string>;
 sentryDsn: string;
 release: string;
-}
+},
 ) {}
 
 deploy() {
 const script = new cf.WorkerScript('hive-broker-worker', {
-content: readFileSync(resolve(__dirname, '../../packages/services/broker-worker/dist/worker.js'), 'utf-8'),
+content: readFileSync(
+resolve(__dirname, '../../packages/services/broker-worker/dist/worker.js'),
+'utf-8',
+),
 name: `hive-broker-${this.config.envName}`,
 secretTextBindings: [
 {


@@ -5,7 +5,9 @@ export function isProduction(deploymentEnv: DeploymentEnvironment | string): boo
 }
 
 export function isStaging(deploymentEnv: DeploymentEnvironment | string): boolean {
-return isDeploymentEnvironment(deploymentEnv) ? deploymentEnv.ENVIRONMENT === 'staging' : deploymentEnv === 'staging';
+return isDeploymentEnvironment(deploymentEnv)
+? deploymentEnv.ENVIRONMENT === 'staging'
+: deploymentEnv === 'staging';
 }
 
 export function isDeploymentEnvironment(value: any): value is DeploymentEnvironment {

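`isStaging` accepts either a full environment object or a bare environment name, and uses the `isDeploymentEnvironment` type guard to decide which comparison to make. Neither the `DeploymentEnvironment` type nor the guard's body appears in this hunk, so the sketch below assumes the smallest shape that fits (an object with a string `ENVIRONMENT` field) and types the guard's parameter as `unknown` rather than `any`.

```typescript
// Assumed minimal shape of DeploymentEnvironment; the real type may have more fields.
interface DeploymentEnvironment {
  ENVIRONMENT: string;
}

// Hypothetical type guard: any object with a string ENVIRONMENT field qualifies.
function isDeploymentEnvironment(value: unknown): value is DeploymentEnvironment {
  return (
    typeof value === 'object' &&
    value !== null &&
    typeof (value as { ENVIRONMENT?: unknown }).ENVIRONMENT === 'string'
  );
}

// Accepts either the environment object or a plain environment name.
function isStaging(deploymentEnv: DeploymentEnvironment | string): boolean {
  return isDeploymentEnvironment(deploymentEnv)
    ? deploymentEnv.ENVIRONMENT === 'staging'
    : deploymentEnv === 'staging';
}

console.log(isStaging('staging'), isStaging({ ENVIRONMENT: 'prod' }));
```

Because the guard narrows the union, the `false` branch sees `deploymentEnv` as a `string`, so no cast is needed there.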

@@ -6,7 +6,9 @@ export function serviceLocalEndpoint(service: k8s.types.input.core.v1.Service) {
 const defaultPort = (spec?.ports || [])[0];
 const portText = defaultPort ? `:${defaultPort.port}` : '';
 
-return `http://${metadata?.name}.${metadata?.namespace || 'default'}.svc.cluster.local${portText}`;
+return `http://${metadata?.name}.${
+metadata?.namespace || 'default'
+}.svc.cluster.local${portText}`;
 });
 }
 

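`serviceLocalEndpoint` builds the in-cluster DNS address of a Kubernetes Service: `<name>.<namespace>.svc.cluster.local`, with `default` as the namespace fallback and the first declared port appended when there is one. The real function works on Pulumi inputs inside a callback. The sketch below runs the same logic on plain, already-resolved values. `ServiceLike` is a simplified stand-in for the Service type, and `my-service` is an example name.

```typescript
// Simplified stand-in for the Kubernetes Service input type (assumption):
// only the fields the endpoint logic reads, as plain values instead of Pulumi inputs.
interface ServiceLike {
  metadata?: { name?: string; namespace?: string };
  spec?: { ports?: Array<{ port: number }> };
}

// Same URL scheme as serviceLocalEndpoint: <name>.<namespace>.svc.cluster.local[:port]
function localEndpoint(service: ServiceLike): string {
  const { metadata, spec } = service;
  // Only the first declared port is used; no port suffix if none is declared.
  const defaultPort = (spec?.ports || [])[0];
  const portText = defaultPort ? `:${defaultPort.port}` : '';
  return `http://${metadata?.name}.${metadata?.namespace || 'default'}.svc.cluster.local${portText}`;
}

console.log(localEndpoint({ metadata: { name: 'my-service' }, spec: { ports: [{ port: 4000 }] } }));
```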

@@ -210,7 +210,10 @@ export class Observability {
 regex: '(.+)',
 },
 {
-source_labels: ['__address__', '__meta_kubernetes_pod_annotation_prometheus_io_port'],
+source_labels: [
+'__address__',
+'__meta_kubernetes_pod_annotation_prometheus_io_port',
+],
 action: 'replace',
 regex: '([^:]+)(?::d+)?;(d+)',
 replacement: '$1:$2',

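This relabel rule rewrites a pod's scrape address so that it uses the port from the pod's `prometheus.io/port` annotation. Prometheus joins the `source_labels` values with `;`, matches the fully anchored regex, and on a match writes the `replacement` into the target label. The sketch reproduces that step in plain TypeScript. It assumes the conventional pattern `([^:]+)(?::\d+)?;(\d+)`, and `relabelAddress` is a hypothetical name.

```typescript
// Sketch of a Prometheus "replace" relabel step for this rule, assuming the
// conventional pattern ([^:]+)(?::\d+)?;(\d+) with replacement $1:$2.
function relabelAddress(address: string, annotatedPort: string): string | null {
  // Prometheus joins the source_labels values with the default separator ";".
  const joined = `${address};${annotatedPort}`;
  // Relabel regexes are fully anchored in Prometheus, hence ^ and $.
  const match = /^([^:]+)(?::\d+)?;(\d+)$/.exec(joined);
  // No match: Prometheus leaves the target label unchanged (signalled here as null).
  if (!match) return null;
  // $1 = host from __address__, $2 = port from the annotation.
  return `${match[1]}:${match[2]}`;
}

console.log(relabelAddress('10.0.0.5:8080', '9090'));
```

An existing port on `__address__` is dropped, a bare host still matches, and a pod without the annotation keeps its original address.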

@@ -358,7 +361,7 @@ export class Observability {
 },
 {
 dependsOn: [ns],
-}
+},
 );
 }
 }


@@ -14,7 +14,7 @@ export function normalizeEnv(env: kx.types.Container['env']): any[] {
 export class PodBuilder extends kx.PodBuilder {
 public asExtendedDeploymentSpec(
 args?: kx.types.PodBuilderDeploymentSpec,
-metadata?: k8s.types.input.meta.v1.ObjectMeta
+metadata?: k8s.types.input.meta.v1.ObjectMeta,
 ): pulumi.Output<k8s.types.input.apps.v1.DeploymentSpec> {
 const podName = this.podSpec.containers.apply((containers: any) => {
 return pulumi.output(containers[0].name);


@@ -9,7 +9,7 @@ export class HivePolice {
 private zoneId: string,
 private accountId: string,
 private cfToken: pulumi.Output<string>,
-private rootDns: string
+private rootDns: string,
 ) {}
 
 deploy() {


@@ -18,7 +18,10 @@ export class HivePolice {
 });
 
 const script = new cf.WorkerScript('hive-police-worker', {
-content: readFileSync(resolve(__dirname, '../../packages/services/police-worker/dist/worker.js'), 'utf-8'),
+content: readFileSync(
+resolve(__dirname, '../../packages/services/police-worker/dist/worker.js'),
+'utf-8',
+),
 name: `hive-police-${this.envName}`,
 kvNamespaceBindings: [
 {


@@ -10,7 +10,7 @@ export class Redis {
 protected options: {
 env?: kx.types.Container['env'];
 password: string;
-}
+},
 ) {}
 
 deploy({ limits }: { limits: k8s.types.input.core.v1.ResourceRequirements['limits'] }) {


@@ -105,7 +105,7 @@ fi
 },
 {
 annotations: metadata.annotations,
-}
+},
 ),
 });
 


@@ -38,7 +38,7 @@ export class RemoteArtifactAsServiceDeployment {
 };
 },
 protected dependencies?: Array<pulumi.Resource | undefined | null>,
-protected parent?: pulumi.Resource | null
+protected parent?: pulumi.Resource | null,
 ) {}
 
 deployAsJob() {


@@ -50,7 +50,7 @@ export class RemoteArtifactAsServiceDeployment {
 {
 spec: pb.asJobSpec(),
 },
-{ dependsOn: this.dependencies?.filter(isDefined) }
+{ dependsOn: this.dependencies?.filter(isDefined) },
 );
 
 return { job };

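`this.dependencies?.filter(isDefined)` removes `null` and `undefined` entries before they reach Pulumi's `dependsOn`, and evaluates to `undefined` when no dependencies were passed at all. `isDefined` itself is not part of this diff. A common way to write such a guard is sketched below, with a plain `Resource` interface standing in for `pulumi.Resource`. The guard's body and the example resource names are assumptions.

```typescript
// Hypothetical isDefined: a type guard that drops null and undefined.
function isDefined<T>(value: T | null | undefined): value is T {
  return value !== null && value !== undefined;
}

// Stand-in for pulumi.Resource in this sketch.
interface Resource {
  name: string;
}

const dependencies: Array<Resource | undefined | null> = [
  { name: 'db-migrations' },
  undefined,
  null,
  { name: 'redis' },
];

// filter(isDefined) narrows the element type to Resource and keeps the original order.
const dependsOn: Resource[] = dependencies.filter(isDefined);
console.log(dependsOn.map(r => r.name));
```

Writing the helper as a type guard, rather than using `filter(Boolean)`, lets TypeScript narrow the array type. It also keeps falsy but valid values such as `0`.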

@@ -214,13 +214,13 @@ export class RemoteArtifactAsServiceDeployment {
 },
 {
 annotations: metadata.annotations,
-}
+},
 ),
 },
 {
 dependsOn: this.dependencies?.filter(isDefined),
 parent: this.parent ?? undefined,
-}
+},
 );
 
 if (this.options.pdb) {


@@ -265,7 +265,7 @@ export class RemoteArtifactAsServiceDeployment {
 },
 {
 dependsOn: [deployment, service],
-}
+},
 );
 }
 


@@ -18,7 +18,7 @@ export class Proxy {
 virtualHost?: Output<string>;
 httpsUpstream?: boolean;
 withWwwDomain?: boolean;
-}[]
+}[],
 ) {
 const cert = new k8s.apiextensions.CustomResource(`cert-${dns.record}`, {
 apiVersion: 'cert-manager.io/v1',


@@ -104,7 +104,7 @@ export class Proxy {
 },
 {
 dependsOn: [cert, this.lbService!],
-}
+},
 );
 
 return this;


@@ -183,7 +183,10 @@ export class Proxy {
 
 this.lbService = proxyController.getResource('v1/Service', 'contour/contour-proxy-envoy');
 
-const contourDeployment = proxyController.getResource('apps/v1/Deployment', 'contour/contour-proxy-contour');
+const contourDeployment = proxyController.getResource(
+'apps/v1/Deployment',
+'contour/contour-proxy-contour',
+);
 new k8s.policy.v1.PodDisruptionBudget('contour-pdb', {
 spec: {
 minAvailable: 1,


@@ -191,7 +194,10 @@ export class Proxy {
 },
 });
 
-const envoyDaemonset = proxyController.getResource('apps/v1/ReplicaSet', 'contour/contour-proxy-envoy');
+const envoyDaemonset = proxyController.getResource(
+'apps/v1/ReplicaSet',
+'contour/contour-proxy-envoy',
+);
 new k8s.policy.v1.PodDisruptionBudget('envoy-pdb', {
 spec: {
 minAvailable: 1,


@@ -219,7 +225,7 @@ export class Proxy {
 },
 {
 dependsOn: [this.lbService],
-}
+},
 );
 
 return this;


@@ -65,7 +65,18 @@ services:
 soft: 20000
 hard: 40000
 healthcheck:
-test: ['CMD', 'cub', 'kafka-ready', '1', '5', '-b', '127.0.0.1:9092', '-c', '/etc/kafka/kafka.properties']
+test:
+[
+'CMD',
+'cub',
+'kafka-ready',
+'1',
+'5',
+'-b',
+'127.0.0.1:9092',
+'-c',
+'/etc/kafka/kafka.properties',
+]
 interval: 15s
 timeout: 10s
 retries: 6


@ -4,4 +4,5 @@

|

|||

|

||||

|

||||

|

||||

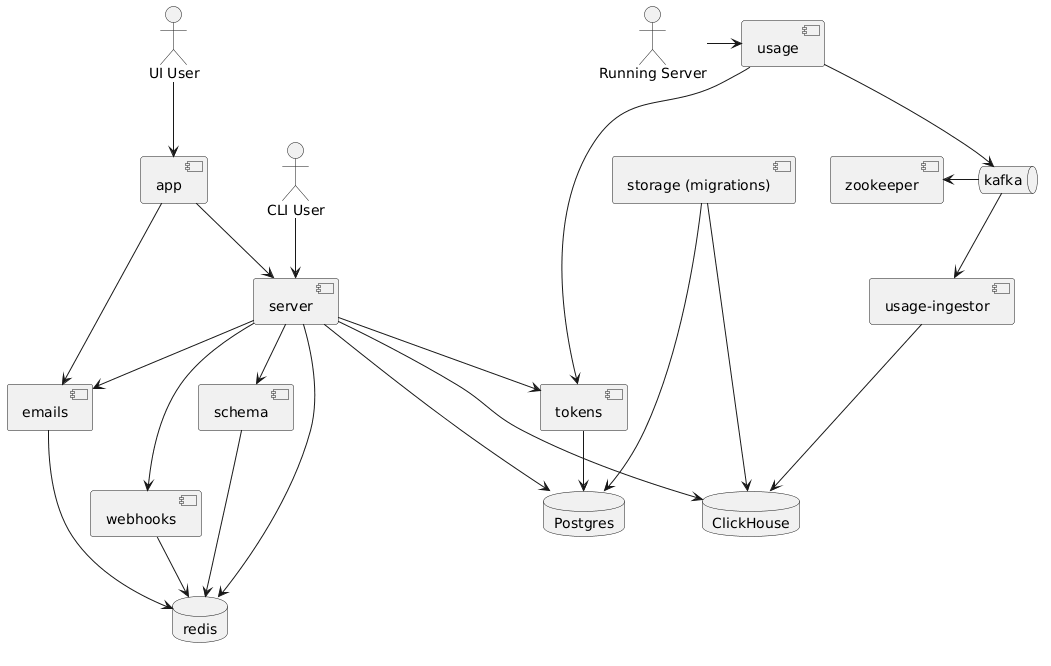

Note: The relationships in this diagram is based on [this docker-compose](https://github.com/kamilkisiela/graphql-hive/blob/main/docker-compose.community.yml)

|

||||

Note: The relationships in this diagram is based on

|

||||

[this docker-compose](https://github.com/kamilkisiela/graphql-hive/blob/main/docker-compose.community.yml)

|

||||

|

|

|

|||

|

|

@@ -1,15 +1,23 @@
 ## Deployment
 
-Deployment is based on NPM packages. That means we are bundling (as much as possible) each service or package, and publish it to the private GitHub Packages artifactory.
+Deployment is based on NPM packages. That means we are bundling (as much as possible) each service
+or package, and publish it to the private GitHub Packages artifactory.
 
-Doing that allows us to have a simple, super fast deployments, because we don't need to deal with Docker images (which are heavy).
+Doing that allows us to have a simple, super fast deployments, because we don't need to deal with
+Docker images (which are heavy).
 
-We create an executable package (with `bin` entrypoint) and then use `npx PACKAGE_NAME@PACKAGE_VERSION` as command for a base Docker image of NodeJS. So instead of building a Docker image for each change, we build NPM package, and the Docker image we are using in prod is the same.
+We create an executable package (with `bin` entrypoint) and then use
+`npx PACKAGE_NAME@PACKAGE_VERSION` as command for a base Docker image of NodeJS. So instead of
+building a Docker image for each change, we build NPM package, and the Docker image we are using in
+prod is the same.
 
-Think of it as Lambda (bundled JS, runtime is predefined) without all the crap (weird cache, weird pricing, cold start and so on).
+Think of it as Lambda (bundled JS, runtime is predefined) without all the crap (weird cache, weird
+pricing, cold start and so on).
 
 ### How to deploy?
 
-We are using Pulumi (infrastructure as code) to describe and run our deployment. It's managed as GitHub Actions that runs on every bump release by Changesets.
+We are using Pulumi (infrastructure as code) to describe and run our deployment. It's managed as
+GitHub Actions that runs on every bump release by Changesets.
 
-So changes are aggregated in a Changesets PR, and when merge, it updated the deployment manifest `package.json`, leading to a deployment of only the updated packages to production.
+So changes are aggregated in a Changesets PR, and when merge, it updated the deployment manifest
+`package.json`, leading to a deployment of only the updated packages to production.

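The deployment document in this hunk describes the following flow in prose. A Changesets release bumps versions in the deployment manifest `package.json`. Only the packages whose versions changed are redeployed, and each one runs as `npx PACKAGE_NAME@PACKAGE_VERSION` on a stock Node.js image. The sketch below models that flow under stated assumptions. The manifest is treated as a plain name-to-version map, the versions are made up, and `changedPackages` and `npxCommand` are hypothetical helpers rather than code from the repository.

```typescript
// Assumed manifest shape: package name -> exact version (like a package.json "dependencies" map).
type VersionMap = Record<string, string>;

// Packages that are new or whose version changed between two manifest snapshots.
function changedPackages(before: VersionMap, after: VersionMap): string[] {
  return Object.keys(after)
    .filter(name => before[name] !== after[name])
    .sort();
}

// Container command that runs the pinned package through npx.
function npxCommand(name: string, version: string): string[] {
  return ['npx', `${name}@${version}`];
}

const before: VersionMap = { '@hive/emails': '1.0.0', '@hive/tokens': '2.1.0' };
const after: VersionMap = { '@hive/emails': '1.0.1', '@hive/tokens': '2.1.0' };

for (const name of changedPackages(before, after)) {
  console.log(npxCommand(name, after[name]).join(' '));
}
```

Pinning the exact version in the command means a given deployment always resolves to the same published bundle, and the base image can stay the same across releases.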

@@ -16,14 +16,18 @@ Developing Hive locally requires you to have the following software installed lo
 - In the root of the repo, run `nvm use` to use the same version of node as mentioned
 - Run `pnpm i` at the root to install all the dependencies and run the hooks
 - Run `pnpm setup` to create and apply migrations on the PostgreSQL database
-- Run `pnpm generate` to generate the typings from the graphql files (use `pnpm graphql:generate` if you only need to run GraphQL Codegen)
+- Run `pnpm generate` to generate the typings from the graphql files (use `pnpm graphql:generate` if
+you only need to run GraphQL Codegen)
 - Run `pnpm build` to build all services
 - Click on `Start Hive` in the bottom bar of VSCode
 - If you are not added to the list of guest users, request access from The Guild maintainers
-- Alternatively, [configure hive to use your own Auth0 Application](#setting-up-auth0-app-for-developing)
+- Alternatively,
+[configure hive to use your own Auth0 Application](#setting-up-auth0-app-for-developing)
 - Open the UI (`http://localhost:3000` by default) and Sign in with any of the identity provider
 - Once this is done, you should be able to login and use the project
-- Once you generate the token against your organization/personal account in hive, the same can be added locally to `hive.json` within `packages/libraries/cli` which can be used to interact via the hive cli with the registry
+- Once you generate the token against your organization/personal account in hive, the same can be
+added locally to `hive.json` within `packages/libraries/cli` which can be used to interact via the
+hive cli with the registry
 
 ## Development Seed
 


@@ -33,11 +37,15 @@ We have a script to feed your local instance of Hive.
 2. Make sure `usage` and `usage-ingestor` are running as well (with `pnpm dev`)
 3. Open Hive app, create a project and a target, then create a token
 4. Run the seed script: `TOKEN="MY_TOKEN_HERE" pnpm seed`
-5. This should report a dummy schema and some dummy usage data to your local instance of Hive, allowing you to test features e2e
+5. This should report a dummy schema and some dummy usage data to your local instance of Hive,
+allowing you to test features e2e
 
 > Note: You can set `STAGING=1` in order to target staging env and seed a target there.
 
-> To send more operations and test heavy load on Hive instance, you can also set `OPERATIONS` (amount of operations in each interval round, default is `1`) and `INTERVAL` (frequency of sending operations, default: `1000`ms). For example, using `INTERVAL=1000 OPERATIONS=1000` will send 1000 requests per second.
+> To send more operations and test heavy load on Hive instance, you can also set `OPERATIONS`
+> (amount of operations in each interval round, default is `1`) and `INTERVAL` (frequency of sending
+> operations, default: `1000`ms). For example, using `INTERVAL=1000 OPERATIONS=1000` will send 1000
+> requests per second.
 
 ## Publish your first schema (manually)
 

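The seed note's arithmetic (`INTERVAL=1000 OPERATIONS=1000` gives 1000 requests per second) is operations per round multiplied by rounds per second. The sketch below makes that explicit, using the defaults stated in the note (`OPERATIONS=1`, `INTERVAL=1000` ms). It assumes one request per operation, as the note's example implies. `readSeedLoad` and `requestsPerSecond` are hypothetical names, because the seed script's own parsing is not shown in this diff.

```typescript
// Hypothetical model of the seed script's load settings.
interface SeedLoad {
  operations: number; // operations sent in each interval round
  intervalMs: number; // delay between rounds, in milliseconds
}

// Reads the settings from an env-like map, applying the documented defaults.
function readSeedLoad(env: Record<string, string | undefined>): SeedLoad {
  const operations = Number(env.OPERATIONS ?? '1');
  const intervalMs = Number(env.INTERVAL ?? '1000');
  if (!Number.isFinite(operations) || operations < 1) {
    throw new Error('OPERATIONS must be a number >= 1');
  }
  if (!Number.isFinite(intervalMs) || intervalMs <= 0) {
    throw new Error('INTERVAL must be a positive number of milliseconds');
  }
  return { operations, intervalMs };
}

// Throughput = operations per round * rounds per second (assumes one request per operation).
function requestsPerSecond({ operations, intervalMs }: SeedLoad): number {
  return operations * (1000 / intervalMs);
}

console.log(requestsPerSecond(readSeedLoad({ INTERVAL: '1000', OPERATIONS: '1000' })));
```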

@@ -45,14 +53,18 @@ We have a script to feed your local instance of Hive.
 2. Create a project and a target
 3. Create a token from that target
 4. Go to `packages/libraries/cli` and run `pnpm build`
-5. Inside `packages/libraries/cli`, run: `pnpm start schema:publish --token "YOUR_TOKEN_HERE" --registry "http://localhost:4000/graphql" examples/single.graphql`
+5. Inside `packages/libraries/cli`, run:
+`pnpm start schema:publish --token "YOUR_TOKEN_HERE" --registry "http://localhost:4000/graphql" examples/single.graphql`
 
 ### Setting up Slack App for developing
 
-1. [Download](https://loophole.cloud/download) Loophole CLI (same as ngrok but supports non-random urls)
+1. [Download](https://loophole.cloud/download) Loophole CLI (same as ngrok but supports non-random
+urls)
 2. Log in to Loophole `$ loophole account login`
-3. Start the proxy by running `$ loophole http 3000 --hostname hive-<your-name>` (@kamilkisiela I use `hive-kamil`). It creates `https://hive-<your-name>.loophole.site` endpoint.
-4. Message @kamilkisiela and send him the url (He will update the list of accepted redirect urls in both Auth0 and Slack App).
+3. Start the proxy by running `$ loophole http 3000 --hostname hive-<your-name>` (@kamilkisiela I
+use `hive-kamil`). It creates `https://hive-<your-name>.loophole.site` endpoint.
+4. Message @kamilkisiela and send him the url (He will update the list of accepted redirect urls in
+both Auth0 and Slack App).
 5. Update `APP_BASE_URL` and `AUTH0_BASE_URL` in [`packages/web/app/.env`](./packages/web/app/.env)
 6. Run `packages/web/app` and open `https://hive-<your-name>.loophole.site`.
 


@@ -61,25 +73,28 @@ We have a script to feed your local instance of Hive.
 ### Setting up GitHub App for developing
 
 1. Follow the steps above for Slack App
-2. Update `Setup URL` in [GraphQL Hive Development](https://github.com/organizations/the-guild-org/settings/apps/graphql-hive-development) app and set it to `https://hive-<your-name>.loophole.site/api/github/setup-callback`
+2. Update `Setup URL` in
+[GraphQL Hive Development](https://github.com/organizations/the-guild-org/settings/apps/graphql-hive-development)
+app and set it to `https://hive-<your-name>.loophole.site/api/github/setup-callback`
 
 ### Run Hive
 
 1. Click on Start Hive in the bottom bar of VSCode
-2. Open the UI (`http://localhost:3000` by default) and register any email and
-password
-3. Sending e-mails is mocked out during local development, so in order to
-verify the account find the verification link by visiting the email server's
-`/_history` endpoint - `http://localhost:6260/_history` by default.
+2. Open the UI (`http://localhost:3000` by default) and register any email and password
+3. Sending e-mails is mocked out during local development, so in order to verify the account find
+the verification link by visiting the email server's `/_history` endpoint -
+`http://localhost:6260/_history` by default.
 - Searching for `token` should help you find the link.
 
 ### Legacy Auth0 Integration
 
 **Note:** If you are not working at The Guild, you can safely ignore this section.
 
-Since we migrated from Auth0 to SuperTokens there is a compatibility layer for importing/migrating accounts from Auth0 to SuperTokens.
+Since we migrated from Auth0 to SuperTokens there is a compatibility layer for importing/migrating
+accounts from Auth0 to SuperTokens.
 
-By default you don't need to set this up and can just use SuperTokens locally. However, if you need to test some stuff or fix the Auth0 -> SuperTokens migration flow you have to set up some stuff.
+By default you don't need to set this up and can just use SuperTokens locally. However, if you need
+to test some stuff or fix the Auth0 -> SuperTokens migration flow you have to set up some stuff.
 
 1. Create your own Auth0 application
 1. If you haven't already, create an account on [manage.auth0.com](https://manage.auth0.com)


@@ -108,9 +123,11 @@ By default you don't need to set this up and can just use SuperTokens locally. H
 return callback(null, user, context);
 }
 ```
-2. Update the `.env` secrets used by your local hive instance that are found when viewing your new application on Auth0:
+2. Update the `.env` secrets used by your local hive instance that are found when viewing your new
+application on Auth0:
 - `AUTH_LEGACY_AUTH0` (set this to `1` for enabling the migration.)
 - `AUTH_LEGACY_AUTH0_CLIENT_ID` (e.g. `rGSrExtM9sfilpF8kbMULkMNYI2SgXro`)
-- `AUTH_LEGACY_AUTH0_CLIENT_SECRET` (e.g. `gJjNQJsCaOC0nCKTgqWv2wvrh1XXXb-iqzVdn8pi2nSPq2TxxxJ9FIUYbNjheXxx`)
+- `AUTH_LEGACY_AUTH0_CLIENT_SECRET` (e.g.
+`gJjNQJsCaOC0nCKTgqWv2wvrh1XXXb-iqzVdn8pi2nSPq2TxxxJ9FIUYbNjheXxx`)
 - `AUTH_LEGACY_AUTH0_ISSUER_BASE_URL`(e.g. `https://foo-bars.us.auth0.com`)
 - `AUTH_LEGACY_AUTH0_AUDIENCE` (e.g. `https://foo-bars.us.auth0.com/api/v2/`)

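The legacy Auth0 variables in this hunk only matter when the migration is switched on with `AUTH_LEGACY_AUTH0=1`. A startup check along these lines could report missing settings early. It is a hypothetical helper, because the diff does not show how the app actually validates these variables. It takes the environment as a parameter so that it can be tested without touching `process.env`.

```typescript
// The variables the list above says must come from your own Auth0 application.
const LEGACY_AUTH0_VARS = [
  'AUTH_LEGACY_AUTH0_CLIENT_ID',
  'AUTH_LEGACY_AUTH0_CLIENT_SECRET',
  'AUTH_LEGACY_AUTH0_ISSUER_BASE_URL',
  'AUTH_LEGACY_AUTH0_AUDIENCE',
] as const;

type Env = Record<string, string | undefined>;

// Hypothetical check: returns the names of missing or empty variables,
// or an empty list when the legacy integration is disabled.
function missingLegacyAuth0Vars(env: Env): string[] {
  if (env.AUTH_LEGACY_AUTH0 !== '1') {
    return [];
  }
  return LEGACY_AUTH0_VARS.filter(name => !env[name]);
}

console.log(missingLegacyAuth0Vars({ AUTH_LEGACY_AUTH0: '1', AUTH_LEGACY_AUTH0_CLIENT_ID: 'abc' }));
```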

@@ -15,7 +15,8 @@ We are using Dockest to test the following concerns:
 
 To run integration tests locally, follow:
 
-1. Make sure you have Docker installed. If you are having issues, try to run `docker system prune` to clean the Docker caches.
+1. Make sure you have Docker installed. If you are having issues, try to run `docker system prune`
+to clean the Docker caches.
 2. Install all deps: `pnpm i`
 3. Generate types: `pnpm graphql:generate`
 4. Build and pack all services: `pnpm --filter integration-tests build-and-pack`


@@ -70,7 +70,18 @@ services:
 soft: 20000
 hard: 40000
 healthcheck:
-test: ['CMD', 'cub', 'kafka-ready', '1', '5', '-b', '127.0.0.1:9092', '-c', '/etc/kafka/kafka.properties']
+test:
+[
+'CMD',
+'cub',
+'kafka-ready',
+'1',
+'5',
+'-b',
+'127.0.0.1:9092',
+'-c',
+'/etc/kafka/kafka.properties',
+]
 interval: 15s
 timeout: 10s
 retries: 6


@@ -16,5 +16,5 @@ beforeEach(() =>
 host: redisAddress.replace(':6379', ''),
 port: 6379,